Introduction

Since 2011, it has been an open secret that many results published in psychology journals do not replicate. The replicability of published results is particularly low in social psychology (Open Science Collaboration, 2015).

A key reason for low replicability is that researchers are rewarded for publishing as many articles as possible without concerns about the replicability of the published findings. This incentive structure is maintained by journal editors, review panels of granting agencies, and hiring and promotion committees at universities.

To change the incentive structure, I developed the Replicability Index, a blog that critically examined the replicability, credibility, and integrity of psychological science. In 2016, I created the first replicability rankings of psychology departments (Schimmack, 2016). Based on scientific criticisms of these methods, I have improved the selection process of articles to be used in departmental reviews.

1. I am using Web of Science to obtain lists of published articles from individual authors (Schimmack, 2022). This method minimizes the chance that articles that do not belong to an author are included in a replicability analysis. It also allows me to classify researchers into areas based on the frequency of publications in specialized journals. Currently, I cannot evaluate neuroscience research. So, the rankings are limited to cognitive, social, developmental, clinical, and applied psychologists.

2. I am using department websites to identify researchers who belong to the psychology department. This eliminates articles that are from other departments.

3. I am only using tenured, active professors. This eliminates emeritus professors from the evaluation of departments. I am not including assistant professors because the published results might negatively impact their chances to get tenure. Another reason is that they often do not have enough publications at their current university to produce meaningful results.

Like all empirical research, the present results rely on a number of assumptions and have some limitations. The main limitations are that

(a) only results that were found in an automatic search are included

(b) only results published in 120 journals are included (see list of journals)

(c) published significant results (p < .05) may not be a representative sample of all significant results

(d) point estimates are imprecise and can vary based on sampling error alone.

These limitations do not invalidate the results. Large differences in replicability estimates are likely to predict real differences in success rates of actual replication studies (Schimmack, 2022).

New York University

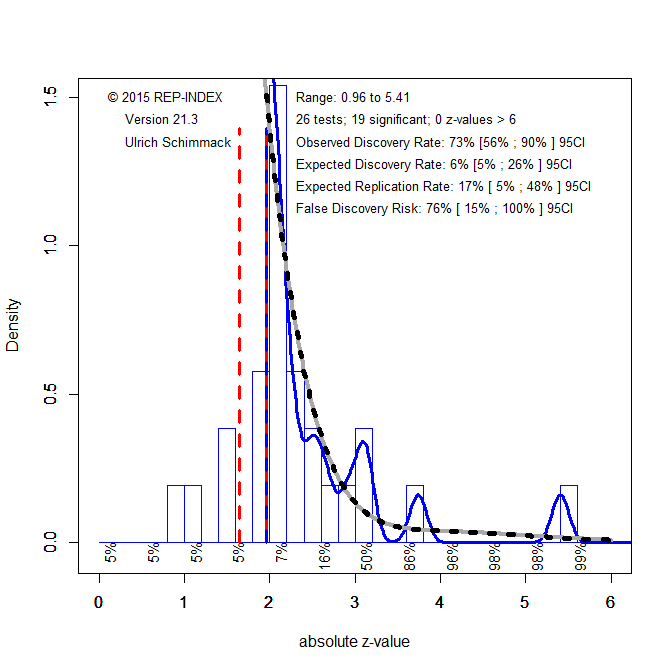

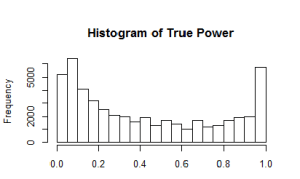

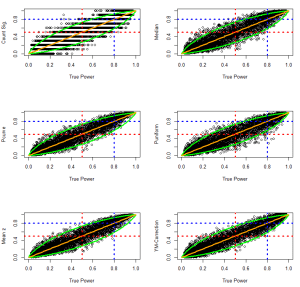

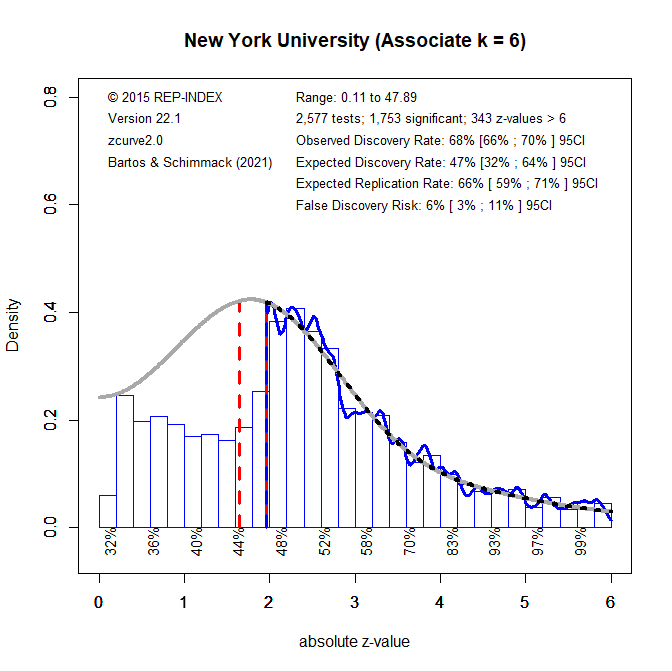

I used the department website to find core members of the psychology department. I found 13 professors and 6 associate professors. Figure 1 shows the z-curve for all 12,365 test statistics in articles published by these 19 faculty members. I use this figure to explain how a z-curve analysis provides information about replicability and other useful meta-statistics.

1. All test-statistics are converted into absolute z-scores as a common metric of the strength of evidence (effect size over sampling error) against the null-hypothesis (typically H0 = no effect). A z-curve plot is a histogram of absolute z-scores in the range from 0 to 6. The 1,239 (~ 10%) of z-scores greater than 6 are not shown because z-scores of this magnitude are extremely unlikely to occur when the null-hypothesis is true (particle physics uses z > 5 for significance). Although they are not shown, they are included in the meta-statistics.

2. Visual inspection of the histogram shows a steep drop in frequencies at z = 1.96 (dashed blue/red line) that corresponds to the standard criterion for statistical significance, p = .05 (two-tailed). This shows that published results are selected for significance. The dashed red/white line shows significance for p < .10, which is often used for marginal significance. There is another drop around this level of significance.

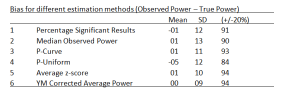

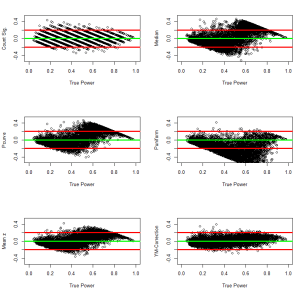

3. To quantify the amount of selection bias, z-curve fits a statistical model to the distribution of statistically significant results (z > 1.96). The grey curve shows the predicted values for the observed significant results and the unobserved non-significant results. The full grey curve is not shown to present a clear picture of the observed distribution. The statistically significant results (including z > 6) make up 20% of the total area under the grey curve. This is called the expected discovery rate (EDR) because it provides an estimate of the percentage of significant results that researchers actually obtain in their statistical analyses. In comparison, significant results (including z > 6) make up 70% of the published results. This percentage is called the observed discovery rate (ODR), the rate of significant results in published journal articles. The difference between a 70% ODR and a 20% EDR provides an estimate of the extent of selection for significance. The difference of 50 percentage points is large. The upper limit of the 95% confidence interval for the EDR is 28%, far below the ODR. Thus, the discrepancy is not just random. To put this result in context, it is possible to compare it to the average for 120 psychology journals in 2010 (Schimmack, 2022). The ODR is similar (70% vs. 72%), but the EDR is a bit lower (20% vs. 28%), although the difference might be largely due to chance.

4. The EDR can be used to estimate the risk that published results are false positives (i.e., a statistically significant result when H0 is true), using Soric’s (1989) formula for the maximum false discovery rate (FDR). An EDR of 20% implies that no more than 20% of the significant results are false positives. However, because the maximum FDR rises as the EDR falls, the lower limit of the 95% CI of the EDR allows for up to 36% false positive results. Most readers are likely to agree that this is an unacceptably high risk that published results are false positives. One solution to this problem is to lower the conventional criterion for statistical significance (Benjamin et al., 2017). Figure 2 shows that alpha = .005 reduces the point estimate of the FDR to 3% with an upper limit of the 95% confidence interval of XX%. Thus, without any further information, readers could use this criterion to interpret results published by NYU faculty members.

5. The estimated replication rate (ERR) is based on the mean power of significant studies (Brunner & Schimmack, 2020). Under ideal conditions, mean power is a predictor of the success rate in exact replication studies with the same sample sizes as the original studies. However, as NYU professor van Bavel pointed out in an article, replication studies are never exact, especially in social psychology (van Bavel et al., 2016). This implies that actual replication studies have a lower probability of producing a significant result, especially if selection for significance is large. In the worst-case scenario, replication studies are not more powerful than original studies before selection for significance. Thus, the EDR provides an estimate of the worst possible success rate in actual replication studies. In the absence of further information, I have proposed to use the average of the EDR and ERR as a predictor of actual replication outcomes. With an ERR of 62% and an EDR of 20%, this implies an actual replication prediction of 41%. This is close to the actual replication rate in the Open Science Reproducibility Project (Open Science Collaboration, 2015). The prediction for results published in 120 journals in 2010 was (ERR = 67% + EDR = 28%) / 2 = 48%. This suggests that results published by NYU faculty are slightly less replicable than the average result published in psychology journals, but the difference is relatively small and might be mostly due to chance.

6. There are two possible reasons for low replication rates in psychology. One possibility is that psychologists test many false hypotheses (i.e., H0 is true) and many false positive results are published. False positive results have a very low chance of replicating in actual replication studies (i.e., 5% when .05 is used to reject H0), and will lower the rate of successful replications a lot. Alternatively, it is possible that psychologists test true hypotheses (H0 is false), but with low statistical power (Cohen, 1961). It is difficult to distinguish between these two explanations because the actual rate of false positive results is unknown. However, it is possible to estimate the typical power of tests of true hypotheses using Soric’s FDR. If 20% of the significant results are false positives, the power of the 80% true positives has to be (.62 – .2*.05)/.8 = 76%. This would be close to Cohen’s recommended level of 80%, but with a high level of false positive results. Alternatively, the null-hypothesis may never be really true. In this case, the ERR is an estimate of the average power to get a significant result for a true hypothesis. Thus, power is estimated to be between 62% and 76%. The main problem is that this is an average and that many studies have less power. This can be seen in Figure 1 by examining the local power estimates for different levels of z-scores. For z-scores between 2 and 2.5, the ERR is only 47%. Thus, many studies are underpowered and have a low probability of a successful replication with the same sample size even if they showed a true effect.
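The arithmetic in points 1, 5, and 6 can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the z-curve software: the function names are hypothetical, the conversion covers two-tailed p-values only (t- or F-statistics would first have to be turned into p-values), and the EDR and ERR themselves come from the fitted z-curve model, which is not reproduced here.

```python
from statistics import NormalDist

STD_NORMAL = NormalDist()  # standard normal distribution (mean 0, sd 1)


def p_to_abs_z(p_two_tailed: float) -> float:
    """Convert a two-tailed p-value into an absolute z-score (point 1)."""
    return STD_NORMAL.inv_cdf(1.0 - p_two_tailed / 2.0)


def actual_replication_prediction(err: float, edr: float) -> float:
    """Average of the ERR (best case) and the EDR (worst case), as in point 5."""
    return (err + edr) / 2.0


def true_positive_power(err: float, fdr: float, alpha: float = 0.05) -> float:
    """Mean power of the true positives (point 6), assuming a share `fdr` of
    the significant results are false positives that replicate at rate `alpha`."""
    return (err - fdr * alpha) / (1.0 - fdr)


# Values taken from the text: p = .05 and p = .10, ERR = 62%, EDR = 20%, FDR = 20%.
print(round(p_to_abs_z(0.05), 2))                           # 1.96
print(round(p_to_abs_z(0.10), 2))                           # 1.64
print(round(actual_replication_prediction(0.62, 0.20), 2))  # 0.41
print(round(true_positive_power(0.62, 0.20), 2))            # 0.76
```

The last two lines reproduce the 41% replication prediction and the 76% upper estimate of power reported above.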

Area

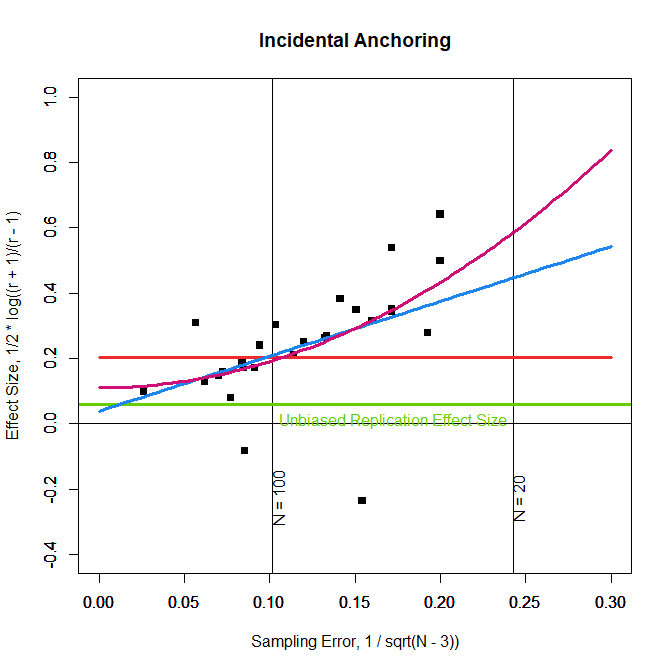

The results in Figure 1 provide highly aggregated information about replicability of research published by NYU faculty. The following analyses examine potential moderators. First, I examined social and cognitive research. Other areas were too small to be analyzed individually.

The z-curve for the 11 social psychologists was similar to the z-curve in Figure 1 because they provided more test statistics and had a stronger influence on the overall result.

The z-curve for the 6 cognitive psychologists looks different. The EDR and ERR are higher for cognitive psychology, and the 95% CIs for social and cognitive psychology do not overlap. This suggests systematic differences between the two fields. These results are consistent with other comparisons of the two fields, including actual replication outcomes (OSC, 2015). With an EDR of 44%, the false discovery risk for cognitive psychology is only 7% with an upper limit of the 95% CI at 12%. This suggests that the conventional criterion of .05 does keep the false positive risk at a reasonably low level or that an adjustment to alpha = .01 is sufficient. In sum, results published by cognitive researchers at NYU are more replicable than those published by social psychologists.
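The step from an EDR to a false discovery risk can be sketched as follows. The function name is hypothetical and the code is a minimal illustration of Soric’s (1989) bound, max FDR = (1/EDR − 1) × alpha/(1 − alpha), not the z-curve package itself.

```python
def soric_max_fdr(edr: float, alpha: float = 0.05) -> float:
    """Soric's (1989) upper bound on the false discovery rate for a given EDR."""
    if not 0.0 < edr <= 1.0:
        raise ValueError("edr must be in the interval (0, 1]")
    return (1.0 / edr - 1.0) * alpha / (1.0 - alpha)


# EDR point estimates from the text: cognitive area (44%) and all faculty (20%).
for edr in (0.44, 0.20):
    print(f"EDR = {edr:.0%} -> max FDR = {soric_max_fdr(edr):.0%}")
# EDR = 44% -> max FDR = 7%
# EDR = 20% -> max FDR = 21%
```

Because the inputs here are the rounded percentages from the text, the result for the full sample (21%) differs slightly from the 20% reported above. The bound rises quickly as the EDR falls, which is why a low EDR implies a high false positive risk.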

Position

Since 2015, research practices in some areas of psychology, especially social psychology, have changed to increase replicability. This would imply that research by younger researchers is more replicable than research by more senior researchers who have more publications from before 2015. A generation effect would also imply that a department’s replicability increases when older faculty members retire. On the other hand, associate professors are relatively young and likely to influence the reputation of a department for a long time.

The figure above shows that most test statistics come from the (k = 13) professors. As a result, the z-curve looks similar to the z-curve for all test values in Figure 1. The results for the 6 associate professors (below) are more interesting. Although five of the six associate professors are in the social area, the z-curve results show a higher EDR and less selection bias than the plot for all social psychologists. This suggests that the department will improve when full professors in social psychology retire.

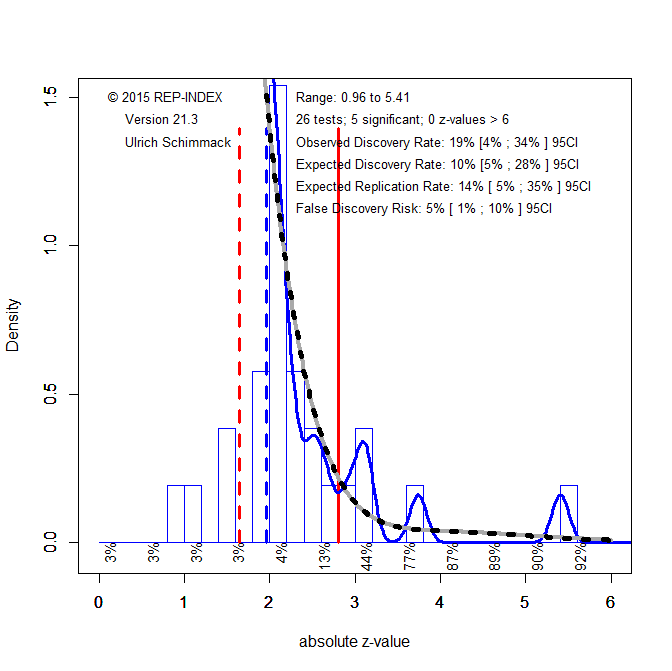

Some researchers have changed research practices in response to the replication crisis. It is therefore interesting to examine whether replicability of newer research has improved. To examine this question, I performed a z-curve analysis for articles published in the past five years (2016-2021).

The results show few signs of improvement. The EDR increased from 20% to 26%, but the confidence intervals are too wide to infer that this is a systematic change. In contrast, Stanford University improved from 22% to 50%, a significant increase. For now, NYU results should be interpreted with alpha = .005 as the threshold for significance to maintain a reasonable false positive risk.

The table below shows the meta-statistics of all 19 faculty members. You can see the z-curve for each faculty member by clicking on their name.

| Rank | Name | ARP | ERR | EDR | FDR |
|---|---|---|---|---|---|
| 1 | Karen E. Adolph | 66 | 76 | 56 | 4 |
| 2 | Bob Rehder | 61 | 75 | 47 | 6 |
| 3 | Marjorie Rhodes | 58 | 68 | 48 | 6 |
| 4 | Jay J. van Bavel | 55 | 66 | 44 | 7 |
| 5 | Brian McElree | 54 | 59 | 49 | 6 |
| 6 | David M. Amodio | 53 | 65 | 40 | 8 |
| 7 | Todd M. Gureckis | 49 | 75 | 23 | 17 |
| 8 | Emily Balcetis | 48 | 68 | 28 | 13 |
| 9 | Eric D. Knowles | 48 | 60 | 35 | 10 |
| 10 | Tessa V. West | 46 | 55 | 37 | 9 |
| 11 | Catherine A. Hartley | 45 | 70 | 19 | 23 |
| 12 | Madeline E. Heilman | 44 | 66 | 23 | 18 |
| 13 | John T. Jost | 44 | 62 | 26 | 15 |
| 14 | Andrei Cimpian | 42 | 64 | 20 | 21 |
| 15 | Peter M. Gollwitzer | 36 | 54 | 18 | 25 |
| 16 | Yaacov Trope | 34 | 54 | 14 | 32 |
| 17 | Gabriele Oettingen | 30 | 46 | 14 | 32 |
| 18 | Susan M. Andersen | 30 | 47 | 13 | 35 |