Since 2011, psychologists have been wondering about the replicability of the results in their journals. Until then, psychologists blissfully ignored that success rates of 95% in their journals are eerily high and more akin to outcomes of totalitarian elections than to actual success rates of empirical studies (Sterling, 1959).

The Open Science Framework (OSF) has conducted several stress-tests of psychological science by means of empirical replication studies. There have been several registered replication reports of individual studies of interest, a couple of Many Labs projects, and the reproducibility project.

Although these replication projects have produced many interesting results, headlines often focus on the percentage of successful replications. Typically, the criterion for a successful replication is a statistically significant (p < .05, two-tailed) result in the replication study.

The reproducibility project produced a success rate of 36%, and the just released results of Many Labs 2 showed a success rate of 50%. While it is crystal clear what these numbers are (they are a simple count of p-values less than .05 divided by the number of tests), it is much less clear what these numbers mean.

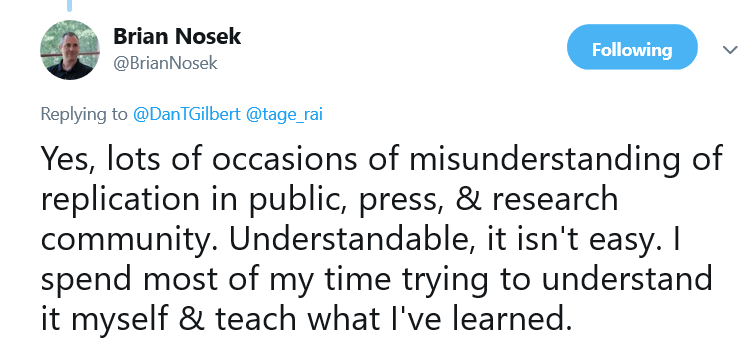

As Alison Ledgerwood pointed out, everybody knows the answer, but nobody seems to know the question.

Descriptive Statistics in Convenience Samples are Meaningless

The first misinterpretation of the number is that the percentage tells us something about the “reproducibility of psychological science” (Open Science Collaboration, 2015). As the actual article explains, the reproducibility project selected studies from three journals that publish mostly social and cognitive psychological experiments. To generalize from these two areas of psychology to all areas of psychological research is only slightly less questionable than it is to generalize from 40 undergraduate students at Harvard University to the world population. The problem is that replicability can vary across disciplines. In fact, the reproducibility project found differences between the two disciplines that were examined. While cognitive psychology achieved at least a success rate of 50%, the success rate for social psychology was only 25%.

If researchers had a goal to provide an average estimate of all areas of psychology, it would be necessary to define the population of results in psychology (which journals should be included) and to draw a representative sample from this population.

Without a sampling plan, the percentage is only valid for the sample of studies. The importance of sampling is well understood in most social sciences, but psychologists may have ignored this aspect because psychologists make unwarranted generalized claims based on convenience samples.

In their defense, OSC authors may argue that their results are at least representative of results published in the three journals that were selected for replication attempts; that is, the Journal of Personality and Social Psychology, the Journal of Experimental Psychology: Learning, Memory, and Cognition, and Psychological Science. Replication teams could pick any article that was published in the year 2008. As there is no reason to assume that 2008 was a particularly good or bad year, the results are likely to generalize to other years.

However, the sets of studies in Many Labs projects were picked ad hoc without any reference to a particular population. They were simply studies of interest that were sufficiently simple to be packaged into a battery of studies that could be completed within an hour to allow for massive replication across many labs. The percentage of successes in these projects is practically meaningless, just like the average height of the next 20 customers at McDonald's is a meaningless number. Thus, 50% successes in Many Labs 2 is a very clear answer to a very unclear question because the percentage depends on an unclear selection mechanism. The next project that selects another 20 studies of interest could produce 20%, 50%, or 80% successes. Moreover, adding up meaningless success rates doesn’t improve things, just like averaging data from Harvard and Kentucky State University does not address the problem that the samples are not representative of the population.

Thus, the only meaningful result of empirical replicability estimation studies is that the success rate in social psychology is estimated to be 25% and the success rate in cognitive psychology is estimated to be 50%. No other areas have been investigated.

Success Rates are Arbitrary

Another problem with the headline finding is that success rates of replication studies depend on the sample size of replication studies, unless an original finding was a false positive result (in this case, the success rate matches the significance criterion, i.e. 5%).

The reason is that statistical significance is a function of sampling error, and sampling error decreases as sample sizes increase. Thus, replication studies produce fewer successes if sample sizes are lowered (as they were for some cognitive studies in the reproducibility project) and more successes if sample sizes are increased (as they were in the Many Labs projects).
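To make the dependence on sample size concrete, here is a minimal sketch of the power of a two-sided, two-group comparison under a normal approximation. The function name, the effect size d = 0.3, and the sample sizes are illustrative assumptions, not values from any of the replication projects. The sketch counts a significant result in either direction as a success, in line with the two-tailed criterion described above.

```python
from statistics import NormalDist


def power_two_sample(d, n_per_group, alpha=0.05):
    """Approximate power of a two-sided, two-group test (normal approximation).

    d is the assumed population effect size (standardized mean difference);
    n_per_group is the sample size in each of the two groups.
    """
    z = NormalDist()
    z_crit = z.inv_cdf(1 - alpha / 2)
    # Expected value of the test statistic under the assumed effect size.
    ncp = d * (n_per_group / 2) ** 0.5
    # Probability of a significant result in either tail.
    return (1 - z.cdf(z_crit - ncp)) + z.cdf(-z_crit - ncp)


if __name__ == "__main__":
    # Hypothetical sample sizes; the same true effect is "replicated"
    # rarely with small samples and almost always with very large ones.
    for n in (20, 50, 200, 3500):
        true_effect = power_two_sample(0.3, n)
        null_effect = power_two_sample(0.0, n)
        print(f"n per group = {n:5d}  power(d=0.3) = {true_effect:.2f}  "
              f"power(d=0) = {null_effect:.2f}")
```

With d = 0 the function returns the significance criterion regardless of sample size, which is the false positive case mentioned above. For any nonzero d, the success rate rises with sample size.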

It is therefore a fallacy to compare success rates in the reproducibility project with success rates in the Many Labs projects. The 36% success rate for the reproducibility project conflates social and cognitive psychology, and sample sizes remained fairly similar, whereas Many Labs 2 mostly focused on social psychology but increased sample sizes by a factor of 64 (median original N = 112, replication N = 7157). A more reasonable comparison would focus on social psychology and compute successes for replication studies on the basis of effect size and sample size of the original study (see Table 5 of the Many Labs 2 report). Two studies are no longer significant and the success rate is reduced from 50% to 43%.

Estimates in Small Samples are Variable

The 43% success rate in Many Labs 2 has to be compared to the 25% success rate for social psychology in the reproducibility project, suggesting that the success rate is still higher. However, estimates in small samples are not very precise, and the difference is not statistically significant. Thus, there is no empirical evidence for the claim that Many Labs 2 was more successful than the reproducibility project. Given the lack of representative sampling in Many Labs projects, the estimate could be ignored, but assuming that there was no major bias in the selection process, the two estimates can be combined to produce an estimate somewhere in the middle. This would suggest that we should expect only one-third of results in social psychology to replicate.
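The comparison can be sketched as a two-proportion z-test. The counts below are assumptions chosen to be consistent with the percentages in the text (12 of 28 is about 43%, 14 of 55 is about 25%); they are not stated in this post and should be checked against the project reports before being relied on. The function name is also just illustrative.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_z(k1, n1, k2, n2):
    """Two-sided z-test for the difference between two success rates.

    Returns the z statistic, the two-sided p-value, and the pooled rate.
    """
    p1, p2 = k1 / n1, k2 / n2
    pooled = (k1 + k2) / (n1 + n2)
    # Standard error of the difference under the null of equal rates.
    se = sqrt(pooled * (1 - pooled) * (1 / n1 + 1 / n2))
    z = (p1 - p2) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value, pooled


if __name__ == "__main__":
    # ASSUMED counts, picked only to match the reported 43% and 25%.
    z, p, pooled = two_proportion_z(12, 28, 14, 55)
    print(f"z = {z:.2f}, two-sided p = {p:.3f}, pooled rate = {pooled:.2f}")
```

Under these assumed counts the p-value stays above .05 and the pooled rate falls close to one-third, which matches the reasoning in the paragraph above. With only a few dozen studies in each set, a Fisher exact test would be a reasonable alternative to the normal approximation.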

Convergent Validation with Z-Curve

Empirical replicability estimation has major problems because not all studies can be easily replicated. Jerry Brunner and I have developed a statistical approach to estimate replicability based on published empirical results. I have applied this approach to Motyl et al.’s (2017) representative set of statistical results in social psychology journals (PSPB, JPSP, JESP).

The statistical approach predicts that exact replication studies with the same sample sizes produce 44% successes. This estimate is a bit higher than the actual success rate. There are a number of possible reasons for this discrepancy. First, the estimate of the statistical approach is more precise because the sample size is much larger than the set of actually replicated studies. Second, the statistical model may not be correct, leading to an overestimation of the actual success rate. Third, it is difficult to conduct exact replication studies and effect sizes might be weaker in replication studies. Finally, the set of actual replication studies is not representative because pragmatic decisions influenced which studies were actually replicated.

Aside from these differences, it is noteworthy that both estimation methods produce converging evidence that less than 50% of published results in social psychology journals can be expected to reproduce a success, even if the study could be replicated exactly.

What Do Replication Failures Mean?

The refined conclusion about replicability in psychological science is that only social psychology has been examined and that replicability in social psychology is likely to be less than 50%. However, the question still remains unclear.

In fact, there are two questions that are often confused. One question is how many successes in social psychology are false positives; that is, contrary to the reported finding, the effect size in the (not well-defined) population is zero or even in the opposite direction of the original study. The other question is how much statistical power studies in social psychology have to discover true effects.

The problem is that replication failures do not provide a clear answer to the first question. A replication failure can reveal a type-I error in the original study (the original finding was a fluke) or a type-II error (the replication study failed to detect a true effect).

To estimate the percentage of false positives, it is not possible to simply count non-significant replication studies. As every undergraduate student learns (and may forget), a non-significant result does not prove that the null-hypothesis is true. To answer the question about false positives, it is necessary to define a region of interest around zero and to demonstrate that the population effect size is unlikely to fall outside the region. If the region is reasonably small, sample sizes have to be at least as large as those of the Many Labs projects. However, Many Labs projects are not representative samples. Thus, the percentage of false positives in social psychology remains unknown. I personally believe that the search for false positives is not very fruitful.
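A rough sketch shows why small regions around zero require very large samples. It assumes a two-group design, approximates the standard error of a standardized mean difference near zero as sqrt(2 / n) with n per group, and asks how large n must be for the confidence interval to be no wider than the region. The function name and the example region widths are illustrative assumptions. The result is a lower bound, because the interval only fits inside the region if the observed effect size happens to be exactly zero.

```python
from math import ceil
from statistics import NormalDist


def min_n_per_group(delta, conf=0.95):
    """Smallest n per group so that the CI half-width is at most delta.

    Assumes a two-group design and SE(d) ~ sqrt(2 / n) for effects near zero.
    """
    z = NormalDist().inv_cdf(1 - (1 - conf) / 2)
    # Solve z * sqrt(2 / n) <= delta for n.
    return ceil(2 * (z / delta) ** 2)


if __name__ == "__main__":
    # Hypothetical regions of interest: |d| < 0.20, 0.10, 0.05.
    for delta in (0.20, 0.10, 0.05):
        n = min_n_per_group(delta)
        print(f"region +/-{delta:.2f}: at least {n} per group, {2 * n} in total")
```

Halving the width of the region quadruples the required sample size, so ruling out even small effects quickly moves beyond the sample sizes of typical original studies.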

Thus, the real question that replicability estimates can answer is how much power studies in social psychology have, on average. The results suggest that successful studies in social psychology only have about 20 to 40 percent power. As Tversky and Kahneman (1971) pointed out, no sane researcher would invest in studies that have a greater chance to fail than to succeed. Thus, we can conclude from the empirical finding that actual power is less than 50% that social psychologists are either insane or unaware that they are conducting studies with less than 50% power most of the time.

If social psychologists are sane, replicability estimates are very useful because they inform social psychologists that they need to change their research practices to increase statistical power. Cohen (1962) tried to tell them this, as did Tversky and Kahneman (1971), Sedlmeier & Gigerenzer (1989), Maxwell (2004), and I (Schimmack, 2012). Maybe it was necessary to demonstrate low replicability with actual replication studies because statistical arguments alone were not powerful enough. Hopefully, the dismal success rates in actual replication studies provide the necessary wake-up call for social psychologists to finally change their research practices. That would be welcome evidence that science is self-correcting and social psychologists are sane.

Conclusion

The average replicability of results in social psychology journals is less than 50%. The reason is that original studies have low statistical power. To improve replicability, social psychologists need to conduct realistic a priori power calculations and honestly report non-significant results when they occur.
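As an illustration of what an a priori power calculation involves, the following sketch computes the sample size per group needed to reach a target power in a two-group design. It uses the standard normal-approximation formula, so it slightly underestimates what an exact t-test calculation would give. The function name, the target of 80% power, and the example effect sizes are assumptions for illustration, not recommendations from this post.

```python
from math import ceil
from statistics import NormalDist


def n_per_group_for_power(d, power=0.80, alpha=0.05):
    """Approximate n per group for a two-sided, two-group comparison.

    Normal approximation: n = 2 * ((z_{1 - alpha/2} + z_{power}) / d) ** 2.
    d is the effect size the researcher realistically expects.
    """
    z = NormalDist()
    z_alpha = z.inv_cdf(1 - alpha / 2)
    z_power = z.inv_cdf(power)
    return ceil(2 * ((z_alpha + z_power) / d) ** 2)


if __name__ == "__main__":
    # Hypothetical expected effect sizes.
    for d in (0.2, 0.4, 0.8):
        print(f"d = {d}: about {n_per_group_for_power(d)} participants per group")
```

The key input is a realistic effect size. Plugging in an inflated estimate from a small published study yields a sample size that is too small and reproduces the low power described above.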
