The OSF-Reproducibility-Project examined the replicability of 99 statistical results published in three psychology journals. The journals covered mostly research in cognitive psychology and social psychology. An article in Science reported that only 35% of the results were successfully replicated (i.e., produced a statistically significant result in the replication study).

I have conducted more detailed analyses of replication studies in social psychology and cognitive psychology. Cognitive psychology had a notably higher success rate (50%, 19 out of 38) than social psychology (8%, 3 out of 38). The main reason for this discrepancy is that social psychologists and cognitive psychologists use different designs. Whereas cognitive psychologists typically use within-subject designs with many repeated measurements of the same individual, social psychologists typically assign participants to different groups and compare behavior on a single measure. This so-called between-subject design makes it difficult to detect small experimental effects because it does not control for other factors that influence participants’ behavior (e.g., personality dispositions, mood, etc.). To detect small effects in these noisy data, between-subject designs require large sample sizes.

It has been known for a long time that sample sizes in between-subject designs in psychology are too small to have a reasonable chance of detecting an effect (less than a 50% chance of finding an effect that is actually there) (Cohen, 1962; Schimmack, 2012; Sedlmeier & Gigerenzer, 1989). As a result, many studies fail to find statistically significant results, but these studies are not submitted for publication. Thus, only studies that achieved statistical significance with the help of chance (the difference between two groups is inflated by uncontrolled factors such as personality) are reported in journals. The selective reporting of lucky results creates a bias in the published literature that gives a false impression of the replicability of published results. The OSF-results for social psychology make it possible to estimate the consequences of publication bias on the replicability of results published in social psychology journals.

A naïve estimate of the replicability of studies would rely on the actual success rate in journals. If journals published both significant and non-significant results, this would be a reasonable approach. However, journals tend to publish exclusively significant results. As a result, the success rate in journals (over 90% significant results; Sterling, 1959; Sterling et al., 1995) gives a drastically inflated estimate of replicability.

A somewhat better estimate of replicability can be obtained by computing post-hoc power based on the observed effect sizes and sample sizes of published studies. Statistical power is the long-run probability that a series of exact replication studies with the same sample size would produce significant results. Cohen (1962) estimated that the typical power of psychological studies is about 60%. Thus, even for 100 studies that all reported significant results, only 60 are expected to produce a significant result again in the replication attempt.

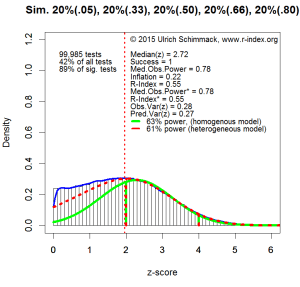

The problem with Cohen’s (1962) estimate of replicability is that post-hoc-power analysis uses the reported effect sizes as an estimate of the effect size in the population. However, due to the selection bias in journals, the reported effect sizes and power estimates are inflated. In collaboration with Jerry Brunner, I have developed an improved method to estimate typical power of reported results that corrects for the inflation in reported effect sizes. I applied this method to results from 38 social psychology articles included in the OSF-reproducibility project and obtained a replicability estimate of 35%.

The OSF-reproducibility project provides another opportunity to estimate the replicability of results in social psychology. The OSF-project selected a representative set of studies from two journals and tried to reproduce the same experimental conditions as closely as possible. This should produce unbiased results, and the success rate provides an estimate of replicability. The advantage of this method is that it does not rely on statistical assumptions. The disadvantage is that the success rate depends on the ability to exactly recreate the conditions of the original studies. Any differences between studies (e.g., recruiting participants from different populations) can change the success rate. The OSF replication studies also often changed the sample size of the replication study, which will also change the success rate. If sample sizes in a replication study are larger, power increases and the success rate can no longer be used as an estimate of the typical replicability of social psychology. To address this problem, it is possible to apply a statistical adjustment and use the success rate that would have occurred with the original sample sizes. I found that 5 out of 38 (13%) produced significant results and, after correcting for the increase in sample size, replicability was only 8% (3 out of 38).

One important question is how representative the 38 results from the OSF-project are for social psychology in general. Unfortunately, it is practically impossible and too expensive to conduct a large number of exact replication studies. In comparison, it is relatively easy to apply post-hoc power analysis to a large number of statistical results reported in social psychology. Thus, I examined the representativeness of the OSF-reproducibility results by comparing the results of my post-hoc power analysis based on the 38 results in the OSF to a post-hoc-power analysis of a much larger number of results reported in major social psychology journals.

I downloaded articles from 12 social psychology journals, which are the primary outlets for publishing experimental social psychology research: Basic and Applied Social Psychology, British Journal of Social Psychology, European Journal of Social Psychology, Journal of Experimental Social Psychology, Journal of Personality and Social Psychology: Attitudes and Social Cognition, Journal of Personality and Social Psychology: Interpersonal Relationships and Group Processes, Journal of Social and Personal Relationships, Personal Relationships, Personality and Social Psychology Bulletin, Social Cognition, Social Psychological and Personality Science, Social Psychology.

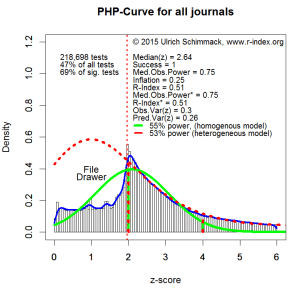

I converted PDF files into text files, searched for all reports of t-tests or F-tests, and converted each reported test statistic into an exact two-tailed p-value. The two-tailed p-values were then converted into z-scores by finding the z-score corresponding to the probability of 1-p/2, with p equal to the two-tailed p-value. The total number of z-scores included in the analysis is 134,929.
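The second step of this conversion can be sketched in a few lines of Python. This is only an illustration using the standard library, not the script used for the analysis; the function names `p_to_z` and `z_to_p` are made up for this example. The first step (turning a reported t or F value and its degrees of freedom into a p-value) needs a t- or F-distribution function from a statistics library and is left out here.

```python
from statistics import NormalDist

STANDARD_NORMAL = NormalDist()  # mean 0, standard deviation 1


def p_to_z(p_two_tailed: float) -> float:
    """Return the z-score at cumulative probability 1 - p/2.

    Hypothetical helper for illustration; it mirrors the rule in the text.
    For extremely small p-values, 1 - p/2 rounds to 1 in floating point,
    so a production script would need a more careful upper-tail routine.
    """
    if not 0.0 < p_two_tailed < 1.0:
        raise ValueError("p must lie strictly between 0 and 1")
    return STANDARD_NORMAL.inv_cdf(1.0 - p_two_tailed / 2.0)


def z_to_p(z: float) -> float:
    """Inverse direction: two-tailed p-value for an absolute z-score."""
    return 2.0 * (1.0 - STANDARD_NORMAL.cdf(abs(z)))


if __name__ == "__main__":
    for p in (0.05, 0.02, 0.001):
        print(f"p = {p:.3f} -> z = {p_to_z(p):.2f}")
```

With this rule, a two-tailed p-value of .05 maps onto the familiar criterion value of z = 1.96.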

I limited my estimate of power to z-scores in the range between 2 and 4. Z-scores below 2 are not statistically significant (z = 1.96, p = .05). Sometimes these results are reported as marginal evidence for an effect, sometimes they are reported as evidence that an effect is not present, and sometimes they are reported without an inference about the population effect. It is more important to determine the replicability of results that are reported as statistically significant support for a prediction. Z-scores greater than 4 were excluded because they imply that the test had high statistical power (> 99%). Many of these results replicated successfully in the OSF-project. Thus, a simple rule is to assign a success rate of 100% to these findings. The Figure below shows the distribution of z-scores in the range from z = 0 to 6, but the power estimate is applied to z-scores in the range between 2 and 4 (n = 66,212).

The power estimate based on the post-hoc-power curve for z-scores between 2 and 4 is 46%. It is important to realize that this estimate is based on 70% of all significant results that were reported. As z-scores greater than 4 essentially have a power of 100%, the overall power estimate for all statistical tests that were reported is .46*.70 + .30 = .62. It is also important to keep in mind that this analysis uses all statistical tests that were reported, including manipulation checks (e.g., pleasant pictures were rated as more pleasant than unpleasant pictures). For this reason, the range of z-scores is limited to values between 2 and 4, which are much more likely to reflect a test of a focal hypothesis.
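The overall estimate is a weighted average of the two ranges, which the following short Python sketch spells out. The function name `overall_power` and its parameter names are invented for this illustration; the inputs are the figures given above.

```python
def overall_power(power_in_range: float, share_in_range: float,
                  power_above_range: float = 1.0) -> float:
    """Weighted average of power across two groups of significant results.

    power_in_range:    estimated power for z-scores between 2 and 4
    share_in_range:    proportion of significant results in that range
    power_above_range: power assigned to z-scores above 4 (assumed 100%)
    """
    share_above = 1.0 - share_in_range
    return power_in_range * share_in_range + power_above_range * share_above


if __name__ == "__main__":
    # 46% power for 70% of the results, 100% power for the remaining 30%.
    print(f"{overall_power(0.46, 0.70):.2f}")
```

The result depends heavily on the simplifying rule that every z-score above 4 replicates; assigning those results a power below 100% would lower the overall figure.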

A power of 46% for z-scores between 2 and 4 is a higher estimate than the estimate for the 38 studies in the OSF-reproducibility project (35%). This suggests that the estimated replicability based on the OSF-results is an underestimation of the true replicability. The discrepancy between observed and predicted replicability in social psychology (8% vs. 38%) and cognitive psychology (50% vs. 75%) suggests that the rate of actual successful replications is about 20 to 30 percentage points lower than the success rate based on statistical prediction. Thus, the present analysis suggests that actual replication attempts of results in social psychology would produce significant results in about a quarter of all attempts (46% – 20% = 26%).

The large sample of test results makes it possible to make more detailed predictions for results with different strength of evidence. To provide estimates of replicability for different levels of evidence, I conducted post-hoc power analysis for intervals of half a standard deviation (z = .5). The power estimates are:

| Strength of Evidence (z-score) | Power |
|---|---|
| 2.0 to 2.5 | 33% |
| 2.5 to 3.0 | 46% |
| 3.0 to 3.5 | 58% |
| 3.5 to 4.0 | 72% |

IMPLICATIONS FOR PLANNING OF REPLICATION STUDIES

These estimates are important for researchers who are aiming to replicate a published study in social psychology. The reported effect sizes are inflated, and a replication study with the same sample size has a low chance of producing a significant result even if a smaller effect exists. To conduct a properly powered replication study, researchers would have to increase sample sizes. To illustrate, imagine that a study demonstrates a significant difference between two groups with 40 participants (20 in each cell) with a z-score of 2.3 (p = .02, two-tailed). The observed power for this result is 65%, and it would suggest that a slightly larger sample of N = 60 is sufficient to achieve 80% power (80% chance to get a significant result). However, after correcting for bias, the true power is more likely to be just 33% (see table above), and power for a study with N = 60 would still only be 50%. To achieve 80% power, the replication study would need a sample size of 130 participants. Sample sizes would need to be even larger taking into account that the actual probability of a successful replication is even lower than the probability based on post-hoc power analysis. In the OSF-project, only 1 out of 30 studies with an original z-score between 2 and 3 was successfully replicated.
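The logic of this example can be retraced with a simple normal approximation. The Python sketch below is an assumption-laden illustration rather than the method used for the numbers above: it treats the test statistic as a z-test, ignores the negligible opposite tail, and assumes that the expected z-score grows with the square root of the total sample size. All function names are invented for this example.

```python
import math
from statistics import NormalDist

STANDARD_NORMAL = NormalDist()
Z_CRIT = STANDARD_NORMAL.inv_cdf(0.975)  # two-tailed criterion for p < .05


def power_from_expected_z(expected_z: float) -> float:
    """Probability that an observed z exceeds the criterion (upper tail only)."""
    return 1.0 - STANDARD_NORMAL.cdf(Z_CRIT - expected_z)


def expected_z_from_power(power: float) -> float:
    """Expected z-score that yields the given power."""
    return Z_CRIT + STANDARD_NORMAL.inv_cdf(power)


def power_at_n(expected_z: float, n_original: int, n_new: int) -> float:
    """Power after changing the sample size (expected z scales with sqrt(N))."""
    return power_from_expected_z(expected_z * math.sqrt(n_new / n_original))


def n_for_power(expected_z: float, n_original: int, target: float = 0.80) -> int:
    """Smallest total N that reaches the target power under this approximation."""
    ratio = expected_z_from_power(target) / expected_z
    return math.ceil(n_original * ratio ** 2)


if __name__ == "__main__":
    naive_z = 2.3                                # observed z taken at face value
    corrected_z = expected_z_from_power(0.33)    # bias-corrected power from the table
    print("observed power:", round(power_from_expected_z(naive_z), 2))
    print("naive power at N = 60:", round(power_at_n(naive_z, 40, 60), 2))
    print("corrected power at N = 60:", round(power_at_n(corrected_z, 40, 60), 2))
    print("N for 80% power (corrected):", n_for_power(corrected_z, 40))
```

Under these assumptions the sketch gives values close to, but not identical with, the figures in the text, which were presumably computed with a slightly different procedure. The qualitative conclusion is the same: taking the observed z-score at face value suggests a modest increase to N = 60, whereas the bias-corrected power calls for a replication sample more than three times the original size.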

IMPLICATIONS FOR THE EVALUATION OF PUBLISHED RESULTS

The results also have implications for the way social psychologists should conduct and evaluate new research. The main reason why z-scores between 2 and 3 provide untrustworthy evidence for an effect is that they are obtained with underpowered studies and publication bias. As a result, it is likely that the strength of evidence is inflated. If, however, the same z-scores were obtained in studies with high power, a z-score of 2.5 would provide more credible evidence for an effect. The strength of evidence in a single study would still be subject to random sampling error, but it would no longer be subject to systematic bias. Therefore, the evidence would be more likely to reveal a true effect and it would be less likely to be a false positive. This implies that z-scores should be interpreted in the context of other information about the likelihood of selection bias. For example, a z-score of 2.5 in a pre-registered study provides stronger evidence for an effect than the same z-score in a study where researchers may have had a chance to conduct multiple studies and to select the most favorable results for publication.

The same logic can also be applied to journals and labs. A z-score of 2.5 in a journal with an average z-score of 2.3 is less trustworthy than a z-score of 2.5 in a journal with an average z-score of 3.5. In the former journal, a z-score of 2.5 is likely to be inflated, whereas in the latter journal a z-score of 2.5 is more likely to be negatively biased by sampling error. For example, currently a z-score of 2.5 is more likely to reveal a true effect if it is published in a cognitive journal than a social journal (see ranking of psychology journals).

The same logic applies even more strongly to labs because labs have a distinct research culture (MO). Some labs conduct many underpowered studies and publish only the studies that worked. Other labs may conduct fewer studies with high power. A z-score of 2.5 is more trustworthy if it comes from a lab with high average power than from a lab with low average power. Thus, providing information about the post-hoc-power of individual researchers can help readers to evaluate the strength of evidence of individual studies in the context of the typical strength of evidence that is obtained in a specific lab. This will create an incentive to publish results with strong evidence rather than fishing for significant results because a low replicability index increases the criterion at which results from a lab provide evidence for an effect.