Project Implicit is a nonprofit company founded in 1998 by three social psychologists:

Tony Greenwald (University of Washington)

Mahzarin Banaji (Harvard University)

Brian Nosek (University of Virginia)

Project Implicit is mainly known as the company that hosts a website where people receive (false) feedback about their implicit associations based on the Implicit Association Test (IAT). The website is hosted by Harvard University, whose name is prominently displayed in web search results, presumably because many Americans associate Harvard with excellent science.

However, ethical oversight of Project Implicit’s activities rests with the University of Virginia’s Institutional Review Board (IRB) for Social and Behavioral Sciences. The Harvard branding is real but largely a legacy of Banaji’s professorship there; the organization is legally independent of Harvard.

Project Implicit now also operates an independent site (“About the IAT – Project Implicit”). Thus, the connection with Harvard may come to an end, but the website hosted by Harvard is still operational.

People

Based on the ProPublica 990 data, the leaders of Project Implicit in the fiscal year 2025 were:

- Amy Jin Johnson — Executive Director (the only compensated employee, at $111,038)

- Dr. Brian Nosek — President (University of Virginia; co-founder)

- Dr. Kate Ratliff — Treasurer (University of Florida)

- Keith Maddox, PhD — Director

- Jarvis Idowu — Director

- Bayet Ross Smith — Director

The affiliation with University of Virginia and Brian Nosek’s role as president and co-founder make it clear that Brian Nosek is the main person responsible for the ethical integrity of Project Implicit’s scientific work and the administration of IATs to the general public.

Financials

The picture that emerges is of a very small operation that is burning through reserves. As a 501(c)(3), Project Implicit files Form 990s with the IRS, which are publicly accessible. The ProPublica Nonprofit Explorer has their filings going back to 2011.

For fiscal year ending September 2025: revenue of $104,552, expenses of $296,971, a net loss of $192,419, and total net assets of $365,382. The dominant revenue source was program services (82% of revenue, at $86,100), with investment income making up most of the rest. Public donations were negligible at $675 (0.6%).

The prior year (FY2024) showed revenue of $273,966 against expenses of $489,223 — another large deficit — and the year before that (FY2023) showed revenue of $436,655 against expenses of $522,546.

So revenues have dropped sharply over three years (~$437K → ~$274K → ~$105K) while expenses remain high relative to income. They are drawing down net assets at a significant rate.
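The arithmetic behind these figures can be checked with a few lines of Python. This is only a sketch that re-uses the numbers quoted above; the function names are illustrative, and the "runway" calculation rests on the hypothetical assumption that the FY2025 deficit simply repeats in future years, which the filings themselves do not state.

```python
# Revenue, expenses, and net assets exactly as quoted in the text above.
filings = {
    "FY2023": {"revenue": 436_655, "expenses": 522_546},
    "FY2024": {"revenue": 273_966, "expenses": 489_223},
    "FY2025": {"revenue": 104_552, "expenses": 296_971},
}
net_assets_fy2025 = 365_382


def deficit(year: str) -> int:
    """Expenses minus revenue for one fiscal year."""
    return filings[year]["expenses"] - filings[year]["revenue"]


def revenue_change(start: str, end: str) -> float:
    """Fractional change in revenue between two fiscal years."""
    first = filings[start]["revenue"]
    return (filings[end]["revenue"] - first) / first


# Hypothetical runway: years of reserves left if the FY2025 deficit repeated.
runway_years = net_assets_fy2025 / deficit("FY2025")

for year in filings:
    print(f"{year}: deficit ${deficit(year):,}")
print(f"Revenue change FY2023 to FY2025: {revenue_change('FY2023', 'FY2025'):.0%}")
print(f"Runway at the FY2025 deficit: {runway_years:.1f} years")
```

Under that assumption, the reserves would cover a little under two more years of operation at the current level of spending.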

The main source of revenue for Project Implicit is fees for program services:

- Corporate/organizational DEI training and consulting — companies, government agencies, universities, and HR departments pay Project Implicit to run implicit bias workshops, license the IAT for their own use, or deliver training programs. This has been a significant revenue stream for them, especially during the DEI boom years of 2020–2022.

- Licensing or access fees — organizations that want to use the IAT infrastructure for research or applied purposes may pay for that.

- Speaking and educational programs — paid engagements where Project Implicit personnel deliver training.

The trajectory tells an interesting story. Program service revenue went from ~$308K (FY2023) to ~$240K (FY2024) to ~$86K (FY2025), a collapse of roughly 72% in two years. That almost certainly tracks the broader pullback in corporate DEI spending that accelerated after 2023 and especially into 2024–2025. Thus, while the website hosts hundreds of different IATs, the race IAT is the bread-and-butter IAT that funds the organization. The collapse in revenues can be explained by the changing political climate under the “Make Racism Great Again” policies of the MAGA government. There is no evidence that sustained criticism of the validity of IATs in general and the race IAT specifically over the past decades has contributed to this sharp drop in revenues.

Mission Statement

Old mission statement, https://app-prod-03.implicit.harvard.edu/implicit/aboutus.html (retrieved June 1, 2026)

Project Implicit’s mission statement has changed considerably over time, against the backdrop of accumulating scientific criticism of the IAT and the organization’s broader institutional repositioning. The changes are visible not only in the language itself, but also in where the organization now presents itself to the public.

An older version still visible on the Harvard-hosted site describes an organization that “provides consulting, education, and training services on implicit bias, diversity and inclusion, leadership, applying science to practice, and innovation” (app-prod-03.implicit.harvard.edu, retrieved June 1, 2026). The earliest version cached by the Wayback Machine, from 2013, contains the same language. The current projectimplicit.net site describes its educational work in considerably more cautious terms, as providing “research-based educational programs that translate findings from cognitive science into clear, accessible understanding of judgment and decision-making, without prescribing behavior change or organizational intervention.”

The phrase “without prescribing behavior change or organizational intervention” marks a significant retreat. The earlier language presented Project Implicit as an organization that translated implicit-bias science into diversity, inclusion, leadership, and applied organizational practice. The current language distances the organization from prescriptive behavior change and organizational intervention. This does not mean that Project Implicit has abandoned all consulting or educational services. Rather, it means that the organization has narrowed the public rationale for those services. It no longer presents itself as directly prescribing organizational change, but as providing research-based education about judgment and decision-making.

That retreat is important, but it is incomplete. Even the current mission statement continues to claim the authority of “research-based” education and the translation of “findings from cognitive science.” Those phrases preserve the impression that Project Implicit is communicating settled scientific knowledge. But the central scientific problem remains unresolved. The issue is not whether racial disparities, prejudice, or discrimination exist. They plainly do. The issue is whether IAT scores validly measure implicit prejudice at the individual level, and whether individualized feedback about hidden racial bias is scientifically justified.

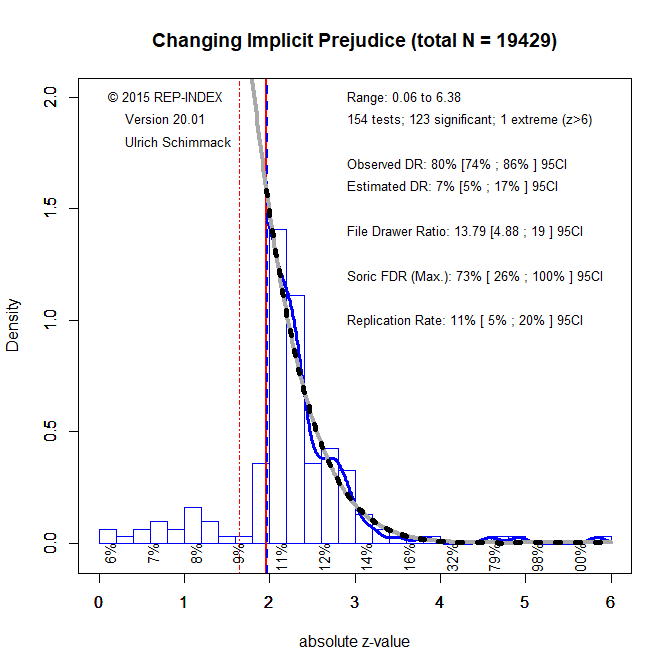

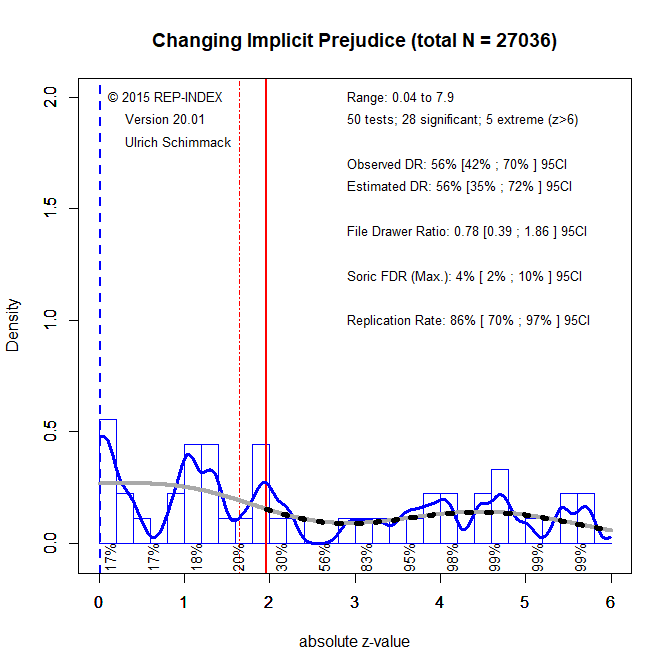

The evidence does not support that stronger interpretation. IAT scores have limited validity, weak relations with behavior, and substantial ambiguity in what they measure (Schimmack, 2021). They are influenced by task-specific processes, cultural associations, and systematic sources of measurement error. In the case of the race IAT, the color-valence confound raises the additional possibility that scores partly reflect general associations with black and white rather than racial attitudes themselves. These limitations are not minor qualifications. They go to the construct validity of the measure and to the ethical defensibility of giving people individualized feedback about hidden racial bias.

Ethics

The administration of psychological tests to assess individuals with clinical relevance is regulated by professional bodies such as the American Psychological Association. However, these strict ethical rules do not apply to tests that are administered for other purposes. Anybody can host a website and give people scores on some test.

Millions of people have taken tests like astrological birth chart generators or the “What kind of pizza are you?” test (Pizza Test). However, as academics, Brian Nosek and Project Implicit are required to have ethical approval for the administration of IATs, especially because they are using the data for research purposes. Currently, the IRB of the University of Virginia is responsible for the ethical oversight of Project Implicit’s activities.

The IRB protocol obtained from Brian Nosek — the only document he could find, dated 2006 — confirms that the ethical oversight of Project Implicit has not kept pace with the scientific evidence.

The 2006 protocol acknowledges that participants may be “surprised” and “concerned” by their results, and promises debriefing that contextualizes scores as having “no direct implications for individual scores.” But it makes no mention of the limited reliability of IAT scores, the color-valence confound, the absence of construct validity evidence, or the specific risks to African American participants of being told they harbor hidden pro-White bias.

A protocol written in 2006, before the major validity critiques were published, and apparently never formally updated, cannot provide adequate ethical oversight for a research enterprise that has since accumulated overwhelming evidence of the instrument’s limitations. The fact that Nosek’s response to a direct request for the current IRB protocol was to send a 20-year-old document is itself an answer.

UVA seems to treat this project like any other research project, but Project Implicit research is different because it gives people feedback about potential hidden biases. The key claim is that they measure processes that are not directly accessible to introspection. This is also used to explain why people may receive feedback that is inconsistent with their self-perceptions: the supposed reason is that the test revealed something true about them that is not accessible to conscious awareness, much like a psychoanalyst claiming to recover a forgotten or repressed memory. These claims are controversial because they are difficult to verify, and the epistemic structure is problematic: participants cannot dispute the feedback on the basis of their own experience because the whole point is that the bias is hidden from them. The danger is that discrepancies between IAT scores and self-perceptions are more likely to reflect measurement error in the IAT than truly hidden biases, a conclusion supported by published psychometric research (Schimmack, 2021). As a result, a substantial proportion of participants will receive false feedback about racial attitudes they do not hold, and they are not told in debriefing that the most likely reason for surprising results is measurement error.

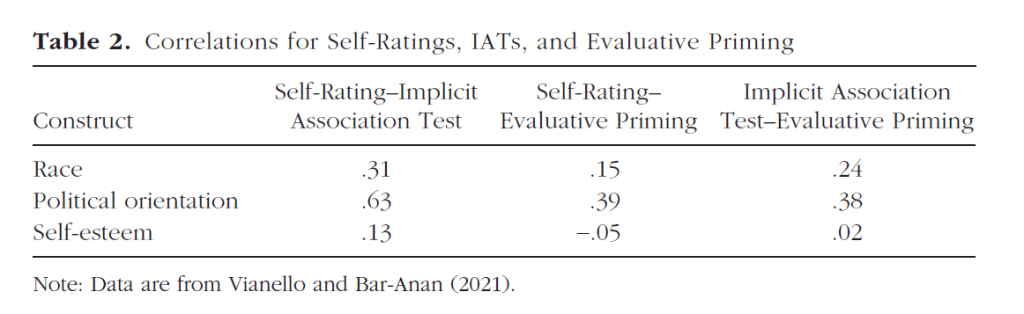

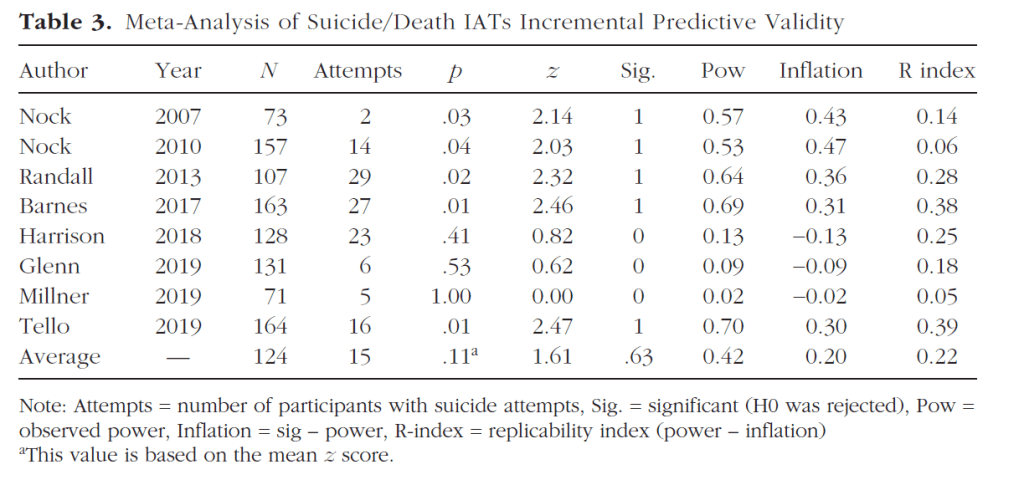

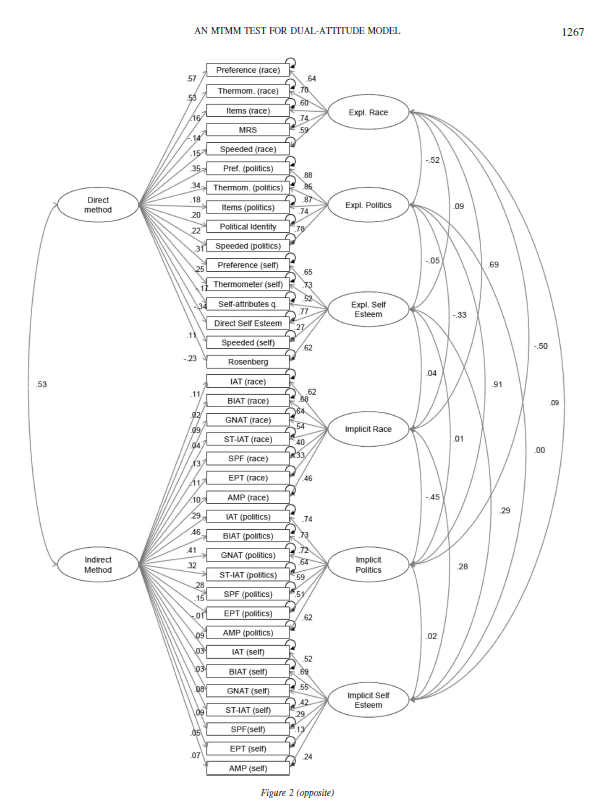

Implicit Biases of Project Implicit

Given the seriousness of providing people with feedback about hidden biases on topics like prejudice, depression, and suicide, one might expect that Project Implicit has carefully evaluated the psychometric properties of IATs — that is, assessed the accuracy of IAT scores. However, this is not the case. None of the three founding members has training in psychometrics or demonstrated understanding of modern test theory, as evidenced by their failure to apply basic psychometric concepts such as discriminant validity, convergent validity with other implicit measures, or the fundamental constraint that validity cannot exceed reliability (Schimmack, 2021).
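The constraint that validity cannot exceed reliability can be written out in a few lines. This is a sketch under standard classical test theory assumptions, not a derivation taken from the cited work: the symbols are chosen here for illustration, with an observed score X split into valid variance V (the construct), stable but construct-irrelevant variance S, and random error E, all assumed to be uncorrelated.

```latex
\[
X = V + S + E, \qquad
\operatorname{Var}(X) = \operatorname{Var}(V) + \operatorname{Var}(S) + \operatorname{Var}(E)
\]
\[
\underbrace{\frac{\operatorname{Var}(V)}{\operatorname{Var}(X)}}_{\text{validity}}
\;\le\;
\underbrace{\frac{\operatorname{Var}(V) + \operatorname{Var}(S)}{\operatorname{Var}(X)}}_{\text{reliability}}
\]
```

Because Var(S) cannot be negative, the share of valid variance can equal the share of reliable variance only when there is no stable construct-irrelevant variance at all; any such variance pushes validity below reliability.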

Most of the discussion of measurement error in the IAT literature has focused on random measurement error and situational influences on IAT scores. This limited focus ignores that IAT scores can also be influenced by systematic measurement error. Random error averages out across repeated administrations; systematic error does not. If IAT scores are systematically influenced by factors such as cognitive ability or task-switching rather than hidden bias, repeated testing will not produce valid feedback about hidden biases. Neglect of systematic measurement error is common in psychology, but the ethical stakes are considerably higher when such error invalidates personal feedback about sensitive topics like racial prejudice, depression, or suicidal ideation.
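A small simulation makes the difference between the two kinds of error concrete. It is a minimal sketch with made-up numbers: the hypothetical person has a true attitude of zero, every administration adds the same constant contamination (`systematic_bias`) plus fresh random noise, and none of the values are estimates from real IAT data.

```python
import random
import statistics

random.seed(1)  # fixed seed so the sketch is reproducible


def mean_observed_score(true_attitude, systematic_bias, n_tests, noise_sd=1.0):
    """Average observed score over n_tests simulated administrations."""
    scores = [
        true_attitude + systematic_bias + random.gauss(0, noise_sd)
        for _ in range(n_tests)
    ]
    return statistics.mean(scores)


true_attitude = 0.0    # hypothetical person with no bias at all
systematic_bias = 0.4  # hypothetical constant contamination, e.g. task-switching cost

one_test = mean_observed_score(true_attitude, systematic_bias, 1)
many_tests = mean_observed_score(true_attitude, systematic_bias, 10_000)

print(f"single administration: {one_test:.2f}")
print(f"mean of 10,000 administrations: {many_tests:.2f}")
```

The average over many administrations settles near the systematic bias rather than near the true attitude: repetition removes the noise but leaves the contamination untouched.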

The finding that the average white, Asian, or non-white Hispanic American finds it easier to associate white with good and black with bad rather than the other way around does not mean that they are prejudiced against Black people. It also does not show that they are unbiased. In fact, self-reports show that a substantial number of people are aware of and willing to admit their prejudices.

Brian Nosek, the president of Project Implicit, has ignored scientific criticism of the interpretation of IAT scores made by numerous researchers using independent lines of argument. One is that IAT scores show low convergent validity with other implicit measures, meaning that a person classified as biased on the IAT may not be classified as biased on other implicit measures of the same construct. Yet visitors to the Project Implicit website are offered only the IAT, with no acknowledgment that other implicit measures exist or that they frequently disagree with IAT scores. While the name Project Implicit implies a focus on implicit constructs, the site is really just promoting the Implicit Association Test, even though it lacks validity to measure implicit biases.

Is the race IAT itself racist?

The scoring of the race IAT rests on a simple assumption. If a person responds faster when white is paired with good and black with bad than when black is paired with good and white with bad, the person is scored as showing an implicit bias favoring whites. This scoring assumes that a value of zero corresponds to a psychological attitude that is neutral and unbiased. While this assumption is intuitively appealing, it requires scientific evidence. An alternative possibility is that scores on the race IAT are also influenced by factors that have nothing to do with prejudice.
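The logic of a difference score, and why its zero point matters, can be illustrated with a deliberately simplified sketch. This is not Project Implicit's scoring code: the function name, the latencies, and the 50 ms offset are all hypothetical, and the published scoring algorithm (Greenwald, Nosek, & Banaji, 2003) includes additional steps that are not reproduced here.

```python
import statistics


def simple_iat_score(white_good_ms, black_good_ms):
    """Mean latency difference between the two pairings, scaled by the
    standard deviation of all trials. Positive = faster on white-good."""
    diff = statistics.mean(black_good_ms) - statistics.mean(white_good_ms)
    spread = statistics.stdev(white_good_ms + black_good_ms)
    return diff / spread


# Hypothetical latencies (milliseconds) for a person with no preference.
latencies = [600, 650, 700, 750]
neutral = simple_iat_score(latencies, latencies)

# Same person, but a source unrelated to racial attitudes (for example a
# color association) slows every black-good trial by a hypothetical 50 ms.
contaminated = simple_iat_score(latencies, [t + 50 for t in latencies])

print(f"no contamination: {neutral:.2f}")
print(f"with 50 ms offset: {contaminated:.2f}")
```

In this toy case the person's attitude is identical in both runs, yet the second score is above zero. A score of zero marks neutrality only if nothing other than the attitude contributes to the latency difference.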

One way to validate the assumption is to see how scores on the IAT are related to actual behaviors. If zero reflects neutrality, people with scores above zero should show prejudice in their behaviors and people with scores below zero should show the opposite pattern, a preference for Black people. However, no compelling evidence has been provided that reaction time differences map directly onto the amount of bias in behavior.

A critical analysis of the literature found no evidence supporting the scoring of the race IAT that is used to provide people with feedback about their hidden biases (Blanton, Jaccard, Strauts, Mitchell, & Tetlock, 2015) [ironically, Mitchell is also affiliated with UVA, which oversees the ethics of Project Implicit]. Since then, Project Implicit has not responded to this criticism and has not produced research demonstrating that the scoring of the race IAT is valid. Nor have Brian Nosek or the other founders of Project Implicit responded to more recent criticisms (Schimmack, 2021).

Moreover, research has examined why the IAT may itself be biased towards white-good/black-bad associations. The first problem is that American culture is filled with racial stereotypes that associate Black people with negative attributes. Mere awareness of these stereotypes may influence IAT scores, even if people hold favorable attitudes towards specific Black people or even African Americans as a group (Olson & Fazio, 2004). Even African Americans are aware of these stereotypes and their responses may be influenced by these associations. In support of this argument, responses are more neutral on other tasks that rely on specific stimuli (faces of European and African Americans) rather than abstract associations.

More challenging for the race IAT is the finding that simple color associations explain a substantial portion of the variance in scores on the race IAT (Smith-McLallen, Johnson, Dovidio, & Pearson, 2006). This means the race IAT is not a pure measure of racial biases because it is contaminated by general associations related to the colors white and black. Although this problem was reported 20 years ago, it has been largely ignored by the research community and by Project Implicit. The implication is that African Americans who like white cars and white clothing may receive feedback that they have a hidden bias against African Americans.

Durgin, Diop, Lewis-Owona, and Eaton (in press) replicated and substantially extended Smith-McLallen et al.’s findings across six experiments. They showed that the correlation between color IAT scores and race IAT scores is of similar magnitude to the test-retest correlation of the race IAT itself, suggesting that the two instruments are measuring largely the same underlying construct. Critically, the shared variance between the color and race IATs was not explained by explicit racial bias but by metaphoric alignments of black and white — the deep cultural association of darkness with evil present across racial groups. Even Black participants showed similar metaphoric color alignments to White participants, and a blue-gray color IAT showed no correlation with the race IAT, confirming the effect is specific to black-white alignments rather than a general method artifact.

These results undermine the validity of race IAT scores, especially for African Americans. This matters because the validity of test scores must be assessed within populations, not just in aggregate. However, IAT validation studies have relied exclusively on White or mixed samples, meaning the test has never been properly validated for African Americans. Durgin et al.’s findings suggest that race IAT scores are even less valid for African Americans than for European Americans, as the metaphoric color bias and in-group effects pull in opposing directions, making individual scores particularly difficult to interpret.

Good Intentions and Bad Behavior

Racists often accuse social psychologists of a left-leaning, liberal bias. However, racial equality is enshrined in the 13th, 14th, and 15th Amendments to the Constitution of the United States, passed after the Northern States won the Civil War against the Confederate States that sought to maintain slavery. Working towards Martin Luther King’s dream of actual racial equality is therefore aligned with the moral and political ideals of the United States.

Project Implicit was founded on the idea that many Americans embrace Martin Luther King’s dream but often act in violation of egalitarian principles — sometimes due to limitations in their ability to control their behavior, and sometimes because they are not even aware that their actions are influenced by race. The founding vision of Project Implicit was that a five-minute reaction time task could help people become aware of their biases, and that this awareness would be a first step toward changing their behavior.

The problem is that early on, research findings suggested that the race IAT could not deliver on this promise. However, well-known motivated biases made it impossible for Nosek, Banaji, and Greenwald to acknowledge their own biases and temper their enthusiasm about IATs as “windows into people’s unconscious” (Banaji & Greenwald, 2013). Instead, they continued to promote the test and the concept of implicit bias aggressively to a broad public audience, generated substantial revenues for Project Implicit, and ignored valid criticism of IATs as measures of implicit biases.

At this point, the dream of Martin Luther King and the dream of Nosek, Banaji, and Greenwald diverged. Project Implicit promoted a research program and a task that did not increase awareness of bias and did not reduce racism. In fact, the recent surge in open, old-fashioned racism may partly reflect a backlash against DEI programs and implicit bias training. Some people did not resent feedback that they were racist — they resented the implication that racism is bad and that they need to change. These people are now fighting back against DEI programs because they wish to maintain the racial hierarchy established during slavery and perpetuated through the Jim Crow laws of former Confederate states.

Project Implicit was built on a false understanding of racism in the United States, an invalid measure of racial bias, and a failure to connect laboratory findings to actual discriminatory behavior. These problems might have been recognized sooner had Project Implicit — which derived most of its revenues from the use of the race IAT in DEI training — consulted with African American communities or scholars. There is little public evidence that their work on racial issues involved meaningful engagement with the actual targets of racial discrimination.

Giving False Feedback to African Americans

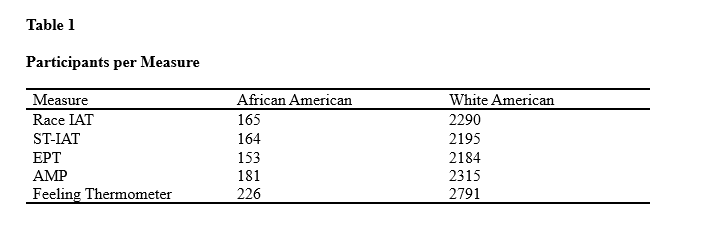

It seems that Brian Nosek trusted the validity of the race IAT even when self-reports of African Americans suggested otherwise (Jost, Banaji, & Nosek, 2004). Millions of people have taken the race IAT on the Project Implicit website and also reported their consciously accessible preferences. Many of them were African Americans and research articles show their results at the aggregate level.

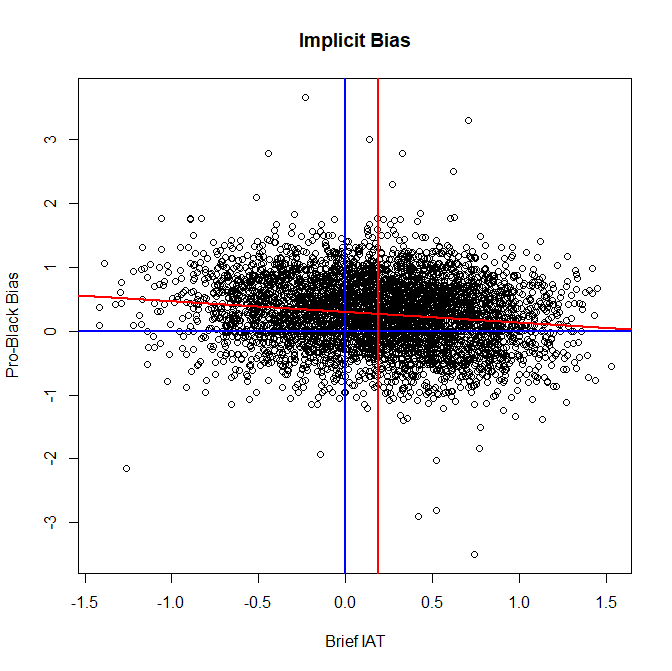

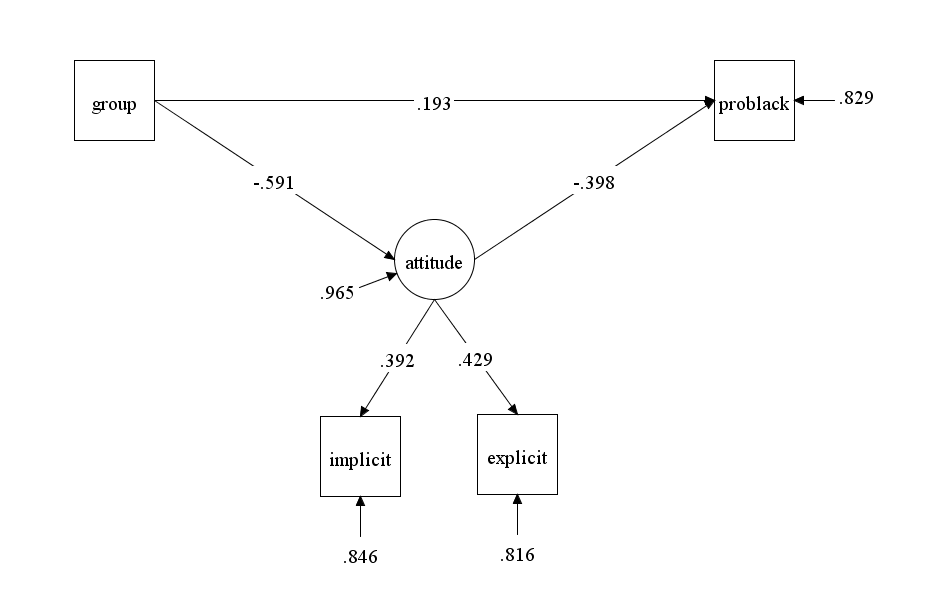

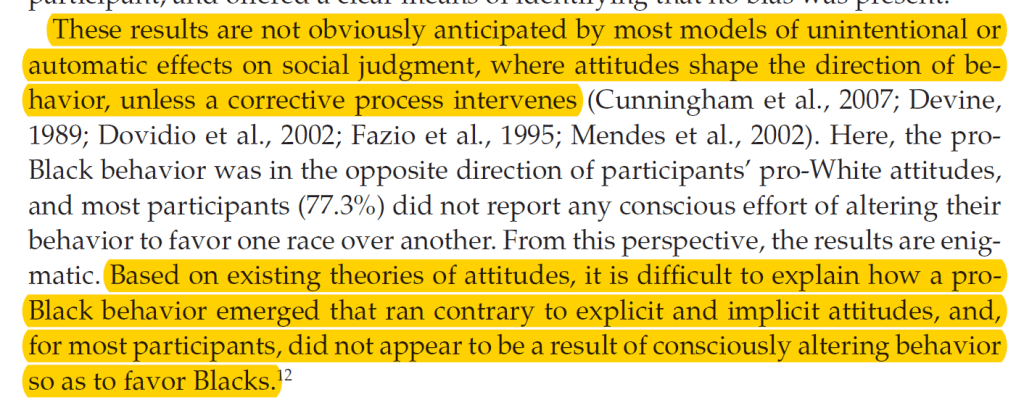

A robust finding based on hundreds of thousands of scores shows a striking dissociation in African Americans’ racial attitudes: on explicit self-report measures, African Americans show strong ingroup favoritism, clearly preferring their own group, yet on the race IAT they score close to zero, showing no consistent preference for either Black or White (Nosek et al., 2007; Jost et al., 2004).

This dissociation has two possible interpretations. Either African Americans hold two genuinely different attitudes — one conscious and pro-Black, one unconscious and neutral or pro-White — or they hold one attitude, the explicit measure captures it accurately, and the IAT is biased for this group in ways that suppress the ingroup preference that is clearly present in self-reports. The second interpretation is strongly supported by the documented color-valence confound in the race IAT, the near-zero mean being equally consistent with cultural contamination of the measure, and the fundamental psychometric principle that validity cannot exceed reliability.

Nevertheless, Nosek, Banaji, and Greenwald — three non-African American scholars with no documented engagement with African American communities or scholars — chose the most psychologically and politically loaded interpretation available: that many African Americans harbor a hidden pro-White bias rooted in system justification, a motivated tendency to endorse the existing social order even when that order places them at the bottom of the racial hierarchy.

This is a remarkable claim. Translated out of theoretical language, it asserts that the race IAT reveals that many African Americans are unconsciously motivated to maintain a social system that affords them fewer rights, lower status, and less economic opportunity than White Americans. The claim is made on the basis of a psychometrically compromised instrument, without consulting African American communities or scholars, and in direct contradiction of the most obvious behavioral evidence available. African Americans vote overwhelmingly Democratic — approximately 80% overall and 90% among women — consistently supporting the party associated with anti-racism policies and government intervention to address racial inequality. This is not the behavior of a group that unconsciously endorses the racial status quo. More broadly, African Americans have actively resisted racial hierarchy throughout their entire history in the United States, from the abolitionist movement and Reconstruction to the civil rights movement and beyond. System justification theory, as applied to African Americans through the race IAT, mistakes the cognitive fingerprints of living under racism for psychological endorsement of it.

Although this claim was made in the most highly cited article in the journal Political Psychology (1,277 citations in Web of Science), it has received little critical attention outside the academic literature. Black activists and scholars working on racism have largely ignored this work rather than directly challenging it — not because they accept it, but because Project Implicit’s research program is so disconnected from the empirical traditions and practical concerns that dominate Black psychology and anti-racism activism. This neglect further underscores that Project Implicit operates largely in isolation from broader anti-racism efforts in the United States. African American scholars from W.E.B. Du Bois onward have had good reasons to be skeptical of psychological instruments developed by White researchers to make claims about the inner lives of Black Americans — the history of IQ testing used to pathologize Black communities is instructive. Project Implicit repeated this pattern without appearing to recognize it. The fundamental problem is that the focus of Project Implicit is the measure, not the construct of racial bias. An organization genuinely committed to understanding and reducing racism would follow the evidence wherever it leads, including away from its flagship instrument. Project Implicit has done the opposite.

It is particularly troubling that this interpretation of African Americans’ scores was made by prominent members of Project Implicit, including Nosek himself. If the system justification interpretation is wrong — and the psychometric evidence strongly suggests it is — then African Americans who receive pro-White feedback on the race IAT are being told something false and potentially harmful about their own psychology. The ethical stakes are highest precisely for this group, yet the 2006 IRB protocol makes no mention of the specific risks to African American participants, provides no tailored debriefing to address the system justification interpretation, and offers no guidance on how to contextualize a pro-White result for a Black participant who strongly identifies with their own group. This is not a minor oversight. It is the most serious ethical failure in Project Implicit’s research program.

Conclusion: So, What is Project Implicit?

In my opinion, Project Implicit is a research project built around an experimental paradigm. Participants are asked to perform two complementary reaction time tasks, and the outcome is the difference in response times between them. This task is called the Implicit Association Test. Like many experimental paradigms, the IAT gives social psychologists something to do and write articles about. This academic research is inexpensive and not directly connected to real-world problems. It is basic research by academics in the ivory tower, for researchers in other ivory towers.

However, Project Implicit took this experimental paradigm and presented it to the public as a valid measure of hidden biases and unconscious processes, and as a tool capable of assessing those processes at the level of individual people. It provided individuals with feedback about their scores on a publicly accessible website, used the research to support seminars and public speaking engagements about implicit bias, and claimed that this work could address real social problems. This marketing was extremely effective, in part due to Banaji’s affiliation with Harvard, and Project Implicit generated substantial revenues over two decades while ignoring mounting evidence that the IAT is not a valid instrument for studying racism or reducing it.

Largely unrelated to this scientific evidence, the resurgence of open racism in American politics is draining Project Implicit of revenue, and the organization appears to be running out of money. This would be a serious loss if Project Implicit had made genuine progress in the fight against racism. But it did not. Instead, it deflected attention from real problems and drained resources — financial, institutional, and intellectual — from more effective anti-racism efforts. The projected demise of Project Implicit is therefore a blessing in disguise.

Unfortunately, the real problem of racism remains. Many Americans are unwilling to abandon their racial prejudices and to treat all people as equal under the law. Martin Luther King’s dream remains elusive — not because we lacked a reaction time task to measure hidden bias, but because we lacked the collective will to confront the bias that was never hidden at all.

References

Axt, J. R., Connor, P., Hoogeveen, S., Clark, C. J., Vianello, M., Lahey, J. N., Hahn, A., To, J., Petty, R. E., Costello, T. H., Mitchell, G., Tetlock, P. E., & Uhlmann, E. L. (in press). On the relationship between indirect measures of Black vs. White racial attitudes and discriminatory outcomes: An adversarial collaboration using a sample of White Americans. Journal of Personality and Social Psychology.

Banaji, M. R., & Greenwald, A. G. (2013). Blindspot: Hidden biases of good people. New York: Delacorte Press.

Blanton, H., & Jaccard, J. (2006). Arbitrary metrics in psychology. American Psychologist, 61(1), 27–41.

Blanton, H., Jaccard, J., Strauts, E., Mitchell, G., & Tetlock, P. E. (2015). Toward a meaningful metric of implicit prejudice. Journal of Applied Psychology, 100(5), 1468–1481.

Durgin, F. H., Diop, S. M., Lewis-Owona, J., & Eaton, O. (in press). A downside of conceptual metaphor: Metaphoric alignments of black and white. Manuscript submitted for publication.

Greenwald, A. G., McGhee, D. E., & Schwartz, J. L. K. (1998). Measuring individual differences in implicit cognition: The Implicit Association Test. Journal of Personality and Social Psychology, 74(6), 1464–1480.

Greenwald, A. G., Nosek, B. A., & Banaji, M. R. (2003). Understanding and using the Implicit Association Test: I. An improved scoring algorithm. Journal of Personality and Social Psychology, 85(2), 197–216.

Hahn, A., & Gawronski, B. (2019). Facing one’s implicit biases: From awareness to acknowledgment. Journal of Personality and Social Psychology, 116(5), 769–794.

Jost, J. T., Banaji, M. R., & Nosek, B. A. (2004). A decade of system justification theory: Accumulated evidence of conscious and unconscious bolstering of the status quo. Political Psychology, 25(6), 881–919.

Karpinski, A., & Hilton, J. L. (2001). Attitudes and the Implicit Association Test. Journal of Personality and Social Psychology, 81(5), 774–788.

Kurdi, B., Seitchik, A. E., Axt, J. R., Carroll, T. J., Karapetyan, A., Kaushik, N., … & Greenwald, A. G. (2019). Relationship between the Implicit Association Test and intergroup behavior: A meta-analysis. American Psychologist, 74(5), 569–586.

McFarland, S. G., & Crouch, Z. (2002). A cognitive skill confound on the Implicit Association Test. Social Cognition, 20(6), 483–510.

Meier, B. P., Robinson, M. D., & Clore, G. L. (2004). Why good guys wear white: Automatic inferences about stimulus valence based on brightness. Psychological Science, 15(2), 82–87.

Meier, B. P., Fetterman, A. K., & Robinson, M. D. (2015). The brightness of your smile: The solar hypothesis of the affect-brightness link. In Handbook of embodied cognition and sport psychology. MIT Press.

Nosek, B. A., Banaji, M. R., & Greenwald, A. G. (2002). Harvesting implicit group attitudes and beliefs from a demonstration website. Group Dynamics: Theory, Research, and Practice, 6(1), 101–115.

Nosek, B. A., Smyth, F. L., Hansen, J. J., Devos, T., Lindner, N. M., Ranganath, K. A., … & Banaji, M. R. (2007). Pervasiveness and correlates of implicit attitudes and stereotypes. European Review of Social Psychology, 18(1), 36–88.

Olson, M. A., & Fazio, R. H. (2004). Reducing the influence of extrapersonal associations on the Implicit Association Test: Personalizing the IAT. Journal of Personality and Social Psychology, 86(5), 653–667.

Oswald, F. L., Mitchell, G., Blanton, H., Jaccard, J., & Tetlock, P. E. (2013). Predicting ethnic and racial discrimination: A meta-analysis of IAT criterion studies. Journal of Personality and Social Psychology, 105(2), 171–192.

Oswald, F. L., Mitchell, G., Blanton, H., Jaccard, J., & Tetlock, P. E. (2015). Using the IAT to predict ethnic and racial discrimination: Small effect sizes of unknown societal significance. Journal of Personality and Social Psychology, 108(4), 562–571.

Pew Research Center. (2015, August 19). Exploring racial bias among biracial and single-race adults: The IAT. https://www.pewresearch.org/social-trends/2015/08/19/exploring-racial-bias-among-biracial-and-single-race-adults-the-iat/

ProPublica Nonprofit Explorer. Project Implicit Inc (EIN: 20-3939536). https://projects.propublica.org/nonprofits/organizations/203939536

Schimmack, U. (2021). The Implicit Association Test: A method in search of a construct. Perspectives on Psychological Science, 16(2), 396–414.

Smith-McLallen, A., Johnson, B. T., Dovidio, J. F., & Pearson, A. R. (2006). Black and White: The role of color bias in implicit race bias. Social Cognition, 24(1), 46–73.

Worden, R. E., Najdowski, C. J., McLean, S. J., Worden, K. M., Corsaro, N., Cochran, H., & Engel, R. S. (2024). Implicit bias training for police: Evaluating impacts on enforcement disparities. Law and Human Behavior, 48(5–6), 338–355.