Ten years ago, the foundations of psychological science were shaken by the realization that the standard scientific method of psychological science is faulty. Since then, it has become apparent that many classic findings are not replicable and many widely used measures are invalid, especially in social psychology (Schimmack, 2020).

However, it is not uncommon to read articles in 2021 that ignore the low credibility of published results. There are too many of these pseudo-scientific articles, but some articles matter more than others, at least to me. I do care about suicide and, like many people my age, I know people who have committed suicide. I was therefore concerned when I saw a review article that examines suicide from a dual-process perspective.

My main concern about this article is that dual-process models in social cognition are based on implicit priming studies with low replicability and implicit measures with low validity (Schimmack, 2021a, 2021b). It is therefore unclear how dual-process models can help us to understand and prevent suicides.

After reading the article, it is clear that the authors make many false statements and present questionable studies that have never been replicated as if they produce a solid body of empirical evidence.

Introduction of the Article

The introduction cites outdated studies that have either not been replicated or produced replication failures.

“Our position is that even these integrative models omit a fundamental and well-established dynamic of the human mind: that complex human behavior is the result of an interplay between relatively automatic and relatively controlled modes of thought (e.g., Sherman et al., 2014). From basic processes of impression formation (e.g., Fiske et al., 1999) to romantic relationships (e.g., McNulty & Olson, 2015) and intergroup relations (e.g., Devine, 1989), dual-process frameworks that incorporate automatic and controlled cognition have provided a more complete understanding of a broad array of social phenomena.”

This is simply not true. For example, there is no evidence that we implicitly love our partners when we consciously hate them or vice versa, and there is no evidence that prejudice occurs outside of awareness.

“Automatic cognitions can be characterized as unintentional (i.e., inescapably activated), uncontrollable (i.e., difficult to stop), efficient in operation (i.e., requiring few cognitive resources), and/or unconscious (Bargh, 1994) and are typically captured with implicit measures.”

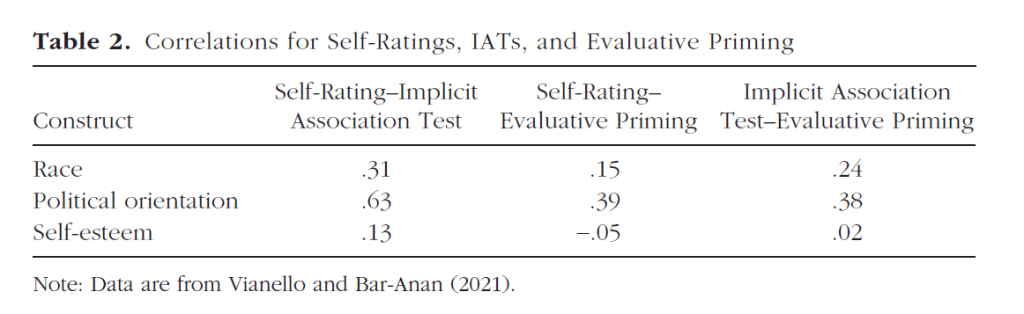

This statement ignores many articles that have criticized the assumption that implicit measures measure implicit constructs. Even the proponents of the most widely used implicit measure have walked back this assumption (Greenwald & Banaji, 2017).

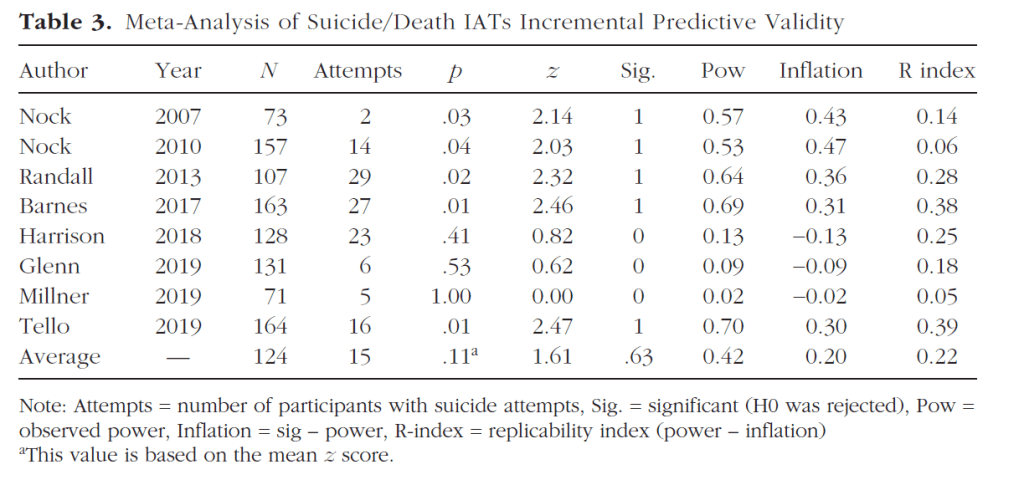

The authors then make the claim that implicit measures of suicide have incremental predictive validity of suicidal behavior.

“For example, automatic associations between the self and death predict suicidal ideation and action beyond traditional explicit (i.e., verbal) responses (Glenn et al., 2017).”

This claim has been made repeatedly by proponents of implicit measures, so I meta-analyzed the small set of studies that tested this prediction (Schimmack, 2021). Some of these studies produced non-significant results and the literature showed evidence that questionable research practices were used to produce significant results. Overall, the evidence is inconclusive. It is therefore incorrect to point to a single study as if there is clear evidence that implicit measures of suicidality are valid.

Further statements are also based on outdated research and a single reference.

“Research on threat has consistently shown that people preferentially process dangers to physical harm by prioritizing attention, response, and recall regarding threats (e.g., Öhman & Mineka, 2001).”

There have been many proposals about stimuli that attract attention, and threatening stimuli are by no means the only attention-grabbing stimuli. Sexual stimuli also attract attention, and in general, arousal rather than valence or threat is a better predictor of attention (Schimmack, 2005).

It is also not clear how threatening stimuli are relevant for suicide, which is related to depression rather than anxiety disorders.

The introduction of implicit measures totally disregards the controversy about the validity of implicit measures or the fact that different implicit measures of the same construct show low convergent validity.

“Much has been written about implicit measures (for reviews, see De Houwer et al., 2009; Fazio & Olson, 2003; March et al., 2020; Nosek et al., 2011; Olson & Fazio, 2009), but for the present purposes, it is important to note the consensus that implicit measures index the automatic properties of attitudes.”

More relevant are claims that implicit measures have been successfully used to understand a variety of clinical topics.

“The application of a dual-process framework has consequently improved explanation and prediction in a number of areas involving mental health, including addiction (Wiers & Stacy, 2006), anxiety (Teachman et al., 2012), and sexual assault (Widman & Olson, 2013). Much of this work incorporates advances in implicit measurement in clinical domains (Roefs et al., 2011).”

The authors then make the common mistake of conflating self-deception and other-deception. The notion of implicit motives that can influence behavior without awareness implies self-deception. An alternative rationale for the use of implicit measures is that they are better measures of consciously accessible thoughts and feelings that individuals are hiding from others. Here we do not need to assume a dual-process model. We simply have to assume that self-report measures are easy to fake, whereas implicit measures can reveal the truth because they are difficult to fake. Thus, even incremental predictive validity does not automatically support a dual-process model of suicide. However, this question is only relevant if implicit measures of suicidality show incremental predictive validity, which has not been demonstrated.

“Consistent with the idea that such automatic evaluative associations can predict suicidality later, automatic spouse-negative associations predicted increases in suicidal ideation over time across all three studies, even after accounting for their controlled counterparts (McNulty et al., 2019).”

Conclusion Section

In the conclusion section, the authors repeat their false claim that implicit measures of suicidality reflect valid variance in implicit suicidality and that they are superior to explicit measures.

“As evidence of their impact on suicidality has accumulated, so has the need for incorporating automatic processes into integrative models that address questions surrounding how and under what circumstances automatic processes impact suicidality, as well as how automatic and controlled processes interact in determining suicide-relevant outcomes.”

“Implicit measures are better-suited to assess constructs that are more affective (Kendrick & Olson, 2012), spontaneous (e.g., Phillips & Olson, 2014), and uncontrollable (e.g., Klauer & Teige-Mocigemba, 2007).”

“As recent work has shown (e.g., Creemers et al., 2012; Franck, De Raedt, Dereu, et al., 2007; Franklin et al., 2016; Glashouwer et al., 2010; Glenn et al., 2017; Hussey et al., 2016; McNulty et al., 2019; Nock et al., 2010; Tucker, Wingate, et al., 2018), the psychology of suicidality requires formal consideration of automatic processes, their proper measurement, and how they relate to one another and corresponding controlled processes.”

“We have articulated a number of hypotheses, several already with empirical support, regarding interactions between automatic and controlled processes in predicting suicidal ideation and lethal acts, as well as their combination into an integrated model.”

Then they finally mention the measurement problems of implicit measures.

“Research utilizing the model should be mindful of specific challenges. First, although the model answers calls to diversify measurement in suicidality research by incorporating implicit measures, such measures are not without their own problems. Reaction time measures often have problematically low reliabilities, and some include confounds (e.g., Olson et al., 2009). Further, implicit and explicit measures can differ in a number of ways, and structural differences between them can artificially deflate their correspondence (Payne et al., 2008). Researchers should be aware of the strengths and weaknesses of implicit measures.”

Evaluation of the Evidence

Here I provide a brief summary of the actual results of studies cited in the review article so that readers can make up their own mind about the relevance and credibility of the evidence.

Creemers, D. H., Scholte, R. H., Engels, R. C., Prinstein, M. J., & Wiers, R. W. (2012). Implicit and explicit self-esteem as concurrent predictors of suicidal ideation, depressive symptoms, and loneliness. Journal of Behavior Therapy and Experimental Psychiatry, 43(1), 638–646

Participants: 95 undergraduate students

Implicit Construct / Measure: Implicit self-esteem / Name Letter Task

Dependent Variables: depression, loneliness, suicidal ideation

Results: No significant direct relationship. Interaction between explicit and implicit self-esteem for suicidal ideation only, b = .28.

Franck, E., De Raedt, R., & De Houwer, J. (2007). Implicit but not explicit self-esteem predicts future depressive symptomatology. Behaviour Research and Therapy, 45(10), 2448–2455.

Participants: 28 clinically depressed patients; 67 not-depressed participants.

Implicit Construct / Measure: Implicit self-esteem / Name Letter Task

Dependent Variable: change in depression controlling for T1

Result: However, after controlling for initial symptoms of depression, implicit, t(48) = 2.21, p = .03, b = .25, but not explicit self-esteem, t(48) = 1.26, p = .22, b = .17, proved to be a significant predictor for depressive symptomatology at 6 months follow-up.

Franck, E., De Raedt, R., Dereu, M., & Van den Abbeele, D. (2007). Implicit and explicit self-esteem in currently depressed individuals with and without suicidal ideation. Journal of Behavior Therapy and Experimental Psychiatry, 38(1), 75–85.

Participants: Depressed patients with suicidal ideation (N = 15), depressed patients without suicidal ideation (N = 14) and controls (N = 15)

Implicit Construct / Measure: Implicit self-esteem / IAT

Dependent variable: Group status

Contrast analysis revealed that the currently depressed individuals with suicidal ideation showed a significantly higher implicit self-esteem as compared to the currently depressed individuals without suicidal ideation, t(43) = 3.0, p < 0.01. Furthermore, the non-depressed controls showed a significantly higher implicit self-esteem as compared to the currently depressed individuals without suicidal ideation, t(43) = 3.7, p < 0.001.

[This finding implies that suicidal depressed patients have HIGHER implicit self-esteem than depressed patients who are not suicidal.]

Glashouwer, K. A., de Jong, P. J., Penninx, B. W., Kerkhof, A. J., van Dyck, R., & Ormel, J. (2010). Do automatic self-associations relate to suicidal ideation? Journal of Psychopathology and Behavioral Assessment, 32(3), 428–437.

Participants: General population (N = 2,837)

Implicit Constructs / Measure: Implicit depression, Implicit Anxiety / IAT

Dependent variable: Suicidal Ideation, Suicide Attempt

Results: simple correlations

Depression IAT – Suicidal Ideation, r = .22

Depression IAT – Suicide Attempt, r = .12

Anxiety IAT – Suicidal Ideation, r = .18

Anxiety IAT – Suicide Attempt, r = .11

Controlling for Explicit Measures of Depression / Anxiety

Depression IAT – Suicidal Ideation, b = .024, p = .179

Depression IAT – Suicide Attempt, b = .037, p = .061

Anxiety IAT – Suicidal Ideation, b = .024, p = .178

Anxiety IAT – Suicide Attempt, b = .039, p = .046

Glenn, J. J., Werntz, A. J., Slama, S. J., Steinman, S. A., Teachman, B. A., & Nock, M. K. (2017). Suicide and self-injury-related implicit cognition: A large-scale examination and replication. Journal of Abnormal Psychology, 126(2), 199–211.

Participants: Self-selected online samples with high rates of self-harm (> 50%). Ns = 3,115 and 3,114

Implicit Constructs / Measure: Self-Harm, Death, Suicide / IAT

Dependent variables: Group differences (non-suicidal self-injury / control; suicide attempt / control)

Results:

Non-suicidal self-injury versus control

Self-injury IAT, d = .81/.97; Death IAT, d = .52/.61; Suicide IAT, d = .58/.72

Suicide attempt versus control

Self-injury IAT, d = .52/.54; Death IAT, d = .37/.32; Suicide IAT, d = .54/.67

[These results show that self-ratings and IAT scores reflect a common construct; they do not show discriminant validity. There is no evidence that they measure distinct constructs, and they do not show incremental predictive validity.]

Hussey, I., Barnes-Holmes, D., & Booth, R. (2016). Individuals with current suicidal ideation demonstrate implicit “fearlessness of death.” Journal of Behavior Therapy and Experimental Psychiatry, 51, 1–9.

Participants: 23 patients with suicidal ideation and 25 controls (university students)

Implicit Constructs / Measure: Death attitudes (general / personal) / IRAP

Dependent variable: Group difference

Results: No main effects were found for either group (p = .08). Critically, however, a three-way interaction effect was found between group, IRAP type, and trial-type, F(3, 37) = 3.88, p = .01. Specifically, the suicidal ideation group produced a moderate “my death-not-negative” bias (M = .29, SD = .41), whereas the normative group produced a weak “my death-negative” bias (M = -.12, SD = .38, p < .01). This differential performance was of a very large effect size (Hedges’ g = 1.02).

[This study suggests that evaluations of personal death show stronger relationships than generic death]
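The reported effect size can be checked against the summary statistics quoted above. The following is a minimal Python sketch, assuming that the group sizes listed under Participants (23 and 25) are the analyzed sample sizes and using the common small-sample correction for Hedges' g. The function name `hedges_g` is my own and does not come from the original article.

```python
from math import sqrt


def hedges_g(m1, sd1, n1, m2, sd2, n2):
    """Standardized mean difference with the usual small-sample correction."""
    df = n1 + n2 - 2
    # Pooled standard deviation across the two groups
    pooled_sd = sqrt(((n1 - 1) * sd1**2 + (n2 - 1) * sd2**2) / df)
    d = (m1 - m2) / pooled_sd
    # Approximate correction factor J = 1 - 3 / (4 * df - 1)
    correction = 1 - 3 / (4 * df - 1)
    return d * correction


# Assumed inputs: means and SDs from the quoted result, group sizes from "Participants"
g = hedges_g(0.29, 0.41, 23, -0.12, 0.38, 25)
print(round(g, 2))  # 1.02
```

Under these assumptions, the computed value matches the reported Hedges' g of 1.02. If participants were excluded before the analysis, the exact value would differ slightly.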

McNulty, J. K., Olson, M. A., & Joiner, T. E. (2019). Implicit interpersonal evaluations as a risk factor for suicidality: Automatic spousal attitudes predict changes in the probability of suicidal thoughts. Journal of Personality and Social Psychology, 117(5), 978–997

Participants: Integrative analysis of 399 couples from three longitudinal studies of marriage.

Implicit Construct / Measure: Partner attitudes / evaluative priming task

Dependent variable: Change in suicidal thoughts (yes/no) over time

Result: (preferred scoring method)

without covariates, b = -.69, se = .27, p = .010.

with covariate, b = -.64, se = .29, p = .027
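As a rough check on these p-values, a coefficient divided by its standard error gives a test statistic that can be converted into a two-sided p-value. The sketch below assumes a normal approximation; the original analysis may have used a t-distribution with its own degrees of freedom, so small discrepancies are expected. The helper name `wald_p` is hypothetical.

```python
from statistics import NormalDist


def wald_p(b, se):
    """Return (z, two-sided p) for a coefficient under a normal approximation."""
    z = b / se
    p = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p


# Coefficients and standard errors as reported in the summary above
z_without, p_without = wald_p(-0.69, 0.27)
z_with, p_with = wald_p(-0.64, 0.29)

print(f"without covariates: z = {z_without:.2f}, p = {p_without:.3f}")
print(f"with covariate:     z = {z_with:.2f}, p = {p_with:.3f}")
```

Both approximate p-values land close to the reported values of .010 and .027, and both test statistics fall between 2 and 2.6 in absolute value.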

Nock, M. K., Park, J. M., Finn, C. T., Deliberto, T. L., Dour, H. J., & Banaji, M. R. (2010). Measuring the suicidal mind: Implicit cognition predicts suicidal behavior. Psychological Science, 21(4), 511–517.

Participants: 157 patients with mental health problems

Implicit Construct / Measure: death attitudes / IAT

Dependent variable: Prospective Prediction of Suicide

Result: controlling for prior attempts / no explicit covariates

b = 1.85, SE = 0.94, z = 2.03, p = .042

Tucker, R. P., Wingate, L. R., Burkley, M., & Wells, T. T. (2018). Implicit Association with Suicide as Measured by the Suicide Affect Misattribution Procedure (S-AMP) predicts suicide ideation. Suicide and Life-Threatening Behavior, 48(6), 720–731.

Participants: 138 students oversampled for suicidal ideation

Implicit Construct / Measure: suicide attitudes / AMP

Dependent variable: Suicidal Ideation

Result: simple correlation, r = .24

regression controlling for depression, b = .09, se = .04, p = .028

Taken together, the references show a mix of constructs, measures, and outcomes, and the p-values cluster just below .05. Not one of these p-values is below .005. Moreover, many studies relied on small convenience samples. The most informative study is the one by Glashouwer et al. (2010), which examined the incremental predictive validity of a depression IAT in a large, population-wide sample. The result was not significant, and the effect size was less than r = .1. Thus, the references do not provide compelling evidence for dual-attitude models of depression.
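The clustering of p-values can be made concrete by converting the exact two-sided p-values listed in the summaries above into absolute z-scores. This is a minimal sketch and not a full z-curve or p-curve analysis. The selection of p-values (only exact values reported for tests of implicit measures) and the helper name `p_to_z` are my assumptions.

```python
from statistics import NormalDist

# Exact two-sided p-values for implicit-measure tests, copied from the summaries above
reported_p = {
    "Franck, De Raedt, & De Houwer (2007)": [0.03],
    "Glashouwer et al. (2010)": [0.179, 0.061, 0.178, 0.046],
    "Hussey et al. (2016)": [0.01],
    "McNulty et al. (2019)": [0.010, 0.027],
    "Nock et al. (2010)": [0.042],
    "Tucker et al. (2018)": [0.028],
}


def p_to_z(p):
    """Convert a two-sided p-value into an absolute z-score."""
    return NormalDist().inv_cdf(1 - p / 2)


all_p = [p for values in reported_p.values() for p in values]
significant_z = [p_to_z(p) for p in all_p if p < 0.05]
n_below_005 = sum(p < 0.005 for p in all_p)

print(f"significant results: {len(significant_z)} of {len(all_p)}")
print(f"z-scores of significant results: {[round(z, 2) for z in significant_z]}")
print(f"p-values below .005: {n_below_005}")
```

With this selection, every significant result corresponds to a z-score between 1.96 and 2.6, and none of the listed p-values falls below .005.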

Conclusion

Social psychologists have abused the scientific method for decades. Over the past decade, criticism of their practices has become louder, but many social psychologists ignore this criticism and continue to abuse significance testing and to misrepresent the results as if they provide empirical evidence that can inform our understanding of human behavior. This article is just another example of the unwillingness of social psychologists to “clean up their act” (Kahneman, 2012). Readers of this article should be warned that the claims made in this article are not scientific. Fortunately, there is credible research on depression and suicide outside of social psychology.