Over 20 years ago, Anthony Greenwald and colleagues introduced the Implicit Association Test (IAT) as a measure of individual differences in implicit bias (Greenwald et al., 1998). The assumption underlying the IAT is that individuals can harbour unconscious, automatic, hidden, or implicit racial biases. These implicit biases are distinct from explicit bias. Somebody could be consciously unbiased, while their unconscious is prejudiced. Theoretically, the opposite would also be possible, but taking IAT scores at face value, the unconscious is more prejudiced than conscious reports of attitudes imply. It is also assumed that these implicit attitudes can influence behavior in ways that bypass conscious control of behavior. As a result, implicit bias in attitudes leads to implicit bias in behavior.

The problem with this simple model of implicit bias is that it lacks scientific support. In a recent review of validation studies, I found no scientific evidence that the IAT measures hidden or implicit biases outside of people’s awareness (Schimmack, 2019a). Rather, it seems to be a messy measure of consciously accessible attitudes.

Another contentious issue is the predictive validity of IAT scores. It is commonly implied that IAT scores predict bias in actual behavior. This prediction is so straightforward that the IAT is routinely used in implicit bias training (e.g., at my university) with the assumption that individuals who show bias on the IAT are likely to show anti-Black bias in actual behavior.

Even though the link between IAT scores and actual behavior is crucial for the use of the IAT in implicit bias training, this important question has been examined in relatively few studies, and many of these studies had serious methodological limitations (Schimmack, 2019b).

To make things even more confusing, a couple of papers even suggested that White individuals’ unconscious is not always biased against Black people: “An unintentional, robust, and replicable Pro-Black bias in social judgment” (Axt, Ebersole, & Nosek, 2016; Axt, 2017).

I used the open data of these two articles to examine more closely the relationship between scores on the attitude measures (the Brief Implicit Association Test & a direct explicit rating on a 7-point scale) and performance on a task where participants had to accept or reject 60 applicants into an academic honor society. Along with pictures of applicants, participants were provided with information about academic performance. These data were analyzed with signal-detection theory to obtain a measure of bias. Pro-White bias would be reflected in a lower admission standard for White applicants than for Black applicants. However, despite pro-White attitudes, participants showed a pro-Black bias in their admissions to the honor society.
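The bias measure described above can be sketched in a few lines. This is a minimal illustration that assumes the standard equal-variance signal-detection criterion with a log-linear correction; the function name, the correction, and the counts are hypothetical and may differ from the scoring that Axt et al. actually used.

```python
from statistics import NormalDist


def criterion(hits, misses, false_alarms, correct_rejections):
    """Equal-variance SDT criterion c; a higher c means a stricter admission standard."""
    # Log-linear correction (an assumption): keeps both rates away from 0 and 1.
    hit_rate = (hits + 0.5) / (hits + misses + 1)
    fa_rate = (false_alarms + 0.5) / (false_alarms + correct_rejections + 1)
    z = NormalDist().inv_cdf
    return -0.5 * (z(hit_rate) + z(fa_rate))


# Hypothetical counts for one participant, NOT data from the studies.
# "Hit" = qualified applicant accepted, "false alarm" = unqualified applicant accepted.
c_white = criterion(hits=10, misses=5, false_alarms=5, correct_rejections=10)
c_black = criterion(hits=12, misses=3, false_alarms=7, correct_rejections=8)

# A negative difference means a lower standard for Black applicants (pro-Black bias).
bias = c_black - c_white
print(f"c_white={c_white:.3f}, c_black={c_black:.3f}, bias={bias:.3f}")
```

With this coding, a participant who holds White and Black applicants to the same standard has a bias score of zero, which corresponds to the zero line for behavior in the figures below.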

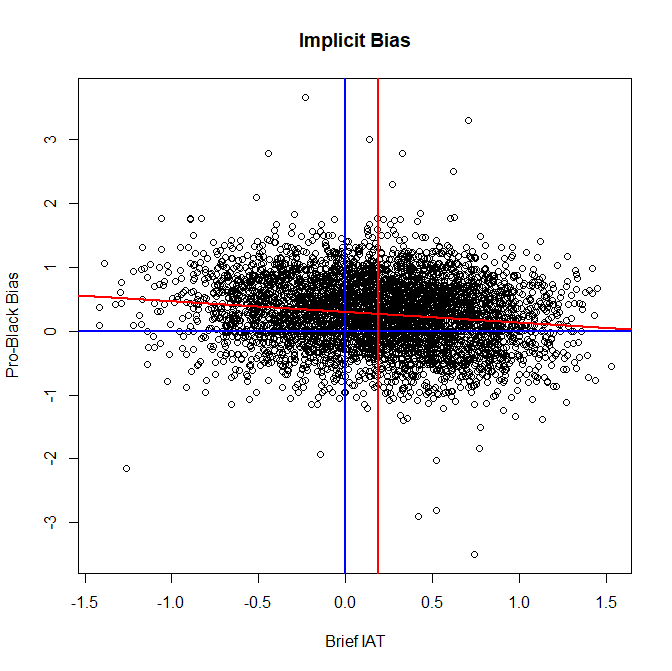

Figure 1 shows the results for the Brief IAT. The blue lines mark the coordinates with 0 scores (no bias) on both tasks. The decreasing red line shows the linear relationship between BIAT scores on the x-axis and bias in admission decisions on the y-axis. The decreasing trend shows that, as expected, respondents with more pro-White bias on the BIAT are less likely to accept Black applicants. However, the picture also shows that participants with no bias on the BIAT have a bias to select more Black than White applicants. Most important, the vertical red line shows the behavior of participants with the average performance on the BIAT. Even though these participants are considered to have a moderate pro-White bias, they show a pro-Black bias in their acceptance rates. Thus, there is no evidence that IAT scores are a predictor of discriminatory behavior. In fact, even the most extreme IAT scores fail to identify participants who discriminate against Black applicants.

A similar picture emerges for the explicit ratings of racial attitudes.

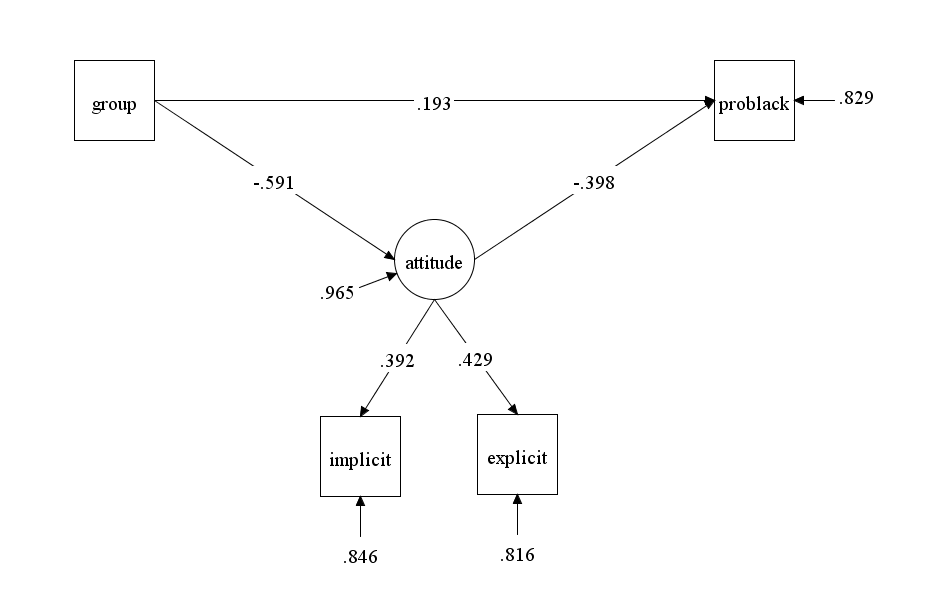

The next analysis examines the convergent and predictive validity of the BIAT in a latent variable model (Schimmack, 2019). In this model, the BIAT and the explicit measure are treated as complementary measures of a single attitude for two reasons. First, multi-method studies fail to show that the IAT and explicit measures tap different attitudes (Schimmack, 2019a). Second, it is impossible to model systematic method variance in the BIAT in studies that use only a single implicit measure of attitudes.

The model also includes a group variable that distinguishes the convenience samples in Axt et al.’s studies (2016) and the sample of educators in Axt (2017). The grouping variable is coded with 1 for educators and 0 for the comparison samples.

The model meets standard criteria of model fit, CFI = .996, RMSEA = .002.

Figure 3 shows the y-standardized results so that relationships with the group variable can be interpreted as Cohen’s d effect sizes. The results show a notable difference (d = -.59) in attitudes between the two samples, with less pro-White attitudes for educators. In addition, educators have a small bias to favor Black applicants in their acceptance decisions (d = .19).
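The reason a y-standardized coefficient for a 0/1 group variable reads like a d-type effect size can be shown with a small sketch. It assumes an observed outcome rather than the latent variables of the actual model, and the function name and toy scores are hypothetical. Note that this version scales by the total standard deviation, which can differ slightly from a Cohen’s d based on the pooled within-group standard deviation.

```python
from statistics import mean, pstdev


def y_standardized_group_effect(outcome, group):
    """Group difference after standardizing only the outcome (group stays coded 0/1)."""
    mu, sd = mean(outcome), pstdev(outcome)
    z = [(value - mu) / sd for value in outcome]
    z_group1 = [zi for zi, g in zip(z, group) if g == 1]
    z_group0 = [zi for zi, g in zip(z, group) if g == 0]
    # With a single 0/1 predictor, the regression slope equals this mean difference.
    return mean(z_group1) - mean(z_group0)


# Hypothetical toy scores, NOT data from the studies (1 = educators, 0 = comparison).
toy_outcome = [0.2, 0.5, 0.9, 1.0, -0.1, 0.3, 0.4, 0.8]
toy_group = [0, 0, 0, 0, 1, 1, 1, 1]
effect = y_standardized_group_effect(toy_outcome, toy_group)
print(f"group difference in SD units: {effect:.2f}")
```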

The model also shows that racial attitudes influence acceptance decisions with a moderate effect size, r = -.398. Finally, the model shows that the BIAT and the single-item explicit rating have modest validity as measures of racial attitudes, r = .392, .429, respectively. The results for the BIAT are consistent with other estimates that a single IAT has no more than 20% (.392^2 = 15%) valid variance. Thus, the results here are entirely consistent with the view that explicit and implicit measures tap a single attitude and that there is no need to postulate hidden, unconscious attitudes that can have an independent influence on behavior.
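The arithmetic behind these validity estimates can be checked directly. The sketch below takes the three coefficients reported above as inputs; the variable names are mine, and the implied correlations assume a fully standardized single-factor model and ignore the group variable, so they are rough illustrations rather than results from the model.

```python
# Coefficients reported in the text.
loading_biat = 0.392        # attitude -> BIAT
loading_explicit = 0.429    # attitude -> explicit rating
attitude_to_behavior = -0.398

# Valid variance is the squared loading on the attitude factor.
valid_var_biat = loading_biat ** 2
valid_var_explicit = loading_explicit ** 2

# Under a single-factor model, two indicators correlate by the product of their loadings.
implied_r_biat_explicit = loading_biat * loading_explicit

# Assumption: ignoring the group variable, an observed BIAT score relates to
# behavior only through the attitude factor, so the relationship is attenuated.
implied_r_biat_behavior = loading_biat * attitude_to_behavior

print(f"BIAT valid variance:        {valid_var_biat:.1%}")
print(f"Explicit valid variance:    {valid_var_explicit:.1%}")
print(f"Implied r(BIAT, explicit):  {implied_r_biat_explicit:.3f}")
print(f"Implied r(BIAT, behavior):  {implied_r_biat_behavior:.3f}")
```

Under these assumptions, a single BIAT score would share only about 2% of its variance with behavior (-.156 squared), which illustrates how little an individual test score says about how a person will act.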

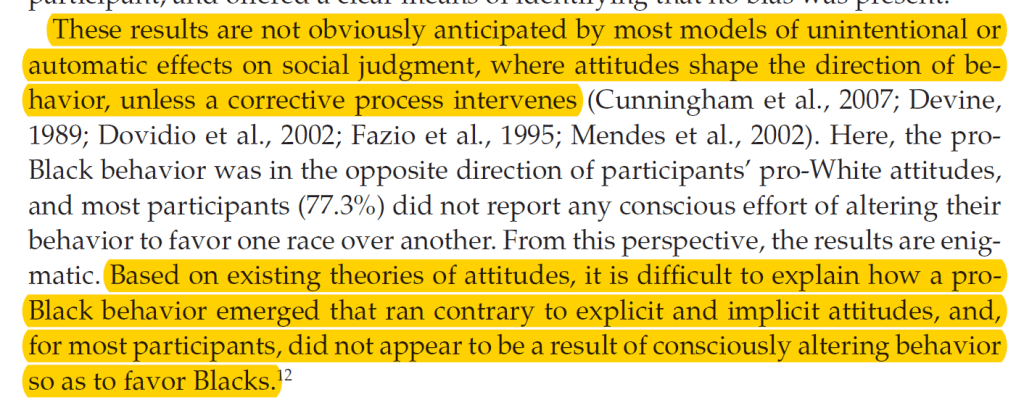

Based on their results, Axt et al. (2016) caution readers that the relationship between attitudes and behaviors is more complex than the common narrative of implicit bias assumes.

The authors “suggest that the prevailing emphasis on pro-White biases in judgment and behavior in the existing literature would improve by refining the theoretical understanding of under what conditions behavior favoring dominant or minority groups will occur.” (p. 33).

Implications

For two decades, the developers of the IAT have argued that the IAT measures a distinct type of attitudes that reside in individuals’ unconscious and can influence behavior in ways that bypass conscious control. As a result, even individuals who aim to be unbiased might exhibit prejudice in their behavior. Moreover, the finding that the majority of White people show a pro-White bias in their IAT scores was used to explain why discrimination and prejudice persist. This narrative is at the core of implicit bias training.

The problem with this story is that it is not supported by scientific evidence. First, there is no evidence that IAT scores reflect some form of unconscious or implicit bias. Rather, IAT scores seem to tap the same cognitive and affective processes that influence explicit ratings. Second, there is no evidence that processes that influence IAT scores can bypass conscious control of behavior. Third, there is no evidence that a pro-White bias in attitudes automatically produces a pro-White bias in actual behaviors. Not even Freud assumed that unconscious processes would have this effect on behavior. In fact, he postulated that various defense mechanisms may prevent individuals from acting on their undesirable impulses. Thus, the prediction that attitudes are sufficient to predict behavior is too simplistic.

Axt et al. (2016) speculate that “bias correction can occur automatically and without awareness” (p. 32). While this is an intriguing hypothesis, there is little evidence for such smart automatic control processes. This model also implies that it is impossible to predict actual behaviors from attitudes because correction processes can alter the influence of attitudes on behavior. This implies that only studies of actual behavior can reveal the ability of IAT scores to predict actual behavior. For example, only studies of actual behavior can demonstrate whether police officers with pro-White IAT scores show racial bias in the use of force. The problem is that 20 years of IAT research have uncovered no robust evidence that IAT scores actually predict important real-world behaviors (Schimmack, 2019b).

In conclusion, the results of Axt’s studies suggest that the use of the IAT in implicit bias training needs to be reconsidered. Not only are test scores highly variable and often misleading about individuals’ attitudes; they also do not predict actual discriminatory behavior. It is wrong to assume that individuals who show a pro-White bias on the IAT are bound to act on these attitudes and discriminate against Black people or other minorities. Therefore, the focus on attitudes in implicit bias training may be misguided. It may be more productive to focus on factors that do influence actual behaviors and to provide individuals with clear norms and guidelines that help them act accordingly. The belief that this is not sufficient is based on an unsupported model of unconscious forces that can bypass awareness.

This conclusion is not entirely new. In 2008, Blanton criticized the use of the IAT in applied settings (IAT: Fad or fabulous?).

“There’s not a single study showing that above and below that cutoff people differ in any way based on that score,” says Blanton.

And Brian Nosek agreed.

Guilty as charged, says the University of Virginia’s Brian Nosek, PhD, an IAT developer.

However, this admission of guilt has not changed behavior. Nosek and other IAT proponents continue to support Project Implicit, which has provided millions of visitors with false information about their attitudes or mental health issues based on a test with poor psychometric properties. A true admission of guilt would be to stop this unscientific and unethical practice.

References

Axt, J.R. (2017). An unintentional pro-Black bias in judgement among educators. British Journal of Educational Psychology, 87, 408-421.

Axt, J.R., Ebersole, C.R. & Nosek, B.A. (2016). An unintentional, robust, and replicable pro-Black bias in social judgment. Social Cognition, 34, 1-39.

Greenwald, A. G., McGhee, D. E., & Schwartz, J. L. K. (1998). Measuring individual differences in implicit cognition: The Implicit Association Test. Journal of Personality and Social Psychology, 74, 1464–1480.

Schimmack, U. (2019a). The Implicit Association Test: A method in search of a construct. Perspectives on Psychological Science. https://doi.org/10.1177/1745691619863798

Schimmack, U. (2019b). The race IAT: A case study of the validity crisis in psychology. https://replicationindex.com/2019/02/06/the-race-iat-a-case-study-of-the-validity-crisis-in-psychology/
