This blog post was a quick response to Pek et al.’s biased criticism of z-curve in a commentary on our z-curve analysis of the emotion literature. We have now written a shorter, more focused, and useful rebuttal that is now under review at Cognition and Emotion. You can see the preprint here.

“Windmills are evil” (Don Quixote cited by Trump)

“Zcurve is made by the devil” (Pek et al., 2026)

Preamble

Ideal conceptions of science have a set of rules that help to distinguish beliefs from knowledge. Actual science is a game with few rules. Anything goes (Feyerabend), if you can sell it to an editor of a peer-reviewed journal. US American psychologist also conflate the meaning of freedom in “Freedom of speech” and “academic freedom” to assume that there are no standards for truth in science, just like there are none in American politics. The game is to get more publications, citations, views, and clicks, and truth is decided by the winner of popularity contests. Well, not to be outdone in this war, I am posting yet another blog post about Pek’s quixotian attacks on z-curve.

For context, Pek has already received an F by statistics professor Jerry Brunner for her nonsensical attacks on a statistical method (Brunner, 2024), but even criticism by a professor of statistics has not deterred her from repeating misinformation about z-curve. I call this willful incompetence; the inability to listen to feedback and to wonder whether somebody else could have more expertise than yourself. This is not to be confused with the Dunning-Kruger effect, where people have no feedback about their failures. Here failures are repeated again and again, despite strong feedback that errors are being made.

Context

One of the editors of Cognition and Emotion, Sander Koole, has been following our work and encouraged us to submit our work on the credibility of emotion research as an article to Cognition & Emotion. We were happy to do so. The manuscript was handled by the other editor Klaus Rothermund. In the first round of reviews, we received a factually incorrect and hostile review by an anonymous reviewer. We were able to address these false criticisms of z-curve and resubmitted the manuscript. In a new round of reviews, the hostile reviewer came up with simulation studies that showed z-curve fails. We showed that this is indeed the case in the simulations that used studies with N = 3 and 2 degrees of freedom. The problem here is not z-curve, but the transformation of t-values into z-values. When degrees of freedom are like those in the published literature we examined, this is not a problem. The article was finally accepted, but the hostile reviewer was allowed to write a commentary. At least, it wa now clear that the hostile reviewer was Pek.

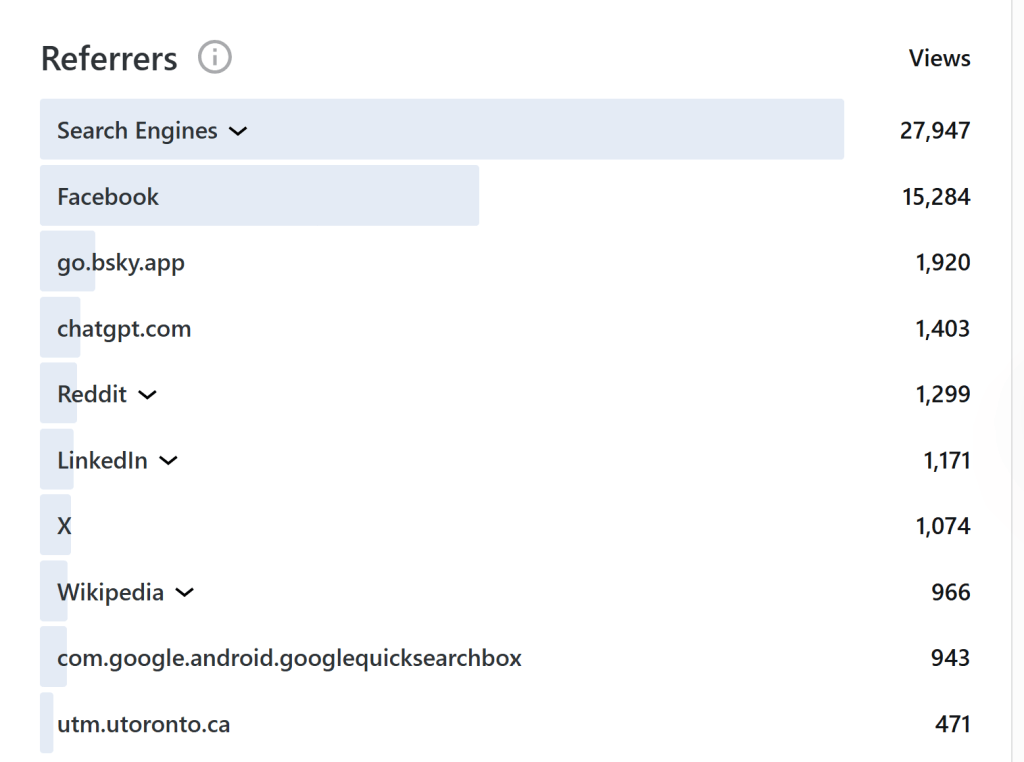

I found out that the commentary is apparently accepted for publication when somebody sent me the link to it on ResearchGate and a friendly over to help with a rebuttal. However, I could not wait and drafted a rebuttal with the help of ChatGPT. Importantly, I use ChatGPT to fact check claims and control my emotions, not to write for me. Below you can find a clear, point by point response to all the factually incorrect claims about z-curve made by Pek et al. that passed whatever counts as human peer-review at Cognition and Emotion.

Rebuttal

Abstract

What is the Expected Discovery Rate?

“EDR also lacks a clear interpretation in relation to credibility because it reflects both the average pre-data power of tests and the estimated average population effect size for studied effects.”

This sentence is unclear and introduces several poorly defined or conflated concepts. In particular, it confuses the meaning of the Expected Discovery Rate (EDR) and misrepresents what z-curve is designed to estimate.

A clear and correct definition of the Expected Discovery Rate (EDR) is that it is an estimate of the average true power of a set of studies. Each empirical study has an unknown population effect size and is subject to sampling error. The observed effect size is therefore a function of these two components. In standard null-hypothesis significance testing, the observed effect size is converted into a test statistic and a p-value, and the null hypothesis is rejected when the p-value falls below a prespecified criterion, typically α = .05.

Hypothetically, if the population effect size were known, one could specify the sampling distribution of the test statistic and compute the probability that the study would yield a statistically significant result—that is, its power (Cohen, 1988). The difficulty, of course, is that the true population effect size is unknown. However, when one considers a large set of studies, the distribution of observed p-values (or equivalently, z-values) provides information about the average true power of those studies. This is the quantity that z-curve seeks to estimate.

Average true power predicts the proportion of statistically significant results that should be observed in an actual body of studies (Brunner & Schimmack, 2020), in much the same way that the probability of heads predicts the proportion of heads in a long series of coin flips. The realized outcome will deviate from this expectation due to sampling error—for example, flipping a fair coin 100 times will rarely yield exactly 50 heads—but large deviations from the expected proportion would indicate that the assumed probability is incorrect. Analogously, if a set of studies has an average true power of 80%, the observed discovery rate should be close to 80%. Substantially lower rates imply that the true power of the studies is lower than assumed.

Crucially, true power has nothing to do with pre-study (or pre-data) power, contrary to the claim made by Pek et al. Pre-study power is a hypothetical quantity based on researchers’ assumptions—often optimistic or wishful—about population effect sizes. These beliefs can influence study design decisions, such as planned sample size, but they cannot influence the outcome of a study. Study outcomes are determined by the actual population effect size and sampling variability, not by researchers’ expectations.

Pek et al. therefore conflate hypothetical pre-study power with true power in their description of EDR. This conflation is a fundamental conceptual error. Hypothetical power is irrelevant for interpreting observed results or evaluating their credibility. What matters for assessing the credibility of a body of empirical findings is the true power of the studies to produce statistically significant results, and EDR is explicitly designed to estimate that quantity.

Pek et al.’s misunderstanding of the z-curve estimands (i.e., the parameters the method is designed to estimate) undermines their more specific criticisms. If a critique misidentifies the target quantity, then objections about bias, consistency, or interpretability are no longer diagnostics of the method as defined; they are diagnostics of a different construct.

The situation is analogous to Bayesian critiques of NHST that proceed from an incorrect description of what p-values or Type I error rates mean. In that case, the criticism may sound principled, but it does not actually engage the inferential object used in NHST. Likewise here, Pek et al.’s argument rests on a category error about “power,” conflating hypothetical pre-study power (a design-stage quantity based on assumed effect sizes) with true power (the long-run success probability implied by the actual population effects and the study designs). Because z-curve’s EDR is an estimand tied to the latter, not the former, their critique is anchored in conceptual rather than empirical disagreement.

2. Z-Curve Does Not Follow the Law of Large Numbers

.“simulation results further demonstrate that z-curve estimators can often be biased and inconsistent (i.e., they fail to follow the Law of Large Numbers), leading to potentially misleading conclusions.”

This statement is scientifically improper as written, for three reasons.

First, it generalizes from a limited set of simulation conditions to z-curve as a method in general. A simulation can establish that an estimator performs poorly under the specific data-generating process that was simulated, but it cannot justify a blanket claim about “z-curve estimators” across applications unless the simulated conditions represent the method’s intended model and cover the relevant range of plausible selection mechanisms. Pek et al. do not make those limitations explicit in the abstract, where readers typically take broad claims at face value.

Second, the statement is presented as if Pek et al.’s simulations settle the question, while omitting that z-curve has already been evaluated in extensive prior simulation work. That omission is not neutral: it creates the impression that the authors’ results are uniquely diagnostic, rather than one contribution within an existing validation literature. Because this point has been raised previously, continuing to omit it is not a minor oversight; it materially misleads readers about the evidentiary base for the method.

Third, and most importantly, their claim that z-curve estimates “fail to follow the Law of Large Numbers” is incorrect. Z-curve estimates are subject to ordinary sampling error, just like any other estimator based on finite data. A simple analogy is coin flipping: flipping a fair coin 10 times can, by chance, produce 10 heads, but flipping it 10,000 times will not produce 10,000 heads by chance. The same logic applies to z-curve. With a small number of studies, the estimated EDR can deviate substantially from its population value due to sampling variability; as the number of studies increases, those random deviations shrink. This is exactly why z-curve confidence intervals narrow as the number of included studies grows: sampling error decreases as the amount of information increases. Nothing about z-curve exempts it from this basic statistical principle. Suggesting otherwise implies that z-curve is somehow unique in how sampling error operates, when in fact it is a standard statistical model that estimates population parameters from observed data and, accordingly, becomes more precise as the sample size increases.

3. Sweeping Conclusion Not Supported by Evidence

“Accordingly, we do not recommend using 𝑍-curve to evaluate research findings.”

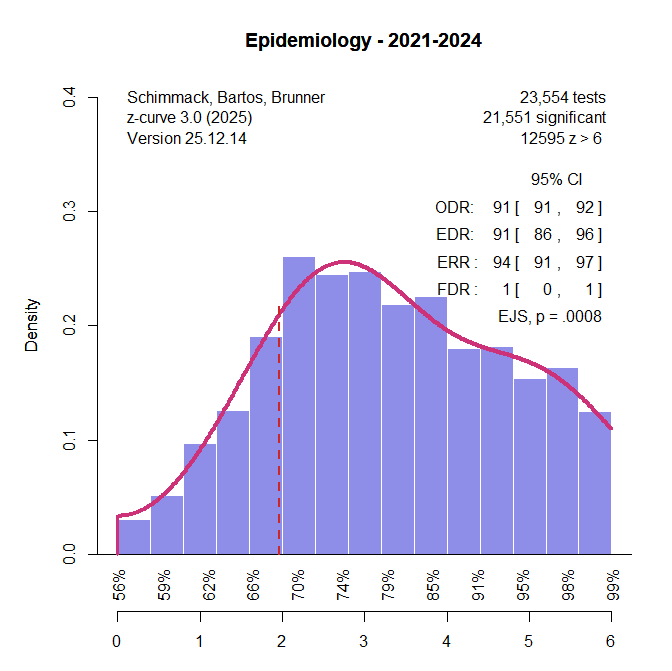

Based on these misrepresentations of z-curve, Pek et al. make a sweeping recommendation that z-curve estimates provide no useful information for evaluating published research and should be ignored. This recommendation is not only disproportionate to the issues they raise; it is also misaligned with the practical needs of emotion researchers. Researchers in this area have a legitimate interest in whether their literature resembles domains with comparatively strong replication performance or domains where replication has been markedly weaker. For example, a reasonable applied question is whether the published record in emotion research looks more like areas of cognitive psychology, where about 50% of results replicate or more like social psychology, where about 25% replicate (Open Science Collaboration, 2015).

Z-curve is not a crystal ball capable of predicting the outcome of any particular future replication with certainty. Rather, the appropriate claim is more modest and more useful: z-curve provides model-based estimates that can help distinguish bodies of evidence that are broadly consistent with high average evidential strength from those that are more consistent with low average evidential strength and substantial selection. Used in that way, z-curve can assist emotion researchers in critically appraising decades of published results without requiring the field to replicate every study individually.

4. Ignoring the Replication Crisis That Led to The Development of Z-curve

“We advocate for traditional meta-analytic methods, which have a well-established history of producing appropriate and reliable statistical conclusions regarding focal research findings”

This statement ignores that traditional meta-analysis ignores publication bias and have produced dramatically effect size estimates. The authors ignore the need to take biases into account to separate true findings from false ones.

Article

5. False definition of EDR (again)

“EDR (cf. statistical power) is described as “the long-run success rate in a series of exact replication studies” (Brunner & Schimmack, 2020, p. 1)”.

This quotation describes statistical power in Brunner and Schimmack (2020), not the Expected Discovery Rate (EDR). The EDR was introduced later in Bartoš and Schimmack (2022) as part of z-curve 2.0, and, as described above, the EDR is an estimate of average true power. While the power of a single study can be defined in terms of the expected long-run frequency of significant results (Cohen, 1988), it can also be defined as the probability of obtaining a significant result in a single study. This is the typical use of power in a priori power calculations to plan a specific study. More importantly, the EDR is defined as the average true power of a set of unique studies and does not assume that these studies are exact replications.

Thus, the error is not merely a misplaced citation, but a substantive misrepresentation of what EDR is intended to estimate. Pek et al. import language used to motivate the concept of power in Brunner and Schimmack (2020) and incorrectly present it as a defining interpretation of EDR. This move obscures the fact that EDR is a summary parameter of a heterogeneous literature, not a prediction about repeated replications of a single experiment.

6. Confusing Observed Data with Unobserved Population Parameters (Ontological Error)

“Because z-curve analysis infers EDR from observed p-values, EDR can be understood as a measure of average observed power.”

This statement is incorrect. To clarify the issue without delving into technical statistical terminology, consider a simple coin-toss example. Suppose we flip a coin that is unknown to us to be biased, producing heads 60% of the time, and we toss it 100 times. We observe 55 heads. In this situation, we have an observed outcome (55 heads), an unknown population parameter (the true probability of heads, 60%), and an unknown expected value (60 heads in 100 tosses). Based on the observed data, we attempt to estimate the true probability of heads or to test the hypothesis that the coin is fair (i.e., that the expected number of heads is 50 out of 100). Importantly, we do not confuse the observed outcome with the true probability; rather, we use the observed outcome as noisy information about an underlying parameter. That is, we treat 55 as a reasonable estimate of the true power and use confidence intervals to see whether it includes 50. If it does not, we can reject the hypothesis that it is fair.

Estimating average true power works in exactly the same way. If 100 honestly reported studies yield 36 statistically significant results, the best estimate of the average true power of these studies is 36%, and we would expect a similar discovery rate if the same 100 studies were repeated under identical conditions (Open Science Collaboration, 2015). Of course, we recognize that the observed rate of 36% is influenced by sampling error and that a replication might yield, for example, 35 or 37 significant results. The observed outcome is therefore treated as an estimate of an unknown parameter, not as the parameter itself. The true average power is probably not 36%, but it is somewhere around this estimate and not 80%.

The problem with so-called “observed power” calculations arises precisely when this distinction is ignored—when estimates derived from noisy data are mistaken for true underlying parameters. This is the issue discussed by Hoenig and Heisey (2001). There is nothing inherently wrong with computing power using effect-size estimates from a study (see, e.g., Yuan & Maxwell, 200x); the problem arises when sampling error is ignored and estimated quantities are treated as if they were known population values. In a single study, the observed power could be 36% power, and the true power is 80%, but in a reasonably large set of studies this will not happen.

Z-curve explicitly treats average true power as an unknown population parameter and uses the distribution of observed p-values to estimate it. Moreover, z-curve quantifies the uncertainty of this estimate by providing confidence intervals, and correct interpretations of z-curve results explicitly take this uncertainty into account. Thus, the alleged ontological error attributed to z-curve reflects a misunderstanding of basic statistical inference rather than a flaw in the method itself.

7. Modeling Sampling Error of Z-Values

“z-curve analysis assumes independence among the K analyzed p-values, making the inclusion criteria for p-values critical to defining the population of inference…. Including multiple 𝑝-values from the same sampling unit (e.g., an article) violates the independence assumption, as 𝑝-values within a sampling unit are often correlated. Such dependence can introduce bias, especially because the 𝑍-curve does not account for unequal numbers of 𝑝-values across sampling units or within-unit correlations.”

It is true that z-curve assumes that sampling error for a specific result converted into a z-value follows the standard normal distribution with a variance of 1. Correlations among results can lead to violations of this assumption. However, this does not imply that z-curve “fails” in the presence of any dependence, nor does it justify treating this point as a decisive objection to our application. Rather, it means that analysts should take reasonable steps to limit dependence or to use inference procedures that are robust to clustering of results within studies or articles.

A conservative way to meet the independence assumption is to select only one test per study or one test per article in multiple-study articles where the origin of results is not clear. It is also possible to use more than one result per study by computing confidence intervals that sample one result at random, but different results for each sample with replacement. This is closely related to standard practices in meta-analysis for handling multiple dependent effects per study, where uncertainty is estimated with resampling or hierarchical approaches rather than by treating every effect size as independent. The practical impact of dependence also depends on the extent of clustering. In z-curve applications with large sets of articles (e.g., all articles in Cognition and Emotion), the influence of modest dependence is typically limited, and in our application we obtain similar estimates whether we treat results as independent or use clustered bootstrapping to compute uncertainty. Thus, even if Pek et al.’s point is granted in principle, it does not materially change the interpretation of our empirical findings about the emotion literature. Although we pointed this out in our previous review, the authors continue to misrepresent how our z-curve analyses addressed non-independence among p-values (e.g., by using clustered bootstrapping and/or one-test-per-study rules).

8. Automatic Extraction of Test Statistics

“Unsurprisingly, automated text mining methods for extracting test statistics has been criticized for its inability to reliably identify 𝑝-values suitable for forensic meta-analysis, such as 𝑍-curve analysis.”

This statement fails to take into account advantages and disadvantageous of automatically extracting results from articles. The advantages are that we have nearly population level data for research in the top two emotion journals. This makes it possible to examine time trends (did power increase; did selection bias decrease). The main drawback is that automatic extraction does not, by itself, distinguish between focal tests (i.e., tests that bear directly on an article’s key claim) and non-focal tests. We are explicit about this limitation and also included analyses of hand-coded focal analyses to supplement the results based on automatically extracted test statistics. Importantly, our conclusion that z-curve estimates are similar across these coding approaches is consistent with an often-overlooked feature of Cohen’s (1962) classic assessment of statistical power: Cohen explicitly distinguished between focal and non-focal tests and reported that this distinction did not materially change his inferences about typical power. In this respect, our hand-coded focal analyses suggest that the inclusion of non-focal tests in large-scale automated extraction is not necessarily a fatal limitation for estimating average evidential strength at the level of a literature, although it remains essential to be transparent about what is being sampled and to supplement automated extraction with focal coding when possible.

Pek et al. accurately describe our automated extraction procedure as relying on reported test statistics (e.g., t, F), which are then converted into z-values for z-curve analysis. However, their subsequent criticism shifts to objections that apply specifically to analyses based on scraped p-values, such as concerns about rounded or imprecise information about p-values (e.g., p < .05) and their suitability for forensic meta-analysis. This criticism is valid, but it is also the reason why we do not use p-values for z-curve analysis when better information is available.

9. Pek et al.’s Simulation Study: What it really shows

Pek et al.’s description of their simulation study is confusing. They call one condition “no bias” and the other “bias.” The problem here is that “no bias” refers to a simulation in which selection bias is present. The bias here assumes that α = .05 serves as the selection mechanism. That is, studies are selected based on statistical significance, but there is no additional selection among statistically significant results. Most importantly, it is assumed that there is no further selection based on effect sizes.

Pek et al.’s simulation of “bias” instead implies that researchers would not publish a result if d = .2, but would publish it if d = .5, consistent with a selection mechanism that favors larger observed effects among statistically significant results. Importantly, their simulation does not generalize to other violations of the assumptions underlying z-curve. In particular, it represents only one specific form of within-significance selection and does not address alternative selection mechanisms that have been widely discussed in the literature.

For example, a major concern about the credibility of psychological research is p-hacking, where researchers use flexibility in data analysis to obtain statistically significant results from studies with low power. P-hacking has the opposite effect of Pek et al.’s simulated bias. Rather than boosting the representation of studies with high power, studies with low power are over-represented among the statistically significant results.

Pek et al. are correct that z-curve estimates depend on assumptions about the selection mechanism, but this is not a fundamental problem. All selection models necessarily rely on assumptions about how studies enter the published literature, and different models make different assumptions (e.g., selection on significance thresholds, on p-value intervals, or on effect sizes). Because the specific practices that generate bias in published results are unknown, no selection model can avoid such assumptions, and z-curve’s assumptions are neither unique nor unusually restrictive.

Pek et al.’s simulations are also confusing because they include scenarios in which all p-values are reported and analyzed. These conditions are not relevant for standard applications of z-curve that assume and usually find evidence of bias. Accordingly, we focus on the simulations that match the usual publication environment, in which z-curve is fitted to the distribution of statistically significant z-values.

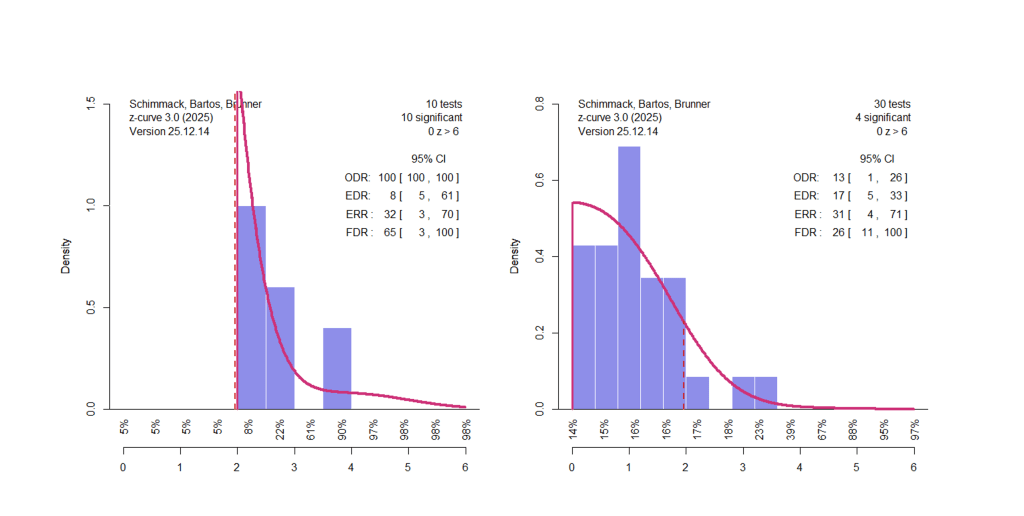

Pek et al.’s figures are also easy to misinterpret because the y-axis is restricted to a very narrow range of values. Although EDR estimates can in principle range from alpha (5% )to 100%, the y-axis in Figure 1a spans only approximately 60% to 85%. This makes estimation errors look big visually, when they are numerically relatively small.

In the relevant condition, the true EDR is 72.5%. For small sets of studies (e.g., K = 100), the estimated EDR falls roughly 10 percentage points below this value, a deviation that is visually exaggerated by the truncated y-axis. As the number of studies increases the point estimate approaches the true value. In short, Pek et al.’s simulation reproduces Bartos and Schimmack’s results that z-curve estimates are fairly accurate when bias is simply selection for significance.

The simulation based on selection by strength of evidence leads to an overestimation of the EDR. Here smaller samples appear more accurate because they underestimated the EDR and the two biases cancel out. More relevant is that with large samples, z-curve overestimates true average power by about 10 percentage points. This value is limited to one specific simulation of bias and could be larger or smaller. The main point of this simulation is to show that z-curve estimates depend on the type of selection bias in a set of studies. The simulation does not tell us the nature of actual selection biases and the amount of bias in z-curve estimates that violations of the selection assumption introduce.

From a practical point of view an overestimation by 10 percentage points is not fatal. If the EDR estimate is 80% and the true average power is only 70%, the literature is still credible. The problem is bigger with literatures that already have low EDRs like experimental social psychology. With an EDR of 21% a 10 percentage point correction would reduce the EDR to 11% and the lower bound of the CI would include 5% (Schimmack, 2020), implying that all significant results could be false positives. Thus, Pek et al.’s simulation suggests that z-curve estimates may be overly optimistic. In fact, z-curve overestimates replicability compared to actual replication outcomes in the reproducibility project (Open Science Collaboration, 2015). Pek et al.’s simulations suggest that selection for effect sizes could be reason, but other reasons cannot be ruled out.

Simulation results for the False Discovery Risk and bias (Observed Discovery Rate minus Expected Discoery Rate) are the same because they are a direct function of the EDR. The Expected Replication Rate (ERR), average true power of the significant results, is a different parameter, but shows the same pattern.

In short, Pek et al.’s simulations show that z-curve estimates depend on the actual selection processes that are unknown, but that does not invalidate z-curve estimates. Especially important is that z-curve evaluations of credibility are asymmetrical (Schimmack, 2012). Low values raise concerns about a literature, but high values do not ensure credibility (Soto & Schimmack, 2024).

Specific Criticism of the Z-Curve Results in the Emotion Literature

10. Automatic Extraction (Again)

“Based on our discussion on the importance of determining independent sampling units, formulating a well-defined research question, establishing rigorous inclusion and exclusion criteria for 𝑝-values, and conducting thorough quality checks on selected 𝑝-values, we have strong reservations about the methods used in SS2024.” (Pek et al.)

As already mentioned, the population of all statistical hypothesis tests reported in a literature is meaningful for researchers in this area. Concerns about low replicability and high false positive rates have undermined the credibility of the empirical foundations of psychological research. We examined this question empirically using all available statistical test results. This defines a clearly specified population of reported results and a well-defined research question. The key limitation remains that automatic extraction does not distinguish focal and non-focal results. We believe that information for all tests is still important. After all, why are they reported if they are entirely useless? Does it not matter whether a manipulation check was important or whether a predicted result was moderated by gender? Moreover, it is well known that focality is often determined only after results are known in order to construct a compelling narrative (Kerr, 1998). A prominent illustration is provided by Cesario, Plaks, and Higgins (2006), where a failure to replicate the original main effect was nonetheless presented as a successful conceptual replication based on a significant moderator effect.

Pek et al. further argue that analyzing all reported tests violates the independence assumption. However, our inference relied on bootstrapping with articles as the clustering unit, which is the appropriate approach when multiple test statistics are nested within articles and directly addresses the dependence they emphasize. In addition, SS2024 reports z-curve analyses based on hand-coded focal tests that are not subject to these objections; these results are not discussed in Pek et al.’s critique.

11. No Bias in Psychology

“Even I f the 𝑍-curve estimates and their CIs are unbiased and exhibit proper coverage, SS2024’s claim of selection bias in emotional research – based on observing that.EDR for both journals were not contained within their respective 95% CIs for ODR – is dubious”.

It is striking that Pek et al. question z-curve evidence of publication bias. Even setting aside z-curve entirely, it is difficult to defend the assumption of honest and unbiased reporting in psychology. Sterling (1959) already noted that success rates approaching those observed in the literature are implausible under unbiased reporting, and subsequent surveys have repeatedly documented overwhelmingly high rates of statistically significant findings (Sterling et al., 1995).

To dismiss z-curve evidence of selection bias as “dubious” would therefore require assuming that average true power in psychology is extraordinarily high. This assumption is inconsistent with longstanding evidence that psychological studies are typically underpowered to detect even moderate effect sizes, with average power estimates far below conventional benchmarks (Cohen, 1988). None of these well-established considerations appear to inform Pek et al.’s evaluation of z-curve, which treats its results in isolation from the broader empirical literature on publication bias and research credibility. In this broader context, the combination of extremely high observed discovery rates for focal tests and low EDR estimates—such as the EDR of 27% reported in SS2024—is neither surprising nor dubious, but aligns with conclusions drawn from independent approaches, including large-scale replication efforts (Open Science Collaboration, 2015).

12. Misunderstanding of Estimation

“Inference using these estimators in the presence of bias would be misleading because the estimators converge onto an incorrect value.”

This statement repeats the fallacy of drawing general conclusions about the interpretability of z-curve from a specific, stylized simulation. In addition, Pek et al.’s argument effectively treats point estimates as the sole inferential output of z-curve analyses while disregarding uncertainty. Point estimates are never exact representations of unknown population parameters. If this standard were applied consistently, virtually all empirical research would have to be dismissed on the grounds that estimates are imperfect. Instead, estimates must be interpreted in light of their associated uncertainty and reasonable assumptions about error.

For the 227 significant hand-coded focal tests, the point estimate of the EDR was 27%, with a confidence interval ranging from 10% to 67%. Even if one were to assume an overestimation of 10 percentage points, as suggested by Pek et al.’s most pessimistic simulation scenario, the adjusted estimate would be 17%, and the lower bound of the confidence interval would include 5%. Under such conditions, it cannot be ruled out that a substantial proportion—or even all—statistically significant focal results in this literature are false positives. Rather than undermining our conclusions, Pek et al.’s simulation therefore reinforces the concern that many focal findings in the emotion literature may lack evidential value. At the same time, the width of the confidence interval also allows for more optimistic scenarios. The appropriate response to this uncertainty is to code and analyze additional studies, not to dismiss z-curve results simply because they do not yield perfect estimates of unknown population parameters.

13. Conclusion Does Not Follow From the Arguments

“𝑍-curve as a tool to index credibility faces fundamental challenges – both at the definitional and interpretational levels as well as in the statistical performance of its estimators.”

This conclusion does not follow from Pek et al.’s analyses. Their critique rests on selective simulations, treats point estimates as decisive while disregarding uncertainty, and evaluates z-curve in isolation from the broader literature on publication bias, statistical power, and replication. Rather than engaging with z-curve’s assumptions, scope, and documented performance under realistic conditions, their argument relies on narrow counterexamples that are then generalized to broad claims about invalidity.

More broadly, the article exemplifies a familiar pattern in which methodological tools are evaluated against unrealistic standards of perfection rather than by their ability to provide informative, uncertainty-qualified evidence under real-world conditions. Such standards would invalidate not only z-curve, but most statistical methods used in empirical science. When competing conclusions are presented about the credibility of a research literature, the appropriate response is not to dismiss imperfect tools, but to weigh the totality of evidence, assumptions, and robustness checks supporting each position.

We can debate whether the average true power of studies in the emotion literature is closer to 5% or 50%, but there is no plausible scenario under which average true power would justify success rates exceeding 90%. We can also debate the appropriate trade-off between false positives and false negatives, but it is equally clear that the standard significance criterion does not warrant the conclusion that no more than 5% of statistically significant results are false positives, especially in the presence of selection bias and low power. One may choose to dismiss z-curve results, but what cannot be justified is a return to uncorrected effect-size meta-analyses that assume unbiased reporting. Such approaches systematically inflate effect-size estimates and can even produce compelling meta-analytic evidence for effects that do not exist, as vividly illustrated by Bem’s (2011) meta-analysis of extrasensory perception findings.

Postscript

Ideally, the Schimmack-Pek controversy will attract some attention from human third parties with sufficient statistical expertise to understand the issues and weigh in on this important issue. As Pek et al. point out, a statistical tool that can distinguish credible and unbelievable research is needed. Effect size meta-analyses are also increasingly recognizing the need to correct for bias and new methods show promise. Z-curve is one tool among others. Rather than dismissing these attempts, we need to improve them, because we cannot go back to the time where psychologists were advised to err on the side of discovery (Bem, 2000).