Last update 8/25/2021

(expanded to 410 social/personality psychologists; included Dan Ariely)

Introduction

Since Fisher introduced null-hypothesis significance testing, researchers have used p < .05 as a statistical criterion for interpreting results as discoveries worthy of discussion (i.e., evidence that the null hypothesis is false). Once published, these results are often treated as real findings, even though alpha does not control the risk of false discoveries: it limits false positives among tests of true null hypotheses, not the proportion of significant results that are false.

Statisticians have warned against exclusive reliance on p < .05, but nearly 100 years after Fisher popularized this approach, it is still the most common way to interpret data. The main reason is that many attempts to improve on this practice have failed. A core problem is that a single statistical result is difficult to interpret on its own. However, individual results become more informative when they are interpreted in the context of other results. Based on the distribution of p-values, it is possible to estimate the maximum false discovery rate (Bartos & Schimmack, 2020; Jager & Leek, 2014). This approach can be applied to the p-values published by individual authors to adjust their significance criterion (alpha) so that the risk of false discoveries stays at a reasonable level, FDR < .05.
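The step from a discovery rate (DR) to a maximum false discovery rate can be made explicit. The derivation below is a sketch that assumes Sorić's (1989) upper bound, which treats every true effect as detected with power 1; it reproduces the figures used in this post (for example, an EDR of 79% gives a maximum FDR of about 1%, and an EDR of 27% about 14%). The symbols π₀ (share of tested hypotheses that are true nulls) and P̄ (average power for false nulls) are notation introduced here for illustration.

$$
\begin{aligned}
\mathrm{DR} &= \pi_0\,\alpha + (1-\pi_0)\,\bar{P} \;\le\; \pi_0\,\alpha + (1-\pi_0) \\
\Rightarrow\quad \pi_0 &\le \frac{1-\mathrm{DR}}{1-\alpha} \\
\mathrm{FDR} &= \frac{\pi_0\,\alpha}{\mathrm{DR}} \;\le\; \left(\frac{1}{\mathrm{DR}} - 1\right)\frac{\alpha}{1-\alpha}
\end{aligned}
$$

With DR = .79 and α = .05, the bound is (1/.79 − 1)(.05/.95) ≈ .014, i.e., about 1%.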

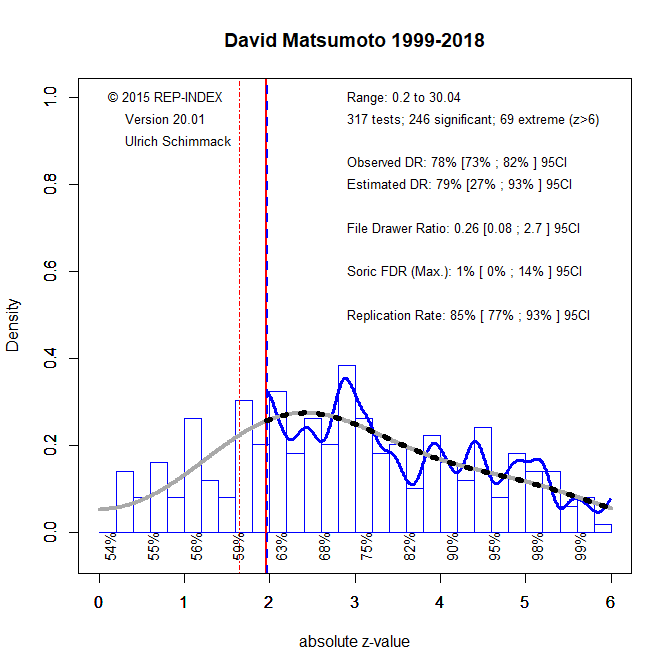

Researchers who mainly test true hypotheses with high power have a high discovery rate (many p-values below .05) and a low false discovery rate (FDR < .05). Figure 1 shows an example of a researcher who followed this strategy (for a detailed description of z-curve plots, see Schimmack, 2021).

Out of the 317 test statistics retrieved from his articles, 246 were significant with alpha = .05, an observed discovery rate (ODR) of 78%. This discovery rate closely matches the estimated discovery rate (EDR) based on the distribution of the significant p-values (p < .05): the EDR is 79%. With an EDR of 79%, the maximum false discovery rate is only 1%. However, the 95% CI is wide, and its lower bound for the EDR, 27%, allows for up to 14% false discoveries.
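The numbers in this example can be checked with a few lines of Python. This is a minimal sketch that assumes the maximum FDR is Sorić's upper bound (an assumption, although it reproduces the values reported here); the function names are hypothetical and are not part of the z-curve package.

```python
def observed_discovery_rate(n_significant: int, n_tests: int) -> float:
    """Share of extracted test statistics that are significant at alpha."""
    return n_significant / n_tests


def soric_max_fdr(discovery_rate: float, alpha: float = 0.05) -> float:
    """Assumed Soric upper bound on the false discovery rate, capped at 1."""
    if not 0 < discovery_rate <= 1:
        raise ValueError("discovery_rate must be in (0, 1]")
    return min(1.0, (1 / discovery_rate - 1) * alpha / (1 - alpha))


# Values from the Figure 1 example in the text
odr = observed_discovery_rate(246, 317)
print(f"ODR = {odr:.0%}")                                 # ODR = 78%
print(f"max FDR at EDR 79% = {soric_max_fdr(0.79):.0%}")  # 1%
print(f"max FDR at EDR 27% = {soric_max_fdr(0.27):.0%}")  # 14%
```

The cap at 1 matters when the discovery rate falls to alpha or below (for example, an EDR of 5% with alpha = .05), where the bound reaches 100%.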

When the ODR matches the EDR, there is no evidence of publication bias. In this case, we can improve the estimates by fitting all p-values, including the non-significant ones. With the resulting tighter CI for the EDR, the 95% CI for the maximum FDR ranges from 1% to 3%. Thus, we can be confident that no more than 5% of the results that are significant with alpha = .05 are false discoveries. Readers can therefore continue to use alpha = .05 to look for interesting discoveries in Matsumoto’s articles.

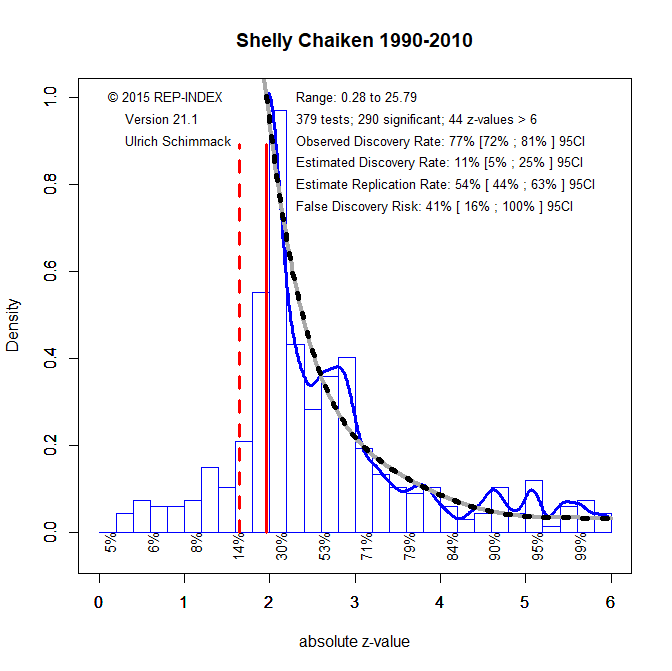

Figure 2 shows the results for a different type of researcher, one who took a risk and studied weak effects with small samples. This strategy produces many non-significant results that are often not published. The selection for significance inflates the observed discovery rate, but the z-curve plot and the comparison with the EDR reveal the influence of publication bias. Here the ODR is similar to the one in Figure 1, but the EDR is only 11%. An EDR of 11% translates into a large maximum false discovery rate of 41%. In addition, the 95% CI of the EDR includes 5%, which means that the maximum FDR could be as high as 100%. In this case, using alpha = .05 to interpret results as discoveries is very risky. Clearly, p < .05 means something very different when reading an article by David Matsumoto or by Shelly Chaiken.

Rather than dismissing all of Chaiken’s results, we can try to lower alpha to reduce the false discovery rate. If we set alpha = .01, the FDR is 15%. If we set alpha = .005, the FDR is 8%. To get the FDR below 5%, we need to set alpha to .001.

A uniform criterion of FDR < 5% is applied to all researchers in the rankings below. For some, this means no adjustment to the traditional criterion. For others, alpha is lowered to .01, and for a few, even further (.005 or .001).
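Under the same assumption (Sorić's bound), the FDR < 5% criterion can be turned around to ask how high the discovery rate must be at a given alpha. This is a hedged sketch: `min_discovery_rate` is a hypothetical helper, and it assumes the discovery rate is re-estimated at each alpha rather than reusing the EDR computed at .05.

```python
def min_discovery_rate(alpha: float, max_fdr: float = 0.05) -> float:
    """Smallest discovery rate (at this alpha) that keeps the assumed
    Soric bound (1/DR - 1) * alpha / (1 - alpha) at or below max_fdr."""
    return alpha / (alpha + max_fdr * (1 - alpha))


for alpha in (0.05, 0.01, 0.005, 0.001):
    print(f"alpha = {alpha}: discovery rate must be at least {min_discovery_rate(alpha):.1%}")
# alpha = 0.05: discovery rate must be at least 51.3%
# alpha = 0.01: discovery rate must be at least 16.8%
# alpha = 0.005: discovery rate must be at least 9.1%
# alpha = 0.001: discovery rate must be at least 2.0%
```

At alpha = .05 the threshold is an EDR of roughly 51%; in the table below, the switch from alpha = .05 to .01 occurs around an EDR of 49 to 50%, close to this value given that the table entries are rounded.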

The rankings below are based on automatically extracted test statistics from 40 journals (List of journals). The results should be interpreted with caution and treated as preliminary. They depend on the specific set of journals that was searched, the way results are reported, and many other factors. The data are available (data.drop), and researchers can exclude or add articles and run their own analyses using the z-curve package in R (https://replicationindex.com/2020/01/10/z-curve-2-0/).
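z-curve analyses work with absolute z-scores rather than raw test statistics. The sketch below shows one way to convert two-sided p-values into absolute z-scores using only Python's standard library; `p_to_z` is a hypothetical helper for illustration, not code from the R package.

```python
from statistics import NormalDist


def p_to_z(p: float) -> float:
    """Convert a two-sided p-value into an absolute z-score.

    Uses the lower tail (p / 2) so that very small p-values stay
    numerically stable instead of rounding 1 - p / 2 up to 1.0.
    """
    if not 0 < p <= 1:
        raise ValueError("p must be in (0, 1]")
    return abs(NormalDist().inv_cdf(p / 2))


p_values = [0.05, 0.01, 0.005, 0.001]
print([round(p_to_z(p), 2) for p in p_values])  # [1.96, 2.58, 2.81, 3.29]
```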

I am also happy to receive feedback about coding errors. I also recommend hand-coding articles to adjust alpha for focal hypothesis tests. Hand-coding typically lowers the EDR and increases the FDR. For example, the automated method produced an EDR of 31% for Bargh, whereas hand-coding of focal tests produced an EDR of 12% (Bargh-Audit).

And here are the rankings. The results are fully automated, and I was not able to cover up the fact that I placed only #206 in the rankings. In another post, I will explain how researchers can move up in the rankings. One way, of course, is to increase statistical power in future studies. The rankings will be updated again when the 2021 data are available.

Despite their preliminary nature, I am confident that the results provide valuable information. Until now, all p-values below .05 have been treated as if they were equally informative. The rankings here show that this is not the case. While p = .02 can be informative for one researcher, p = .002 may still entail a high risk of a false discovery for another researcher.
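The point about p = .02 and p = .002 can be made concrete by looking up each author's adjusted alpha before interpreting a result. A minimal sketch: the two alpha values are copied from the rankings table below, while `AUTHOR_ALPHA` and `counts_as_discovery` are hypothetical names chosen for illustration.

```python
# Adjusted alphas copied from the rankings table (FDR < 5% criterion)
AUTHOR_ALPHA = {
    "David Matsumoto": 0.05,
    "Shelly Chaiken": 0.001,
}


def counts_as_discovery(p: float, author: str) -> bool:
    """Apply the author-specific alpha instead of a uniform .05."""
    return p < AUTHOR_ALPHA[author]


print(counts_as_discovery(0.02, "David Matsumoto"))  # True
print(counts_as_discovery(0.002, "Shelly Chaiken"))  # False
```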

Good science requires not only open and objective reporting of new data; it also requires unbiased review of the literature. However, there are no rules and regulations regarding citations, and many authors cherry-pick citations that are consistent with their claims. Even when studies have failed to replicate, original studies are cited without citing the replication failures. In some cases, authors even cite original articles that have been retracted. Fortunately, it is easy to spot these acts of unscientific behavior. Here I am starting a project to list examples of bad scientific behaviors. Hopefully, more scientists will take the time to hold their colleagues accountable for ethical behavior in citations. They can even do so by posting anonymously on the PubPeer comment site.

Tests = number of extracted test statistics; ODR = observed discovery rate; EDR = estimated discovery rate; ERR = expected replication rate; FDR = maximum false discovery rate with alpha = .05; Alpha = adjusted significance criterion that keeps the FDR below 5%.

| Rank | Name | Tests | ODR (%) | EDR (%) | ERR (%) | FDR (%) | Alpha |
|---|---|---|---|---|---|---|---|
| 1 | Robert A. Emmons | 53 | 87 | 89 | 90 | 1 | .05 |
| 2 | Allison L. Skinner | 229 | 59 | 81 | 85 | 1 | .05 |
| 3 | David Matsumoto | 378 | 83 | 79 | 85 | 1 | .05 |
| 4 | Linda J. Skitka | 532 | 68 | 75 | 82 | 2 | .05 |
| 5 | Todd K. Shackelford | 305 | 77 | 75 | 82 | 2 | .05 |
| 6 | Jonathan B. Freeman | 274 | 59 | 75 | 81 | 2 | .05 |
| 7 | Virgil Zeigler-Hill | 515 | 72 | 74 | 81 | 2 | .05 |
| 8 | Arthur A. Stone | 310 | 75 | 73 | 81 | 2 | .05 |
| 9 | David P. Schmitt | 207 | 78 | 71 | 77 | 2 | .05 |
| 10 | Emily A. Impett | 549 | 77 | 70 | 76 | 2 | .05 |
| 11 | Paula Bressan | 62 | 82 | 70 | 76 | 2 | .05 |
| 12 | Kurt Gray | 487 | 79 | 69 | 81 | 2 | .05 |
| 13 | Michael E. McCullough | 334 | 69 | 69 | 78 | 2 | .05 |
| 14 | Kipling D. Williams | 843 | 75 | 69 | 77 | 2 | .05 |
| 15 | John M. Zelenski | 156 | 71 | 69 | 76 | 2 | .05 |
| 16 | Amy J. C. Cuddy | 212 | 83 | 68 | 78 | 2 | .05 |
| 17 | Elke U. Weber | 312 | 69 | 68 | 77 | 0 | .05 |
| 18 | Hilary B. Bergsieker | 439 | 67 | 68 | 74 | 2 | .05 |
| 19 | Cameron Anderson | 652 | 71 | 67 | 74 | 3 | .05 |
| 20 | Rachael E. Jack | 249 | 70 | 66 | 80 | 3 | .05 |
| 21 | Jamil Zaki | 430 | 78 | 66 | 76 | 3 | .05 |
| 22 | A. Janet Tomiyama | 76 | 78 | 65 | 76 | 3 | .05 |
| 23 | Benjamin R. Karney | 392 | 56 | 65 | 73 | 3 | .05 |
| 24 | Phoebe C. Ellsworth | 605 | 74 | 65 | 72 | 3 | .05 |
| 25 | Jim Sidanius | 487 | 69 | 65 | 72 | 3 | .05 |
| 26 | Amelie Mummendey | 461 | 70 | 65 | 72 | 3 | .05 |
| 27 | Carol D. Ryff | 280 | 84 | 64 | 76 | 3 | .05 |
| 28 | Juliane Degner | 435 | 63 | 64 | 71 | 3 | .05 |
| 29 | Steven J. Heine | 597 | 78 | 63 | 77 | 3 | .05 |
| 30 | David M. Amodio | 584 | 66 | 63 | 70 | 3 | .05 |
| 31 | Thomas N. Bradbury | 398 | 61 | 63 | 69 | 3 | .05 |
| 32 | Elaine Fox | 472 | 79 | 62 | 78 | 3 | .05 |
| 33 | Miles Hewstone | 1427 | 70 | 62 | 73 | 3 | .05 |
| 34 | Linda R. Tropp | 344 | 65 | 61 | 80 | 3 | .05 |
| 35 | Rainer Greifeneder | 944 | 75 | 61 | 77 | 3 | .05 |
| 36 | Klaus Fiedler | 1950 | 77 | 61 | 74 | 3 | .05 |
| 37 | Jesse Graham | 377 | 70 | 60 | 76 | 3 | .05 |
| 38 | Richard W. Robins | 270 | 76 | 60 | 70 | 4 | .05 |
| 39 | Simine Vazire | 137 | 66 | 60 | 64 | 4 | .05 |
| 40 | On Amir | 267 | 67 | 59 | 88 | 4 | .05 |
| 41 | Edward P. Lemay | 289 | 87 | 59 | 81 | 4 | .05 |
| 42 | William B. Swann Jr. | 1070 | 78 | 59 | 80 | 4 | .05 |
| 43 | Margaret S. Clark | 505 | 75 | 59 | 77 | 4 | .05 |
| 44 | Bernhard Leidner | 724 | 64 | 59 | 65 | 4 | .05 |
| 45 | B. Keith Payne | 879 | 71 | 58 | 76 | 4 | .05 |
| 46 | Ximena B. Arriaga | 284 | 66 | 58 | 69 | 4 | .05 |
| 47 | Joris Lammers | 728 | 69 | 58 | 69 | 4 | .05 |
| 48 | Patricia G. Devine | 606 | 71 | 58 | 67 | 4 | .05 |
| 49 | Rainer Reisenzein | 201 | 65 | 57 | 69 | 4 | .05 |
| 50 | Barbara A. Mellers | 287 | 80 | 56 | 78 | 4 | .05 |
| 51 | Joris Lammers | 705 | 69 | 56 | 69 | 4 | .05 |
| 52 | Jean M. Twenge | 381 | 72 | 56 | 59 | 4 | .05 |
| 53 | Nicholas Epley | 1504 | 74 | 55 | 72 | 4 | .05 |
| 54 | Kaiping Peng | 566 | 77 | 54 | 75 | 4 | .05 |
| 55 | Krishna Savani | 638 | 71 | 53 | 69 | 5 | .05 |
| 56 | Leslie Ashburn-Nardo | 109 | 80 | 52 | 83 | 5 | .05 |
| 57 | Lee Jussim | 226 | 80 | 52 | 71 | 5 | .05 |
| 58 | Richard M. Ryan | 998 | 78 | 52 | 69 | 5 | .05 |
| 59 | Ethan Kross | 614 | 66 | 52 | 67 | 5 | .05 |
| 60 | Edward L. Deci | 284 | 79 | 52 | 63 | 5 | .05 |
| 61 | Roger Giner-Sorolla | 663 | 81 | 51 | 80 | 5 | .05 |
| 62 | Bertram F. Malle | 422 | 73 | 51 | 75 | 5 | .05 |
| 63 | George A. Bonanno | 479 | 72 | 51 | 70 | 5 | .05 |
| 64 | Jens B. Asendorpf | 253 | 74 | 51 | 69 | 5 | .05 |
| 65 | Samuel D. Gosling | 108 | 58 | 51 | 62 | 5 | .05 |
| 66 | Tessa V. West | 691 | 71 | 51 | 59 | 5 | .05 |
| 67 | Paul Rozin | 449 | 78 | 50 | 84 | 5 | .05 |
| 68 | Joachim I. Krueger | 436 | 78 | 50 | 81 | 5 | .05 |
| 69 | Sheena S. Iyengar | 207 | 63 | 50 | 80 | 5 | .05 |
| 70 | James J. Gross | 1104 | 72 | 50 | 77 | 5 | .05 |
| 71 | Mark Rubin | 306 | 68 | 50 | 75 | 5 | .05 |
| 72 | Pieter Van Dessel | 578 | 70 | 50 | 75 | 5 | .05 |
| 73 | Shinobu Kitayama | 983 | 76 | 50 | 71 | 5 | .05 |
| 74 | Matthew J. Hornsey | 1656 | 74 | 50 | 71 | 5 | .05 |
| 75 | Janice R. Kelly | 366 | 75 | 50 | 70 | 5 | .05 |
| 76 | Antonio L. Freitas | 247 | 79 | 50 | 64 | 5 | .05 |
| 77 | Paul K. Piff | 166 | 77 | 50 | 63 | 5 | .05 |
| 78 | Mina Cikara | 392 | 71 | 49 | 80 | 5 | .05 |
| 79 | Beate Seibt | 379 | 72 | 49 | 62 | 6 | .01 |
| 80 | Ludwin E. Molina | 163 | 69 | 49 | 61 | 5 | .05 |
| 81 | Bertram Gawronski | 1803 | 72 | 48 | 76 | 6 | .01 |
| 82 | Penelope Lockwood | 458 | 71 | 48 | 70 | 6 | .01 |
| 83 | Edward R. Hirt | 1042 | 81 | 48 | 65 | 6 | .01 |
| 84 | Matthew D. Lieberman | 398 | 72 | 47 | 80 | 6 | .01 |
| 85 | John T. Cacioppo | 438 | 76 | 47 | 69 | 6 | .01 |
| 86 | Agneta H. Fischer | 952 | 75 | 47 | 69 | 6 | .01 |
| 87 | Leaf van Boven | 711 | 72 | 47 | 67 | 6 | .01 |
| 88 | Stephanie A. Fryberg | 248 | 62 | 47 | 66 | 6 | .01 |
| 89 | Daniel M. Wegner | 602 | 76 | 47 | 65 | 6 | .01 |
| 90 | Anne E. Wilson | 785 | 71 | 47 | 64 | 6 | .01 |
| 91 | Rainer Banse | 402 | 78 | 46 | 72 | 6 | .01 |
| 92 | Alice H. Eagly | 330 | 75 | 46 | 71 | 6 | .01 |
| 93 | Jeanne L. Tsai | 1241 | 73 | 46 | 67 | 6 | .01 |
| 94 | Jennifer S. Lerner | 181 | 80 | 46 | 61 | 6 | .01 |
| 95 | Andrea L. Meltzer | 549 | 52 | 45 | 72 | 6 | .01 |
| 96 | R. Chris Fraley | 642 | 70 | 45 | 72 | 7 | .01 |
| 97 | Constantine Sedikides | 2566 | 71 | 45 | 70 | 6 | .01 |
| 98 | Paul Slovic | 377 | 74 | 45 | 70 | 6 | .01 |
| 99 | Dacher Keltner | 1233 | 72 | 45 | 64 | 6 | .01 |
| 100 | Brian A. Nosek | 816 | 68 | 44 | 81 | 7 | .01 |
| 101 | George Loewenstein | 752 | 71 | 44 | 72 | 7 | .01 |
| 102 | Ursula Hess | 774 | 78 | 44 | 71 | 7 | .01 |
| 103 | Jason P. Mitchell | 600 | 73 | 43 | 73 | 7 | .01 |
| 104 | Jessica L. Tracy | 632 | 74 | 43 | 71 | 7 | .01 |
| 105 | Charles M. Judd | 1054 | 76 | 43 | 68 | 7 | .01 |
| 106 | S. Alexander Haslam | 1198 | 72 | 43 | 64 | 7 | .01 |
| 107 | Mark Schaller | 565 | 73 | 43 | 61 | 7 | .01 |
| 108 | Susan T. Fiske | 911 | 78 | 42 | 74 | 7 | .01 |
| 109 | Lisa Feldman Barrett | 644 | 69 | 42 | 70 | 7 | .01 |
| 110 | Jolanda Jetten | 1956 | 73 | 42 | 67 | 7 | .01 |
| 111 | Mario Mikulincer | 901 | 89 | 42 | 64 | 7 | .01 |
| 112 | Bernadette Park | 973 | 77 | 42 | 64 | 7 | .01 |
| 113 | Paul A. M. Van Lange | 1092 | 70 | 42 | 63 | 7 | .01 |
| 114 | Wendi L. Gardner | 798 | 67 | 42 | 63 | 7 | .01 |
| 115 | Will M. Gervais | 110 | 69 | 42 | 59 | 7 | .01 |
| 116 | Jordan B. Peterson | 266 | 60 | 41 | 79 | 7 | .01 |
| 117 | Philip E. Tetlock | 549 | 79 | 41 | 73 | 7 | .01 |
| 118 | Amanda B. Diekman | 438 | 83 | 41 | 70 | 7 | .01 |
| 119 | Daniel H. J. Wigboldus | 492 | 76 | 41 | 67 | 8 | .01 |
| 120 | Michael Inzlicht | 686 | 66 | 41 | 63 | 8 | .01 |
| 121 | Naomi Ellemers | 2388 | 74 | 41 | 63 | 8 | .01 |
| 122 | Phillip Atiba Goff | 299 | 68 | 41 | 62 | 7 | .01 |
| 123 | Stacey Sinclair | 327 | 70 | 41 | 57 | 8 | .01 |
| 124 | Francesca Gino | 2521 | 75 | 40 | 69 | 8 | .01 |
| 125 | Michael I. Norton | 1136 | 71 | 40 | 69 | 8 | .01 |
| 126 | David J. Hauser | 156 | 74 | 40 | 68 | 8 | .01 |
| 127 | Elizabeth Page-Gould | 411 | 57 | 40 | 66 | 8 | .01 |
| 128 | Tiffany A. Ito | 349 | 80 | 40 | 64 | 8 | .01 |
| 129 | Richard E. Petty | 2771 | 69 | 40 | 64 | 8 | .01 |
| 130 | Tim Wildschut | 1374 | 73 | 40 | 64 | 8 | .01 |
| 131 | Norbert Schwarz | 1337 | 72 | 40 | 63 | 8 | .01 |
| 132 | Veronika Job | 362 | 70 | 40 | 63 | 8 | .01 |
| 133 | Wendy Wood | 462 | 75 | 40 | 62 | 8 | .01 |
| 134 | Minah H. Jung | 156 | 83 | 39 | 83 | 8 | .01 |
| 135 | Marcel Zeelenberg | 868 | 76 | 39 | 79 | 8 | .01 |
| 136 | Tobias Greitemeyer | 1737 | 72 | 39 | 67 | 8 | .01 |
| 137 | Jason E. Plaks | 582 | 70 | 39 | 67 | 8 | .01 |
| 138 | Carol S. Dweck | 1028 | 70 | 39 | 63 | 8 | .01 |
| 139 | Christian S. Crandall | 362 | 75 | 39 | 59 | 8 | .01 |
| 140 | Harry T. Reis | 998 | 69 | 38 | 74 | 9 | .01 |
| 141 | Vanessa K. Bohns | 420 | 77 | 38 | 74 | 8 | .01 |
| 142 | Jerry Suls | 413 | 71 | 38 | 68 | 8 | .01 |
| 143 | Eric D. Knowles | 384 | 68 | 38 | 64 | 8 | .01 |
| 144 | C. Nathan DeWall | 1336 | 73 | 38 | 63 | 9 | .01 |
| 145 | Clayton R. Critcher | 697 | 82 | 38 | 63 | 9 | .01 |
| 146 | John F. Dovidio | 2019 | 69 | 38 | 62 | 9 | .01 |
| 147 | Joshua Correll | 549 | 61 | 38 | 62 | 9 | .01 |
| 148 | Abigail A. Scholer | 556 | 58 | 38 | 62 | 9 | .01 |
| 149 | Chris Janiszewski | 107 | 81 | 38 | 58 | 9 | .01 |
| 150 | Herbert Bless | 586 | 73 | 38 | 57 | 9 | .01 |
| 151 | Mahzarin R. Banaji | 880 | 73 | 37 | 78 | 9 | .01 |
| 152 | Rolf Reber | 280 | 64 | 37 | 72 | 9 | .01 |
| 153 | Kevin N. Ochsner | 406 | 79 | 37 | 70 | 9 | .01 |
| 154 | Mark J. Brandt | 277 | 70 | 37 | 70 | 9 | .01 |
| 155 | Geoff MacDonald | 406 | 67 | 37 | 67 | 9 | .01 |
| 156 | Mara Mather | 1038 | 78 | 37 | 67 | 9 | .01 |
| 157 | Antony S. R. Manstead | 1656 | 72 | 37 | 62 | 9 | .01 |
| 158 | Lorne Campbell | 433 | 67 | 37 | 61 | 9 | .01 |
| 159 | Sanford E. DeVoe | 236 | 71 | 37 | 61 | 9 | .01 |
| 160 | Ayelet Fishbach | 1416 | 78 | 37 | 59 | 9 | .01 |
| 161 | Fritz Strack | 607 | 75 | 37 | 56 | 9 | .01 |
| 162 | Jeff T. Larsen | 181 | 74 | 36 | 67 | 10 | .01 |
| 163 | Nyla R. Branscombe | 1276 | 70 | 36 | 65 | 9 | .01 |
| 164 | Yaacov Schul | 411 | 61 | 36 | 64 | 9 | .01 |
| 165 | D. S. Moskowitz | 3418 | 74 | 36 | 63 | 9 | .01 |
| 166 | Pablo Brinol | 1356 | 67 | 36 | 62 | 9 | .01 |
| 167 | Todd B. Kashdan | 377 | 73 | 36 | 61 | 9 | .01 |
| 168 | Barbara L. Fredrickson | 287 | 72 | 36 | 61 | 9 | .01 |
| 169 | Duane T. Wegener | 980 | 77 | 36 | 60 | 9 | .01 |
| 170 | Joanne V. Wood | 1093 | 74 | 36 | 60 | 9 | .01 |
| 171 | Daniel A. Effron | 484 | 66 | 36 | 60 | 9 | .01 |
| 172 | Niall Bolger | 376 | 67 | 36 | 58 | 9 | .01 |
| 173 | Craig A. Anderson | 467 | 76 | 36 | 55 | 9 | .01 |
| 174 | Michael Harris Bond | 378 | 73 | 35 | 84 | 10 | .01 |
| 175 | Glenn Adams | 270 | 71 | 35 | 73 | 10 | .01 |
| 176 | Daniel M. Bernstein | 404 | 73 | 35 | 70 | 10 | .01 |
| 177 | C. Miguel Brendl | 121 | 76 | 35 | 68 | 10 | .01 |
| 178 | Azim F. Sharif | 183 | 74 | 35 | 68 | 10 | .01 |
| 179 | Emily Balcetis | 599 | 69 | 35 | 68 | 10 | .01 |
| 180 | Eva Walther | 493 | 82 | 35 | 66 | 10 | .01 |
| 181 | Michael D. Robinson | 1388 | 78 | 35 | 66 | 10 | .01 |
| 182 | Igor Grossmann | 203 | 64 | 35 | 66 | 10 | .01 |
| 183 | Diana I. Tamir | 156 | 62 | 35 | 62 | 10 | .01 |
| 184 | Samuel L. Gaertner | 321 | 75 | 35 | 61 | 10 | .01 |
| 185 | John T. Jost | 794 | 70 | 35 | 61 | 10 | .01 |
| 186 | Eric L. Uhlmann | 457 | 67 | 35 | 61 | 10 | .01 |
| 187 | Nalini Ambady | 1256 | 62 | 35 | 56 | 10 | .01 |
| 188 | Daphna Oyserman | 446 | 55 | 35 | 54 | 10 | .01 |
| 189 | Victoria M. Esses | 295 | 75 | 35 | 53 | 10 | .01 |
| 190 | Linda J. Levine | 495 | 74 | 34 | 78 | 10 | .01 |
| 191 | Wiebke Bleidorn | 99 | 63 | 34 | 74 | 10 | .01 |
| 192 | Thomas Gilovich | 1193 | 80 | 34 | 69 | 10 | .01 |
| 193 | Alexander J. Rothman | 133 | 69 | 34 | 65 | 10 | .01 |
| 194 | Francis J. Flynn | 378 | 72 | 34 | 63 | 10 | .01 |
| 195 | Paula M. Niedenthal | 522 | 69 | 34 | 61 | 10 | .01 |
| 196 | Ozlem Ayduk | 549 | 62 | 34 | 59 | 10 | .01 |
| 197 | Paul Ekman | 88 | 70 | 34 | 55 | 10 | .01 |
| 198 | Alison Ledgerwood | 214 | 75 | 34 | 54 | 10 | .01 |
| 199 | Christopher R. Agnew | 325 | 75 | 33 | 76 | 10 | .01 |
| 200 | Michelle N. Shiota | 242 | 60 | 33 | 63 | 11 | .01 |
| 201 | Malte Friese | 501 | 61 | 33 | 57 | 11 | .01 |
| 202 | Kerry Kawakami | 487 | 68 | 33 | 56 | 10 | .01 |
| 203 | Danu Anthony Stinson | 494 | 77 | 33 | 54 | 11 | .01 |
| 204 | Jennifer A. Richeson | 831 | 67 | 33 | 52 | 11 | .01 |
| 205 | Margo J. Monteith | 773 | 76 | 32 | 77 | 11 | .01 |
| 206 | Ulrich Schimmack | 318 | 75 | 32 | 63 | 11 | .01 |
| 207 | Mark Snyder | 562 | 72 | 32 | 63 | 11 | .01 |
| 208 | Michele J. Gelfand | 365 | 76 | 32 | 63 | 11 | .01 |
| 209 | Russell H. Fazio | 1094 | 69 | 32 | 61 | 11 | .01 |
| 210 | Eric van Dijk | 238 | 67 | 32 | 60 | 11 | .01 |
| 211 | Tom Meyvis | 377 | 77 | 32 | 60 | 11 | .01 |
| 212 | Eli J. Finkel | 1392 | 62 | 32 | 57 | 11 | .01 |
| 213 | Robert B. Cialdini | 379 | 72 | 32 | 56 | 11 | .01 |
| 214 | Jonathan W. Kunstman | 430 | 66 | 32 | 53 | 11 | .01 |
| 215 | Delroy L. Paulhus | 121 | 77 | 31 | 82 | 12 | .01 |
| 216 | Yuen J. Huo | 132 | 74 | 31 | 80 | 11 | .01 |
| 217 | Gerd Bohner | 513 | 71 | 31 | 70 | 11 | .01 |
| 218 | Christopher K. Hsee | 689 | 75 | 31 | 63 | 11 | .01 |
| 219 | Vivian Zayas | 251 | 71 | 31 | 60 | 12 | .01 |
| 220 | John A. Bargh | 651 | 72 | 31 | 55 | 12 | .01 |
| 221 | Tom Pyszczynski | 948 | 69 | 31 | 54 | 12 | .01 |
| 222 | Roy F. Baumeister | 2442 | 69 | 31 | 52 | 12 | .01 |
| 223 | E. Ashby Plant | 831 | 77 | 31 | 51 | 11 | .01 |
| 224 | Kathleen D. Vohs | 944 | 68 | 31 | 51 | 12 | .01 |
| 225 | Jamie Arndt | 1318 | 69 | 31 | 50 | 12 | .01 |
| 226 | Anthony G. Greenwald | 357 | 72 | 30 | 83 | 12 | .01 |
| 227 | Nicholas O. Rule | 1294 | 68 | 30 | 75 | 13 | .01 |
| 228 | Lauren J. Human | 447 | 59 | 30 | 70 | 12 | .01 |
| 229 | Jennifer Crocker | 515 | 68 | 30 | 67 | 12 | .01 |
| 230 | Dale T. Miller | 521 | 71 | 30 | 64 | 12 | .01 |
| 231 | Thomas W. Schubert | 353 | 70 | 30 | 60 | 12 | .01 |
| 232 | Joseph A. Vandello | 494 | 73 | 30 | 60 | 12 | .01 |
| 233 | W. Keith Campbell | 528 | 70 | 30 | 58 | 12 | .01 |
| 234 | Arthur Aron | 307 | 65 | 30 | 56 | 12 | .01 |
| 235 | Pamela K. Smith | 149 | 66 | 30 | 52 | 12 | .01 |
| 236 | Aaron C. Kay | 1320 | 70 | 30 | 51 | 12 | .01 |
| 237 | Steven W. Gangestad | 198 | 63 | 30 | 41 | 13 | .005 |
| 238 | Eliot R. Smith | 445 | 79 | 29 | 73 | 13 | .01 |
| 239 | Nir Halevy | 262 | 68 | 29 | 72 | 13 | .01 |
| 240 | E. Allan Lind | 370 | 82 | 29 | 72 | 13 | .01 |
| 241 | Richard E. Nisbett | 319 | 73 | 29 | 69 | 13 | .01 |
| 242 | Hazel Rose Markus | 674 | 76 | 29 | 68 | 13 | .01 |
| 243 | Emanuele Castano | 445 | 69 | 29 | 65 | 13 | .01 |
| 244 | Dirk Wentura | 830 | 65 | 29 | 64 | 13 | .01 |
| 245 | Boris Egloff | 274 | 81 | 29 | 58 | 13 | .01 |
| 246 | Monica Biernat | 813 | 77 | 29 | 57 | 13 | .01 |
| 247 | Gordon B. Moskowitz | 374 | 72 | 29 | 57 | 13 | .01 |
| 248 | Russell Spears | 2286 | 73 | 29 | 55 | 13 | .01 |
| 249 | Jeff Greenberg | 1358 | 77 | 29 | 54 | 13 | .01 |
| 250 | Caryl E. Rusbult | 218 | 60 | 29 | 54 | 13 | .01 |
| 251 | Naomi I. Eisenberger | 179 | 74 | 28 | 79 | 14 | .01 |
| 252 | Brent W. Roberts | 562 | 72 | 28 | 77 | 14 | .01 |
| 253 | Yoav Bar-Anan | 525 | 75 | 28 | 76 | 13 | .01 |
| 254 | Eddie Harmon-Jones | 738 | 73 | 28 | 70 | 14 | .01 |
| 255 | Matthew Feinberg | 295 | 77 | 28 | 69 | 14 | .01 |
| 256 | Roland Neumann | 258 | 77 | 28 | 67 | 13 | .01 |
| 257 | Eugene M. Caruso | 822 | 75 | 28 | 64 | 13 | .01 |
| 258 | Ulrich Kuehnen | 822 | 75 | 28 | 64 | 13 | .01 |
| 259 | Elizabeth W. Dunn | 395 | 75 | 28 | 64 | 14 | .01 |
| 260 | Jeffry A. Simpson | 697 | 74 | 28 | 55 | 13 | .01 |
| 261 | Sander L. Koole | 767 | 65 | 28 | 52 | 14 | .01 |
| 262 | Richard J. Davidson | 380 | 64 | 28 | 51 | 14 | .01 |
| 263 | Shelly L. Gable | 364 | 64 | 28 | 50 | 14 | .01 |
| 264 | Adam D. Galinsky | 2154 | 70 | 28 | 49 | 13 | .01 |
| 265 | Grainne M. Fitzsimons | 585 | 68 | 28 | 49 | 14 | .01 |
| 266 | Geoffrey J. Leonardelli | 290 | 68 | 28 | 48 | 14 | .005 |
| 267 | Joshua Aronson | 183 | 85 | 28 | 46 | 14 | .005 |
| 268 | Henk Aarts | 1003 | 67 | 28 | 45 | 14 | .005 |
| 269 | Vanessa K. Bohns | 422 | 76 | 27 | 74 | 15 | .01 |
| 270 | Jan De Houwer | 1972 | 70 | 27 | 72 | 14 | .01 |
| 271 | Dan Ariely | 600 | 70 | 27 | 69 | 14 | .01 |
| 272 | Charles Stangor | 185 | 81 | 27 | 68 | 15 | .01 |
| 273 | Karl Christoph Klauer | 801 | 67 | 27 | 65 | 14 | .01 |
| 274 | Mario Gollwitzer | 500 | 58 | 27 | 62 | 14 | .01 |
| 275 | Jennifer S. Beer | 80 | 56 | 27 | 54 | 14 | .01 |
| 276 | Eldar Shafir | 107 | 78 | 27 | 51 | 14 | .01 |
| 277 | Guido H. E. Gendolla | 422 | 76 | 27 | 47 | 14 | .005 |
| 278 | Klaus R. Scherer | 467 | 83 | 26 | 78 | 15 | .01 |
| 279 | William G. Graziano | 532 | 71 | 26 | 66 | 15 | .01 |
| 280 | Galen V. Bodenhausen | 585 | 74 | 26 | 61 | 15 | .01 |
| 281 | Sonja Lyubomirsky | 530 | 71 | 26 | 59 | 15 | .01 |
| 282 | Kai Sassenberg | 872 | 71 | 26 | 56 | 15 | .01 |
| 283 | Kristin Laurin | 648 | 63 | 26 | 51 | 15 | .01 |
| 284 | Claude M. Steele | 434 | 73 | 26 | 42 | 15 | .005 |
| 285 | David G. Rand | 392 | 70 | 25 | 81 | 15 | .01 |
| 286 | Paul Bloom | 502 | 72 | 25 | 79 | 16 | .01 |
| 287 | Kerri L. Johnson | 532 | 76 | 25 | 76 | 15 | .01 |
| 288 | Batja Mesquita | 416 | 71 | 25 | 73 | 16 | .01 |
| 289 | Rebecca J. Schlegel | 261 | 67 | 25 | 71 | 15 | .01 |
| 290 | Phillip R. Shaver | 566 | 81 | 25 | 71 | 16 | .01 |
| 291 | David Dunning | 818 | 74 | 25 | 70 | 16 | .01 |
| 292 | Laurie A. Rudman | 482 | 72 | 25 | 68 | 16 | .01 |
| 293 | David A. Lishner | 105 | 65 | 25 | 63 | 16 | .01 |
| 294 | Mark J. Landau | 950 | 78 | 25 | 45 | 16 | .005 |
| 295 | Ronald S. Friedman | 183 | 79 | 25 | 44 | 16 | .005 |
| 296 | Joel Cooper | 257 | 72 | 25 | 39 | 16 | .005 |
| 297 | Alison L. Chasteen | 223 | 68 | 24 | 69 | 16 | .01 |
| 298 | Jeff Galak | 313 | 73 | 24 | 68 | 17 | .01 |
| 299 | Steven J. Sherman | 888 | 74 | 24 | 62 | 16 | .01 |
| 300 | Shigehiro Oishi | 1109 | 64 | 24 | 61 | 17 | .01 |
| 301 | Thomas Mussweiler | 604 | 70 | 24 | 43 | 17 | .005 |
| 302 | Mark W. Baldwin | 247 | 72 | 24 | 41 | 17 | .005 |
| 303 | Evan P. Apfelbaum | 256 | 62 | 24 | 41 | 17 | .005 |
| 304 | Nurit Shnabel | 564 | 76 | 23 | 78 | 18 | .01 |
| 305 | Klaus Rothermund | 738 | 71 | 23 | 76 | 18 | .01 |
| 306 | Felicia Pratto | 410 | 73 | 23 | 75 | 18 | .01 |
| 307 | Jonathan Haidt | 368 | 76 | 23 | 73 | 17 | .01 |
| 308 | Roland Imhoff | 365 | 74 | 23 | 73 | 18 | .01 |
| 309 | Jeffrey W. Sherman | 992 | 68 | 23 | 71 | 17 | .01 |
| 310 | Jennifer L. Eberhardt | 202 | 71 | 23 | 62 | 18 | .005 |
| 311 | Bernard A. Nijstad | 693 | 71 | 23 | 52 | 18 | .005 |
| 312 | Brandon J. Schmeichel | 652 | 66 | 23 | 45 | 17 | .005 |
| 313 | Sam J. Maglio | 325 | 72 | 23 | 42 | 17 | .005 |
| 314 | David M. Buss | 461 | 82 | 22 | 80 | 19 | .01 |
| 315 | Yoel Inbar | 280 | 67 | 22 | 71 | 19 | .01 |
| 316 | Serena Chen | 865 | 72 | 22 | 67 | 19 | .005 |
| 317 | Spike W. S. Lee | 145 | 68 | 22 | 64 | 19 | .005 |
| 318 | Marilynn B. Brewer | 314 | 75 | 22 | 62 | 18 | .005 |
| 319 | Michael Ross | 1164 | 70 | 22 | 62 | 18 | .005 |
| 320 | Dieter Frey | 1538 | 68 | 22 | 58 | 18 | .005 |
| 321 | G. Daniel Lassiter | 189 | 82 | 22 | 55 | 19 | .01 |
| 322 | Sean M. McCrea | 584 | 73 | 22 | 54 | 19 | .005 |
| 323 | Wendy Berry Mendes | 965 | 68 | 22 | 44 | 19 | .005 |
| 324 | Paul W. Eastwick | 583 | 65 | 21 | 69 | 19 | .005 |
| 325 | Kees van den Bos | 1150 | 84 | 21 | 69 | 20 | .005 |
| 326 | Maya Tamir | 1342 | 80 | 21 | 64 | 19 | .005 |
| 327 | Joseph P. Forgas | 888 | 83 | 21 | 59 | 19 | .005 |
| 328 | Michaela Wanke | 362 | 74 | 21 | 59 | 19 | .005 |
| 329 | Dolores Albarracin | 540 | 66 | 21 | 56 | 20 | .005 |
| 330 | Elizabeth Levy Paluck | 31 | 84 | 21 | 55 | 20 | .005 |
| 331 | Vanessa LoBue | 299 | 68 | 20 | 76 | 21 | .01 |
| 332 | Christopher J. Armitage | 160 | 62 | 20 | 73 | 21 | .005 |
| 333 | Elizabeth A. Phelps | 686 | 78 | 20 | 72 | 21 | .005 |
| 334 | Jay J. van Bavel | 437 | 64 | 20 | 71 | 21 | .005 |
| 335 | David A. Pizarro | 227 | 71 | 20 | 69 | 21 | .005 |
| 336 | Andrew J. Elliot | 1018 | 81 | 20 | 67 | 21 | .005 |
| 337 | William A. Cunningham | 238 | 76 | 20 | 64 | 22 | .005 |
| 338 | Laura D. Scherer | 212 | 69 | 20 | 64 | 21 | .01 |
| 339 | Kentaro Fujita | 458 | 69 | 20 | 62 | 21 | .005 |
| 340 | Geoffrey L. Cohen | 1590 | 68 | 20 | 50 | 21 | .005 |
| 341 | Ana Guinote | 378 | 76 | 20 | 47 | 21 | .005 |
| 342 | Tanya L. Chartrand | 424 | 67 | 20 | 33 | 21 | .001 |
| 343 | Selin Kesebir | 328 | 66 | 19 | 73 | 22 | .005 |
| 344 | Vincent Y. Yzerbyt | 1412 | 73 | 19 | 73 | 22 | .01 |
| 345 | James K. McNulty | 1047 | 56 | 19 | 65 | 23 | .005 |
| 346 | Robert S. Wyer | 871 | 82 | 19 | 63 | 22 | .005 |
| 347 | Travis Proulx | 174 | 63 | 19 | 62 | 22 | .005 |
| 348 | Peter M. Gollwitzer | 1303 | 64 | 19 | 58 | 22 | .005 |
| 349 | Nilanjana Dasgupta | 383 | 76 | 19 | 52 | 22 | .005 |
| 350 | Jamie L. Goldenberg | 568 | 77 | 19 | 50 | 22 | .01 |
| 351 | Richard P. Eibach | 753 | 69 | 19 | 47 | 23 | .001 |
| 352 | Gerald L. Clore | 456 | 74 | 19 | 45 | 22 | .001 |
| 353 | James M. Tyler | 130 | 87 | 18 | 74 | 24 | .005 |
| 354 | Roland Deutsch | 365 | 78 | 18 | 71 | 24 | .005 |
| 355 | Ed Diener | 498 | 64 | 18 | 68 | 24 | .005 |
| 356 | Kennon M. Sheldon | 698 | 74 | 18 | 66 | 23 | .005 |
| 357 | Wilhelm Hofmann | 624 | 67 | 18 | 66 | 23 | .005 |
| 358 | Laura L. Carstensen | 723 | 77 | 18 | 64 | 24 | .005 |
| 359 | Toni Schmader | 546 | 69 | 18 | 61 | 24 | .005 |
| 360 | Frank D. Fincham | 734 | 69 | 18 | 59 | 24 | .005 |
| 361 | David K. Sherman | 1128 | 61 | 18 | 57 | 24 | .005 |
| 362 | Lisa K. Libby | 418 | 65 | 18 | 54 | 24 | .005 |
| 363 | Chen-Bo Zhong | 327 | 68 | 18 | 49 | 25 | .005 |
| 364 | Stefan C. Schmukle | 114 | 62 | 17 | 71 | 26 | .005 |
| 365 | Michel Tuan Pham | 246 | 86 | 17 | 68 | 25 | .005 |
| 366 | Leandre R. Fabrigar | 632 | 70 | 17 | 67 | 26 | .005 |
| 367 | Neal J. Roese | 368 | 64 | 17 | 65 | 25 | .005 |
| 368 | Carey K. Morewedge | 633 | 76 | 17 | 65 | 26 | .005 |
| 369 | Timothy D. Wilson | 798 | 65 | 17 | 63 | 26 | .005 |
| 370 | Brad J. Bushman | 897 | 74 | 17 | 62 | 25 | .005 |
| 371 | Ara Norenzayan | 225 | 72 | 17 | 61 | 25 | .005 |
| 372 | Benoit Monin | 635 | 65 | 17 | 56 | 25 | .005 |
| 373 | Michael W. Kraus | 617 | 72 | 17 | 55 | 26 | .005 |
| 374 | Ad van Knippenberg | 683 | 72 | 17 | 55 | 26 | .001 |
| 375 | E. Tory Higgins | 1868 | 68 | 17 | 54 | 25 | .001 |
| 376 | Ap Dijksterhuis | 750 | 68 | 17 | 54 | 26 | .005 |
| 377 | Joseph Cesario | 146 | 62 | 17 | 45 | 26 | .001 |
| 378 | Simone Schnall | 270 | 62 | 17 | 31 | 26 | .001 |
| 379 | Joshua M. Ackerman | 380 | 53 | 16 | 70 | 13 | .01 |
| 380 | Melissa J. Ferguson | 1163 | 72 | 16 | 69 | 27 | .005 |
| 381 | Laura A. King | 391 | 76 | 16 | 68 | 29 | .005 |
| 382 | Daniel T. Gilbert | 724 | 65 | 16 | 65 | 27 | .005 |
| 383 | Charles S. Carver | 154 | 82 | 16 | 64 | 28 | .005 |
| 384 | Leif D. Nelson | 409 | 74 | 16 | 64 | 28 | .005 |
| 385 | David DeSteno | 201 | 83 | 16 | 57 | 28 | .005 |
| 386 | Sandra L. Murray | 697 | 60 | 16 | 55 | 28 | .001 |
| 387 | Heejung S. Kim | 858 | 59 | 16 | 55 | 29 | .001 |
| 388 | Mark P. Zanna | 659 | 64 | 16 | 48 | 28 | .001 |
| 389 | Nira Liberman | 1304 | 75 | 15 | 65 | 31 | .005 |
| 390 | Gun R. Semin | 159 | 79 | 15 | 64 | 29 | .005 |
| 391 | Tal Eyal | 439 | 62 | 15 | 62 | 29 | .005 |
| 392 | Nathaniel M. Lambert | 456 | 66 | 15 | 59 | 30 | .001 |
| 393 | Angela L. Duckworth | 122 | 61 | 15 | 55 | 30 | .005 |
| 394 | Dana R. Carney | 200 | 60 | 15 | 53 | 30 | .001 |
| 395 | Garriy Shteynberg | 168 | 54 | 15 | 31 | 30 | .005 |
| 396 | Lee Ross | 349 | 77 | 14 | 63 | 31 | .001 |
| 397 | Arie W. Kruglanski | 1228 | 78 | 14 | 58 | 33 | .001 |
| 398 | Ziva Kunda | 217 | 67 | 14 | 56 | 31 | .001 |
| 399 | Shelley E. Taylor | 427 | 69 | 14 | 52 | 31 | .001 |
| 400 | Jon K. Maner | 1040 | 65 | 14 | 52 | 32 | .001 |
| 401 | Gabriele Oettingen | 1047 | 61 | 14 | 49 | 33 | .001 |
| 402 | Nicole L. Mead | 240 | 70 | 14 | 46 | 33 | .01 |
| 403 | Gregory M. Walton | 587 | 69 | 14 | 44 | 33 | .001 |
| 404 | Michael A. Olson | 346 | 65 | 13 | 63 | 35 | .001 |
| 405 | Fiona Lee | 221 | 67 | 13 | 58 | 34 | .001 |
| 406 | Melody M. Chao | 237 | 57 | 13 | 58 | 36 | .001 |
| 407 | Adam L. Alter | 314 | 78 | 13 | 54 | 36 | .001 |
| 408 | Sarah E. Hill | 509 | 78 | 13 | 52 | 34 | .001 |
| 409 | Jaime L. Kurtz | 91 | 55 | 13 | 38 | 37 | .001 |
| 410 | Michael A. Zarate | 120 | 52 | 13 | 31 | 36 | .001 |
| 411 | Jennifer K. Bosson | 659 | 76 | 12 | 64 | 40 | .001 |
| 412 | Daniel M. Oppenheimer | 198 | 80 | 12 | 60 | 37 | .001 |
| 413 | Deborah A. Prentice | 89 | 80 | 12 | 57 | 38 | .001 |
| 414 | Yaacov Trope | 1277 | 73 | 12 | 57 | 38 | .001 |
| 415 | Oscar Ybarra | 305 | 63 | 12 | 55 | 40 | .001 |
| 416 | William von Hippel | 398 | 65 | 12 | 48 | 40 | .001 |
| 417 | Steven J. Spencer | 541 | 67 | 12 | 44 | 38 | .001 |
| 418 | Martie G. Haselton | 186 | 73 | 11 | 54 | 43 | .001 |
| 419 | Shelly Chaiken | 360 | 74 | 11 | 52 | 44 | .001 |
| 420 | Susan M. Andersen | 361 | 74 | 11 | 48 | 43 | .001 |
| 421 | Dov Cohen | 641 | 68 | 11 | 44 | 41 | .001 |
| 422 | Mark Muraven | 496 | 52 | 11 | 44 | 41 | .001 |
| 423 | Ian McGregor | 409 | 66 | 11 | 40 | 41 | .001 |
| 424 | Hans Ijzerman | 214 | 56 | 9 | 46 | 51 | .001 |
| 425 | Linda M. Isbell | 115 | 64 | 9 | 41 | 50 | .001 |
| 426 | Cheryl J. Wakslak | 278 | 73 | 8 | 35 | 59 | .001 |

Only 801 of the listed 1260 effects were actually taken from research that I was involved in (some seem to stem from articles for which I was editor; others are a mystery to me). On the other hand, the majority of my research is missing. It seems preferable to publish data that are based on a more or less representative sample of the research actually done by the person with whom the data are associated.

Thank you for the comments. They are valuable for improving the informativeness of the z-curve analyses.

1. Only social/personality journals and general journals like Psych Science were used (I posted a list of the journals).

I will make clear which journals were used.

2. I am trying to screen out mentions of names as editor, but the program is not perfect. I will look into this and update accordingly.

3. I found a way to screen out more articles where your name appeared in footnotes (thank you).

4. I updated the results and they did improve.

5. Please check the new results.

Thank you for the quick response. Some of my research is published in psychophysiology or cognitive journals, hence I now understand why so much is missing.

I figure that research practices can vary when physiological measures are taken or in cognitive studies with within-subject designs. I will eventually do similar posts for other areas.

I’m dismayed (and aghast) to see that I’m almost at the bottom of this list. Any advice on how to investigate this further to see where the problem lies?

Thank you for your comment.

You can download a file called “William von Hippel-rindex.csv”.

It contains all the articles that were used and computes the R-Index for each article based on the z-scores found in it. The R-Index is a simple way to estimate replicability that works for small sets of test statistics. An R-Index of 50 would suggest that the replicability is about 50%. The EDR would be lower, but it is hard to estimate with a small set of test statistics. The file is sorted by the R-Index. Articles with an R-Index below 50 are probably not robust. This is a good way to start diagnosing the problem.
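For readers who want to check a single article by hand, here is a minimal Python sketch of the R-Index as it is commonly described in Schimmack's earlier work: median observed power minus inflation, where inflation is the success rate minus median observed power. This is an assumption, not the code that produced the CSV file; it uses a two-tailed alpha of .05, ignores the negligible opposite tail when computing observed power, and the function names `observed_power` and `r_index` are hypothetical.

```python
from statistics import NormalDist, median
from typing import Sequence

# Critical z for a two-tailed test with alpha = .05 (about 1.96).
Z_CRIT = NormalDist().inv_cdf(1 - 0.05 / 2)


def observed_power(z: float) -> float:
    """Post-hoc power for an observed |z| (assumption: opposite tail ignored)."""
    return NormalDist().cdf(abs(z) - Z_CRIT)


def r_index(z_scores: Sequence[float]) -> float:
    """Hypothetical helper: R-Index = median observed power - inflation,
    where inflation = success rate - median observed power."""
    if not z_scores:
        raise ValueError("need at least one test statistic")
    mop = median(observed_power(z) for z in z_scores)
    # Success rate: share of results significant at alpha = .05.
    success_rate = sum(abs(z) > Z_CRIT for z in z_scores) / len(z_scores)
    inflation = success_rate - mop
    return mop - inflation


# Four results from one hypothetical article: three significant, one not.
print(round(r_index([2.1, 2.5, 3.0, 1.5]), 2))  # prints 0.51
```

The sketch returns a proportion, so multiply by 100 to compare it with percentage values such as the benchmark of 50 mentioned above. With only a handful of z-scores the median is unstable, which is one reason the EDR is not estimated at the article level.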

Hi Uli, that’s very helpful – thanks!

But now I’m confused. To start with the worst offenders on my list, I have four papers with an R-Index of 0. I can’t tell what two of them are, as your identifier doesn’t include the article title or authors, but two of them are clear. The first of those two has large samples, reports a wide variety of large and small correlations, and strikes me as highly replicable. Indeed, study 2 (N=466) is a direct replication of study 1 (N=196) with an even larger sample. Study 3 goes in a slightly different direction, but mostly relies on the data from Study 2. The other paper reports large samples (Ns = 200) but small effects. We submitted it with only one study, the editor asked for replication, we ran a direct replication with the same sample size and found the same effect. Those are both in the paper. Since then we’ve tried to replicate it once and have succeeded (that finding isn’t yet published).

That’s the first issue, and strikes me as the most important. Secondarily, there are at least four or five papers in this list that aren’t my own – perhaps more but it’s hard to tell what some of the papers are – and the resultant list of papers is only about 1/3 of my empirical publications. Thus, setting aside the most important issue above, I don’t have a clear sense of what my actual replicability score would look like with all of my papers.

All the best, Bill

Please check the number of results. Many papers with an R-Index of 0 have only one result, which is often just a missing value, meaning that no results were found. So, you can ignore those.

I also made clear which journals were searched for these articles. Please see the list on the blog post.

I would also be happy to run an analysis on all of your articles if you send me the PDFs.

There are numerous correlations reported in both papers, along with various mediational analyses in one of them, so definitely not a single result.

With regard to the second issue, the file lists the journal title and year, but that’s it. Sometimes I haven’t published in that journal in that year, so I know it’s not me. Sometimes I have, but in this particular case the only paper I published in that journal in that year, which is another one of the R = 0 examples, includes a sample in the millions and a multiverse analysis. There’s no chance that it could have a replicability index of 0.

Thanks Uli, very kind of you to offer to run the analysis for me. I’ve created a Dropbox folder with all of my empirical articles in it and shared it with you. Let me know if that doesn’t come through. Best, Bill

Hi Uli,

I am surprised that the work you are analyzing for my index contains only 36 entries (when Web of Science retrieves 155). Two of the entries you list are not mine.

Please make sure you include all empirical publications for an author, which in my case span a variety of areas, methods, and journals. Excluding the papers that are not mine would also be methodologically sound and make your own work more authoritative before you disseminate it.

Thanks,

Dolores

The method uses a sampling approach. It is based on the journals that are tracked in the replicability rankings of 120 psychology journals, although it may expand as the rankings expand. The list of 120 journals includes the major social psychology journals. So, it is possible that your results might differ for other areas, but as this list focuses on social psychology, it also makes sense to focus on these journals.

If you point out the two articles that are not yours, I will exclude them.

P.S. We all learned after 2011 that the way we collected and analyzed data was wrong and resulted in inflated estimates of replicability and effect sizes. These results mainly reflect this. How have your research practices changed over the past 10 years in response to the replication crisis?

There are two articles of which I am not an author. They are not easy to find in the very small sample you have. I have to find your data again to point them out, but they are pretty obvious from the authors and should be checked. Thanks.

It is not that easy. I would have to open all 36 articles to check the full author list. As you already did the work, it would be nice to share the information with me so that I can remove them.

These two are not mine, Uli:

entry 26, The impression management of intelligence

entry 5, US southern and northern differences in perception

You should add an index of how much of an author’s work you’re tracking. It is likely not a valid representation of the author per se unless you select papers at random from the person’s record.

The replication crisis was fully established toward the mid-2010s, right? So the last 10 years do not reflect the changes made in response to it.

I found out why those two studies were included and ran the search again. In the main results, the ODR changed from 67 to 66 percent and the EDR from 19 to 21 percent.
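To see what a change in the EDR means for the risk of false discoveries, the sketch below computes the observed discovery rate and the maximum false discovery rate. It assumes the bound used with z-curve (Soric's upper bound), FDRmax = (1/EDR - 1) × alpha/(1 - alpha), with alpha = .05; the function names are hypothetical, and the EDR itself has to come from a z-curve fit, which is not reproduced here.

```python
ALPHA = 0.05


def observed_discovery_rate(n_significant: int, n_tests: int) -> float:
    """Share of reported tests that are significant at ALPHA."""
    return n_significant / n_tests


def max_false_discovery_rate(edr: float, alpha: float = ALPHA) -> float:
    """Soric's upper bound on the false discovery rate for a given EDR."""
    if not 0 < edr <= 1:
        raise ValueError("EDR must be in (0, 1]")
    return (1 / edr - 1) * alpha / (1 - alpha)


# Example from the introduction: 246 of 317 tests significant, EDR = .79.
print(f"{observed_discovery_rate(246, 317):.2f}")  # 0.78
print(f"{max_false_discovery_rate(0.79):.2f}")     # 0.01

# EDR before and after removing the two misattributed articles.
for edr in (0.19, 0.21):
    print(f"EDR {edr:.2f} -> max FDR {max_false_discovery_rate(edr):.2f}")
```

Under this bound, the EDR increase from 19 to 21 percent lowers the worst-case false discovery rate only from about 22% to about 20%, so the correction barely changes the overall picture.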

Not all of your other publications are original studies (e.g., meta-analyses). Others are from years or journals that are not covered. If you send me a folder with PDF files of these articles, I am happy to include them.

Thanks, Uli. I will get you the PDFs. Meta-analyses are still quantitative research, so you should find a way of including them. The statistics are all comparable and power matters too.

Meta-analyses are different from original articles in important ways.

1. Authors have no influence on the quality of the studies they meta-analyze.

2. The focus is on effect size estimation and not on hypothesis testing.

Thus, it makes no sense to include them in an investigation of the robustness of original research.

Well, they don’t control the quality, but they can select for quality, and they definitely have statistical power considerations. In many cases, the focus IS actually on hypothesis testing and testing new hypotheses, and the method does signal an interest in reproducibility for sure.

I am not saying that meta-analyses are not important or cannot be evaluated, but my method cannot do this. This also means that the results here are not an overall evaluation of a researcher, which no single index (including the H-Index) can provide.