Psychological Science is the flagship journal of the Association for Psychological Science (APS). In response to the replication crisis, D. Stephen Lindsay worked hard to increase the credibility of results published in this journal as editor from 2014-2019 (Schimmack, 2020). This work paid off and meta-scientific evidence shows that publication bias decreased and replicability increased (Schimmack, 2020). In the replicability rankings, Psychological Science is one of a few journals that show reliable improvement over the past decade (Schimmack, 2020).

This year, Patricia J. Bauer took over as editor. Some meta-psychologists were concerned that replicability might be less of a priority because she did not embrace initiatives like preregistration ("New Psychological Science Editor Plans to Further Expand the Journal’s Reach").

The good news is that these concerns were unfounded. The meta-scientific criteria of credibility did not change notably from 2019 to 2020.

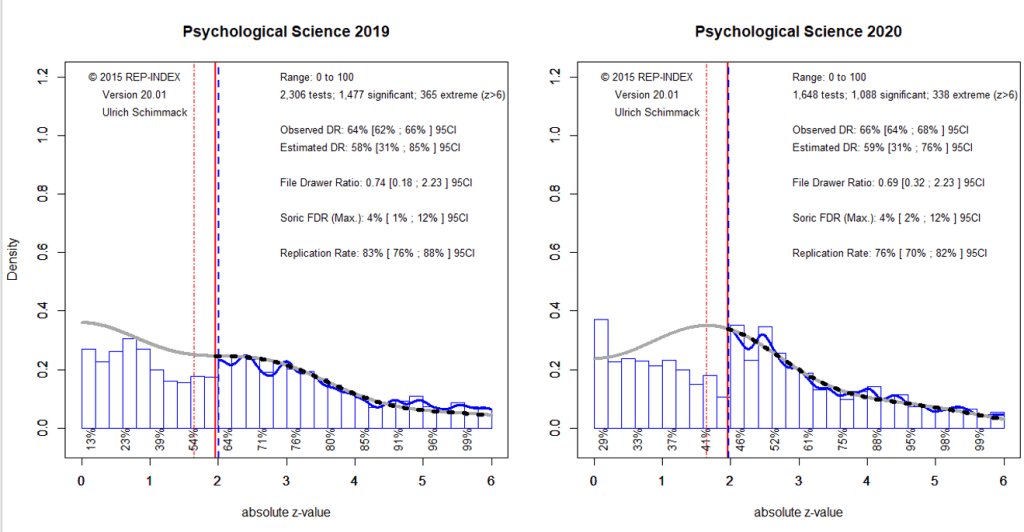

The observed discovery rates (ODR) were 64% in 2019 and 66% in 2020. The estimated discovery rates (EDR) were 58% in 2019 and 59% in 2020. Visual inspection of the z-curves and the slightly higher ODR than EDR suggest that there is still some selection for significant results. That is, researchers use so-called questionable research practices to produce statistically significant results. However, the magnitude of these questionable research practices is small and much lower than in 2010 (ODR = 77%, EDR = 38%).

Based on the EDR, it is possible to estimate the maximum false discovery rate (i.e., the maximum percentage of significant results for which the null hypothesis is true). This rate is low, at 4% in both years. Even the upper limit of the 95% CI is only 12%. This contradicts the widespread concern that most published (significant) results are false (Ioannidis, 2005).
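To make the step from the EDR to the maximum false discovery rate concrete, here is a minimal sketch. It assumes the bound is Sorić's (1989) formula, FDR_max = (1/EDR - 1) × α/(1 - α), with α = .05. The function name is made up for illustration and is not taken from the z-curve software.

```python
def max_false_discovery_rate(edr: float, alpha: float = 0.05) -> float:
    """Soric's upper bound on the false discovery rate for a given discovery rate.

    edr   : estimated discovery rate as a proportion (e.g., 0.58 for 58%)
    alpha : significance criterion (assumed to be .05 here)
    """
    if not 0 < edr <= 1:
        raise ValueError("edr must be a proportion in (0, 1]")
    return (1 / edr - 1) * alpha / (1 - alpha)


# EDR point estimates reported in the post
for year, edr in [(2010, 0.38), (2019, 0.58), (2020, 0.59)]:
    print(f"{year}: EDR = {edr:.0%}, maximum FDR = {max_false_discovery_rate(edr):.1%}")
```

Under these assumptions, the EDRs of 58% and 59% both round to a maximum false discovery rate of 4%, whereas the 2010 EDR of 38% gives about 9%.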

The expected replication rate is slightly lower in 2020 than in 2019 (76% vs. 83%), but the difference is not significant (i.e., it could be just sampling error). Given the small risk of a false positive result, this means that on average significant results were obtained with the recommended power of 80% (Cohen, 1988).

Overall, these results suggest that published results in Psychological Science are credible and replicable. However, this positive evaluation comes with a few caveats.

First, null-hypothesis significance testing can only provide information that there is an effect and the direction of the effect. It cannot provide information about the effect size. Moreover, it is not possible to use the point estimates of effect sizes in small samples to draw inferences about the actual population effect size. Often the 95% confidence interval will include small effect sizes that may have no practical significance. Readers should carefully evaluate the lower limit of the 95% CI to examine whether a practically significant effect was demonstrated.

Second, the replicability estimate of 80% is an average. The average power of results that are just significant is lower. The local power estimates below the x-axis suggest that results with z-scores between 2 and 3 (roughly .05 > p > .005) have only 50% power. Follow-up studies of such results should therefore use larger samples.
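The link between z-scores, p-values, and the required increase in sample size can be sketched as follows. This is an illustration under stated assumptions, not the z-curve method: it assumes a two-sided z-test with α = .05, a noncentrality parameter that grows with the square root of the sample size, and a true power of exactly 50% for the original study. All function names are hypothetical.

```python
from statistics import NormalDist

STD_NORMAL = NormalDist()  # standard normal distribution


def two_sided_p(z: float) -> float:
    """Two-sided p-value for an observed z-score."""
    return 2 * (1 - STD_NORMAL.cdf(abs(z)))


def power_two_sided(ncp: float, alpha: float = 0.05) -> float:
    """Power of a two-sided z-test when the expected z-score (noncentrality) is ncp."""
    crit = STD_NORMAL.inv_cdf(1 - alpha / 2)
    return (1 - STD_NORMAL.cdf(crit - ncp)) + STD_NORMAL.cdf(-crit - ncp)


def sample_size_multiplier(current_power: float, target_power: float,
                           alpha: float = 0.05) -> float:
    """Factor by which n must grow to move from current_power to target_power.

    Ignores the negligible probability of significance in the wrong direction
    and assumes the noncentrality scales with sqrt(n).
    """
    crit = STD_NORMAL.inv_cdf(1 - alpha / 2)
    ncp_now = crit + STD_NORMAL.inv_cdf(current_power)
    ncp_target = crit + STD_NORMAL.inv_cdf(target_power)
    return (ncp_target / ncp_now) ** 2


print(f"p at z = 1.96: {two_sided_p(1.96):.4f}")
print(f"p at z = 2.81: {two_sided_p(2.81):.4f}")
print(f"power when expected z = 1.96: {power_two_sided(1.96):.2f}")
print(f"n multiplier, 50% -> 80% power: {sample_size_multiplier(0.5, 0.8):.2f}")
```

Under these assumptions, moving from 50% to 80% power requires roughly doubling the sample size.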

Third, the local power estimates also show that most non-significant results are false negatives (type-II errors). Z-scores between 1 and 2 are estimated to have 40% average power. It is unclear how often articles falsely infer that an effect does not exist or can be ignored because the test was not significant. Often sampling error alone is sufficient to explain differences between test statistics in the range from 1 to 2 and from 2 to 3.
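The point about sampling error can be illustrated with a simple comparison of two z-scores. The sketch below assumes two independent tests whose z-scores each have a sampling standard deviation of 1, so that their difference has a standard deviation of √2. The example values of 1.5 and 2.5 are made up for illustration.

```python
from math import sqrt
from statistics import NormalDist


def p_value_for_difference(z1: float, z2: float) -> float:
    """Two-sided p-value for the difference between two independent z-scores."""
    z_diff = (z1 - z2) / sqrt(2)  # difference of two unit-variance z-scores
    return 2 * (1 - NormalDist().cdf(abs(z_diff)))


# One non-significant result (z = 1.5) and one significant result (z = 2.5)
print(f"p for the difference: {p_value_for_difference(1.5, 2.5):.2f}")
```

In this example the p-value for the difference is about .48, so a significant and a non-significant result can easily come from studies of the same effect.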

Finally, 80% power is sufficient for a single focal test. However, with 80% power, multiple focal tests are likely to produce at least one non-significant result. If all focal tests are significant, there is a concern that questionable research practices were used (Schimmack, 2012).
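The arithmetic behind this caveat is easy to check. The sketch below assumes that the focal tests are independent and that each has exactly 80% power; the function name is hypothetical.

```python
def prob_all_significant(power: float, n_tests: int) -> float:
    """Probability that every one of n_tests independent tests is significant."""
    if not 0 <= power <= 1 or n_tests < 1:
        raise ValueError("power must be in [0, 1] and n_tests must be at least 1")
    return power ** n_tests


for k in range(1, 6):
    all_sig = prob_all_significant(0.80, k)
    print(f"{k} focal test(s): P(all significant) = {all_sig:.0%}, "
          f"P(at least one non-significant) = {1 - all_sig:.0%}")
```

With 80% power, the chance that all focal tests are significant falls to about 51% with three tests and about 33% with five, which is why an unbroken string of significant results raises suspicion.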

Readers should also carefully examine the results of individual articles. The present results are based on automatic extraction of all statistical tests. If focal tests have only p-values in the range between .05 and .005, the results are less credible than if at least some p-values are below .005 (Schimmack, 2020).

In conclusion, Psychological Science has responded to concerns about a high rate of false positive results by increasing statistical power and reducing publication bias. This positive trend continued in 2020 under the leadership of the new editor Patricia Bauer.

That’s encouraging. Not to throw any shade on Patricia Bauer (whom I respect and admire), I think that many of the articles that appeared in Psychological Science in 2020 were submitted during my term as editor. My impression is that few mss go from initial submission to publication in less than a year. That said, I expect we will see continued improvement in the 2021 volume.

Steve

I was wondering what percentage of articles was edited by the new team. In any case, we are seeing some persistent improvement and you played a big part in it. Happy New Year.