Many sciences, including psychology, rely on statistical significance to draw inferences from data. A widely accepted practice is to consider results with a p-value less than .05 as evidence that an effect occurred.

Hundreds of articles have discussed the problems of this approach, but few have offered attractive alternatives. As a result, very little has changed in the way results are interpreted and published in 2020.

Even if this were to change suddenly, researchers would still have to decide what to do with the results that have been published so far. At present there are only two options: either trust all results and hope for the best, or assume that most published results are false and start from scratch. Neither trusting everything nor trusting nothing is very attractive. Ideally, we would want a method that can separate more credible findings from less credible ones.

One solution to this problem comes from molecular genetics. When it became possible to measure genetic variation across individuals, geneticists started correlating single variants with phenotypes (e.g., the serotonin transporter gene variation and neuroticism). These studies used the standard approach of declaring results with p-values below .05 to be discoveries. Actual replication studies showed that many of these results could not be replicated. In response to these replication failures, the field moved towards genome-wide association studies that tested many genetic variants simultaneously. This further increased the risk of false discoveries. To avoid this problem, geneticists lowered the criterion for a significant finding. This criterion was not picked arbitrarily. Rather, it was determined by estimating the false discovery rate or false discovery risk. The classic article that recommended this approach has been cited over 40,000 times (Benjamini & Hochberg, 1995).
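To make the idea of an FDR-based criterion concrete, here is a minimal sketch of the step-up procedure from the cited 1995 article. The function name and the example p-values are made up for illustration, and this is not meant as a description of what any particular genetics study did.

```python
def benjamini_hochberg(p_values, q=0.05):
    """Mark which p-values count as discoveries at false discovery rate q.

    Step-up rule: sort the m p-values, find the largest rank k with
    p_(k) <= (k / m) * q, and reject the k smallest p-values.
    """
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])

    # Largest rank whose p-value falls under its rank-specific threshold.
    cutoff_rank = 0
    for rank, idx in enumerate(order, start=1):
        if p_values[idx] <= rank / m * q:
            cutoff_rank = rank

    rejected = [False] * m
    for rank, idx in enumerate(order, start=1):
        if rank <= cutoff_rank:
            rejected[idx] = True
    return rejected


# Hypothetical p-values: five are below .05, but only two survive the correction.
example_p = [0.001, 0.008, 0.039, 0.041, 0.042, 0.060, 0.074, 0.205]
discoveries = benjamini_hochberg(example_p, q=0.05)
print(discoveries)
```

In this toy example, the effective criterion ends up well below .05, which is the same logic as lowering alpha for a whole literature.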

In genetics, a single study produces thousands of p-values that require a correction for multiple comparisons. Studies in other disciplines usually produce a much smaller number of p-values (typically fewer than 100). However, an entire scientific field also generates thousands of p-values. This makes it necessary to control for multiple comparisons and to lower the significance criterion from the nominal value of .05 to maintain a reasonably low false discovery rate.

The main difference between original studies in genomics and meta-analyses of studies in other fields is that, in the latter, publication bias can inflate the percentage of significant results. This leads to biased estimates of the actual false discovery rate (Schimmack, 2020).

One solution to this problem is to use selection models that take publication bias into account. Jager and Leek (2014) used this approach to estimate the false discovery rate in medical journals for statistically significant results, p < .05. In response to this article, Goodman (2014) suggested asking a different question.

What significance criterion would ensure a false discovery rate of 5%?

Although this is a useful question, selection models have not been used to answer it. Instead, recommendations for adjusting alpha have been based on ad-hoc assumptions about the number of true hypotheses that are being tested and the power of studies.

For example, the false positive rate is greater than 33% with prior odds of 1:10 and a P value threshold of 0.05, regardless of the level of statistical power. Reducing the threshold to 0.005 would reduce this minimum false positive rate to 5% (D. J. Benjamin et al., 2017, p. 7).
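The arithmetic behind the quoted numbers can be checked with a short sketch. It assumes the usual definition of the false positive rate among significant results (false positives divided by all significant results) and reads "prior odds of 1:10" as one true hypothesis for every ten null hypotheses; the function name is hypothetical.

```python
def false_positive_rate(alpha, power, prior_odds_true):
    """Share of significant results that are false positives.

    prior_odds_true is the odds of a true effect relative to a true null,
    e.g. 1/10 for prior odds of 1:10 (an assumption about the literature).
    """
    prior_true = prior_odds_true / (1 + prior_odds_true)
    prior_null = 1 - prior_true
    false_positives = alpha * prior_null
    true_positives = power * prior_true
    return false_positives / (false_positives + true_positives)


# The best case is perfect power (power = 1), which gives the minimum rate.
floor_05 = false_positive_rate(alpha=0.05, power=1.0, prior_odds_true=1 / 10)
floor_005 = false_positive_rate(alpha=0.005, power=1.0, prior_odds_true=1 / 10)
print(f"alpha = .05:  minimum false positive rate = {floor_05:.1%}")
print(f"alpha = .005: minimum false positive rate = {floor_005:.1%}")
```

Any power below 1 makes the rate higher, which is why 33% is a floor "regardless of the level of statistical power". The result depends entirely on the assumed prior odds, which is the weakness of this approach.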

Rather than relying on assumptions, it is possible to estimate the maximum false discovery rate based on the distribution of statistically significant p-values (Bartos & Schimmack, 2020).

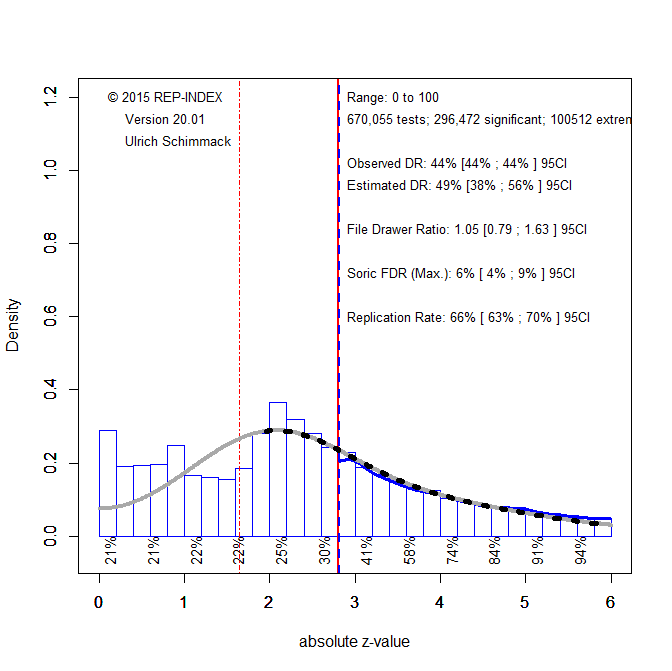

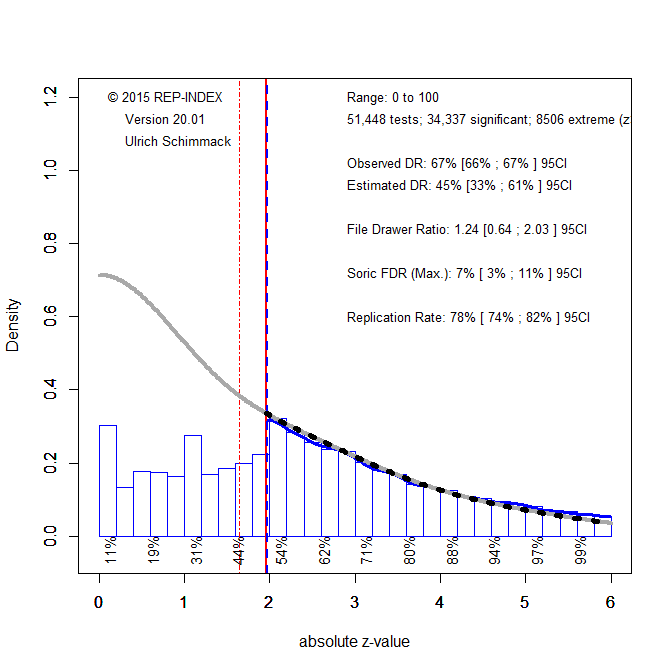

Here, I illustrate this approach with p-values from 120 psychology journals for articles published between 2010 and 2019. An automated extraction of test-statistics found 670,055 useable test-statistics. All test-statistics were converted into absolute z-scores that reflect the amount of evidence against the null-hypothesis.
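As a rough illustration of the conversion step, the sketch below maps a two-sided p-value to an absolute z-score with Python's standard library. It assumes that each test statistic has already been turned into a two-sided p-value; the function name is hypothetical and this is not the extraction pipeline used for the data above.

```python
from statistics import NormalDist


def p_to_abs_z(p_two_sided):
    """Absolute z-score whose two-sided p-value equals p_two_sided."""
    if not 0 < p_two_sided <= 1:
        raise ValueError("p-value must be in (0, 1]")
    # Half of the two-sided p-value sits in the lower tail of the normal curve.
    return abs(NormalDist().inv_cdf(p_two_sided / 2))


for p in (0.05, 0.005, 0.0005):
    print(f"p = {p}: |z| = {p_to_abs_z(p):.2f}")
```

The three values correspond to the significance criteria discussed in this post: about 1.96 for p = .05, about 2.81 for p = .005, and about 3.48 for p = .0005.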

Figure 1 shows the distribution of the absolute z-scores. The first notable observation is the drop (from right to left) in the distribution right at the standard level for statistical significance, p < .05 (two-tailed), which corresponds to a z-score of 1.96. This drop reveals publication bias. The amount of bias is reflected in a comparison of the observed discovery rate and the estimated discovery rate. The observed discovery rate of 67% is simply the percentage of p-values below .05. The estimated discovery rate is the percentage of significant results based on the z-curve model that is fitted to the significant results (grey curve). The estimated discovery rate is only 38% and the 95% confidence interval around this estimate, 32% to 49%, does not include the observed discovery rate. This shows that significant results are more likely to be reported and that non-significant results are missing from published articles.

If we used the observed discovery rate of 67%, we would underestimate the risk of false positive results. Using Soric’s (1989) formula,

FDR = (1/DR – 1)*(.05/.95)

a discovery rate of 67% implies a maximum false discovery rate of 3%. Thus, no adjustment to the significance criterion would be needed to maintain a false discovery rate below 5%.

However, publication bias is present and inflates the discovery rate. To adjust for this, we can use the estimated discovery rate of 38% and get a maximum false discovery rate of 9%. As this value exceeds the desired level of 5%, we need to lower alpha to reduce the false discovery rate.
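The two calculations can be reproduced with a few lines of code. This is a minimal sketch of Soric's formula as written above, with alpha = .05; the function name is my own.

```python
def soric_max_fdr(discovery_rate, alpha=0.05):
    """Maximum false discovery rate implied by a discovery rate (Soric, 1989).

    Implements FDR = (1/DR - 1) * (alpha / (1 - alpha)).
    """
    if not 0 < discovery_rate <= 1:
        raise ValueError("discovery_rate must be in (0, 1]")
    return (1 / discovery_rate - 1) * (alpha / (1 - alpha))


observed = soric_max_fdr(0.67)   # observed discovery rate
estimated = soric_max_fdr(0.38)  # estimated discovery rate from z-curve
print(f"ODR 67% -> maximum FDR {observed:.0%}")
print(f"EDR 38% -> maximum FDR {estimated:.0%}")
```

The gap between the two results shows how much publication bias can hide the false discovery risk.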

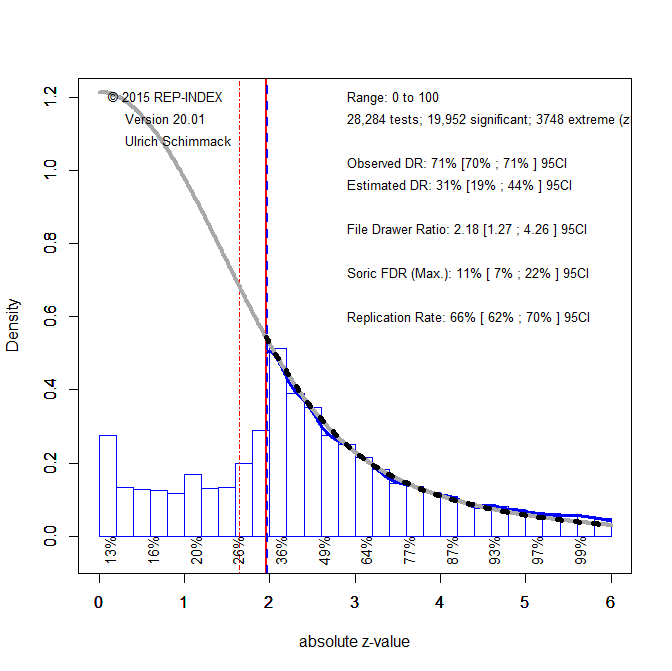

Figure 2 shows the results when alpha is set to .005 (z = 2.80) as recommended by Benjamin et al. (2017). The model is only fitted to data that are significant with this new criterion. We now see that the observed discovery rate (44%) is even lower than the estimated discovery rate (49%), although the difference is not significant. Thus, there is no evidence of publication bias with this new criterion for significance. The reason is that many questionable practices that are used to report significant results produce just significant results. This is seen in the excess of just significant results between z = 2 and z = 2.8. These results no longer inflate the discovery rate because they are no longer counted as discoveries. We also see that the estimated discovery rate produces a maximum false discovery rate of 6%, which may be close enough to the desired level of 5%.

Another piece of useful information is the estimated replication rate (ERR). This is the average power of results that are significant with p < .005 as the criterion. Although lowering the alpha level decreases power, the average power of 66% suggests that many results should replicate successfully in exact replication studies with the same sample size. Increasing sample sizes could help to achieve 80% power.

In conclusion, we can use the distribution of p-values in the psychological literature to evaluate published findings. Based on the present results, readers of published articles could use p < .005 (rule of thumb: z > 2.8, t > 3, chi-square > 9, or F > 9) to evaluate statistical evidence.
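For readers who want to check the rule of thumb, the sketch below converts a z-score into a two-sided p-value using the standard library. The function name is hypothetical. The comparison for chi-square relies on the fact that a chi-square statistic with one degree of freedom is a squared z-score, so chi-square > 9 corresponds to |z| > 3; for other degrees of freedom the cutoffs are only approximate.

```python
from statistics import NormalDist


def two_sided_p_from_z(z):
    """Two-sided p-value for a z-score under the standard normal distribution."""
    return 2 * (1 - NormalDist().cdf(abs(z)))


p_at_2_8 = two_sided_p_from_z(2.8)  # close to the .005 criterion
p_at_3 = two_sided_p_from_z(3.0)    # z = 3 is the same as chi-square(1) = 9
print(f"z = 2.8 -> p = {p_at_2_8:.4f}")
print(f"z = 3.0 -> p = {p_at_3:.4f}")
```

The cutoff of 3 (or 9 for squared statistics) is therefore somewhat stricter than p < .005, which is a safe direction for a rule of thumb.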

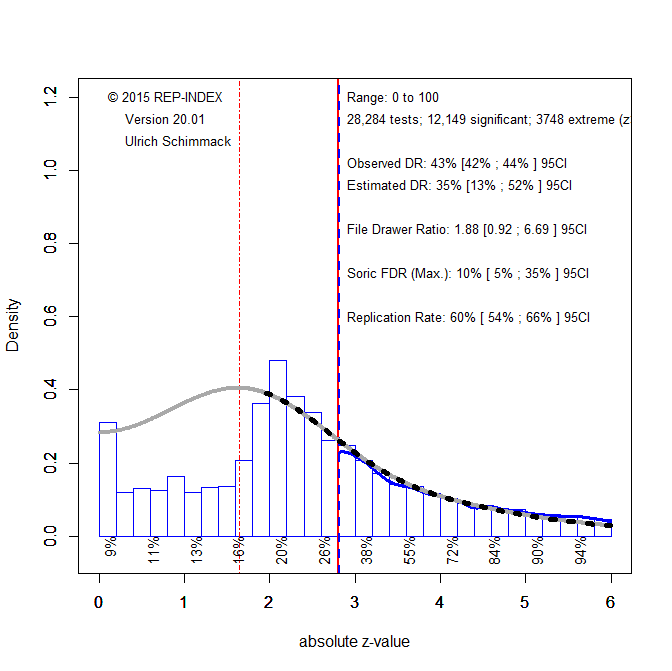

The empirical approach to justify alpha with FDRs has the advantage that it can be adjusted for different literatures. This is illustrated with the Attitudes and Social Cognition section of JPSP. Social cognition research has experienced a replication crisis due to massive use of questionable research practices. It is possible that even alpha = .005 is too liberal for this research area.

Figure 3 shows the results for test statistics published in JPSP-ASC from 2000 to 2020.

There is clear evidence of publication bias (ODR = 71%, EDR = 31%). Based on the EDR of 31%, the maximum false discovery rate is 11%, well above the desired level of 5%. Even the 95%CI around the FDR does not include 5%. Thus, it is necessary to lower the alpha criterion.
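It can help to turn Soric's formula around and ask which discovery rate is needed to keep the maximum false discovery rate at 5%. The sketch below solves FDR = (1/DR - 1) * (alpha / (1 - alpha)) for DR, with alpha = .05 as in the formula above; the function names are my own.

```python
def soric_max_fdr(discovery_rate, alpha=0.05):
    """Maximum false discovery rate implied by a discovery rate (Soric, 1989)."""
    return (1 / discovery_rate - 1) * (alpha / (1 - alpha))


def min_discovery_rate(target_fdr, alpha=0.05):
    """Smallest discovery rate that keeps Soric's maximum FDR at target_fdr.

    Obtained by solving Soric's formula for the discovery rate:
    DR = 1 / (1 + target_fdr * (1 - alpha) / alpha).
    """
    return 1 / (1 + target_fdr * (1 - alpha) / alpha)


needed = min_discovery_rate(0.05)
print(f"Discovery rate needed for a maximum FDR of 5%: {needed:.1%}")
print(f"Check: maximum FDR at that rate = {soric_max_fdr(needed):.1%}")
```

Under these assumptions, a discovery rate of about 51% is needed, so an EDR of 31% falls well short.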

Using p = .005 as the criterion improves things, but not fully. First, a comparison of the ODR and EDR, 43% vs. 35%, suggests that publication bias was not fully removed. Second, the EDR of 35% still implies a maximum FDR of 10%. The 95%CI now touches 5%, but its upper limit is 35%. Thus, even with p = .005, the social cognition literature is not credible.

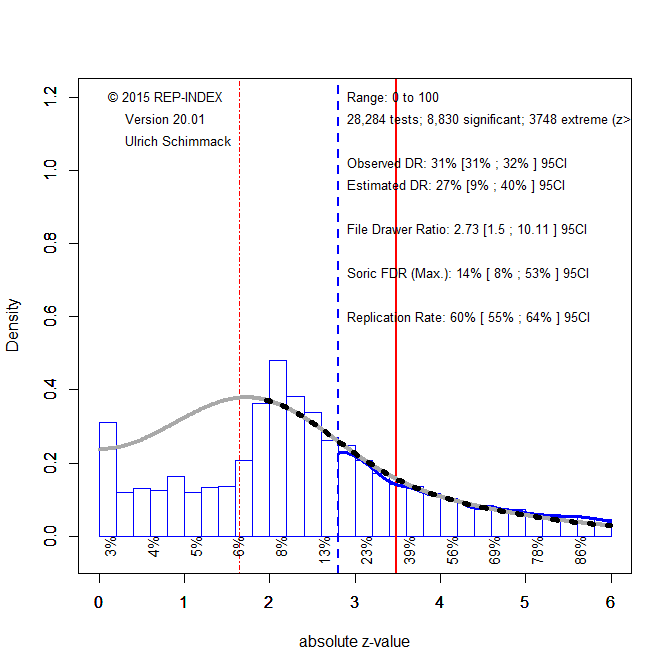

Lowering the criterion further does not solve this problem. The reason is that there are now so few significant results that the discovery rate remains low. This is shown in the next figure where the criterion is set to p < .0005 (z = 3.5). The model cannot be fitted to z-scores so extreme because there is insufficient information about lower power studies. Thus, the model was fitted to z-scores greater than 2.8 (p < .005). In this scenario, the expected discovery rate is 27%, which implies a maximum false discovery rate of 14%, and the 95%CI still does not include 5%.

These results illustrate the problem of conducting many studies with low power. The false discovery risk remains high because there are only a few test statistics with extreme values and a few extreme test statistics are expected by chance.

In short, setting alpha to .005 is still too liberal for this research area. Given the ample replication failures in social cognition research, most results cannot be trusted. This conclusion is also consistent with the actual replication rate in the Open Science Collaboration (2015) project, which could replicate only 7 of 31 (23%) results. With a discovery rate of 23%, the maximum false discovery rate is 18%. This is still way below Ioannidis’s claim that most published results are false positives, but it is also well above 5%.

Different results are expected for the Journal of Experimental Psychology: Learning, Memory, and Cognition (JEP-LMC). Here the OSC project was able to replicate 13 of 27 (48%) results. A discovery rate of 48% implies a maximum false discovery rate of 6%. Thus, no adjustment to the alpha level may be needed for this journal.

Figure 6 shows the results for the z-curve analysis of test statistics published from 2000 to 2020. There is evidence of publication bias. The ODR of 67% is outside the 95%CI of the EDR 45%, 95%CI = . However, with an EDR of 45%, the maximum FDR is 7%. This is close to the estimate based on the OSC results and close to the desired level of 5%.

For this journal it was sufficient to set the alpha criterion to p < .03. This produced a fairly close match between the ODR (61%) and EDR (58%) and a maximum FDR of 4%.

Conclusion

Significance testing was introduced by Fisher, 100 years ago. He would recognize the way scientists analyze their data because not much has changed. Over the past 100 years, many statisticians and practitioners have pointed out problems with this approach, but no practical alternatives have been offered. Adjusting the significance criterion depending on the research question is one reasonable modification, but often requires more a priori knowledge than researchers have (Lakens et al., 2018). Lowering alpha makes sense when there is a concern about too many false positive results, but can be a costly mistake when false positive results are fewer than feared (Benjamin et al., 2017). Here I presented a solution to this problem. It is possible to use the maximum false-discovery rate to pick alpha so that the percentage of false discoveries is kept at a reasonable minimum.

Even if this recommendation does not influence the behavior of scientists or the practices of journals, it can be helpful to compute alpha values that ensure a low false discovery rate. At present, consumers of scientific research (mostly other scientists) are used to treating all significant results with p-values less than .05 as discoveries. Literature reviews mention studies with p = .04 as if they have the same status as studies with p = .000001. Once a p-value crosses the magic .05 level, it becomes a solid fact. This is wrong because statistical significance alone does not ensure that a finding is a true positive. To avoid this fallacy, consumers of research can make their own adjustment to the alpha level. Readers of JEP-LMC may use .05 or .03 because this alpha level is sufficient. Readers of JPSP-ASC may lower alpha to .001.

Once readers demand stronger evidence from journals that publish weak evidence, researchers may actually change their practices. As long as consumers buy every p-value less than .05, there is little incentive for producers of p-values to try harder to produce stronger evidence, but when consumers demand p-values below .005, supply will follow. Unfortunately, consumers have been gullible and it was easy to sell them results that do not replicate with a p < .05 warranty because they had no rational way to decide which p-values they should trust. Maintaining a reasonably low false discovery rate has proved useful in genomics; it may also prove useful for other sciences.
