This response to Guest and van Rooij’s article was written in collaboration with ChatGPT. I asked ChatGPT critical questions and probed the article’s arguments in critical discussions. I even asked ChatGPT to play devil’s advocate and defend Guest and van Rooij’s claims against my counterarguments. ChatGPT summarized the results of these discussions, and I read and lightly edited the summary. I take full responsibility for the content of this blog post.

Critical Artificial Intelligence Literacy for Psychologists (PsyArXiv Preprints)

Why I Embrace AI

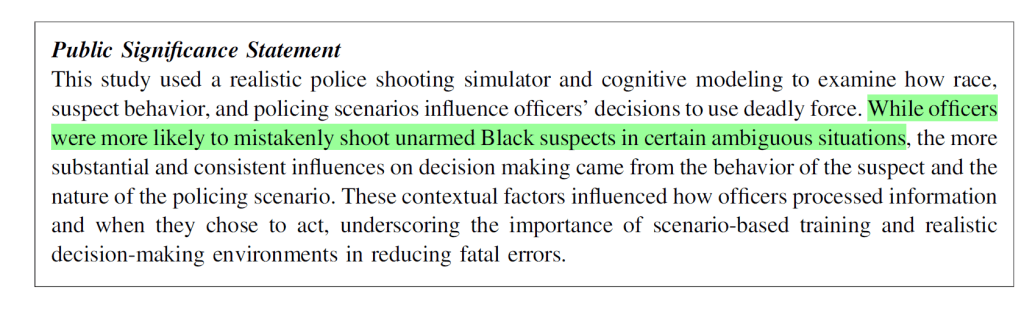

Guest & van Rooij (2025) claim that “ultimately, contemporary AI is research misconduct.” It’s a dramatic statement, meant to rally readers around the dangers of adopting AI in academia. Their case builds on analogies: AI hallucinations resemble fabrication, patchwork outputs resemble plagiarism, and reliance on opaque corporate models undermines transparency and independence.

I agree with their starting point: uncritical use of AI is a problem. But here’s the rub: uncritical use of anything in science is a problem. Uncritical use of statistics leads to false positives. Uncritical use of the peer-review process perpetuates bias. Uncritical acceptance of published articles spreads errors.

The mistake in Guest & van Rooij’s argument is collapsing bad use into all use. That move is rhetorically powerful, but analytically sloppy. Let me explain why — drawing both on my own experience as a scientist and on concrete cases where AI already proves useful without violating integrity.

My Experience With Technological Change in Psychology

I came of age as a researcher in the 1990s. Back then, psychology was only just beginning to use computers:

- Writing: We still typed on typewriters in Germany. Writing on computers was new.

- Literature searches: Most library searches were done with card catalogs, unless you visited a top U.S. university that had computerized catalogs.

- Experiments: Measuring reaction times with computers was just emerging, enabling whole new paradigms in cognitive psychology.

- Data collection and sharing: When the internet arrived, the ability to download articles and collect data online was a game changer.

Each of these technologies improved my own life and productivity, as well as the work of my colleagues. Some people worry that using AI is fundamentally different from using a word processor that corrects spelling and grammar. Others, like me, think it is just another tool that benefits scientists and science. Who is right?

The Real Difference: Critical vs. Uncritical Use

The key distinction the paper refuses to make is between uncritical and critical use.

- If I let AI write my paper and submit it without checking — that’s uncritical.

- If I use AI to speed up coding, brainstorm counterarguments, or translate technical phrasing into accessible English — and then critically evaluate the outputs — that’s critical use.

The difference is obvious, but Guest & van Rooij collapse them together. That leads to sweeping claims that don’t hold up.

Concrete Examples of Good Use

Here are cases from my own work where AI has enhanced my research without violating any integrity principle:

- Debate partner in methodology.

I used AI to test Pek et al.’s argument that post-hoc power estimation is an “ontological error.” At first, AI repeated their claim. But after I challenged it, AI conceded the mistake, which suggested to me that my arguments were solid. This was not outsourcing thought. It was sparring with an interlocutor, and it strengthened my reasoning.

- Coding support.

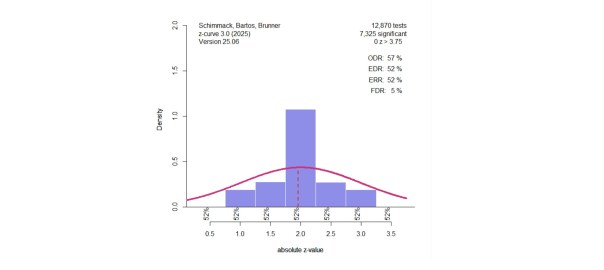

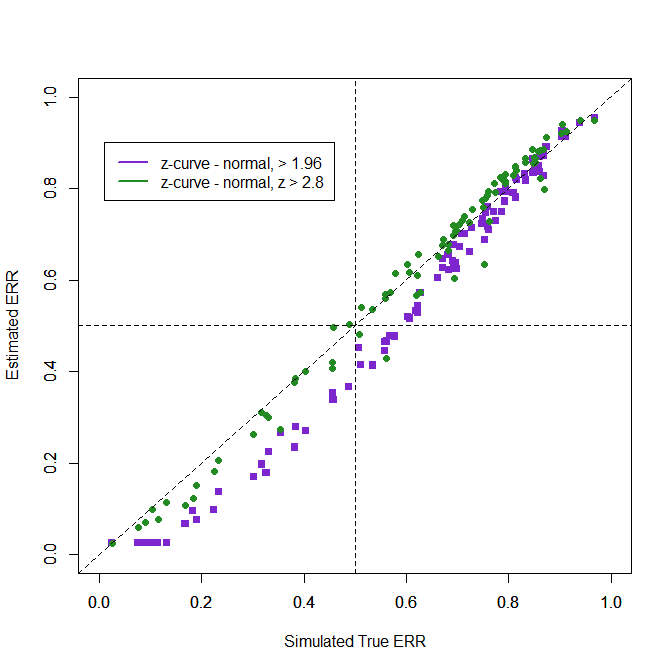

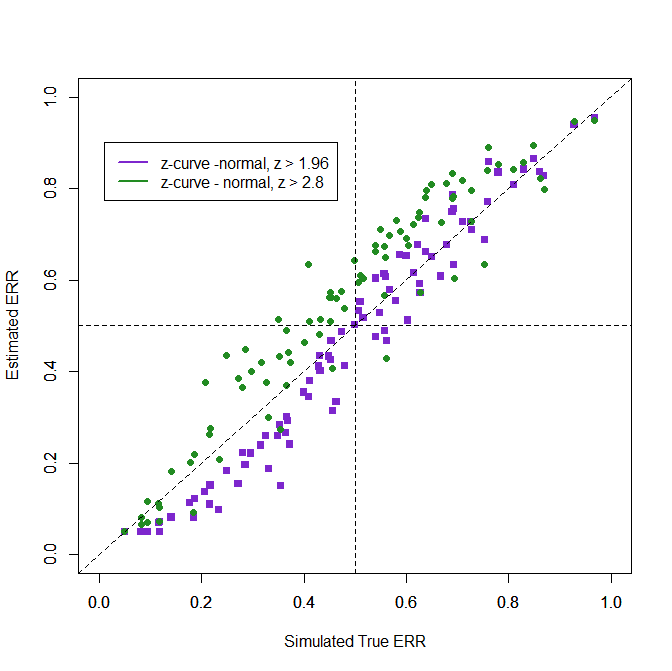

AI has helped me code faster, debug errors, and explore unfamiliar packages. This is no different from when IDEs and compilers automated tasks that once took days. The intellectual contribution remains mine.

- Methodological discovery.

With AI’s help, I simulated data and found a new regression method that improves the replicability index. AI suggested possibilities, but I tested and validated them. That’s science: proposing, checking, and refining.

- Writing support.

English is my second language. I am not a stylist, but I care about clarity. AI helps me express my ideas in accessible, standardized English. This does not plagiarize anyone’s creative work. It democratizes science by ensuring that my work is judged on content rather than fluency.

- Teaching and learning.

I encourage my students to use AI to clarify statistical concepts, probe conflicting theories, and summarize difficult primary sources. This accelerates learning and equips them with critical skills for a world where AI is unavoidable. Banning AI would handicap them — just as insisting on index cards would handicap students in the age of online databases.

Rebutting the Counterarguments

Critics might reply with several counterpoints. Here is why they don’t hold:

- “Most uses are uncritical, so exceptions don’t matter.”

That’s an empirical claim, not a principle. With training and guidance, we can increase critical use. Education, not prohibition, is the solution.

- “Efficiency isn’t progress; p-hacking is efficient too.”

False equivalence. P-hacking is efficient at producing false positives. AI speeds up legitimate tasks such as coding, summarizing, and translating. Efficiency on valid tasks is progress.

- “Language equity just shifts dependence to corporations.”

True equity would mean every university pays editors for non-native speakers, but that is not the reality. AI lowers barriers now. And dependence on tools is normal: we already depend on Microsoft Word or LaTeX for writing, on R for statistical analyses, and on R packages for sharing new methods. Integrity lies in critical use, not abstinence.

- “AI just replaces one monopoly (Elsevier) with another (OpenAI).”

Wrong analogy. Publishers control distribution, while AI facilitates creation. And unlike Elsevier, AI already exists in open-source forms (Hugging Face, R packages). AI can even undermine publishers by making it easier for readers to critically evaluate preprints (like the one by Guest and van Rooij) rather than rely on a faulty pre-publication peer-review process that artificially inflates the value of for-profit publications.

- “The burden of proof is on AI proponents.”

In science, the burden of proof lies with whoever makes a claim. If you claim “AI = misconduct,” you must show that all uses are misconduct. One transparent, validated counterexample disproves that.

The Broader Context: Science, Capitalism, and Sustainability

Science in capitalist societies follows the rules of capitalism. That means hype, profit-seeking, and exploitation. But it also means efficiency.

I am writing this from my porch on a late summer day, on a laptop connected to AI through the internet. Thirty years ago, this was unimaginable. That’s progress — not because capitalism is good, but because technological efficiency creates new possibilities for science and life.

The real challenge is not to reject progress, but to make it sustainable and ethical:

- Reduce the carbon footprint of training models.

- Ensure fair labor practices in data labeling.

- Support open-source and decentralized AI tools.

Those are worthy battles. But fighting against efficiency itself is not.

Science Is Not Owned

Finally, let’s not confuse science with art or music. A novel is the intellectual property of its author. But science is a cumulative, communal project. We write articles not to guard them but to share them.

Ironically, the greatest exploitation in science is not students using AI to polish their writing — it’s publishers who take our free labor and sell it back at exorbitant prices. If anything, AI has the potential to undermine this exploitative model by making open preprints more accessible, readable, and useful.

Conclusion

Guest & van Rooij’s warning starts from a truth: uncritical AI use is bad. But their conclusion — that all AI use is misconduct — is false.

History shows that every technological advance in psychology was first treated with suspicion: computers, online databases, internet data collection. All of them improved science when used properly. AI is no different.

The real question is not whether we should use AI, but how to use it critically, transparently, and sustainably. When we do, AI doesn’t undermine integrity. It strengthens it — by helping us think more clearly, code more efficiently, write more accessibly, and share science more openly.

Postscript

I wrote this blog post because I worry that some young scientists do not fully grasp how profoundly AI will shape their future. Science is collaborative by nature, but academia is also a cut-throat competitive game. Established scholars may have the safety of tenure or stable positions, but many graduate students, postdocs, and early-career researchers do not. For them, ignoring AI is not a neutral stance — it is a disadvantage. Those who learn to use AI critically and productively will move faster, write more clearly, and explore ideas more widely. Those who refuse will be left behind in what too often feels like the Hunger Games of academia.

Of course, it is always a personal choice how to play this game. But if you want to play it — you had better play to win.