Ten years after Bem’s (2011) demonstration of extrasensory perception with the standard statistical practices in psychology, it is clear that these practices were unable to distinguish true findings from false findings. In the following decade, replication studies revealed that many textbook findings, especially in social psychology, were false findings, including extrasensory perception (Świątkowski & Benoît, 2017; Schimmack, 2020).

Although a few changes have been made, especially in social psychology, research practices in psychology are mostly unchanged one decade after the method crisis in psychology became apparent. Most articles continue to diligently report results that are statistically significant, p < .05, and do not report when critical hypotheses were not confirmed. This selective publishing of significant results has characterized psychology as an abnormal science for decades (Sterling, 1959).

Some remedies are unlikely to change this. Preregistration is only useful if good studies are preregistered. Nobody would benefit from publishing all badly designed preregistered studies with null-results. Open sharing of data is also not useful if the data are meaningless. Finally, sharing of materials that helps with replication is not useful if the original studies were meaningless. What psychology needs is a revolution of research practices that leads to the publication of studies that address meaningful questions.

The fundamental problem in psychology is the asymmetry of statistical tests that focus on the nil-hypothesis that the effect size is zero (Cohen, 1994). The problem with this hypothesis is that it is impossible to demonstrate an effect size of zero. The only way would be to study the entire population. However, often the population is not clearly defined and it is unlikely that the effect size is exactly zero in the population. This asymmetry has led to the belief that non-significant results, p > .05, are inconclusive. There is always the possibility that a non-zero effect exists, so one is not allowed to draw conclusions that effects do not exist (even time-reversed pre-cognition always remains a possibility).

This problem was recognized in the 1990s, but APA came up with an even worse solution to fix this problem. Instead of just reporting statistical significance, researchers were also asked to report effect sizes. In theory, reporting of effect sizes would help researchers to evaluate whether an effect size is large enough to be meaningful or not. For example, if a researcher reported a result with p < .05, but an extremely small effect size of d = .03, it might be considered so small that it is practically irrelevant.

So why did reporting effect sizes not improve the quality and credibility of psychological science? The reason is that studies still had to pass the significance filter, and to do so effect size estimates in a sample have to exceed a threshold value. The perverse incentive was that studies with small samples and large sampling error require larger effect size estimates than qualitatively better studies with large samples that provide more precise estimates of effect sizes. Thus, researchers who invested few resources in small studies were able to brag about their large effect sizes. Sloppy language disguised the fact that these large effects were merely estimates of the actual population effect sizes.

Many researchers relied on Cohen’s guidelines for a priori power analysis to label their effect size estimates small, moderate, or large. The problem with this is that selection for significance inflates effect size estimates, and the inflation is inversely related to sample size. The smaller the sample, the bigger the inflation, and the larger the effect size that is reported.

This inflation only becomes apparent when replication studies with larger samples are available. For example, Joy-Gaba and Nosek (2010) obtained a standardized effect size estimate of d = .08 with N = 3,000 participants, whereas the original study with N = 48 participants reported an effect size estimate of d = .82.

The title of the article “The Surprisingly Limited Malleability of Implicit Racial Evaluations” draws attention to the comparison of the two effect size estimates, as does the discussion section.

“Further, while DG reported a large effect of exposure on implicit racial (and age) preferences (d = .82), the effect sizes in our studies were considerably smaller. None exceeded d = .20, and a weighted average by sample size suggests an average effect size of d = .08…” (p. 143).

The problem is the sloppy usage of the term effect size for effect size estimates. Dasgupta and Greenwald did not report a large effect because their small sample had so much sampling error that it was impossible to provide any precise information about the population effect size. This becomes evident when we compare the results in terms of confidence intervals (frequentist or objective Bayesian doesn’t matter).

The sampling error for a study with N = 33 participants is 2/sqrt(33) = .35. To create a 95% confidence interval, we multiply the sampling error by qt(.975, 31), which is approximately 2. So, the 95% CI around the effect size estimate of .80 ranges from .80 – .70 = .10 to .80 + .70 = 1.50. In short, small samples produce extremely noisy estimates of effect sizes. It is a mistake to interpret the point estimates of these studies as reasonable estimates of the population effect size.
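The arithmetic above can be reproduced in a few lines of R. This is only a sketch: `approx_ci_d` is a hypothetical helper name, and it assumes two equal groups and the rough approximation that the sampling error of d is 2/sqrt(N).

```r
# Approximate 95% CI for a standardized mean difference d.
# Assumption: two equal groups, SE(d) approximated by 2 / sqrt(N).
approx_ci_d <- function(d, N, level = 0.95) {
  se   <- 2 / sqrt(N)                          # approximate sampling error of d
  crit <- qt(1 - (1 - level) / 2, df = N - 2)  # t critical value, about 2 for N = 33
  c(lower = d - crit * se, upper = d + crit * se, se = se)
}

# Effect size estimate of .80 with N = 33 participants
round(approx_ci_d(d = 0.80, N = 33), 2)
```

With the unrounded t quantile (about 2.04) the interval comes out at roughly .09 to 1.51, which agrees with the rounded values in the text.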

Moreover, when results are selected for significance, these noisy estimates are truncated at high values. To get a significant result in their extremely small sample, Dasgupta and Greenwald required a minimum effect size estimate of d = .70. In this case, the effect size estimate is two times the sampling error, which produces a p-value of .05.
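To see how the significance filter interacts with sample size, one can tabulate the smallest effect size estimate that reaches p < .05 for different N. The sketch below uses a hypothetical helper, `min_significant_d`, and again assumes that the sampling error of d is roughly 2/sqrt(N); the sample sizes are arbitrary illustrations.

```r
# Smallest |d| estimate that is significant at two-sided alpha = .05.
# Assumption: SE(d) is approximated by 2 / sqrt(N) (two equal groups).
min_significant_d <- function(N, alpha = 0.05) {
  qt(1 - alpha / 2, df = N - 2) * 2 / sqrt(N)
}

Ns <- c(33, 100, 400, 3000)  # illustrative sample sizes
data.frame(N = Ns, min_d = round(min_significant_d(Ns), 2))
```

For N = 33 the threshold is about .7, so any significant result from such a study necessarily comes with a large effect size estimate, whatever the population effect size is.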

This example is not an isolated incident. It is symptomatic of the reporting of results in psychology. Only recently has a new initiative asked for the reporting of confidence intervals, but it is not clear whether psychologists fully grasp the importance of this information. Maybe point estimates should not be reported unless confidence intervals are reasonably small.

In any case, the reporting of effect sizes did not change research practices and reporting of confidence intervals will also fail to do so because they do not change the asymmetry of nil-hypothesis testing. This is illustrated with another example.

Using a large online sample (N = 93,239), Ravary, Baldwin, and Bartz (2019) obtained an effect size estimate of d = .02 (d = .0177 in the article). This effect size estimate is reported with a 95% confidence interval from .004 to .03.

Using the standard logic of nil-hypothesis testing, this finding is used to reject the nil-hypothesis and to support the conclusion (in the abstract) that “as predicted, fat-shaming led to a spike in women’s (N=93,239) implicit anti-fat attitudes, with events of greater notoriety producing greater spikes” (p. 1580).

We can now ask ourselves a counterfactual question. What finding would have led the authors to conclude that there was no effect? What if a sample size of 1.5 million participants had shown an effect size of d = .002 with CI = .001 to .003? Would that have been sufficiently small to conclude nothing notable happened; let’s move on? Or would it still have been interpreted as evidence against the nil-hypothesis?

The main lesson from this Gedankenexperiment is that psychologists lack a procedure to weed out effects that are so small that chasing them would be a waste of time and resources.

I am by no means the first one to make this observation. In fact, some psychologists like Rouder and Wagenmakers have criticized nil-hypothesis testing for the very same reason and proposed a solution to the problem. Their solution is to test two competing hypotheses and allow for empirical data to favor either one. One of these hypotheses specifies an actual effect size, but because we do not know what the effect size is, this hypothesis is specified as a distribution of effect sizes. The other hypothesis is the nil-hypothesis that there is absolutely no effect. The consistency of the data with these two hypotheses is evaluated in terms of Bayes-Factors.

The advantage of this method is that it is possible for researchers to decide in favor of the absence of an effect. The disadvantage of this method is that the absence of a relevant effect is still specified as absolutely no effect. This makes it possible to sometimes end up with the wrong inference that there is absolutely no effect when the population effect size is small but practically significant.

A better way to solve the problem is to specify two hypotheses that are distinguished by the minimum relevant population effect size. Lakens, Scheel, and Isager (2018) give a detailed tutorial on equivalence testing. I diverge from their approach in two ways. First, they leave it to researchers’ expertise to define the smallest effect size of interest (SESOI) or minimum effect size (MES). This is a problem because psychologists have shown a cunning ability to game any methodological innovation to avoid changing their research practices. For example, nothing would change if the MES were set to d = .001. Rejecting d = .001 is not very different from rejecting d = .000, and it would require 10 million participants to establish that an effect is smaller than this MES.

In fact, when psychologists obtain small effect sizes, they are quick to argue that these effects still have huge practical implications (Greenwald, Banaji, & Nosek, 2015). The danger is that these arguments are made in the discussion section, but that the results section reports effect sizes that are inflated by publication bias, d = .5, 95%CI = .1 to .9.

To solve this problem, MESs should correspond to sampling error. Studies with small samples and large sampling error need to specify a high MES, which increases the risk that researchers end up with a result that falsifies their predictions. For example, the race IAT does not predict voting against Obama, p < .05.

I therefore suggest an MES of d = .2 or r = .1 as a default criterion. This is consistent with Cohen’s (1988) criteria for a small effect. In terms of significance testing, not much changes. For example, for a t-test, we are simply subtracting .2 from the standardized mean difference. This has implications for a priori power analysis. To have 80% power against the nil-hypothesis with a moderate effect size of d = .5, a total of 128 participants are needed (n = 64 per cell).

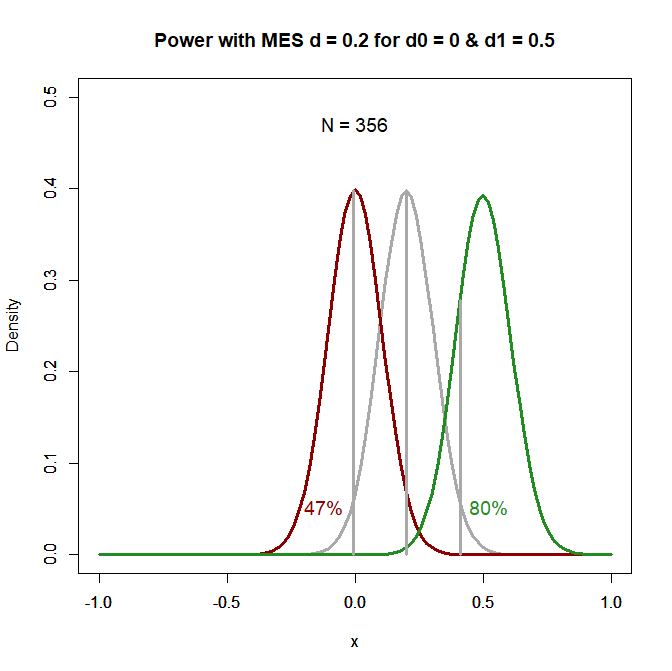
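The conventional power analysis against the nil-hypothesis can be checked with base R's `power.t.test()`. The call below assumes a two-sample t-test with equal group sizes, a standardized effect (sd = 1), and two-sided alpha = .05.

```r
# A priori power analysis against the nil-hypothesis (d = 0).
# Assumptions: two independent groups of equal size, two-sided alpha = .05.
res <- power.t.test(delta = 0.5, sd = 1, power = 0.80, sig.level = 0.05,
                    type = "two.sample", alternative = "two.sided")

n_per_cell <- ceiling(res$n)  # round up to whole participants per cell
c(n_per_cell = n_per_cell, total = 2 * n_per_cell)
```

This gives n = 64 per cell and N = 128 in total.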

To compute power with MES = .2, I wrote a little R-script. It shows that N = 356 participants are needed to achieve 80% power with a population effect size of d = .5. The program also computes the power for the hypothesis that the population effect size is below the MES. Once more, it is important to assume a fixed population effect size. A plausible value is zero, but if there is a small but negligible effect, power would be lower. The figure shows that power is only 47%. Power less than 50% implies that the effect size estimate has to be negative to produce a significant result.
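The original script is not reproduced here. The following is a rough reconstruction of the idea, not the author's code: `power_mes` is a hypothetical function that shifts the test by the MES, approximates the sampling error of d by 2/sqrt(N), and uses the noncentral t distribution for power.

```r
# Power of a minimum-effect test (sketch, not the original script).
# Assumptions: two equal groups, SE(d) ~ 2 / sqrt(N), two-sided alpha.
power_mes <- function(N, d_pop, mes = 0.2, alpha = 0.05) {
  se   <- 2 / sqrt(N)
  df   <- N - 2
  crit <- qt(1 - alpha / 2, df)
  ncp  <- (d_pop - mes) / se  # noncentrality after subtracting the MES
  c(above = pt(crit, df, ncp = ncp, lower.tail = FALSE),  # estimate significantly > MES
    below = pt(-crit, df, ncp = ncp))                      # estimate significantly < MES
}

round(power_mes(N = 356, d_pop = 0.5), 2)  # power to show d > .2
round(power_mes(N = 356, d_pop = 0),   2)  # power to show d < .2
```

Under these assumptions the first call gives power of about .80 for the "above" test and the second gives about .47 for the "below" test, in line with the values reported in the text. Other population effect sizes or sample sizes can be explored by changing the arguments.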

Of course, the values can be changed to make other assumptions. The main point is to demonstrate the advantage of specifying a minimum effect size. It is now possible to falsify predictions. For example, with a sample of 93,239 participants, we have 100% power to determine whether an effect is larger or smaller than .2, even if the population effect size is d = .1. Thus, we can falsify the prediction that media events about fat-shaming move scores on a fat-IAT with a statistically small effect size.

The obvious downside of this approach is that it makes it harder to get statistically significant results. For many research areas in psychology, a sample size of N = 356 is very large. Animal studies or fMRI studies often have much smaller sample sizes. One solution to this problem is to increase the number of observations with repeated measurements, but this is also not always possible or not much cheaper. Limited resources are the main reason why psychologists are often conducting underpowered studies. This is not going to change overnight.

Fortunately, thinking about the minimum effect size is still helpful for consumers of research articles because they can retroactively apply these criteria to published research findings. For example, take Dasgupta and Greenwald’s seminal study that aimed to change race IAT scores with an experimental manipulation. If we apply an MES of d = .2 to a study with N = 33 participants, we easily see that this study provided no valuable information about effect sizes, because an effect size estimate of d = -.5 is needed to claim that the population effect size is below d = .2 and an effect size estimate of d = .9 is needed to claim that the population effect size is greater than .2. If we assume that the population effect size is d = .5, the study has only 13% power to produce a significant result. Given the selection for significance, it is clear that published significant results are dramatically inflated by sampling error.
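Readers who want to apply this check to a published study can do so with a few lines of R. The helper below, `mes_check`, is a hypothetical name; it assumes two equal groups, a sampling error of 2/sqrt(N), and a two-sided test at alpha = .05.

```r
# Retroactive minimum-effect check for a published two-group study (sketch).
# Assumptions: SE(d) ~ 2 / sqrt(N), two-sided alpha = .05, default MES of d = .2.
mes_check <- function(N, mes = 0.2, d_pop = 0.5, alpha = 0.05) {
  se   <- 2 / sqrt(N)
  df   <- N - 2
  crit <- qt(1 - alpha / 2, df)
  ncp  <- (d_pop - mes) / se
  # Power to obtain an estimate that differs significantly from the MES
  power <- pt(crit, df, ncp = ncp, lower.tail = FALSE) + pt(-crit, df, ncp = ncp)
  c(d_needed_below = mes - crit * se,  # estimate needed to claim d < MES
    d_needed_above = mes + crit * se,  # estimate needed to claim d > MES
    power = power)
}

round(mes_check(N = 33), 2)
```

For N = 33 the thresholds come out at about -.51 and .91, and power is about 13% if the population effect size is d = .5.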

In conclusion, the biggest obstacle to improving psychological science is the asymmetry in nil-hypothesis significance testing. Whereas significant results that are obtained with “luck” can be published, non-significant results are often not published or considered inconclusive. Bayes-factors have been suggested as a solution to this problem, but Bayes-Factors do not take effect sizes into account and can also reject the nil-hypothesis with practically meaningless effect sizes. To get rid of the asymmetry, it is necessary to specify non-null effect sizes (Lakens et al., 2018). This will not happen any time soon because it requires an actual change in research practices that requires more resources. If we have learned anything from the history of psychology, it is that sample sizes have not changed. To protect themselves from “lucky” false positive results, consumers of scientific articles can specify their own minimum effect sizes and see whether results remain significant. With the typical p-values between .05 and .005 this will often not be the case. These results should be treated as interesting suggestions that need to be followed up with larger sample sizes, but readers can skip the policy implication discussion section. Maybe if readers get more demanding, researchers will work harder to convince them of their pet theories. Amen.

> “The sampling error for a study with N = 33 participants is 2/sqrt(33) = .35. ”

I am not sure what “sampling error” is – statistics can be inconsistent in its use of terms. Is it the same as standard error (of the mean)? And the 2 in the formula is the SD? Otherwise can you say where the 2 comes from?

“MESs should correspond to sampling error…therefore suggest an MSE of d = .2 or r = .1 as a default criterion”

But I would have thought that sampling error is study-specific (as it depends on SD and n), so it can’t be fixed at d = .2?

Therefore I am confused about how this differs from Lakens et al.’s approach of specifying a minimal effects test with d = .2 as the criterion.

Good question about terminology.

Statisticians often work with unstandardized effect sizes. In that case, “standard error” depends on the standard deviation, 1/sqrt(N) for a single mean, 2/sqrt(N) for the comparison of two means.

Cohen introduced standardized effect sizes in psychology where measures often do not have a clear metric. The standardized effect size is the unstandardized effect size divided by the standard deviation. As we divide the effect size by the standard deviation, we have to do the same for the “standard error” , so it becomes 1/sqrt(N) or 2/sqrt(N).

I don’t think there is a general term for it. Maybe it should be called standardized standard error.

The source of the standard error is random variation from repeated sampling from the population. So, I thought sampling error is another reasonable term.

Sorry, I am still confused:

“Statisticians often work with unstandardized effect sizes. In that case, “standard error” depends on the standard deviation, 1/sqrt(N) for a single mean, 2/sqrt(N) for the comparison of two means.”

Unstandardised effects are differences in the raw metric. Their standard errors follow the normal formula for standard errors, sd/sqrt(n), not 1/sqrt(n).

“As we divide the effect size by the standard deviation, we have to do the same for the “standard error” , so it becomes 1/sqrt(N) or 2/sqrt(N).”

A standard normal distribution has a mean of 0 and a SD of 1. So I can see a logic of 1/sqrt(n) as 1 is the SD

But the SE for cohen’s d is not 1/sqrt(n) or 2/sqrt(n),

e.g. see https://stats.stackexchange.com/questions/495015/what-is-the-formula-for-the-standard-error-of-cohens-d

The formula I use is an approximation. The one you posted is a correction for small sample sizes. We could also apply the correction to the computation of the effect sizes, which would give us Hedges’ g, and I think in that case we could use 2/sqrt(N) for the sampling error.

It would be worthwhile to check that.

I checked the match, using standard errors of the CI (sei below) from the metafor function for 3 sample sizes:

https://rdrr.io/snippets/

```r
library(metafor)

# Compare the SE of d from metafor (sei) with the 2/sqrt(N) approximation.

# N = 120 (60 per group)
res <- escalc(measure = "SMD", vtype = "UB", m1i = 0, m2i = .4, sd1i = 1, sd2i = 1, n1i = 60, n2i = 60)
summary(res)
2/sqrt(120)

# N = 60 (30 per group)
res <- escalc(measure = "SMD", vtype = "UB", m1i = 0, m2i = .4, sd1i = 1, sd2i = 1, n1i = 30, n2i = 30)
summary(res)
2/sqrt(60)

# N = 30 (15 per group)
res <- escalc(measure = "SMD", vtype = "UB", m1i = 0, m2i = .4, sd1i = 1, sd2i = 1, n1i = 15, n2i = 15)
summary(res)
2/sqrt(30)
```

```
       yi     vi    sei      zi   ci.lb   ci.ub
1 -0.3975 0.0340 0.1844 -2.1551 -0.7589 -0.0360
[1] 0.1825742

       yi     vi    sei      zi   ci.lb   ci.ub
1 -0.3948 0.0681 0.2609 -1.5134 -0.9061  0.1165
[1] 0.2581989

       yi     vi    sei      zi   ci.lb   ci.ub
1 -0.3892 0.1362 0.3691 -1.0545 -1.1125  0.3342
[1] 0.3651484
```

It's pretty close, within .005. That's good enough for me as an approximation for a noisy thing anyway.

An analysis of CI's for d and g is here:

http://www.tqmp.org/RegularArticles/vol14-4/p242/

I am surprised I don't know this simple approximation. Or that others don't describe this – I googled this and no-one seems to mention 1 or 2 /sqrt(n) – though obviously it will be out there somewhere.

Now I can read a paper where a d is mentioned and, if they don't report the CI (which they nearly always don't), I can work out a reasonable estimate in a jiffy, e.g. lower bound = d – 2/sqrt(n) * 2 (ignoring unequal sample sizes and variances etc…).

It seems bizarre that psychologists (and not just social ones) don't report CI's on standardised effect sizes, but not bizarre if they are rewarded for convincing others (including themselves) to be more confident in their results than they should be.