In several blog posts, Dr. Schnall made some critical comments about attempts to replicate her work, and these posts sparked a heated debate about replication studies. Heated debates are typically a reflection of insufficient information. Is the Earth flat? This question created heated debates hundreds of years ago. In the age of space travel it is no longer debated. In this post, I present some statistical information that sheds light on the debate about the replicability of Dr. Schnall’s research.

The Original Study

Dr. Schnall and colleagues conducted a study with 40 participants. A comparison of two groups on a dependent variable showed a significant difference, F(1,38) = 3.63. At the time, Psychological Science asked researchers to report p-rep instead of p-values. P-rep was 90%. It was interpreted as a 90% chance of finding an effect with the SAME SIGN in an exact replication study with the same sample size. The conventional p-value for F(1,38) = 3.63 is p = .06, a finding that is commonly interpreted as marginally significant. The standardized effect size is d = .60, which is considered a moderate effect size. The 95% confidence interval is -.01 to 1.47.
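The conversions in this paragraph can be reproduced with a short script. The sketch below uses only Python's standard library and rests on three assumptions. First, the two groups are equal in size, so d = 2t/√N with N = df + 2. Second, the p-value of the t-test is obtained by numerically integrating over the chi-square denominator of the t statistic rather than by calling a statistics package. Third, p-rep is computed as Φ(Φ⁻¹(1 − p/2)/√2). This is one common formulation, and whether it matches the journal's exact formula is an assumption.

```python
import math
from statistics import NormalDist

STD = NormalDist()  # standard normal distribution


def t_two_sided_p(t, df, steps=2000):
    """Two-sided p-value for a central t statistic with df > 2.

    T = Z / sqrt(V / df) with V ~ chi-square(df), so
    P(|T| > |t|) = E_V[2 * Phi(-|t| * sqrt(V / df))],
    which is integrated over V with Simpson's rule.
    """
    upper = df + 12 * math.sqrt(2 * df) + 10  # far into the right tail of V
    h = upper / steps                          # steps must be even for Simpson's rule
    k = df / 2
    total = 0.0
    for i in range(1, steps + 1):              # the v = 0 node has zero density
        v = i * h
        weight = 1 if i == steps else (4 if i % 2 else 2)
        log_pdf = (k - 1) * math.log(v) - v / 2 - k * math.log(2) - math.lgamma(k)
        total += weight * math.exp(log_pdf) * 2 * STD.cdf(-abs(t) * math.sqrt(v / df))
    return total * h / 3


def summarize_f(f_value, df_error):
    """Convert F(1, df_error) from a two-group comparison into t, p, d, and p-rep."""
    t = math.sqrt(f_value)
    n_total = df_error + 2                     # two groups -> N = df + 2
    p = t_two_sided_p(t, df_error)
    d = 2 * t / math.sqrt(n_total)             # Cohen's d, equal group sizes assumed
    p_rep = STD.cdf(STD.inv_cdf(1 - p / 2) / math.sqrt(2))
    return t, p, d, p_rep


t, p, d, p_rep = summarize_f(3.63, 38)
print(f"t = {t:.2f}, p = {p:.3f}, d = {d:.2f}, p-rep = {p_rep:.2f}")
```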

The wide confidence interval makes it difficult to know the true effect size. A post-hoc power analysis, assuming that the true effect size is d = .60, suggests that an exact replication study has a 46% chance to produce a significant result (p < .05, two-tailed). However, if the true effect size is smaller, actual power is lower. For example, if the true effect size is small (d = .2), a study with N = 40 has only 9% power (that is, a 9% chance) to produce a significant result.
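The power values above can be checked with a sketch of an exact power calculation for a two-sided, two-sample t-test. It uses only the standard library. The noncentral t distribution is handled by integrating over the chi-square distribution of its denominator, and the critical value is found by bisection. The function names are illustrative, and the equal-n design (20 per group for N = 40) is an assumption.

```python
import math
from functools import lru_cache
from statistics import NormalDist

PHI = NormalDist().cdf  # standard normal CDF


@lru_cache(maxsize=None)
def chi2_nodes(df, steps=2000):
    """Simpson's-rule nodes (sqrt(v / df), weight) for integrating over V ~ chi-square(df)."""
    upper = df + 12 * math.sqrt(2 * df) + 10
    h = upper / steps
    k = df / 2
    nodes = []
    for i in range(1, steps + 1):  # the v = 0 node has zero density when df > 2
        v = i * h
        simpson = 1 if i == steps else (4 if i % 2 else 2)
        log_pdf = (k - 1) * math.log(v) - v / 2 - k * math.log(2) - math.lgamma(k)
        nodes.append((math.sqrt(v / df), simpson * math.exp(log_pdf) * h / 3))
    return tuple(nodes)


def prob_abs_t_exceeds(c, df, ncp=0.0):
    """P(|T| > c) for T = (Z + ncp) / sqrt(V / df): central t if ncp = 0, noncentral otherwise."""
    return sum(w * (PHI(ncp - c * s) + PHI(-ncp - c * s)) for s, w in chi2_nodes(df))


@lru_cache(maxsize=None)
def t_critical(df, alpha=0.05):
    """Two-sided critical value of the central t distribution, found by bisection."""
    lo, hi = 0.0, 50.0
    for _ in range(60):
        mid = (lo + hi) / 2
        if prob_abs_t_exceeds(mid, df) > alpha:
            lo = mid
        else:
            hi = mid
    return (lo + hi) / 2


def power_two_sample(d, n_per_group, alpha=0.05):
    """Power of a two-sided, two-sample t-test with equal groups and true effect size d."""
    df = 2 * n_per_group - 2
    ncp = d * math.sqrt(n_per_group / 2)  # noncentrality parameter for equal n
    return prob_abs_t_exceeds(t_critical(df, alpha), df, ncp)


for d in (0.6, 0.2):
    print(f"d = {d}: power = {power_two_sample(d, 20):.2f}")
```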

The First Replication Study

Drs. Johnson, Cheung, and Donnellan conducted a replication study with 209 participants. Assuming that the effect size in the original study is the true effect size, this replication study had 99% power. However, assuming that the true effect size is only d = .2, the study had only 31% power to produce a significant result. The study produced a non-significant result, F(1, 206) = .004, p = .95. The effect size was d = .01 (in the same direction). Because of the larger sample, the confidence interval is narrower and ranges from -.26 to .28. The confidence interval includes d = .2. Thus, both studies are consistent with the hypothesis that the effect exists and that the effect size is small, d = .2.
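The confidence intervals for d in this and the following sections can be approximated with the usual large-sample standard error of d. The sketch below assumes equal groups (104 per group here, inferred from the 206 error degrees of freedom). Exact intervals based on the noncentral t distribution can differ somewhat, particularly in small samples.

```python
import math


def d_confidence_interval(d, n1, n2, z=1.96):
    """Approximate 95% CI for Cohen's d using the large-sample standard error."""
    se = math.sqrt((n1 + n2) / (n1 * n2) + d ** 2 / (2 * (n1 + n2)))
    return d - z * se, d + z * se


lo, hi = d_confidence_interval(0.01, 104, 104)
print(f"95% CI: {lo:.2f} to {hi:.2f}; includes d = .2: {lo <= 0.2 <= hi}")
```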

The Second Replication Study

Dr. Huang conducted another replication study with N = 214 participants (Huang, 2014, Study 1). The confidence intervals of the previous two studies overlap between -.01 and .28, so the true effect plausibly lies in this range, which includes a small effect size of d = .20. With d = .20, a study with N = 214 participants has 31% power to produce a significant result. Not surprisingly, the study produced a non-significant result, t(212) = 1.22, p = .23. At the same time, the observed effect size, d = .17, fell within the range set by the previous two studies.

The Third Replication Study

Dr. Huang conducted a replication study with N = 440 participants (Study 2). Taking d = .2 as the best estimate of the true effect size, the study had 55% power to produce a significant result. In other words, if the effect size is small (d = .2), the study was nearly as likely to produce a non-significant result as a significant one. The study failed to produce a significant result, t(438) = 0.42, p = .68. The effect size was d = .04, with a confidence interval ranging from -.14 to .23. Again, this confidence interval includes a small effect size of d = .2.

The Fourth Replication Study

Dr. Huang reported a further replication study (Study 2a) in the supplementary materials to the article. The study again failed to demonstrate a main effect, t(434) = 0.42, p = .38. The effect size is d = .08, with a confidence interval of -.11 to .27. Again, the confidence interval is consistent with a small true effect size of d = .2. However, with 436 participants, the study had only a 55% chance to produce a significant result even if d = .2.

Had Dr. Huang combined the two samples into one more powerful study, the 878 participants would have provided more than 80% power to detect a small effect size of d = .2. However, the effect size in the combined sample, d = .06, is still not significant, t(876) = .89. The confidence interval ranges from -.07 to .19. It no longer includes d = .20, but the results are still consistent with a positive yet small effect in the range between 0 and .20.
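The combined analysis can be approximated by pooling the two samples (440 and 436 participants) and weighting each study's d by its sample size. This sketch makes several simplifying assumptions. Group sizes are equal. A sample-size-weighted average stands in for a full re-analysis of the raw data. A normal approximation is used for t and for power, which is reasonable with several hundred participants.

```python
import math
from statistics import NormalDist

STD = NormalDist()

studies = [(0.04, 440), (0.08, 436)]  # (d, total N) for Study 2 and Study 2a

n_total = sum(n for _, n in studies)
d_pooled = sum(d * n for d, n in studies) / n_total   # sample-size-weighted mean d
t_pooled = d_pooled * math.sqrt(n_total) / 2          # inverts d = 2t / sqrt(N)
se = math.sqrt(4 / n_total + d_pooled ** 2 / (2 * n_total))
ci = (d_pooled - 1.96 * se, d_pooled + 1.96 * se)

# Normal-approximation power to detect d = .2 with the pooled sample
ncp = 0.2 * math.sqrt(n_total) / 2
power = STD.cdf(ncp - STD.inv_cdf(0.975))

print(f"d = {d_pooled:.2f}, t = {t_pooled:.2f}, "
      f"CI = {ci[0]:.2f} to {ci[1]:.2f}, power(d = .2) = {power:.2f}")
```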

Conclusion

In sum, nobody has been able to replicate Schnall’s finding that a simple priming manipulation with cleanliness-related words has a moderate to strong effect (d = .6) on moral judgments of hypothetical scenarios. However, all replication studies show a trend in the same direction. This suggests that the effect exists but that the effect size is much smaller than in the original study: somewhere between 0 and .2 rather than .6.

There are three possible explanations for the much larger effect size in Schnall’s original study.

1. The replication studies were not exact replications, and the true effect size in Schnall’s version of the experiment is larger than in the other studies.

2. The true effect size is the same in all studies, but Dr. Schnall was lucky to observe an effect size that was three times as large as the true effect size and large enough to produce a marginally significant result.

3. It is possible that Dr. Schnall did not disclose all of the information about her original study. For example, she may have conducted additional studies that produced smaller and non-significant results and did not report these results. Importantly, this practice is common and legal, and in an anonymous survey many researchers admitted using practices that produce inflated effect sizes in published studies. However, it is extremely rare for researchers to admit that these common practices explain one of their own findings, and Dr. Schnall has instead attributed the discrepancy in effect sizes to problems with the replication studies.

Dr. Schnall’s Replicability Index

Based on Dr. Schnall’s original study alone, it is impossible to say which of these explanations accounts for her results. However, additional evidence makes it possible to test the third hypothesis, that Dr. Schnall knew more than she reported in her article. The reason is that luck does not repeat itself. If Dr. Schnall was just lucky, other studies by her should have failed because Lady Luck is only on your side half the time. If, however, disconfirming evidence is systematically excluded from a manuscript, the rate of successful studies will be higher than the observed statistical power of the published studies (Schimmack, 2012).

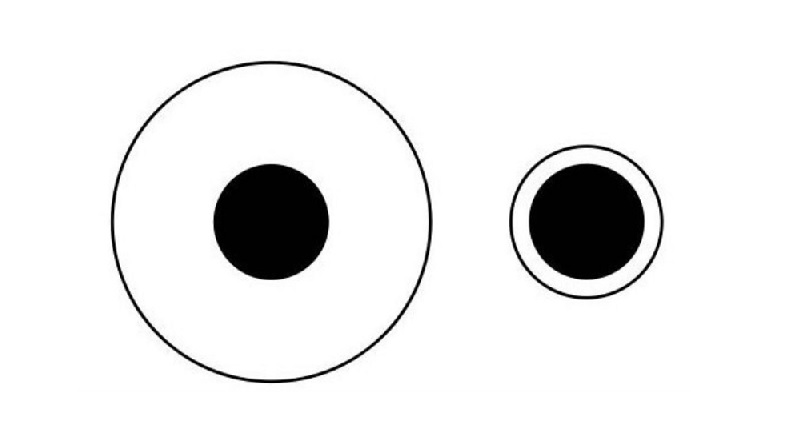

To test this hypothesis, I downloaded Dr. Schnall’s 10 most cited articles (in Web of Science, July 2014). These 10 articles contained 23 independent studies. For each study, I computed the median observed power of the statistical tests of theoretically important hypotheses. I also calculated the success rate for each study. The average success rate was 91% (ranging from 45% to 100%, median = 100%). The median observed power was 61%. The inflation rate is therefore 30% (91% – 61%). Importantly, observed power is itself an inflated estimate of replicability when the success rate is inflated. I created the replicability index (R-Index) to take this inflation into account. The R-Index subtracts the inflation rate from the median observed power.

Dr. Schnall’s R-Index is 31% (61% – 30%).
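The R-Index arithmetic is simple enough to write out. The sketch below illustrates the idea and is not the exact procedure used for the 23 studies. It treats each study as contributing a single two-sided p-value. Observed power is defined as Φ(z − 1.96), where z is the score corresponding to that p-value. A study counts as a success when p < .05. The helper names are hypothetical.

```python
import statistics
from statistics import NormalDist

STD = NormalDist()


def observed_power(p_two_sided, alpha=0.05):
    """Post-hoc power implied by a two-sided p-value (ignores the opposite tail)."""
    z = STD.inv_cdf(1 - p_two_sided / 2)
    return STD.cdf(z - STD.inv_cdf(1 - alpha / 2))


def r_index(median_observed_power, success_rate):
    """R-Index = median observed power minus inflation (success rate minus median observed power)."""
    inflation = success_rate - median_observed_power
    return median_observed_power - inflation


def r_index_from_p_values(p_values, alpha=0.05):
    """R-Index for a set of studies, one two-sided p-value per study (a simplification)."""
    median_power = statistics.median(observed_power(p, alpha) for p in p_values)
    success_rate = sum(p < alpha for p in p_values) / len(p_values)
    return r_index(median_power, success_rate)


print(f"{r_index(0.61, 0.91):.2f}")  # summary values reported for Dr. Schnall's articles
```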

What does an R-Index of 31% mean? Here are some comparisons that can help to interpret the Index.

Imagine that the null hypothesis is always true and that a researcher publishes only type-I errors. In this case, the median observed power is 61% and the success rate is 100%, so the R-Index is 22%.
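The 61% and 22% figures for this scenario can be derived analytically. This minimal sketch assumes two-sided z-tests at α = .05 and that only significant results are published. Under the null hypothesis, the median significant |z| is the value that leaves α/4 in each tail. Observed power is again Φ(z − 1.96).

```python
from statistics import NormalDist

STD = NormalDist()
alpha = 0.05

z_crit = STD.inv_cdf(1 - alpha / 2)       # 1.96: threshold for a significant result
# Under H0, P(|z| > z_crit) = alpha. Half of the significant |z| values lie above the
# point with P(|z| > z) = alpha / 2, i.e. alpha / 4 in each tail.
z_median = STD.inv_cdf(1 - alpha / 4)
median_observed_power = STD.cdf(z_median - z_crit)

success_rate = 1.0                         # only type-I errors are published
inflation = success_rate - median_observed_power
r_index = median_observed_power - inflation

print(f"median observed power = {median_observed_power:.2f}, R-Index = {r_index:.2f}")
```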

Dr. Baumeister admitted that his publications select studies that report the most favorable results. His R-Index is 49%.

The Open Science Framework conducted replication studies of psychological studies published in 2008. A set of 25 studies completed by November 2014 had an R-Index of 43%. The actual rate of successful replications was 28%.

Given these comparison standards, it is hardly surprising that one of Dr. Schnall’s studies did not replicate, even when the sample sizes and power of the replication studies were considerably higher.

Conclusion

Dr. Schnall’s R-Index suggests that the omission of failed studies provides the most parsimonious explanation for the discrepancy between Dr. Schnall’s original effect size and effect sizes in the replication studies.

Importantly, the selective reporting of favorable results was and still is an accepted practice in psychology. It is a statistical fact that these practices reduce the replicability of published results. So why do failed replication studies that are entirely predictable create so much heated debate? Why does Dr. Schnall fear that her reputation is tarnished when a replication study reveals that her effect sizes were inflated? The reason is that psychologists are collectively motivated to exaggerate the importance and robustness of empirical results. Replication studies break the code of maintaining an image of psychology as a successful science that produces stunning novel insights. Nobody was supposed to test whether published findings are actually true.

However, Bem (2011) let the cat out of the bag, and there is no turning back. Many researchers have recognized that the public is losing trust in science. To regain trust, science has to be transparent and empirical findings have to be replicable. The R-Index can be used to show that researchers reported all the evidence and that significant results are based on true effect sizes rather than gambling with sampling error.

In this new world of transparency, researchers still need to publish significant results. Fortunately, there is a simple and honest way to do so that was proposed by Jacob Cohen over 50 years ago. Conduct a power analysis and invest resources only in studies that have high statistical power. If your expertise led you to make a correct prediction, the force of the true effect size will be with you and you do not have to rely on Lady Luck or witchcraft to get a significant result.
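A power analysis of the kind Cohen recommended can be sketched with the normal approximation n = 2((z₁₋α/₂ + z_power)/d)² per group. This approximation is assumed here for simplicity. Exact t-based calculations, as done in dedicated power-analysis software, give slightly larger numbers. The function name is illustrative.

```python
import math
from statistics import NormalDist


def n_per_group(d, power, alpha=0.05):
    """Approximate per-group sample size for a two-sided, two-sample test (normal approximation)."""
    z = NormalDist().inv_cdf
    return math.ceil(2 * ((z(1 - alpha / 2) + z(power)) / d) ** 2)


for target in (0.80, 0.95):
    n = n_per_group(0.2, target)
    print(f"d = .2, power = {target:.0%}: {n} per group, {2 * n} in total")
```

For a small effect of d = .2, 80% power requires roughly 790 participants and 95% power roughly 1,300.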

P.S. I nearly forgot to comment on Dr. Huang’s moderator effects. Dr. Huang claims that the effect of the cleanliness manipulation depends on how much effort participants exert on the priming task.

First, as noted above, no moderator hypothesis is needed because all studies are consistent with a true effect size in the range between 0 and .2.

Second, Dr. Huang found significant interaction effects in two studies. In Study 2, the effect was F(1,438) = 6.05, p = .014, observed power = 69%. In Study 2a, the effect was F(1,434) = 7.53, p = .006, observed power = 78%. The median observed power is 74%, and with a success rate of 100% the inflation rate is 26%. The R-Index for these two studies is therefore 74% – 26% = 48%. I am waiting for an open science replication with 95% power before I believe in the moderator effect.
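The observed power values and the R-Index for the two interaction effects can be reproduced approximately. This sketch assumes that, with more than 400 error degrees of freedom, t = √F can be treated as a z-score. Because it does not round the two power values before taking the median, it lands at about 47.5%, slightly below the 48% obtained from the rounded values.

```python
import math
import statistics
from statistics import NormalDist

STD = NormalDist()
Z_CRIT = STD.inv_cdf(0.975)  # two-sided alpha = .05

# Interaction effects reported for Study 2 and Study 2a, F(1, df) with df > 400
f_values = {"Study 2": 6.05, "Study 2a": 7.53}

# Approximation: with this many error df, t = sqrt(F) is treated as a z-score.
powers = {name: STD.cdf(math.sqrt(f) - Z_CRIT) for name, f in f_values.items()}
median_power = statistics.median(powers.values())
success_rate = 1.0                      # both interactions were significant
r_index = median_power - (success_rate - median_power)

for name, power in powers.items():
    print(f"{name}: observed power = {power:.2f}")
print(f"median observed power = {median_power:.2f}, R-Index = {r_index:.2f}")
```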

Third, even if the moderator effect exists, it doesn’t explain Dr. Schnall’s main effect of d = .6.

The second of Huang’s studies was reported to be an exact replication in response to a reviewer’s request, and its observed power was quite close to .8. Is waiting for an open-science replication with 95% power before believing in that effect a standard you think we should apply to all reports of effects before accepting them?

Hi Tony,

I am not saying that my standard should be considered a norm. I think researchers have their own preferences about type-I errors and type-II errors. I personally hate type-I errors a lot more, because I would incorporate a false-positive finding into the theory I am building.

I think it would have been better to ask for 95% power in the exact replication study, but I understand that that would have been asking a bit much.

Thanks for posting.

Thanks for your response. Those are fair points in my view. I’ve sometimes thought that the typical standard of .80 power does a disservice to our hypotheses (why tolerate a .20 type-II error rate but only a .05 type-I error rate?). So having .95 power would seem not only better for replication but also fairer to our hypotheses. Of course, this level of power is easier to achieve in some kinds of research than in others, but I guess we need to face up to the fact that we may be better off not doing a study at all than doing an underpowered study.

Interesting. But who’s behind this page? The “About” section is not too informative…

I am in the process of updating the About section, which will answer your question.

Another replication study has been posted on a blog. Please post links to additional studies in a comment. Thank you.

http://babieslearninglanguage.blogspot.ca/2014/05/another-replication-of-schnall-benton.html

Psychfiledrawer has a few replications of Schnall et al. (2008) on its site:

http://psychfiledrawer.org/chart.php?target_article=48

Thank you for the link.

Melissa Besman, Caton Dubensky, Leanna Dunsmore and Kimberly Daubman. Cleanliness Primes Less Severe Moral Judgments. (2013, February 23). Retrieved 15:22, January 07, 2015 from http://www.PsychFileDrawer.org/replication.php?attempt=MTQ5

d = .46, 95% CI = -.04 to .97, p = .077 (two-tailed), p = .04 (one-tailed)

Julia Arbesfeld, Tricia Collins, Demetrius Baldwin, Kimberly Daubman. Clean Thoughts Lead to Less Severe Moral Judgment. (2014, February 13). Retrieved 15:26, January 07, 2015 from http://www.PsychFileDrawer.org/replication.php?attempt=MTc3

d = .48, 95% CI = -.03 to .98, p = .071 (two-tailed), p = .036 (one-tailed).

Both studies list Dr. Daubman as senior author. It is extremely unlikely that two independent studies would produce such similar results. I assume that the same study was reported twice. I contacted Dr. Daubman to clarify this issue, but didn’t receive a response.

Here is a nice overview as well:

https://curatescience.org/schnalletal.html

Updated link is: http://curatescience.org/#sbh2008a

I just confirmed with Dr. Daubman that the two successful replication studies of Dr. Schnall posted on psychfiledrawer are indeed two independent studies that were conducted as part of a research methods course.

http://psychfiledrawer.org/chart.php?target_article=48

The problem is that the t-values in both studies are virtually identical.

t(58) = 1.8;

t(58) = 1.84.

This is unlikely because sampling error should produce variation in t-values across replication studies. The Test of Insufficient Variance (TIVA) quantifies how unlikely this outcome is.

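TIVA converts each result into a z-score and compares (k – 1) times the variance of the k z-scores with a chi-square distribution with k – 1 degrees of freedom. Unusually small values indicate insufficient variance. With two studies there is one degree of freedom.

The observed variance of the z-scores is .0007 (expected value 1). With one degree of freedom, the test statistic equals this variance, chi-square(1) = .0007, and the probability of a variance this small or smaller is p = P(chi-square(1) ≤ .0007) ≈ .02. In other words, if the two studies had the same true effect and differed only by sampling error, t-values this close together would occur only about 2% of the time.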
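The TIVA calculation for the two t-values can be sketched with the standard library. Following the usual TIVA definition, the sketch takes four steps:

1. Convert each t-value into the z-score that has the same two-sided p-value.
2. Compute the sample variance of the z-scores.
3. Multiply that variance by (k – 1) to get the test statistic.
4. Take the lower-tail chi-square probability as the p-value, because the test asks whether there is too little variance.

Converting t to p by numerical integration is an implementation choice made here to avoid dependencies outside the standard library. The helper names are hypothetical.

```python
import math
import statistics
from statistics import NormalDist

STD = NormalDist()


def t_two_sided_p(t, df, steps=2000):
    """Two-sided p-value for a central t statistic (df > 2), by Simpson integration over
    the chi-square denominator: P(|T| > |t|) = E[2 * Phi(-|t| * sqrt(V / df))]."""
    upper = df + 12 * math.sqrt(2 * df) + 10
    h = upper / steps
    k = df / 2
    total = 0.0
    for i in range(1, steps + 1):  # the v = 0 node has zero density
        v = i * h
        weight = 1 if i == steps else (4 if i % 2 else 2)
        log_pdf = (k - 1) * math.log(v) - v / 2 - k * math.log(2) - math.lgamma(k)
        total += weight * math.exp(log_pdf) * 2 * STD.cdf(-abs(t) * math.sqrt(v / df))
    return total * h / 3


def chi2_cdf(x, df):
    """Lower-tail chi-square probability via the series for the regularized lower incomplete gamma."""
    if x <= 0:
        return 0.0
    a, y = df / 2, x / 2
    term = total = 1.0 / a
    n = 1
    while term > 1e-15 * total:
        term *= y / (a + n)
        total += term
        n += 1
    return total * math.exp(a * math.log(y) - y - math.lgamma(a))


def tiva(t_values, dfs):
    """Test of Insufficient Variance: returns (variance of z, chi-square, lower-tail p)."""
    z = [STD.inv_cdf(1 - t_two_sided_p(t, df) / 2) for t, df in zip(t_values, dfs)]
    var_z = statistics.variance(z)          # sample variance; expected to be about 1
    k = len(z)
    chi_square = (k - 1) * var_z
    return var_z, chi_square, chi2_cdf(chi_square, k - 1)


var_z, chi_square, p = tiva([1.80, 1.84], [58, 58])
print(f"var(z) = {var_z:.4f}, chi-square(1) = {chi_square:.4f}, p = {p:.3f}")
```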

In short, it is improbable that these are real data that just happen to replicate the original finding twice with p = .039 and p = .035 (one-tailed).

These results are statistically too improbable to be considered successful replication studies of Dr. Schnall’s original study.

Read more about TIVA here:

https://replicationindex.wordpress.com/2014/12/30/the-test-of-insufficient-variance-tiva-a-new-tool-for-the-detection-of-questionable-research-practices/