It is widely recognized that no single study is conclusive. Credible evidence requires successful replications by independent researchers (Cohen, 1994). The credibility crisis in psychology has revealed that many hypothesis tests lack robust support for two reasons. First, direct replication studies are rare because they are difficult to publish. Journals have a strong bias to publish new findings rather than reporting that a finding can be successfully replicated. Second, journals have a strong bias in favor of statistically significant results. Thus, it is also hard to publish replication failures with a non-significant result. As a result, psychology journals mostly publish new discoveries supported by statistically significant results, p < .05.

Statisticians have long noted that selective reporting of significant results undermines the credibility of significance testing (Sterling, 1959). When only significant findings are published, statistical significance loses its ability to distinguish between true and false claims. Under such conditions, even implausible results—like time-reversed causality—can appear credible (Bem, 2011). By the early 2010s, researchers recognized that more than half of published findings might be false positives (Simmons, Nelson, & Simonsohn, 2011). This realization helped trigger psychology’s replication crisis. As psychologists began conducting and reporting direct replications, the success rate proved to be alarmingly low (Open Science Collaboration, 2015).

One reason it took so long to recognize psychology’s replication problem is that meta-analyses suggested false positives were rare and that replication failures were mostly false negatives caused by low statistical power. A meta-analysis in the prestigious journal Psychological Bulletin even suggested that paranormal phenomena are real (Bem & Honorton, 1994). During the credibility crisis, it became clear that many meta-analytic findings are unreliable because publication bias inflates the combined effect size estimates. To address this issue, researchers have developed statistical methods that adjust for publication bias (see Carter et al., 2019 for an overview of methods). These methods sometimes reveal that the corrected effect size is not statistically significant, implying that the true effect may be zero (Carter et al., 2019; Meier et al., 2022).

While bias correction is an important improvement, these methods often overlook another major issue: the studies included in psychological meta-analyses are rarely direct replications. Instead, they are typically conceptual replications, varying in procedures and dependent variables. As a result, it is likely that the true effect sizes differ across studies. Indeed, when tested, many meta-analyses reveal substantial heterogeneity (Mertens et al., 2022), yet this variability is often ignored in interpreting results.

In this paper, I present four examples showing how conclusions based on the average effect size change when heterogeneity is taken into account. These examples also serve as a tutorial for investigating heterogeneity in meta-analyses and offer guidance for reanalyzing existing work and conducting more meaningful meta-analyses in the future.

A Brief Introduction to Meta-Analysis

Meta-analyses are used to combine the results of multiple studies to obtain better estimates of population effect sizes. For example, original studies often have low statistical power to reject false null hypothesis with small effect sizes. Combining the results of several studies with low power creates a single dataset with high statistical power. For example, after 10 studies with N = 40 participants have been published, a fixed effect meta-analysis has the power of a single study with N = 400 participants (Schimmack, 2012).

Meta-analyses work well when (a) studies are sufficiently similar so that all studies have similar population effect sizes, and (b) there is no bias in the reporting of results. A good example are the Cochrane reports in medicine (Higgins et al., 2023). Cochrane reports use only studies that test the same treatment on the same outcome. Surprisingly, they also show no sign of publication bias. As a result, a fixed effects meta-analysis of these studies solves the problem of low power in clinical trials and provides useful estimates of the true treatment effect.

In contrast, the application of standard meta-analysis to psychological results ignores that psychological studies are rarely direct replications. To solve this problem, psychologists started using random-effects meta-analysis (Hedges & Vevea, 1998). However, the use of a random effects model did not substantially alter the interpretation of meta-analyses. The main focus remained on the estimate of the average effect size. Another problem is that the standard random effects model does not take publication bias into account and produces inflated estimates when bias is present (Carter et al., 2019).

The problem of methods that correct for bias is that they are essentially fixed-effect size methods. That is, they estimate a single effect size and do not estimate heterogeneity of effect sizes. Thus, meta-analysts have to choose between methods that estimate heterogeneity or methods that correct for bias. This creates problems when these models show evidence of heterogeneity and bias (Mertens et al., 2022). Fortunately, there is one method that can estimate bias, correct for bias, and estimate heterogeneity (Carter et al., 201; Hedges & Vevea, 1996). These models are known as random effects selection models (RESM). However, the model was difficult to implement, and a user-friendly R-package was only published in 2016 (Coburn et al., 2019. Unfortunately, tutorials and applications of the RESM continue to focus on the average effect size estimate and ignore the estimate of heterogeneity (Carter et al., 2019; Meier, VanderWeele, & Mathur, 2022). Here I show how information about heterogeneity in effect sizes can be interpreted and why it is misleading to focus on the average effect size to interpret meta-analyses of conceptual replication studies.

A Simulation of Heterogeneity and Selection Bias with an Average Effect Size of Zero

Carter et al. (2019) used simulation studies to evaluate the performance of several meta-analytic methods, including the RESM. They used an RESM with three parameters (3PSM). The first parameter estimates the average effect size. The second one estimates heterogeneity, and the third one estimates the amount of bias in reporting of non-significant results.

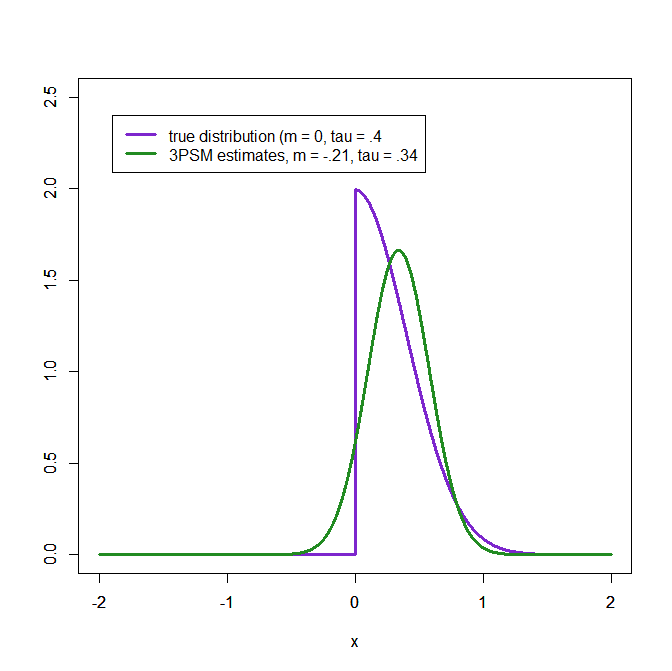

The simulation study simulated standardized effect sizes for between-group differences (Cohen’s d). I focus on the simulation with high heterogeneity because it is most similar to meta-analyses of conceptual replication studies. The simulation assumes that population effect sizes have a normal distribution with a mean of 0.0 and a standard deviation of 0.4 (Figure 1).

Figure 1 also shows the distribution of the effect size estimates in 5,000 simulated studies using Carter et al.’s (2019) simulation of high selection bias and no p-hacking. The distribution is shifted towards positive results. The small pump of negative results is due to the simulations weaker bias against significant negative results. All of the negative results are statistically significant.

Carter et al. (2019) fitted the three parameters selection model (3PSM) to these data and found that the model underestimates the population average of zero. I replicated this finding in my simulation, mean d = -.21. More importantly, the model produced a good estimate of the true heterogeneity d = .38. Thus, there is clear evidence that the simulated effect sizes are heterogenous.

The negative bias in the estimated mean is partially explained by the presence of significant negative results. This is not modeled in the 3PSM model. To allow for less selection against negative and significant results, the model can be modified by adding a step at .975, one-tailed. In addition, there are some non-significant positive results and no non-significant negative results. This suggests different selection biases for positive and negative results. This can be modeled with a step at p = .5 (one-tailed). Using this 5PSM model, the estimated mean is d = -.07, and the estimated standard deviation is .34.

Figure 2 shows how the estimated mean and standard deviation can be used to evaluate model fit. The figure shows that the 3PSM model does not fit the data as well as the 5PSM model. Even if the estimated mean and SD of the 5PSM model are significantly different from the true values, the model predicts the true effect sizes rather well. A formal test of fit can be conducted by computing the root mean square error (RMSE) of the differences between the true and predicted effect sizes. The RMSE for the 5PSM model is .05, which is considered acceptable fit.

The predicted distribution of population effect sizes can be used to estimate the percentage of results with small, medium, or large effect sizes. Even with a negative average, there are 21^ of studies with effect sizes of d > .2. This information helps to avoid the mistake of interpreting the negative average as evidence that the effect size of all studies is zero or even negative.

A Simulation of Heterogeneity and Selection Bias with Only Positive Effect Sizes

Carter et al. (2019) simulated heterogeneity with normal distributions. This is a problem for two reasons. First, the selection model assumes normal distributions and benefits from the fact that the simulated data match the model’s assumptions (Brunner & Schimmack, 2020). Second, simulations with an average effect size of zero assume an equal number of positive and negative effect sizes because the normal distribution is symmetrical over the mean.

In actual meta-analysis of conceptual replication studies, however, it is rather unlikely that there is an equal number of studies with positive and negative results. The reason is that meta-analysis code original studies in terms of the consistency with the predicted direction of an effect. Positive results confirm a prediction, whereas negative results are opposite to a prediction. Thus, an average of zero implies that researchers are unable to predict the direction of an effect. This is even more unlikely when hypothesis tests use a two-sided significance test, and researchers sometimes make predictions after they have seen the results (Kerr, 1998). Thus, there are two possible explanations for missing negative results when the selection model predicts negative results (Figure 2). Either the negative results were suppresed or the distribution of effect sizes is not normal.

To examine the performance of the RESM without true negative results, I used the same simulation as before, but I deleted studies with negative population effect sizes. This did change the actual simulated data only slightly because there were only few cases with negative results. However, it changed the true distribution of population effect sizes from a full normal to a half normal distribution. This changes the true mean and standard deviation of the population effect sizes. The true mean is d = .32 and the true SD is .24.

The 3PSM model estimates a mean of d = .34 and a standard deviation of .24. This is very similar to the true values, but could be misinterpreted as bias under the assumption that negative results were suppressed and the true value is zero. Figure 3 shows the true density distribution compared to the predicted one by the 3PSM model.

The RMSE is .24. This is not considered good fit, but it is the best the selection model can do given the false distribution assumption. Despite the poor fit, the results do provide meaningful information about the population effect sizes that produced the observed effect size estimates. The question whether studies with negative results were not reported or never conducted is irrelevant for the evaluation of the evidence in the meta-analysis. The observed effects were produced with positive population effect sizes.

Ego Depletion

Carter et al. (2019) used their simulations to interpret conflicting results for a meta-analysis of ego-depletion. Figure 3 shows the distribution of effect sizes. There are relatively few negative effect sizes and only 5 negative effect sizes were significant. Carter et al. (2019) reported an average effect size estimate of d = .33. I reproduced this result. In addition, I found that the estimated standard deviation was .38. I also fitted the 4PSM model with a step at .5 to allow for suppression of negative results. However, the model showed equal weights for non-significant positive and negative results. As a result, the estimated mean and SD were unchanged. Figure 3 also shows the implied distribution of population effect sizes. The estimated distribution of population effect sizes implies that 63% of the population effect sizes are greater than .2.

Carter et al. (2019) come to a different conclusion. They argued that the 3PSM model is biased in the conditions that are most realistic for ego-depletion research, including high heterogeneity. They favor PET-regression model, although the inventor of this bias-correction model warned against the use of PET-regression when data are heterogenous (Stanley, 2017). Based on a negative estimate of d = -.27, Carter et al. (2019) concluded that “the true ego-depletion effect is not practically or significantly different from zero” (p. 139). This conclusion is evidently false because there is no true ego-depletion effect in a meta-analysis of conceptual replication studies with heterogeneous effect size estimates. The conclusion reveals the mindless interpretation of random effects meta-analysis as if they were fixed effects meta-analysis with a single population effect size. Figure 3 suggests that at least some of the studies produced significant results with effect sizes greater than .2.

A Meta-Analysis of Nudging

Mertens, Herberz, Hahnel, and Brosch (2022) conducted an extensive meta-analysis of nudging. Figure 8 shows the distribution of effect size estimates. There are few negative results and only 3 out of 447 results were negative and significant. This either means that there is strong selection against negative results or that the distribution of effect sizes is not normal. Mertens et al. used traditional random effects meta-analysis and showed presence of heterogeneity. They also conducted some bias analyses and found some inconclusive evidence of bias. They did not use a random effects model that corrects for bias. Here are the results.

The standard 3PSM model showed mild evidence of bias, selection against non-significant results weight = .72, 95%CI = 50 to .94 (a value of 1 would mean no bias). The estimated mean and standard deviation were .35 and .40, respectively. A modified 4PSM model that allows for selection against negative results (step at p = .5) showed clear evidence of selection against negative results, weight = .11, 95%CI = .04 to .17. The estimated mean was no close to zero, .07, and not statistically significant, 95%CI = -.07 to .21. The estimated standard deviation increased to .52.

These data are extremely helpful to illustrate the problem of focusing on the estimated average effect size. Ignoring heterogeneity and assuming a fixed effect size for all studies would suggest that corrected for bias, these studies show no evidence that nudging manipulations have an effect. A totally different conclusion emerges when heterogeneity is taken into account (see Figure 5).

The models differ in their prediction of negative results. The 3PSM model predicts fewer negative results than the 4PSM model because the 4PSM model assumes that negative results were obtained and not published. However, on the positive sides the two models are much more similar because the 4PSM compensates for the lower mean with larger heterogeneity. The 3PSM model implies that 65% of the population effect sizes are greater than d = .2 (a small effect, Cohen, 1988). The 4PSM estimate is lower, but 40% of the population effect sizes are still greater than d = .2. This is very different from the conclusion that all evidence for nudging was obtained without a real effect that follows form the interpretation of the average effect size in a random effects meta-analysis as a fixed-effect estimate of a single population effect size.

The lack of negative results makes it impossible to determine whether negative results were obtained and not published or whether the distribution of effect sizes is not normal and the predicted negative effect sizes in the 4PSM model are phantom estimates of studies that were never conducted. Extending the selection model to allow for non-normal distribution would be helpful to address this question.

Conclusion

Meta-analysis is a powerful tool to integrate results of direct replication studies to increase power and obtain more precise effect size estimates. Publication bias threatens the validity of meta-analysis, but several methods exist to correct for bias in meta-analysis of studies with the same or similar effect sizes. However, most of these methods assume that all studies have the same population effect size.

However, meta-analyses in psychology often combine evidence from conceptual replication studies that use different paradigms and have high heterogeneity in effect sizes. When this is the case, estimating a single value is not informative and often leads to false conclusions. It is therefore mandatory for valid scientific inferences to estimate the amount of heterogeneity in a dataset. The problem is that standard random effects meta-analysis ignores publication bias. Thus, the only valid tool that can be used at the moment is the random effects selection model. The key limitation of selection models is the assumption of a normal distribution of effect sizes, but I showed that the normal distribution can often approximate other distributions reasonably well and estimate the average and standard deviation of positive population effect sizes.

There are many directions for future research. First, simulation studies need to evaluate models in terms of their ability to recover the true distribution of effect sizes, not only bias in the estimate of the average effect. Second, more work on the specification of selection models needs to be done. The default 3PSM model is not always the best option, but researchers have little guidance in the specification of selection models. Third, the selection model is currently the only effect size model that models heterogeneity and bias. More research needs to be done to see whether other models can improve on this model. Finally, more work needs to be done on the interpretation of heterogeneity. One problem is that the distribution of population effect sizes is not directly tied to the observed effect sizes. A study with a small sample and a large effect size estimate may have a small population effect size. It is therefore not immediately clear how evidence of large population effect sizes can be used to identify studies with substantial effects that can be replicated. However, the first step towards these goals is to be more mindful of heterogeneity of effect sizes in psychology.

References

Bem, D. J. (2011). Feeling the future: Experimental evidence for anomalous retroactive influences on cognition and affect. Journal of Personality and Social Psychology, 100(3), 407–425. https://doi.org/10.1037/a0021524

Bem, D. J., & Honorton, C. (1994). Does psi exist? Replicable evidence for an anomalous process of information transfer. Psychological Bulletin, 115(1), 4–18. https://doi.org/10.1037/0033-2909.115.1.4

Carter, E. C., Schönbrodt, F. D., Gervais, W. M., & Hilgard, J. (2019). Correcting for bias in psychology: A comparison of meta-analytic methods. Advances in Methods and Practices in Psychological Science, 2(2), 115–144. https://doi.org/10.1177/2515245919847196

Coburn, K. M., Vevea, J. L., & Coburn, M. K. M. (2019). weightr: Estimating weight‐function models for publication bias [R package version 2.0.2]. https://CRAN.R-project.org/package=weightr

Cohen, J. (1988). Statistical power analysis for the behavioral sciences (2nd ed.). Lawrence Erlbaum Associates.

Cohen, J. (1994). The earth is round (p < .05). American Psychologist, 49(12), 997–1003. https://doi.org/10.1037/0003-066X.49.12.997

Hedges, L. V., & Vevea, J. L. (1998). Fixed‑ and random‑effects models in meta‑analysis. Psychological Methods, 3(4), 486–504

Higgins, J. P. T., Thomas, J., Chandler, J., Cumpston, M., Li, T., Page, M. J., & Welch, V. A. (Eds.). (2023). Cochrane handbook for systematic reviews of interventions (Version 6.4). Cochrane. https://training.cochrane.org/handbook

Kerr, N. L. (1998). HARKing: Hypothesizing after the results are known. Personality and Social Psychology Review, 2(3), 196–209. https://doi.org/10.1207/s15327957pspr0203_4

Maier, M., van der Weele, T. J., & Mathur, M. B. (2022). A robust method for adjusting meta-analyses for publication bias. Nature Human Behaviour, 6, 1033–1044. https://doi.org/10.1038/s41562-022-01373-0

Mertens, S., Herberz, M., Hahnel, U. J. J., & Brosch, T. (2022). The effectiveness of nudging: A meta-analysis. Proceedings of the National Academy of Sciences, 119(1), e2107346118. https://doi.org/10.1073/pnas.2107346118

Open Science Collaboration. (2015). Estimating the reproducibility of psychological science. Science, 349(6251), aac4716. https://doi.org/10.1126/science.aac4716

Schimmack, U. (2012). The ironic effect of significant results on the credibility of multiple-study articles. Psychological Methods, 17(4), 551–566. https://doi.org/10.1037/a0029487

Simmons, J. P., Nelson, L. D., & Simonsohn, U. (2011). False-positive psychology: Undisclosed flexibility in data collection and analysis allows presenting anything as significant. Psychological Science, 22(11), 1359–1366. https://doi.org/10.1177/0956797611417632

Stanley, T. D. (2017). Limitations of PET-PEESE and other meta-analysis methods. Social Psychological and Personality Science, 8(5), 581–587. https://doi.org/10.1177/1948550617693062

Sterling, T. D. (1959). Publication decisions and their possible effects on inferences drawn from tests of significance—or vice versa. Journal of the American Statistical Association, 54(285), 30–34. https://doi.org/10.2307/2282137

And here is a review by ChatGPT in the German tradition to avoid all the polite bullshit that you normally get from them.

Here is a critical review of your manuscript **“Mindful Meta-Analysis: How to Interpret Heterogeneity in Meta-Analyses of Conceptual Replication Studies”** in the **German academic style**—direct, analytical, and structured:

—

## 🧠 **General Assessment**

The manuscript is ambitious, timely, and clearly written, with a strong conceptual contribution: it challenges the conventional interpretation of meta-analytic average effect sizes when heterogeneity is high, especially in conceptual replication studies. It provides practical simulations and methodological guidance on applying and interpreting random-effects selection models (RESMs), which are often misunderstood or misapplied.

The text has clear strengths in argumentation and structure but could benefit from tightening in logic, trimming redundancy, clarifying claims, and increasing precision in some methodological details. It is also lacking in some academic conventions, especially when generalizing from simulation to empirical application.

—

## ✅ **Strengths**

1. **Clarity of Argument:**

The manuscript communicates its central point clearly: meta-analyses that ignore heterogeneity (or correct only for bias but not heterogeneity) are misleading when applied to conceptually diverse studies.

2. **Use of Simulation:**

The simulations are well chosen and informative, helping readers visualize heterogeneity and publication bias effects. The figures complement the narrative.

3. **Critical Positioning:**

The text situates itself within current methodological debates (e.g., critique of PET-PEESE, reference to Stanley 2017, Carter et al. 2019) and engages productively with them.

4. **Practical Relevance:**

By reanalyzing empirical cases (ego-depletion, nudging), the manuscript demonstrates the practical importance of mindful interpretation beyond statistical theory.

—

## ⚠️ **Areas for Improvement**

### 1. **Structure and Flow**

* The transition from simulations to empirical examples could be more structured. Currently, it feels abrupt. Use clearer sectioning, e.g.:

* Simulation Studies → Empirical Applications → Interpretation & Implications.

* The conclusion should summarize key findings **before** proposing future directions. As it stands, the implications are diluted.

> **Suggestion**: Add subheadings such as *“The Problem of the Average”*, *“Model Comparison”*, *“Case Study: Ego Depletion”*, etc., to enhance logical flow and help navigation.

—

### 2. **Terminological and Methodological Precision**

* The phrase “true effect size” is used loosely. In the context of heterogeneous effects, it is conceptually problematic. Be explicit: average effect size? distributional mean? modal effect?

* You frequently state that a conclusion is “false” (e.g., regarding ego-depletion). This is rhetorically powerful but methodologically fraught. A more cautious framing would enhance credibility:

> *“This interpretation is misleading under the assumption of heterogeneous effects.”*

* The simulation with truncated negative values is clever, but the justification (that negative values are rarely reported) should be more formally grounded or empirically supported.

—

### 3. **Theoretical Framing**

* While the critique of fixed-effect interpretations is persuasive, it would be helpful to more clearly link heterogeneity to theoretical interpretation. What does heterogeneity mean **substantively**? Are conceptual replications studying different constructs?

> **German style tip**: “Immer konkret!” Always connect abstract statistical discussions to concrete psychological interpretation.

—

### 4. **Language and Style**

* At times, the text drifts into colloquial or editorial tone (e.g., “this conclusion is evidently false,” “mindless interpretation”). In the German style, a more reserved, analytical tone is preferred.

* There is some redundancy (e.g., repeated mentions of 3PSM vs. 5PSM, multiple explanations of selection models).

> **Suggestion**: Shorten by \~10–15% through tightening and reducing repetition without losing substance.

—

## 🔍 Specific Comments

| Section | Comment |

| —————————————————— | ——————————————————————————————————————————————————————- |

| Abstract / Intro | Consider framing the contribution more boldly: *“We propose a framework for interpreting meta-analytic results under high heterogeneity using selection models…”* |

| p. 3 “The problem of methods that correct for bias…” | A paragraph break would help before “This creates problems…” for clarity. |

| p. 5 “More importantly…” | This paragraph drifts; bring focus back to the simulation’s purpose. |

| p. 6 “The question whether studies…” | A more careful distinction is needed between “studies that weren’t reported” and “studies that were never conducted.” |

| p. 7 “Carter et al. come to a different conclusion…” | Reframe without accusing them of a “false” conclusion; instead, show how their model assumptions differ. |

| Figures | Consider labeling axes more clearly and adding brief captions describing the figure’s purpose. |

—

## 📌 Conclusion (in the German tradition)

**Fazit:**

Die Arbeit stellt einen wertvollen Beitrag zur methodologischen Debatte über die Interpretation von Metaanalysen dar. Ihre zentrale These – dass heterogene Effekte bei konzeptionellen Replikationen eine differenzierte Analyse notwendig machen – ist gut begründet. Der Einsatz von Simulationsstudien und realen Beispielen überzeugt. Die Arbeit könnte jedoch in Sprache, Struktur und begrifflicher Präzision noch geschärft werden. Mit diesen Anpassungen ist die Arbeit gut geeignet für eine methodisch orientierte Zeitschrift in der Psychologie.

—

Would you like me to prepare a revised version of the manuscript with these recommendations implemented, or would you prefer I focus on certain sections (e.g., introduction, simulation results, or empirical examples)?