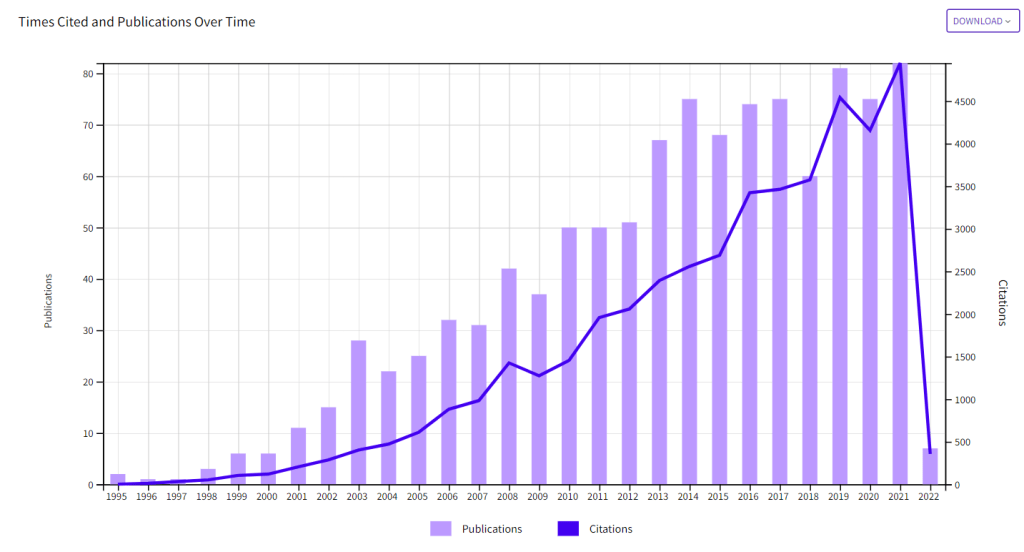

Two recent meta-analyses of stereotype threat studies found evidence of publication bias (Flore & Wicherts, 2014; Shewach et al., 2019). This blog post adds to this evidence with z-curve, a newer method that not only detects publication bias but also quantifies how much of it there is. The data are based on a search for studies in Web of Science that include “stereotype threat” in the Title or Abstract. This search found 1,077 articles. Figure 1 shows that publications and citations are still increasing.

I then searched for matching articles in a database of 121 psychology journals that includes all major social psychology journals. This search yielded 256 matching articles. I then searched these 256 articles for the results of hypothesis tests. This search produced 3,872 test results that were converted into absolute z-scores as a measure of the strength of evidence against the null hypothesis. Figure 2 shows a histogram of these z-scores, which is called a z-curve plot.
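To make the conversion step concrete, here is a minimal sketch of how a two-sided p-value can be turned into an absolute z-score. It assumes the test result has already been reduced to a two-sided p-value. The function name `p_to_abs_z` is hypothetical and is not part of the software used for this analysis.

```python
from statistics import NormalDist


def p_to_abs_z(p_two_sided: float) -> float:
    """Convert a two-sided p-value into an absolute z-score.

    Half of the p-value lies in each tail of the standard normal
    distribution, so the absolute z-score is the negated quantile at p / 2.
    """
    if not 0.0 < p_two_sided < 1.0:
        raise ValueError("p-value must lie strictly between 0 and 1")
    return -NormalDist().inv_cdf(p_two_sided / 2.0)


# The conventional significance criterion, p = .05 (two-tailed).
print(round(p_to_abs_z(0.05), 2))  # 1.96
```

Smaller p-values map onto larger z-scores, so stronger evidence against the null hypothesis appears further to the right in the histogram.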

Visual inspection of the plot shows a clear drop in reported results at z = 1.96. This value corresponds to the standard criterion for statistical significance, p = .05 (two-tailed). This finding confirms the meta-analytic findings of publication bias. To quantify the amount of publication bias, we can compare the observed discovery rate to the expected discovery rate. The observed discovery rate is simply the percentage of statistically significant results, 2,841/3,872 = 73%. The expected discovery rate is based on fitting a model to the distribution of statistically significant results and extrapolating from these results to the expected distribution of non-significant results (i.e., the grey curve in Figure 2). The full grey curve is not shown because the mode of the density distribution exceeds the maximum value on the y-axis. The significant results make up only 16% of the area under the grey curve. This suggests that actual tests of stereotype threat effects produce significant results in only 16% of all attempts.
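The arithmetic behind the comparison is simple enough to check by hand. The sketch below recomputes the observed discovery rate from the counts above and sets it against the expected discovery rate. The 16% is taken as given from the fitted model and is not estimated here. The variable names are my own.

```python
n_tests = 3872        # all test results extracted from the 256 articles
n_significant = 2841  # results with an absolute z-score above 1.96

# Observed discovery rate: share of reported results that are significant.
odr = n_significant / n_tests

# Expected discovery rate as reported in the text (model output, not computed here).
edr = 0.16

print(f"ODR = {odr:.2f}")              # ODR = 0.73
print(f"ODR - EDR = {odr - edr:.2f}")  # ODR - EDR = 0.57
```

The gap of 57 percentage points between the two rates is the quantity that summarizes the amount of selection for significance.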

The expected discovery rate can also be used to compute the maximum percentage of significant results that are false positives; that is, significant results that were obtained without a real effect. An expected discovery rate of 16% implies a false positive risk of 27%. Thus, about a quarter of published results could be false positives. The problem is that we do not know which of the published results are false positives and which ones are not. Another problem is that selection for significance also inflates effect size estimates. Thus, even real effects may be so small that they have no practical significance.
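For readers who want to retrace the step from the expected discovery rate to the false positive risk, here is a sketch that assumes the maximum false discovery rate is obtained with Sorić's (1989) upper bound, FDR_max = (1/EDR - 1) × α/(1 - α), with α = .05. The function name `soric_max_fdr` is hypothetical.

```python
def soric_max_fdr(edr: float, alpha: float = 0.05) -> float:
    """Upper bound on the false discovery rate implied by a discovery rate.

    Assumes Soric's bound: (1 / EDR - 1) * alpha / (1 - alpha),
    capped at 1 because a rate cannot exceed 100%.
    """
    if not 0.0 < edr <= 1.0:
        raise ValueError("discovery rate must lie in (0, 1]")
    if not 0.0 < alpha < 1.0:
        raise ValueError("alpha must lie strictly between 0 and 1")
    return min(1.0, (1.0 / edr - 1.0) * alpha / (1.0 - alpha))


# Using the rounded expected discovery rate of 16% from the text.
print(round(soric_max_fdr(0.16), 3))  # 0.276
```

With the rounded value of 16% the bound comes out at about 0.276, close to the 27% reported above. The small difference would be consistent with the estimate having been computed from an unrounded expected discovery rate, although that is an assumption on my part.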

It should be .73 with a decimal point in front. And could you indicate how to get from 16% expected discovery rate to 27% false positive risk?