The basic idea of terror management theory is that humans are aware of their own mortality and that thoughts about one’s own death elicit fear. To cope with this fear, humans engage in various behaviors that reduce death anxiety. To study these effects, participants in experimental studies are asked to think either about death or about some other unpleasant event (e.g., dental visits). Numerous studies show statistically significant effects of these manipulations on a variety of measures.

Figure 1 shows that terror management research has grown exponentially. Although the rate of publications is leveling off, citations are still increasing exponentially.

While the growth of terror management research suggests that the theory rests on a large body of evidence, it is not clear whether this evidence is trustworthy. The reason is that psychologists have used a biased statistical procedure to test theories. When a statistically significant result is obtained, the results are written up and submitted for publication. However, when the results are not significant and do not support a prediction, they typically remain unpublished. It was pointed out a long time ago that this bias can produce entire literatures of significant results in the absence of any real effect (Rosenthal, 1979).

Recent advances in statistical methods make it possible to examine the strength of evidence for a theory after taking publication bias into account. To use this method, I searched Web of Science for articles with the topic “terror management”. This search retrieved 2,394 articles. I then searched for matching articles in a database of 121 psychology journals that includes all major social psychology journals (Schimmack, 2022). This search produced a list of 259 articles. I then searched these 259 articles for statistical tests and converted the results of these tests into absolute z-scores as a measure of the strength of evidence against the null-hypothesis. Figure 2 shows the z-curve plot of the 4,014 results.
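The conversion of test results into absolute z-scores can be sketched in a few lines of Python. This is only an illustration, not the extraction code used for the analysis: the function name `p_to_abs_z` is hypothetical, and the sketch assumes that each test result has already been reduced to a two-tailed p-value.

```python
from statistics import NormalDist


def p_to_abs_z(p_two_tailed: float) -> float:
    """Convert a two-tailed p-value into an absolute z-score.

    Hypothetical helper for illustration; assumes 0 < p <= 1.
    """
    if not 0.0 < p_two_tailed <= 1.0:
        raise ValueError("p-value must be in the interval (0, 1]")
    # Half of the two-tailed p-value lies in the lower tail of the
    # standard normal distribution; negating that quantile gives |z|.
    return -NormalDist().inv_cdf(p_two_tailed / 2.0)


# Example p-values: the conventional .05 criterion and the .10 level.
for p in (0.05, 0.10):
    print(f"p = {p:.2f} -> |z| = {p_to_abs_z(p):.3f}")
```

Under these assumptions, p = .05 maps to a z-score of about 1.96 and p = .10 to about 1.645, so smaller p-values correspond to larger z-scores and stronger evidence against the null-hypothesis.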

The z-curve shows a peak at the criterion for statistical significance (z = 1.96 corresponds to p = .05, two-tailed). This peak is not a natural phenomenon. Rather, it reflects the selective reporting of supporting evidence. Whereas 72% of the published results are significant, the z-curve model that is fitted to the distribution of significant z-scores estimates that studies had, on average, only 14% power to produce significant results. This difference between the observed discovery rate of 72% and the expected discovery rate of 14% shows that unscientific practices dramatically inflate the evidence in favor of terror management theory. It also means that reported effect sizes are inflated. Moreover, an expected discovery rate of 14% implies that up to 32% of the significant results could be false positives that were obtained without any real effect. The upper limit of the 95% confidence interval even allows for 71% false positive results. The problem is that it is unclear which published results reflect real effects that could be replicated. Thus, it is currently unclear how reminders of death influence human behavior.
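The step from an expected discovery rate to a maximum false positive rate can be reproduced with a short calculation. The sketch below assumes that the bound is Sorić's (1989) upper limit on the false discovery rate, (1/EDR − 1) × α/(1 − α), with α = .05. The text does not name the formula, and the function name `max_false_discovery_rate` is hypothetical.

```python
def max_false_discovery_rate(edr: float, alpha: float = 0.05) -> float:
    """Upper bound on the share of significant results that are false positives.

    Assumes Soric's (1989) bound; `edr` is the expected discovery rate
    as a proportion (e.g., 0.14 for 14%). Hypothetical helper.
    """
    if not 0.0 < edr <= 1.0:
        raise ValueError("edr must be in the interval (0, 1]")
    if not 0.0 < alpha < 1.0:
        raise ValueError("alpha must be in the interval (0, 1)")
    bound = (1.0 / edr - 1.0) * alpha / (1.0 - alpha)
    # A proportion cannot exceed 1, so cap the bound at 100%.
    return min(1.0, bound)


# Expected discovery rate reported for the automated extraction.
print(f"EDR = 14% -> max. false positives = {max_false_discovery_rate(0.14):.0%}")
```

With an expected discovery rate of 14%, the bound comes to about 32%, which matches the figure in the text. The bound reaches 100% once the expected discovery rate falls to the 5% significance criterion.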

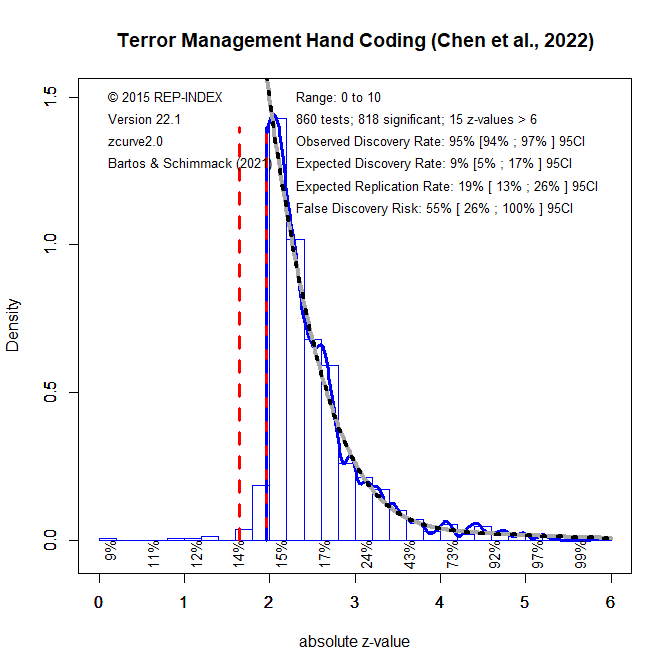

One limitation of the method used to generate Figure 2 is the automatic extraction of all test results from articles. A better method uses hand-coding of focal hypothesis tests of terror management theory. Fortunately, an independent team of researchers conducted a hand-coding analysis of terror management studies (Chen, Benjamin, Lai, & Heine, 2022).

The results mostly confirm the results of the automated analysis. The key difference is that the effect of selection for significance is even more evident because researchers hardly ever report non-significant results for focal hypothesis tests. The observed discovery rate of 95% is consistent with analyses by Sterling over 50 years ago (Sterling, 1959). Moreover, most of the non-significant results fall between z = 1.65 (p = .10) and z = 1.96 (p = .05), a range that is often still used as evidence to reject the null-hypothesis. While the observed discovery rate in published articles is nearly 100%, the expected discovery rate is only 9%, and its 95% CI includes 5%, the rate expected by chance alone. Thus, the data provide no credible evidence for any terror management effects, and it is possible that 100% of the significant results are false positives without any real effect.
