The main problem with claims about the status of science ranging from “most published results are false” (Ioannidis, 2005) to “science is doing very well” (Shiffrin) is that they are not based on empirical facts. Everybody has an opinion, but opinions are cheap.

Shiffrin is a cognitive psychologist, and it would be strange for him to comment on other fields, such as social psychology, that to the best of my knowledge he does not follow.

The main empirical evidence about cognitive psychology is the replication rate in the Open Science Collaboration project. In this project, about 30 studies were replicated, and 50% produced a significant result in the replication attempt. I think it is fair to call this a glass half full and half empty. It supports neither the claim that most published results in cognitive psychology are false positives nor the claim that cognitive psychology is doing very well. We can simply ignore these unfounded proclamations.

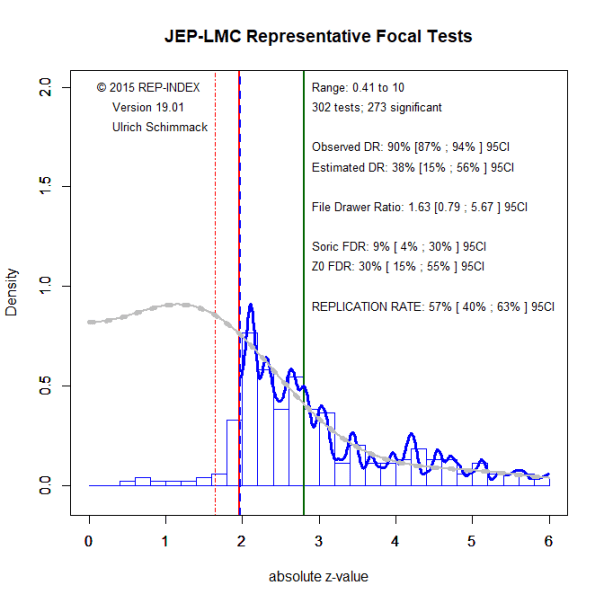

Another piece of evidence comes from coding published results in the Journal of Experimental Psychology: Learning, Memory and Cognition. The project is ongoing, but so far a representative sample of tests from 2008, 2010, 2014, and 2017, as well as the most cited articles, has been coded. The results have been analyzed with z-curve.19.1 (see detailed explanation of z-curve output here).

Consistent with the results from actual replication attempts, z-curve predicts that 57% (95% CI: 40-63%) of published results would produce a significant result if the study were replicated exactly with the same sample size.

Z-curve also estimates the maximum false discovery rate (Soric, 1989). The estimate is that up to 9% of all significant results could be false positives if the null-hypothesis is defined as no effect or an effect in the opposite direction. Under a more liberal criterion that also counts studies with low power (< 17%) as part of the null-hypothesis, the false positive risk increases to 30%.
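For readers who want to see how a maximum false discovery rate follows from a discovery rate, here is a minimal sketch of Soric's bound. It assumes the standard form of the bound, FDR_max = (1/DR - 1) × α/(1 - α), where DR is the share of all conducted tests that reached significance. The function name `soric_max_fdr` and the input values are illustrative assumptions; they are not the z-curve code and not the estimates reported above.

```python
def soric_max_fdr(discovery_rate: float, alpha: float = 0.05) -> float:
    """Upper bound on the false discovery rate (Soric's bound).

    discovery_rate: share of all conducted tests that were significant
                    (an assumed input; z-curve estimates it from the data).
    alpha:          significance criterion of the individual tests.
    """
    if not 0 < discovery_rate <= 1:
        raise ValueError("discovery_rate must be in (0, 1]")
    if not 0 < alpha < 1:
        raise ValueError("alpha must be in (0, 1)")
    bound = (1 / discovery_rate - 1) * alpha / (1 - alpha)
    # The bound is a proportion, so cap it at 1.
    return min(1.0, bound)


if __name__ == "__main__":
    # Hypothetical discovery rates, chosen only to show the shape of the bound.
    for dr in (0.10, 0.25, 0.50, 0.75):
        print(f"discovery rate {dr:.2f} -> max FDR {soric_max_fdr(dr):.3f}")
```

The bound shrinks quickly as the discovery rate rises, which is why a field with a moderate discovery rate can have a low maximum false discovery rate even when replicability is far from perfect.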

These results suggest that cognitive psychology is not in a crisis and publishes mostly replicable results. Thus, reality is closer to Shiffrin’s than Ioannidis’s opinion, but I would not characterize the state as very good. The z-curve graph shows clear evidence of publication bias, and the coding of articles reveals few replication studies with negative results, which are needed to weed out false positives.

Given the common format of a multiple-study article and low power, we would expect non-significant results regularly (Schimmack, 2012). Honest reporting of these results is crucial for the credibility of an empirical science (Sterling, 1959; Soric, 1989). It is time for cognitive psychology to increase power and to report its findings honestly.
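The arithmetic behind this expectation can be sketched in a few lines. The sketch assumes that the studies in an article are independent and share the same power, and it borrows the 57% replicability estimate from above as a stand-in for per-study power, which is generous because that estimate is conditional on a significant result. The numbers of studies per article are hypothetical.

```python
def prob_all_significant(power: float, n_studies: int) -> float:
    """Probability that every one of n independent studies is significant."""
    if not 0 <= power <= 1:
        raise ValueError("power must be in [0, 1]")
    if n_studies < 1:
        raise ValueError("n_studies must be at least 1")
    return power ** n_studies


def prob_some_nonsignificant(power: float, n_studies: int) -> float:
    """Probability that at least one of the n studies is non-significant."""
    return 1 - prob_all_significant(power, n_studies)


if __name__ == "__main__":
    power = 0.57  # assumed per-study power, taken from the z-curve estimate
    for k in (2, 3, 4, 5):  # hypothetical numbers of studies per article
        print(f"{k} studies: P(at least one non-significant) = "
              f"{prob_some_nonsignificant(power, k):.2f}")
```

Under these assumptions, a three-study article would contain at least one non-significant result about 81% of the time, so articles that report only significant results should be the exception rather than the rule.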