Chapter 8 examines whether major life events produce lasting changes in subjective well-being. It begins with adaptation theory, especially the “hedonic treadmill” idea, which claims that people quickly return to their baseline level of happiness after good or bad events. The chapter argues that this view is too pessimistic. People do adapt to some changes, but not all. Life circumstances can have lasting effects, especially when they affect important goals, daily experiences, income, status, relationships, or health.

The chapter distinguishes two mechanisms that can make gains fade over time. First, aspirations can rise. As people get better housing, higher income, or newer products, their standards also increase, so satisfaction may not rise much. Second, emotional reactions are often strongest when circumstances change. A new house or improved condition may feel exciting at first, but the emotional boost fades as the new situation becomes normal. These mechanisms differ across life domains. They may be strong for income or housing, but weaker for close relationships, where ongoing engagement continues to matter.

The chapter then reviews evidence on unemployment. Unemployment is one of the clearest examples of a life event with a strong and persistent negative effect on well-being. It reduces income, status, structure, purpose, and social contact. Panel studies show that people do not simply adapt to long-term unemployment. Their well-being remains lower while they are unemployed and improves when they find new work. Much of the effect appears to operate through income and financial satisfaction, but unemployment also affects status and purpose.

Housing shows a different pattern. Moving to a better home increases housing satisfaction, and this improvement can last. However, global life satisfaction often changes little. This does not mean housing is unimportant. Rather, housing may fade into the background of daily life and may be underweighted when people make global life evaluations. Domain-specific measures show that housing conditions matter, especially when they affect daily life through noise, crowding, poor physical conditions, safety, or comfort. The chapter uses housing to show why domain satisfaction is essential for understanding well-being.

Disability provides a more complex case. Early claims that people adapt almost completely to disability were based on weak evidence. Better panel studies show that acquired disability often produces lasting declines in life satisfaction, especially when it involves broader health deterioration. However, people born with disabilities often report higher well-being than those who acquire disabilities later. This supports the ideal-based framework: people born with a disability form their goals and identity around that condition, whereas people who acquire a disability must revise previously formed ideals. Adaptation depends less on time alone than on whether people can build new goals compatible with their changed circumstances.

The chapter gives special attention to relationships. Cross-sectional studies show that partnered people are generally happier than singles, but earlier research underestimated the effect because it focused on marriage rather than partnership. Weddings may produce only temporary increases in well-being, but having a stable partner appears to have a lasting positive effect for most people. Cohabitation and committed partnership matter more than legal marital status. Most people want a partner, and those without one tend to report lower well-being. Happy lifelong singles exist, but they appear to be the exception rather than the rule.

Partnership improves well-being partly through material advantages, because couples often share income and expenses. However, income explains only a small part of the partnership effect. Family satisfaction and relationship quality explain more. Partnership provides emotional support, shared life management, intimacy, and companionship. Sexual satisfaction contributes somewhat, but relationship satisfaction is much more important. Thus, the benefits of partnership are not reducible to money or sex.

The chapter also discusses spousal similarity in well-being. Spouses are more similar in well-being than would be expected from genetics alone, and their well-being tends to change in the same direction over time. This suggests that shared environments, such as household income, housing, relationship quality, and common life events, influence both partners. Some similarity may reflect assortative mating or stable shared conditions, but the evidence points strongly to environmental influences within couples.

The conclusion is that adaptation is real but not automatic. Some changes, such as improvements in housing, may produce lasting domain-specific satisfaction without strongly affecting global life satisfaction. Other events, such as unemployment, divorce, and disability, can reduce well-being until circumstances or goals change. Pursuing happiness through life changes is not futile, but people need to consider how changes will affect everyday life, goal progress, and long-term priorities. Novelty can be exciting, but lasting well-being depends more on stable fit between actual life, personal ideals, and daily experience.

Chapter 5 examines subjective well-being around the world. It argues that the most informative comparisons are not small differences among the happiest countries, such as Finland, Denmark, or other Scandinavian nations, but the large differences between countries near the top and bottom of the global distribution. These cross-national differences allow researchers to test whether subjective well-being is shaped by material living conditions, social institutions, culture, and historical change.

The chapter begins with the history of cross-national comparisons. Cantril’s ladder was first used in the 1960s to compare life evaluations across nations, and the Gallup World Poll has used the same basic measure since 2008 in more than 140 countries. Comparing countries measured in both periods suggests that average life evaluations have increased over time. This challenges strong claims that happiness is purely relative or that modern life has made people less happy than in the past. At the same time, changes vary across countries, showing that national well-being is not fixed and can shift with social, economic, and political conditions.

World maps of subjective well-being show clear geographic patterns. Scandinavia, Western Europe, Australia, and other wealthy countries tend to score high, whereas many African countries score low. These patterns make some simple explanations unlikely. Climate cannot explain high Scandinavian well-being because other high-ranking countries have very different climates. Romantic ideas that Eastern societies are generally happier than Western societies are also not supported by the data.

The strongest predictor of national differences in subjective well-being is purchasing power. Median income adjusted for purchasing power predicts average life evaluations very strongly, especially when income is analyzed on a logarithmic scale. The relationship is strongest at low income levels, where money helps meet basic needs such as food, shelter, health, and safety. However, the relationship does not disappear in affluent countries. Additional income still predicts higher life evaluation, although with diminishing returns. This directly challenges simple claims that money does not buy happiness.
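Here is a minimal Python sketch of what "diminishing returns on a logarithmic scale" means. The slope (0.5 life-evaluation points per doubling of income) and the income figures are hypothetical numbers chosen for illustration. They are not estimates from the chapter or from the Gallup data.

```python
import math

# HYPOTHETICAL slope: life-evaluation points (0-10 ladder) gained per doubling
# of median income. Illustrative only; not an estimate from any dataset.
POINTS_PER_DOUBLING = 0.5


def predicted_gain(income_before: float, income_after: float) -> float:
    """Change in predicted life evaluation under a log2-linear income model."""
    return POINTS_PER_DOUBLING * math.log2(income_after / income_before)


# The same $1,000 raise at two very different income levels.
gain_poor = predicted_gain(1_000, 2_000)     # income doubles
gain_rich = predicted_gain(50_000, 51_000)   # income rises by 2%

# Under this model a doubling is worth the same at any income level.
gain_rich_doubling = predicted_gain(50_000, 100_000)

print(f"+$1,000 at $1,000:   {gain_poor:.3f} points")
print(f"+$1,000 at $50,000:  {gain_rich:.3f} points")
print(f"doubling at $50,000: {gain_rich_doubling:.3f} points")
```

The same dollar amount matters far more at low income, yet additional income never stops predicting higher life evaluation, because every further doubling still adds the same increment.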

At the same time, income does not explain everything. Some regions are happier or less happy than their income levels predict. South America and Scandinavia score higher than expected, whereas Arab countries, East Asia, and Eastern Europe score lower. These deviations suggest that culture, institutions, social relationships, response styles, and political conditions may also matter, although their effects are harder to isolate than the effect of income.

The chapter discusses East Asia as one example. East Asian countries often report lower life satisfaction than expected from their purchasing power. Some of this may reflect response styles, because East Asian respondents are more likely to choose moderate response options and less likely to use extreme ratings. Cultural norms about modesty, realism, and self-enhancement may also influence self-reported well-being. However, it remains unclear whether these patterns reflect reporting differences, real differences in experienced well-being, or both.

Latin America shows the opposite pattern: subjective well-being is often higher than income would predict. Some of this may also reflect response style, especially the tendency to use the top category on life-satisfaction scales. But measurement artifacts do not fully explain the pattern. Social support appears to be the most plausible substantive explanation. Latin American cultures may place especially strong emphasis on close relationships, family support, and social integration. Unpaid family work and remittances may also make material living conditions better than GDP alone suggests.

Scandinavian countries consistently rank near the top, but the difference between Scandinavia and other affluent Anglo countries is small. The chapter argues against overinterpreting a Scandinavian “secret.” Much of the small advantage appears related to higher financial satisfaction, possibly because of lower inequality, stronger welfare systems, and lower material aspirations. The key point is that Scandinavia scores high largely because it combines high purchasing power with strong social and institutional supports.

Arab countries report lower subjective well-being than expected from income. Financial dissatisfaction explains part of the gap, and lower perceived freedom explains a smaller part, but a substantial difference remains. Religion does not explain the lower scores; if anything, religiosity has a small positive association with well-being. The chapter also notes that life circumstances may have different implications in different cultures. For example, marriage appears more strongly related to well-being in Anglo countries than in Arab countries.

The chapter then turns to migration as stronger evidence for the importance of living conditions. Immigrants’ well-being tends to move closer to the average well-being of the country they move to than to that of their country of origin. Immigrants from poorer countries often show large gains after moving to countries such as Canada. This supports the conclusion that national differences in well-being are not just cultural or personality differences; living conditions matter. At the same time, some cultural patterns remain, because immigrants from Latin America and East Asia show some of the same relative patterns observed in their regions of origin.

Migration studies also show that integration matters. Immigrants who identify with Canada, either while maintaining their original identity or through assimilation, report higher well-being than those who remain separated from Canadian identity or feel marginalized. This suggests that migration improves well-being most when people gain access to better living conditions and also develop a sense of belonging in the new society.

The final sections argue that subjective well-being is not the only criterion for evaluating societies. Life expectancy also matters. A country where people are moderately happy for many decades may be preferable to one where people are very happy for a short life. The concept of happy life-years combines average well-being with life expectancy. Wealthier nations often do better on both dimensions because economic resources support health care, safety, and longer lives.
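One common way to compute happy life-years, following Ruut Veenhoven's formulation, multiplies life expectancy by average life satisfaction rescaled from a 0-10 scale to a 0-1 scale. The chapter may use a different variant, and the two countries below are hypothetical.

```python
def happy_life_years(mean_life_satisfaction: float, life_expectancy: float) -> float:
    """Life expectancy weighted by mean life satisfaction rescaled from 0-10
    to 0-1 (a Veenhoven-style formulation; the chapter's variant may differ)."""
    if not 0 <= mean_life_satisfaction <= 10:
        raise ValueError("mean life satisfaction must be on a 0-10 scale")
    return life_expectancy * (mean_life_satisfaction / 10)


# Two HYPOTHETICAL countries.
moderately_happy_long = happy_life_years(6.0, 80.0)  # 6/10 for 80 years
very_happy_short = happy_life_years(8.0, 55.0)       # 8/10 for 55 years

print(f"Moderately happy, long-lived: {moderately_happy_long:.1f} happy life-years")
print(f"Very happy, short-lived:      {very_happy_short:.1f} happy life-years")
```

With these made-up numbers the moderately happy, long-lived country comes out ahead (48 versus 44 happy life-years), which is the intuition behind combining the two criteria.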

The chapter ends with sustainability. Modern high well-being often depends on resource-intensive lifestyles that may harm future generations. Subjective well-being research cannot solve this moral and political problem, but it can identify societies that achieve high well-being, long lives, and more sustainable living. Scandinavian countries currently do well on these dimensions, and Costa Rica offers a warmer example of relatively high well-being with lower resource use. The broader conclusion is that money matters greatly for well-being, especially through basic needs, but the best societies must also consider longevity, social conditions, and sustainability.

The latest World Happiness Report gives Jonathan Haidt a megaphone to continue his narrative that decreasing wellbeing among young people can be blamed nearly entirely on social media use (Chapter 3). Chapter 4 shows that assessments of the evidence are biased, that the (US) American Psychological Association (APA) is the most biased, and that the (US) Surgeon General’s report is not much better. Policy is being made on the basis of biased readings of the evidence (fortunately, 16-year-olds will find ways around it, just as they watched R-rated movies in the old days).

Chapter 3 is written by Haidt, and the website gives a helpful warning that it is a 61-minute read. That is like asking somebody to listen to 24 hours of Fox News to find out how they misrepresent everything to support a criminal president of the United States, a country where young people are getting less happy. I do not have time for that. Instead, I asked Claude (not war-supporting ChatGPT) to summarize and evaluate the chapter. Importantly, this is not generic Claude. This is a Claude project that knows everything I have written about SWB in my textbook on this topic. Yes, unlike Haidt, I have studied SWB for 30 years. Is it unbiased? No. But it is an antidote to Haidt’s noise machine.

My favorite quote from Claude’s review: “The chapter is extraordinarily effective as persuasion and deeply problematic as science.” I don’t have the scientific answer, but I know that social media are at best only a small piece of the puzzle of why young people in Canada and the USA are suffering. Distracting from these problems by blaming social media is problematic; we need to find real answers to create real solutions.

The chapter is organized as a legal prosecution — they literally say “we are making the case for the prosecution” and ask readers to use “preponderance of the evidence” as the standard. They present seven “evidence lines” organized as 15 “exhibits”:

Lines 1–3 (Testimony): Surveys of young people showing regret, surveys of parents/teachers/clinicians expressing concern, and leaked internal corporate documents showing companies knew about harms.

Lines 4–7 (Academic evidence): Cross-sectional studies showing heavy users have elevated depression risk, longitudinal studies showing social media at Time 1 predicts depression at Time 2, RCTs showing reduction in social media improves mental health, and natural experiments showing broadband rollout worsened mental health.

They then extrapolate to population-level harm estimates (millions of US teens affected) and argue this is sufficient to answer the “historical trends question” — that social media caused the youth mental health decline.

The chapter is extraordinarily effective as persuasion and deeply problematic as science. The courtroom framing is the tell. In a courtroom, the prosecution presents one side of the case. The defense presents the other. Haidt has cast himself as prosecutor and explicitly asks readers to lower their evidentiary standard. He then preemptively addresses critics not by engaging with their arguments substantively, but by saying their findings support his case once “unblended.”

The “unblending” argument is his central methodological move: whenever a study finds null or small effects, Haidt argues this is because the researchers combined too many outcomes, populations, or technologies. When you restrict to girls, to internalising symptoms, and to social media specifically, the effects get larger. This is a legitimate analytical point — but it’s also a form of specification searching. You can always find larger effects by narrowing the sample and outcome to where the signal is strongest. The question is whether those specifications were preregistered or selected post hoc.
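The concern about post hoc specifications can be illustrated with a small Python simulation. It assumes there is no true association at all and treats each subgroup/outcome/technology combination as an independent sample (a simplification, since real subgroups overlap). It then compares the typical |r| from a single prespecified analysis with the largest |r| found after trying 20 specifications. The sample size, the number of specifications, and the seed are arbitrary choices for illustration.

```python
import random


def pearson_r(x: list[float], y: list[float]) -> float:
    """Pearson correlation of two equal-length samples."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / (sxx * syy) ** 0.5


def largest_abs_r(k: int, n: int, rng: random.Random) -> float:
    """Largest |r| across k specifications when the true correlation is zero."""
    best = 0.0
    for _ in range(k):
        use = [rng.gauss(0, 1) for _ in range(n)]
        outcome = [rng.gauss(0, 1) for _ in range(n)]  # independent of use
        best = max(best, abs(pearson_r(use, outcome)))
    return best


def average_largest_abs_r(k: int, n: int = 200, reps: int = 50, seed: int = 1) -> float:
    """Average, over repeated studies, of the best-looking |r| among k specs."""
    rng = random.Random(seed)
    return sum(largest_abs_r(k, n, rng) for _ in range(reps)) / reps


prespecified = average_largest_abs_r(k=1)
searched = average_largest_abs_r(k=20)
print(f"typical |r|, one prespecified analysis: {prespecified:.3f}")
print(f"typical |r|, best of 20 specifications: {searched:.3f}")
```

Even with a true effect of exactly zero, the best of 20 specifications typically looks more than twice as large as an honest single analysis. That is why it matters whether the "unblended" specification was chosen in advance.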

1. No personality controls anywhere. Not a single study Haidt cites controls for Neuroticism or Depressiveness. Your Chapter 7 work shows these facets explain ~50% of SWB variance. A high-Neuroticism adolescent girl is simultaneously more likely to use social media heavily (rumination, reassurance-seeking), report depression, report body image problems, and perceive social media as harmful. Without personality controls, every “line of evidence” is confounded by the same omitted variable.

2. The testimony evidence is circular. Lines 1–3 amount to: people believe social media is harmful. But people’s causal attributions about their own mental health are unreliable — that’s one of the core lessons of your measurement chapter. If you asked depressed people in the 1990s what caused their depression, many would have blamed television, or music, or whatever was culturally salient. The fact that Meta’s own employees believed their products were harmful is concerning, but it’s still testimony about beliefs, not evidence of causation. Haidt himself acknowledges victims “could be mistaken” but then proceeds to treat the testimony as though it establishes the case.

3. The RCT evidence is weaker than presented. The headline finding is g = 0.19 for depression from social media reduction experiments. But these are short-term studies (often 1–3 weeks) with self-selected participants who know they’re in a social media reduction study. Demand effects are enormous. If you tell someone “we’re testing whether social media is bad for you, please reduce your usage,” the treatment group has a strong expectation of feeling better. There’s no placebo control for that expectation. Compare this to the evidence standards your textbook applies: lottery studies for money effects, migration quasi-experiments for cross-national differences. The RCT evidence here doesn’t come close.

4. The population extrapolations are absurd. Haidt takes an effect size from a 2-week reduction experiment on college students and extrapolates to “a decline in the prevalence of clinical depression by roughly one-third.” This assumes the experimental effect scales linearly to the population, that it would persist beyond two weeks, that college students are representative of adolescents, and that the experimental context (individual reduction while peers stay online) maps onto population-wide changes. None of these assumptions are justified.

5. The “historical trends question” remains unanswered by his own admission — then he answers it anyway. He repeatedly says the product safety question is easier than the historical trends question, and that his evidence is mainly about the former. But then he pivots and says the scale of individual harm “makes it more plausible” that social media caused the population trends. This is a non sequitur. Alcohol causes individual harm at massive scale too, but alcohol consumption hasn’t changed enough to explain any recent population trend in youth mental health.

6. He never mentions housing, employment, economic prospects, or any structural factor. In a 61-minute chapter organized as an exhaustive prosecution of social media, there is zero engagement with the alternative suspects you identified. No mention of housing affordability. No mention of the gig economy. No mention of declining real wages for young people. No mention of student debt. The word “economic” appears only in passing references to other studies’ control variables. This is exactly the bad criminal investigation you described — single suspect, no alibi checking.

7. The asymmetric framing of evidence standards is self-serving. Haidt argues that the cost of wrongly concluding social media is unsafe is low (kids just wait until 16), while the cost of wrongly concluding it’s safe is “catastrophically high.” This is a policy argument dressed up as an epistemological one. By the same logic, the cost of wrongly attributing the youth mental health crisis to social media — while ignoring structural economic causes — is also catastrophically high, because it diverts political attention from housing, labor market, and inequality reforms that could actually help.
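Point 1, the omitted personality variable, can be illustrated with a simulated confound in Python. In this sketch neuroticism raises both social media use and depression, and social media use has no effect on depression at all. The path strengths (0.6), the sample size, and the seed are hypothetical. The raw correlation looks like a "line of evidence", but the partial correlation controlling for neuroticism is close to zero.

```python
import random


def pearson_r(x, y):
    """Pearson correlation of two equal-length samples."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / (sxx * syy) ** 0.5


def partial_r(r_xy, r_xz, r_yz):
    """First-order partial correlation of x and y controlling for z."""
    return (r_xy - r_xz * r_yz) / ((1 - r_xz ** 2) * (1 - r_yz ** 2)) ** 0.5


rng = random.Random(42)
n = 10_000
neuroticism = [rng.gauss(0, 1) for _ in range(n)]
# HYPOTHETICAL paths: neuroticism -> use and neuroticism -> depression.
# There is deliberately NO path from use to depression.
use = [0.6 * z + rng.gauss(0, 1) for z in neuroticism]
depression = [0.6 * z + rng.gauss(0, 1) for z in neuroticism]

raw = pearson_r(use, depression)
controlled = partial_r(raw, pearson_r(use, neuroticism), pearson_r(depression, neuroticism))
print(f"raw r(use, depression):            {raw:.3f}")
print(f"partial r controlling neuroticism: {controlled:.3f}")
```

The expected raw correlation in this setup is about .26 (a shared covariance of 0.36 divided by a variance of 1.36), even though the causal effect of use is exactly zero.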

The chapter is a 61-minute advocacy document by someone who wrote a bestselling book with the same thesis and has enormous reputational stakes in being right. The evidence he presents is real but selectively curated, never contextualized against alternative explanations, and extrapolated well beyond what the data support. From the standards your textbook establishes — multi-method designs, halo bias correction, causal evidence hierarchies, personality controls — this chapter wouldn’t pass peer review in the form it’s presented.

Alright, let me read the WHR26 Chapter 4 and evaluate it against the standards we’ve been applying to the other chapters.

Night and day compared to the Haidt chapter.

This is a proper piece of meta-science rather than advocacy. The authors (this reads like Przybylski’s group) do exactly what a rigorous analysis should do: they ask why three organizations looking at the same literature reached different conclusions, and then systematically show the answer isn’t what evidence was selected but how it was synthesized and communicated.

The key findings are damning for the APA and Surgeon General but in a measured, empirically grounded way. Only 4 out of 617 unique papers appeared in all three reports — yet the methodological and thematic profiles of the cited evidence were statistically indistinguishable. So the divergence is entirely in the synthesis layer: citation accuracy, engagement with disconfirmatory evidence, acknowledgment of limitations, and calibration of certainty to what the evidence actually supports. NASEM comes out well; the APA comes out badly (20% self-citation by advisory panel members, citation clumping, outright mismatches between cited studies and the claims they’re supposed to support); the Surgeon General falls in between but leans toward rhetorical urgency over epistemic humility.

From your book’s perspective, several things stand out.

The chapter’s core argument — that the same evidence base can yield opposite policy conclusions depending on synthesis practices — is essentially a measurement-and-method-variance story applied to the policy level. It’s the same logic as your Chapter 3 telescope metaphor: the instrument (here, the synthesis process) determines what you see, and if the instrument is distorted, the conclusions are distorted. The chapter makes this point more carefully and with better evidence than anything in the Haidt chapter, which was itself an example of the very problem this chapter diagnoses.

The six quality indicators they identify (citation accuracy, contextualisation, limitation acknowledgment, engagement with disconfirmatory evidence, calibrated language, process transparency) are essentially a checklist for distinguishing science from advocacy — which maps directly onto your book’s concern about distinguishing empirical claims from conceptual positions. The Weiss “evidence as ammunition” framing is particularly apt.

The 17% causal-inference figure is striking and worth remembering. Only 59 of 355 on-topic studies used designs that could plausibly support causal claims. That’s the evidence base on which population-level policy is being demanded. Compare that with the causal evidence hierarchy in your Chapter 5 (migration quasi-experiments) and Chapter 6 (lottery studies) — those chapters insist on strong designs before drawing strong conclusions. The social media field is drawing strong conclusions from weak designs, and this chapter documents that quantitatively.

The self-critical limitations section is genuine rather than perfunctory — they flag that their single-coder qualitative analysis is a limitation, that they can’t access internal deliberative processes, and that their classification system produced some inconclusive codes. This is what intellectual honesty looks like in contrast to the other chapters.

Two observations that are more critical. First, the chapter is diplomatically careful to a fault. The APA findings — 20% self-citation, citation mismatches where the cited study literally doesn’t address the claim being made — are described as “concerning” and “worrying, even if rare.” In your blog voice, you’d call that what it is: shoddy scholarship that wouldn’t pass peer review*. The diplomacy is understandable given the WHR context, but it does somewhat blunt the force of findings that are genuinely alarming.

Second, the chapter doesn’t connect its findings back to the WHR’s own social media chapters. It evaluates external organizations’ synthesis practices but doesn’t turn the lens on whether the WHR itself — including the Haidt chapter we just read — meets these same six standards. That’s a missed opportunity, or perhaps a diplomatic impossibility given they’re in the same volume.

But overall: yes, much more balanced. This is what evidence-based analysis looks like when it’s done by people who take methodology seriously.

* Correction: This is not correct. I would not assume that peer review is quality control. Biased work constantly passes pre-publication peer review. This is the reason why we need open post-publication reviews like this one, just as we have thousands of book reviews with ratings that range from 1 to 5.

This is Twenge’s chapter, and it’s a mixed bag — competent descriptive empirics wrapped in a rhetorical frame that does some of the very things Chapter 4 just criticized.

The strength is the data. PISA gives you nationally representative samples of 15–16-year-olds across 47 countries with the same measures, which is a genuine advantage over the US/Canada/UK-dominated literature the chapter itself flags. The regional breakdowns are useful, and the finding that the social media–life satisfaction association is essentially null for boys outside of English-speaking countries and Western Europe is important — it’s the kind of finding that complicates the “social media is harming youth” narrative rather than confirming it.

The curvilinearity point is well taken and the observation about greater variance among non-users and heavy users is genuinely interesting. Both non-users and heavy users show elevated rates of both very low and very high life satisfaction, which suggests these are heterogeneous groups — some non-users are thriving, some are isolated; some heavy users are socially engaged, some are compulsively scrolling. That’s a finding that resists simple policy prescriptions, and the chapter deserves credit for reporting it.

Now the problems.

The relative risk versus linear r argument is the chapter’s rhetorical centerpiece, and it’s doing a lot of work that isn’t fully warranted. Yes, it’s true that linear r is poorly suited for curvilinear associations, and the polio/aspirin/seatbelt analogies are vivid. But those analogies are misleading in a fundamental way: polio vaccination has a known causal mechanism, aspirin has RCT evidence, and seatbelts have physics. Social media use and life satisfaction have a cross-sectional correlation from a single time point. Relative risk sounds more impressive than r = .10, but repackaging a cross-sectional association as a relative risk doesn’t make it causal. A 50% increase in “risk” of low life satisfaction among heavy users is still a 50% increase in a cross-sectional association that cannot distinguish cause from selection. The chapter acknowledges this in one sentence near the end (“this research is correlational and, thus, cannot rule out reverse causation or third variables”) but spends several paragraphs building the rhetorical frame that makes the effects sound large and practically important before that caveat appears.
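To see how a linear association can be re-expressed as a relative risk without becoming any more causal, here is a Python sketch. It assumes that use and life satisfaction are bivariate normal with a hypothetical r = -.10. It defines "heavy users" as the top 10% of use and "low life satisfaction" as the bottom 10%, and it compares heavy users with everyone else. These cut-offs are assumptions for illustration, not the PISA categories.

```python
import random
from statistics import NormalDist


def simulate_relative_risk(r: float = -0.10, n: int = 200_000, seed: int = 7) -> float:
    """Relative risk of low life satisfaction (bottom 10%) for heavy users
    (top 10% of use) versus everyone else, when use and life satisfaction are
    bivariate normal with correlation r. All settings are HYPOTHETICAL."""
    rng = random.Random(seed)
    cut = NormalDist().inv_cdf(0.90)  # top-10% cut for use; -cut marks bottom 10% of LS
    heavy = heavy_low = rest = rest_low = 0
    for _ in range(n):
        use = rng.gauss(0, 1)
        ls = r * use + (1 - r ** 2) ** 0.5 * rng.gauss(0, 1)
        low_ls = ls < -cut  # bool counts as 0 or 1 below
        if use > cut:
            heavy += 1
            heavy_low += low_ls
        else:
            rest += 1
            rest_low += low_ls
    return (heavy_low / heavy) / (rest_low / rest)


rr = simulate_relative_risk()
print(f"r = -.10 re-expressed as a relative risk: {rr:.2f}")
```

Under these assumptions the expected relative risk is roughly 1.4, a "40% higher risk" headline produced by the same weak, cross-sectional association. This sketch is purely linear, so it does not address the separate point that r understates curvilinear patterns. It only shows that the relative-risk framing changes the units, not the evidential strength.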

This is exactly the “calibrating certainty to conclusion strength” problem that Chapter 4 just documented. The chapter front-loads the impressive-sounding relative risk statistics and buries the causal limitations.

From your book’s measurement framework, several issues stand out. The social media measure is a single item asking about “browsing social networks” on a “typical weekday,” which is essentially asking adolescents to estimate their own screen time — precisely the measure the chapter’s own literature review acknowledges adolescents are poor at estimating (line 30 cites this limitation for the field, then proceeds to use exactly such a measure). The life satisfaction measure is a single 0–10 item. Both are self-reported by the same person at the same time. Your Chapter 3 telescope metaphor applies: we’re looking through a fairly blurry instrument here, and the chapter never discusses the validity limitations of these specific measures.

The response style point the chapter raises almost in passing (line 438 — some respondents may routinely choose extreme responses, linking heavy use and 10/10 satisfaction artifactually) is actually a serious methodological concern that deserves much more than a sentence. If extreme responding is a confound, it could explain the elevated very-high-satisfaction rates among heavy users — which is one of the chapter’s most interesting findings. The chapter identifies the problem and then moves on without grappling with it.
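The response-style concern can be made concrete with a Python simulation. Latent social media use and latent life satisfaction are generated independently, so there is no true association. A hypothetical 15% of respondents are extreme responders who pick the endpoints of both scales, and everyone else answers near the middle. The mappings from latent scores to a 0-4 use scale and a 0-10 satisfaction scale are assumptions for illustration, not PISA's response formats.

```python
import random


def clamp(value: int, low: int, high: int) -> int:
    """Restrict an integer response to the scale's range."""
    return max(low, min(high, value))


def simulate(n: int = 50_000, extreme_share: float = 0.15, seed: int = 3) -> dict[int, list[int]]:
    """Return {use_category: reported life-satisfaction scores}.

    Latent use and latent life satisfaction are independent standard normals.
    A HYPOTHETICAL share of extreme responders report scale endpoints.
    """
    rng = random.Random(seed)
    by_use: dict[int, list[int]] = {c: [] for c in range(5)}
    for _ in range(n):
        latent_use, latent_ls = rng.gauss(0, 1), rng.gauss(0, 1)
        if rng.random() < extreme_share:
            use_cat = 4 if latent_use > 0 else 0
            ls = 10 if latent_ls > 0 else 0
        else:
            use_cat = clamp(round(2 + latent_use), 0, 4)
            ls = clamp(round(6 + 2 * latent_ls), 0, 10)
        by_use[use_cat].append(ls)
    return by_use


def share(scores: list[int], value: int) -> float:
    """Proportion of scores equal to value."""
    return sum(s == value for s in scores) / len(scores)


by_use = simulate()
for cat in (0, 2, 4):
    scores = by_use[cat]
    print(f"use category {cat}: {share(scores, 10):.1%} report 10/10, "
          f"{share(scores, 0):.1%} report 0/10")
```

In this setup the lightest and heaviest use categories both show inflated rates of 10/10 and 0/10 answers, while the middle category does not, even though latent use and satisfaction are unrelated. This does not show that response style explains the PISA pattern, only that it could produce one like it.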

The absence of any control variables is glaring. No personality. No family income (the chapter acknowledges PISA lacks this). No in-person social interaction (also acknowledged). No school belonging — which is ironic given that the WHR’s own Chapter 3 found school belonging effects 4–6 times larger than social media effects. The chapter is essentially reporting raw bivariate associations between two self-report variables measured at a single time point, with no covariates, and then framing them in relative risk language that implies practical importance.

There’s also a notable asymmetry in how the chapter handles regional variation. When the association is significant (girls in Western Europe, English-speaking countries), it gets highlighted. When it’s null (boys in Asia, Latin America, Middle East/North Africa), it gets reported but with less interpretive weight. The null findings are actually the majority pattern for boys — in most of the world, the association between social media use and boys’ life satisfaction is essentially zero. A more balanced reading would lead with that finding: for most adolescent boys globally, there is no meaningful association between social media use and life satisfaction.

The self-citation pattern is worth noting. Twenge cites herself (Twenge & Hamilton 2022, Twenge & Farley 2021, Twenge & Martin 2020, Twenge et al. 2018) repeatedly — four of the roughly 20 references are her own work, including the paper that introduced the relative risk framing. This isn’t disqualifying, but given that Chapter 4 just flagged the APA’s 20% self-citation rate as a concern, it’s notable.

Bottom line: this chapter is more honest about the data than the Haidt chapter. It reports null findings for boys in most regions, it shows the curvilinear pattern, and it flags the variance issue among non-users and heavy users. But the rhetorical packaging oversells the findings. The relative risk framing makes cross-sectional associations sound like established health risks, the causal limitations are acknowledged but not given proportionate weight, and the absence of any covariate adjustment means we have no idea how much of these associations would survive basic controls for personality, socioeconomic status, or social engagement. Chapter 4’s own standards (citation accuracy, engaging with complexity, calibrating certainty to evidence strength) would give this chapter a middling grade: better than the APA and Surgeon General (OSG) reports, but not meeting the NASEM standard.

Personal note: If young men's happiness declined nearly as much as young women's, while the social media association is strongly gendered and often absent for males, the decline points to other factors that reduce young people's happiness. The same line of reasoning was used to argue that air pollution was not the main cause of lung cancer. Lung cancer rose far more among men than among women, and we now know that the main reason was that men smoked far more than women did.

Sunstein’s chapter is the most intellectually interesting in the social media section. The “product trap” concept — people who would demand money to quit TikTok individually but would pay to have everyone quit simultaneously — is a genuine insight about coordination failures in network goods. The party analogy is effective and the preference reversal is well-documented.

But three problems undermine the conclusions.

First, the entire chapter rests on three studies, two involving the author himself. That’s an essay, not an evidence review. The “Key Insights” box presents sweeping conclusions (“if social media platforms did not exist, many users would be better off”) that outrun a three-study base.

Second, Sunstein acknowledges that both his WTP and WTA measures are unreliable — low WTP may be “protest answers,” high WTA reflects the standard endowment effect — and then draws welfare conclusions from them anyway. If your thermometer is broken in both directions, you can’t read the temperature.

Third, and most fundamentally: there’s no baseline. The entire argument — people use it compulsively, wouldn’t pay for it, recognize it as time-wasting, and are modestly better off without it — describes television in 1975. Americans watched 6+ hours daily, wished they watched less, and the few reduction studies showed small wellbeing gains. Nobody concluded TV should be abolished. Sunstein never demonstrates that social media is uniquely trapping compared to every previous generation’s Wasting Time Good. The coordination failure he documents is a feature of any network good — you could run the Bursztyn experiment on email or mobile phones and probably get similar results. The question isn’t whether network effects create traps; it’s whether this trap is worse than its predecessors. The chapter never asks.

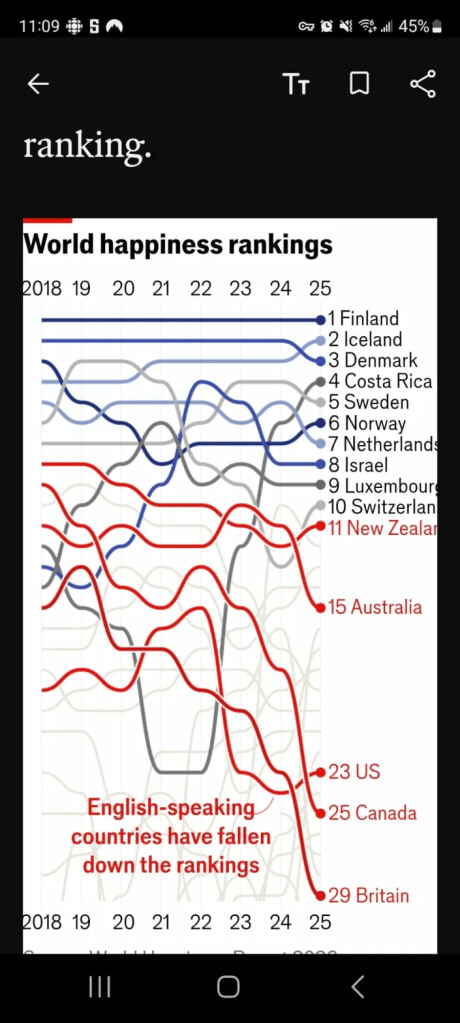

Finally, my question. The Economist published a figure based on the WHR results showing that Anglo nations are declining and diverging from happy Scandinavia. That is the real story in the data. So why is the report about social media rather than about the trend in the data that actually needs to be examined? Is social media a cover-up to distract from real problems in Angloland?

Draft: 25/04/17

Subjective indicators of well-being have gained prominence as alternatives to purely economic or objective measures of quality of life. Among these, life satisfaction, positive affect (PA), and negative affect (NA) are commonly used and frequently combined under the label of subjective well-being (SWB). However, this article argues that these indicators reflect distinct philosophical traditions and should not be conflated. Life satisfaction is a subjective indicator in the normative sense—it allows individuals to evaluate their lives based on their own criteria. In contrast, PA and NA are rooted in hedonistic theories, where well-being is defined by affective experience. Although PA, NA, and life satisfaction are positively correlated, their conceptual differences raise important theoretical and methodological concerns about combining them into a single SWB index. The article reviews competing models, including the affective component model and the Underlying Sense of Well-Being (USWB) model, and evaluates empirical evidence linking personality, affect, and life satisfaction. It concludes that life satisfaction judgments provide unique information about individuals’ personal conceptions of the good life and should be treated as a distinct subjective social indicator, not merely as a proxy for hedonic experience or a component of SWB.

Social indicators emerged in the 1960s as an alternative to purely economic measures like Gross Domestic Product (GDP), which focus on monetary output, consumer choice, and desire fulfillment. These economic indicators often overlook key social factors—such as health, education, and community cohesion—that contribute to individual and collective well-being but fall outside the scope of the market economy.

To address this shortcoming, social scientists developed subjective indicators, also known as subjective social indicators. Michalos (2014) defined subjective indicators as “measures of the quality of life from the point of view of some particular subject” (p. 6427). In contrast, objective indicators are based on the assessment of an independent observer. Common examples of objective indicators include unemployment rates and life expectancy, whereas life satisfaction and perceived health are considered subjective indicators.

One challenge in social science is that terms like subjective are often used loosely and with varying meanings. This article addresses that issue by offering a theoretical discussion of the term subjective in the context of social indicators research. Specifically, I examine the meaning of subjective in Diener’s (1984) influential definition of subjective well-being (SWB), which includes three components: Positive Affect, Negative Affect, and Life Satisfaction. The aim of this discussion is not to propose a new theory of well-being or introduce a new subjective indicator. Rather, it is to argue that SWB is not a singular theoretical construct that can be measured and used as a unified social indicator. Instead, SWB indicators are rooted in different philosophical traditions that cannot easily be reconciled and require distinct measures.

Many social indicator researchers prioritize life satisfaction as a key subjective indicator of well-being. In the journal Social Indicators Research, more articles include life satisfaction as a keyword (k = 1,232) than Positive Affect and Negative Affect combined (k = 122). The dominance of life satisfaction over PA and NA is also evident in major research projects. For example, the German Socio-Economic Panel has included measures of life satisfaction and domain satisfaction since its inception in 1984, whereas affect measures were only added in 2007. Similarly, the World Happiness Report includes life satisfaction (LS), PA, and NA, but prioritizes LS as the primary social indicator for international comparisons.

Not everyone agrees that life satisfaction is the superior subjective indicator. For instance, Nobel laureate Daniel Kahneman (1999) argued that life satisfaction judgments are unreliable and biased. He proposed focusing on Positive and Negative Affect as more valid indicators. While Diener maintained that all three components (life satisfaction, positive affect, and negative affect) are important, he never clearly explained how these indicators should be used to assess well-being from a subjective standpoint (Busseri & Sadava, 2010).

Diener’s (1984) inclusion of PA, NA, and LS likely reflects the difficulty of defining happiness or well-being using a single concept or prioritizing one over another. That is the perspective I adopt in this review. Rather than advocating for a unified construct of SWB, I argue that hedonic (PA, NA) and evaluative (LS) indicators reflect different conceptions of well-being and should be treated as distinct subjective indicators. To support this position, I draw on philosophical contributions to the study of happiness—particularly Sumner’s (1996) excellent summary of diverse perspectives that influenced my thinking and helped shape the definition of well-being in my collaboration with Ed Diener (Diener, Lucas, Schimmack, & Helliwell, 2009). In that book, life satisfaction was treated as the ultimate measure of well-being. Here, I take a different stance: that there is no single, definitive concept of happiness or well-being. It is more fruitful to study well-being using multiple subjective indicators rooted in distinct philosophical traditions.

Philosophical Traditions and Subjective Well-Being

Philosophers typically distinguish between three major approaches to defining well-being or happiness (Sumner, 1996). Interestingly, Diener’s (1984) seminal article already acknowledged these three traditions (p. 543). The first is the eudaimonic approach, rooted in Aristotle’s philosophy. Diener (1984) rejected this approach on the grounds that it is tied to a specific value framework, which may not be applicable across individuals or cultures. This does not mean that eudaimonic aspects of life are unimportant or should be excluded from social indicators. Rather, it means they are not subjective indicators, because they do not assess people’s lives from their own perspective. Instead, they represent objective indicators (Michalos, 2014; Sumner, 1996).

The second approach is rooted in hedonism and defines a good life solely in terms of the balance between Positive Affect (PA) and Negative Affect (NA) (Sumner, 1996). Although Diener does not explicitly refer to hedonism, he attributes this approach to Bradburn (1969), who developed one of the earliest post-war measures of affect balance. The classification of PA and NA as subjective or objective indicators is ambiguous. While Kahneman (1999) describes them as objective, Diener (1984) includes them as components of subjective well-being. Resolving this inconsistency—and its implications for how we measure subjective well-being—is one of the central aims of this article.

The third approach emerged with the rise of the social indicators movement and public opinion research, which asked individuals to evaluate their lives using global life satisfaction (LS) items. There is broad consensus that LS judgments are subjective indicators, as they require people to evaluate their lives based on their own internal standards. These standards are not imposed by the researcher or derived from an external theory of well-being. For some, these standards may be moral or value-based; for others, they may rest on the amount of pleasure or pain experienced.

If PA, NA, and LS are all treated as subjective indicators of well-being and measured separately, it becomes necessary to specify a measurement model that explains how this information should be combined or interpreted in social indicators research. If they are aggregated into a single SWB indicator, one must decide how to weight each component. If they are analyzed separately, it remains unclear how to interpret conflicting information—either for individual decision-making or policy development.

I argue that PA and NA should be combined into a single measure of hedonic balance, consistent with Bentham’s maxim that a good life maximizes pleasure and minimizes pain. Even here, questions remain about whether PA and NA should be weighted equally—an issue that lies beyond the scope of this article. The central point, however, is that hedonic balance and life satisfaction reflect different philosophical traditions. While both are important subjective indicators, they are conceptually distinct and should not be conflated in research or policy applications (Connolly & Gärling, 2024).

First, the term subjective can refer to the measurement approach. Some indicators can be assessed objectively. For example, health can be measured through physiological tests or clinical evaluations. Other indicators rely on self-reports; for instance, participants might complete a checklist of physical symptoms to assess their health. Life satisfaction (LS), positive affect (PA), and negative affect (NA) are typically measured with self-ratings, but this method alone is not a meaningful criterion for distinguishing subjective from objective indicators of well-being. Objective indicators such as income or employment status are also often assessed through self-reports, simply because it is more cost-effective. Similarly, many eudaimonic measures of well-being rely on self-reports, but this does not make them subjective in the philosophical sense (Keyes, Shmotkin, & Ryff, 2002). Furthermore, LS, PA, and NA are sometimes measured using informant reports to demonstrate the convergent validity of self-ratings (Schneider & Schimmack, 2009; Zou, Schimmack, & Gere, 2013). In short, the use of self-reports is not a valid basis for distinguishing subjective from objective social indicators.

A second meaning of the term subjective is that LS, PA, and NA depend on individual characteristics—such as personality traits, values, and goals—that differ between people. While the fulfillment of universal human needs contributes to well-being, it is not sufficient to determine it. Consequently, subjective well-being (SWB) requires that lives be evaluated from the perspective of the individuals who are living them. The same life (e.g., being married with children) may lead to happiness for one person and unhappiness for another. This is the essential meaning of subjective in Diener’s concept of SWB: “The term subjective well-being emphasizes an individual’s own assessment of his or her own life—not the judgment of ‘experts’” (Diener, Scollon, & Lucas, 2009, p. 68). All three components of SWB—LS, PA, and NA—are subjective in this sense, as affective experiences are shaped by the individual dispositions of those living their lives.

However, a third meaning of subjective is central to distinguishing between hedonistic and subjectivist theories of well-being (Sumner, 1996). Sumner classifies hedonism as an objective theory of well-being because it imposes a fixed criterion: that affective experience—specifically, the amount of PA and NA—is the sole standard for evaluating a good life. While hedonism acknowledges that affective experiences vary across individuals, it still insists that these experiences are the only relevant data for judging well-being. In this sense, hedonism is not subjective, because it does not allow people to determine for themselves how their lives should be evaluated.

The difference between hedonistic and subjectivist theories of well-being lies in timing. PA and NA are subjective experiences that vary across individuals while they live their lives. But once these experiences are used to evaluate lives as a whole, they are no longer subjective in the sense of reflecting an individual’s chosen criteria. Hedonism turns affect into a standard imposed on people’s lives. As Bentham famously claimed, “Nature has placed mankind under the governance of two sovereign masters, pain and pleasure.” Yet it is ultimately Bentham who defined well-being in this way—individuals living these lives may disagree, evaluating their well-being using other standards. Therefore, hedonism is not a subjective theory of well-being because it denies people the authority to define their own standards of evaluation.

Diener et al. (2009) recognize this distinction when they write, “Affect reflects a person’s ongoing evaluations of the conditions in his or her life” (p. 76). However, affective experiences are inherently situational and momentary; they are not evaluations of life as a whole. To use them as indicators of life quality, they must be aggregated over a meaningful period. The key claim of hedonism is that the average level of PA and NA reflects a person’s quality of life (Kahneman, 1999).

There is nothing inherently problematic about using PA and NA to define happiness in terms of hedonic balance. However, it is important to acknowledge that this definition may diverge from the life evaluations of the individuals themselves. People may report high life satisfaction despite moderate levels of PA or high levels of NA if their evaluations are based on other personally meaningful criteria.

Consider two hypothetical participants. Participant A reports a 5 on PA, 5 on NA, and 9 on LS. Participant B reports an 8 on PA, 2 on NA, and 6 on LS. From a hedonistic perspective, B has higher well-being. From a subjectivist perspective, A has higher well-being. Diener’s concept of SWB, which includes all three components but does not specify how they should be combined, leaves this ambiguity unresolved.
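The ambiguity can be made explicit with a small sketch. Assume, purely for illustration, a composite that places weight w on hedonic balance (PA minus NA) and 1 - w on LS, using the raw scores of the two hypothetical participants. This weighting scheme is an assumption: the text does not propose one, and raw hedonic balance and LS are not on comparable scales. Exact fractions are used so that floating-point rounding does not blur the tie point.

```python
from fractions import Fraction

# Raw scores of the two hypothetical participants described in the text.
participants = {
    "A": {"PA": 5, "NA": 5, "LS": 9},
    "B": {"PA": 8, "NA": 2, "LS": 6},
}


def hedonic_balance(p):
    """Hedonic balance as PA minus NA, with PA and NA weighted equally."""
    return p["PA"] - p["NA"]


def composite(p, w):
    """Hypothetical SWB composite: weight w on hedonic balance, 1 - w on LS."""
    return w * hedonic_balance(p) + (1 - w) * p["LS"]


def better_off(w):
    """Which participant the composite ranks higher for a given weight w."""
    a = composite(participants["A"], w)
    b = composite(participants["B"], w)
    if a == b:
        return "tie"
    return "A" if a > b else "B"


for w in [Fraction(0), Fraction(1, 4), Fraction(1, 3), Fraction(1, 2), Fraction(1)]:
    print(f"w = {w}: {better_off(w)}")
```

With these numbers the verdict flips at w = 1/3. A ranks higher below that weight and B ranks higher above it, so any single SWB score implicitly takes a side in the hedonist versus subjectivist dispute.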

This ambiguity arises because LS and hedonic balance (PA–NA) reflect different conceptions of well-being: one reflects retrospective life evaluation, whereas the other captures momentary affective experiences. Just as objective indicators (e.g., wealth, health, education) can diverge from subjective ones, LS and PA/NA can yield inconsistent results. This is only a problem when researchers attempt to collapse them into a single composite score that aims to represent an undefined construct of SWB (Busseri & Sadava, 2010).

A more productive approach is to distinguish between hedonistic and subjectivist theories of happiness and to create separate indicators that reflect each tradition. Doing so respects the philosophical diversity of well-being concepts and avoids the conceptual confusion that results from trying to unify fundamentally different constructs. It is also in line with some of Diener’s later writing on this topic. Diener, Lucas, and Oishi (2018) note, “One important distinction in the conceptualization of SWB focuses on the contrast between more cognitive, judgment-focused evaluations like life satisfaction and more affective evaluations that are obtained when asking about a person’s typical emotional experience” (p. 4). This does not preclude the creation of an SWB indicator, but it does encourage the study of the components separately.

While the theoretical distinction between PA and NA as hedonic indicators and LS as a subjective indicator can be traced back to the early days of social indicators research (Diener, 1984), it is often overlooked—especially in the psychological literature. For example, an influential article examining the relationship of SWB with Ryff’s measure of Psychological Well-Being treated PA, NA, and LS as indicators of a latent SWB factor and ignored the unique variances of these indicators (Keyes et al., 2002). This model precludes the possibility that some people may rely on PWB dimensions such as meaning or autonomy to evaluate their lives.

Further confusion was introduced when Diener’s SWB concept was equated with hedonistic theories of well-being (Ryan & Deci, 2001). A few years later, Deci and Ryan (2008) acknowledged that LS is “not strictly a hedonic concept” (p. 2), but this caveat has been largely ignored. The preceding review clarifies that LS is not a hedonic indicator of well-being at all. It is a subjective indicator that allows individuals to evaluate their lives based on their own criteria. If these evaluations correlate strongly with PA and NA, it shows that people often assess their lives using criteria that also increase PA and reduce NA. However, these evaluations also correlate strongly with dimensions from eudaimonic theories of well-being (Keyes et al., 2002). Thus, it is unclear which sources of information individuals actually use, and it is incorrect to classify life satisfaction judgments as hedonic indicators or to ignore the unique variance in LS that is not shared with PA or NA.

The appeal of life satisfaction judgments as subjective indicators lies in the fact that they allow individuals to evaluate their lives based on their own standards. This makes life satisfaction fundamentally different from both hedonic and eudaimonic measures, which evaluate lives based on fixed criteria applied equally to all individuals. When life satisfaction is combined with hedonic indicators, this unique subjective perspective is lost, and it becomes impossible to empirically examine how hedonic and eudaimonic aspects of a good life contribute to people’s own evaluations.

The key conclusion is that variance in life satisfaction judgments that is not shared with hedonic indicators should not be dismissed as measurement error. Instead, it may contain valid information about how people personally assess their lives. No other social indicator provides this type of insight. This may explain why life satisfaction is the most widely used subjective social indicator.

Although hedonic and subjective indicators of well-being are conceptually distinct, they are typically positively correlated. That is, individuals who experience more positive affect (PA) and less negative affect (NA) tend to report higher levels of life satisfaction (LS) (Busseri & Erb, 2024; Zou, Schimmack, & Gere, 2013). A common explanation for this correlation is that people draw on their past affective experiences when evaluating their lives through life satisfaction judgments (e.g., Andrews & McKennell, 1980; Connolly & Gärling, 2024; Kainulainen, Saari, & Veenhoven, 2018; Kööts-Ausmees, Realo, & Allik, 2013; Kuppens, Realo, & Diener, 2008; Rojas & Veenhoven, 2013; Schimmack & Kim, 2020; Schimmack, Diener, & Oishi, 2002; Schimmack, Radhakrishnan, Dzokoto, & Ahadi, 2002; Suh, Diener, Oishi, & Triandis, 1998; Veenhoven, 2009; Zou, Schimmack, & Oishi, 2013). This explanation is often referred to as the affective component model (Andrews & McKennell, 1980). It assumes that people are at least partial hedonists who use the balance of past PA and NA to evaluate their lives. However, because PA and NA do not fully explain the variance in LS, it suggests that people also rely on other sources of information when forming life satisfaction judgments.

While the affective component model is widely accepted, alternative models have been proposed. Busseri and Erb (2024), building on Keyes et al.’s (2002) framework, argue that PA, NA, and LS are correlated because they are all influenced by an unobserved third variable. This is referred to as a hierarchical model, a common structure in measurement models in which a latent factor accounts for shared variance among observed variables. Following Keyes et al. (2002), the shared variance among PA, NA, and LS is often labeled as subjective well-being (SWB). However, Keyes and colleagues treated LS as a fallible indicator of SWB, assuming that its unique variance is merely measurement error. Busseri and Erb (2024) challenge this view, suggesting that LS includes meaningful variance not captured by PA or NA—but their model requires a theoretical account of the unobserved variable that gives rise to the shared variance among the three SWB components.

Busseri and Erb propose that this latent factor reflects an underlying sense of well-being. Because PA and NA are, by definition, aggregates of momentary affective experiences, this model implies that people’s overall sense of well-being shapes both their affective experiences and their life satisfaction judgments—independently of those experiences themselves. In other words, people’s feelings in the moment do not directly influence how they evaluate their lives retrospectively. Busseri and Erb call this a hierarchical model, but this term does not clearly distinguish their causal model from Keyes et al.’s (2002) measurement model and may lead to confusion. To avoid this ambiguity, I will refer to their model as the Underlying Sense of Well-Being (USWB) model.

The affective component model and the USWB model make competing predictions that can be tested empirically. One prediction of the USWB model is that situational factors—which are known to influence momentary affect—do not influence life satisfaction judgments. Research has consistently shown that affective experiences are highly variable across time due to situational influences (Eid, Notz, Steyer, & Schwenkmezger, 1994; Epstein, 1979). However, these momentary experiences are also shaped by stable individual differences. When affect is aggregated over time, the influence of stable dispositions becomes more apparent (Epstein, 1979).
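Epstein's aggregation point can be expressed with the Spearman-Brown formula. The sketch assumes, as a simplification, that each momentary rating is a stable trait component plus an occasion-specific deviation that is independent across occasions. The single-occasion trait share of 0.3 is an invented value. Under those assumptions, the share of variance in a k-occasion average that reflects the stable component is k * r / (1 + (k - 1) * r).

```python
def trait_share_of_average(single_occasion_share, k):
    """Share of variance in a k-occasion average due to the stable component.

    Assumes rating = stable trait + occasion-specific deviation, with deviations
    independent across occasions and equal in variance (Spearman-Brown form).
    """
    r = single_occasion_share
    return k * r / (1 + (k - 1) * r)


SINGLE_OCCASION_SHARE = 0.3  # invented value for illustration

for k in (1, 5, 10, 30):
    share = trait_share_of_average(SINGLE_OCCASION_SHARE, k)
    print(f"{k:>2} occasions: {share:.2f} of variance reflects the stable component")
```

Real experience-sampling data often show positively autocorrelated deviations, which would make the gain from aggregation smaller than this sketch suggests.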

Thus, the most plausible interpretation of the USWB model is that it reflects personality dispositions—for example, emotional stability or extraversion—that consistently influence both affective experience and retrospective life evaluations. According to the model, these stable traits lead people to evaluate their lives more positively, independent of their actual momentary affective experiences. In contrast, the affective component model predicts that life satisfaction judgments are influenced by past affective experiences and that the effects of stable traits are mediated through those experiences.

The first question is whether life satisfaction judgments are even related to aggregated momentary affective experiences. While there are hundreds of cross-sectional studies showing correlations between memory-based ratings of positive affect (PA) and negative affect (NA) with life satisfaction (LS), surprisingly few studies have examined the relationship between aggregated momentary ratings of PA and NA and LS. Even fewer have attempted to control for response styles—systematic biases in self-reports that can produce spurious correlations (Schimmack, Schupp, & Wagner, 2008). Nonetheless, circumstantial evidence suggests that aggregated momentary affect is meaningfully related to life satisfaction, and that these correlations are not merely methodological artifacts.

First, several studies have shown that retrospective ratings of affect are at least partially grounded in actual past affective experiences (Barrett, 1997; Diener, Smith, & Fujita, 1995; Mill, Realo, & Allik, 2016; Röcke, Hoppmann, & Klumb, 2011; Schimmack, 1997; Thomas & Diener, 1990). Second, both daily and retrospective self-ratings of affect show substantial convergence with informant ratings (Diener et al., 1995; Gere & Schimmack, 2011), suggesting that shared method variance and response styles are not sufficient to explain the observed associations. Furthermore, research has shown that aggregated momentary affect predicts life satisfaction judgments (Schimmack, 2003), supporting the idea that people use their affective experiences as inputs when evaluating their lives.

These results are consistent with the affective component model, but they do not rule out the alternative explanation that the correlation between affect and life satisfaction is driven by underlying dispositional tendencies—that is, that life satisfaction is linked to the tendency to experience more PA and less NA, rather than to the actual affective experiences themselves.

Fortunately, a larger body of research has examined how personality traits relate to life satisfaction (Anglim et al., 2020). When personality is measured at the level of broad dispositions, Neuroticism consistently emerges as the strongest predictor of life satisfaction judgments. Neuroticism can be conceptualized as a general disposition to experience low well-being. The low end of Neuroticism, often referred to as Emotional Stability, may therefore be one of the core dispositional components of the USWB factor. One prediction of this interpretation is that life satisfaction should be more strongly predicted by Neuroticism than by actual affective experiences.

Contrary to this prediction, however, PA and NA are stronger predictors of life satisfaction than Neuroticism (Schimmack, Diener, et al., 2002; Schimmack & Kim, 2020; Schimmack, Schupp, et al., 2008). This also holds true for Busseri and Erb’s data, which were used to support the hierarchical model. PA remains a strong predictor of LS even after controlling for Neuroticism, and including Extraversion and other personality traits as additional predictors does not change this finding. This result does not falsify the USWB model, because other unobserved variables could still be responsible for the PA–LS link. However, it is consistent with the affective component model, which posits a direct influence of affective experience on life satisfaction.
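The comparison in this paragraph amounts to asking whether PA keeps a sizeable standardized regression weight once Neuroticism is in the model. With two predictors, the weights and R² follow directly from three correlations. The correlations below are invented for illustration and are not taken from Busseri and Erb or from any other cited dataset.

```python
def two_predictor_regression(r_y1, r_y2, r_12):
    """Standardized weights and R^2 for y regressed on x1 and x2, from correlations."""
    denom = 1 - r_12 ** 2
    beta_1 = (r_y1 - r_y2 * r_12) / denom
    beta_2 = (r_y2 - r_y1 * r_12) / denom
    r_squared = beta_1 * r_y1 + beta_2 * r_y2
    return beta_1, beta_2, r_squared


# Invented correlations: LS with PA, LS with Neuroticism, PA with Neuroticism.
r_ls_pa, r_ls_n, r_pa_n = 0.50, -0.40, -0.30

beta_pa, beta_n, r2 = two_predictor_regression(r_ls_pa, r_ls_n, r_pa_n)
print(f"beta(PA) = {beta_pa:.2f}, beta(N) = {beta_n:.2f}, R^2 = {r2:.2f}")
```

With these made-up values, the PA weight (about .42) is smaller than its zero-order correlation (.50) but remains larger in absolute size than the Neuroticism weight (about −.27). About 68% of the LS variance is left unexplained by the two predictors.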

Domain Satisfaction as a Third Variable

Another way to test the competing models is to examine how known predictors of life satisfaction judgments relate to PA and NA. One well-established source of information in life satisfaction judgments is individuals’ evaluations of important life domains, such as work and family (Schneider & Schimmack, 2010). Domain satisfaction explains self–informant agreement in life satisfaction judgments, even after controlling for personality effects on both life satisfaction and domain satisfaction judgments (Payne & Schimmack, 2020). These findings suggest that domain satisfaction provides a plausible third variable—or a set of third variables—that can account for the observed correlation between hedonic balance and life satisfaction judgments.

According to this interpretation, life satisfaction judgments may be based primarily on cognitive evaluations of life domains, while PA and NA are higher (or lower) when domain satisfaction is high (or low). In this case, there is no need to invoke a shared unobserved cause. Instead, the logic is straightforward: lives that are evaluated more positively also tend to produce more positive and fewer negative affective experiences on a momentary basis.

One of the few studies to test this hypothesis examined hedonic balance and domain satisfaction as mediators of personality effects on life satisfaction. It found that hedonic balance contributed to the prediction of life satisfaction and was the main mediator of personality effects (Schimmack et al., 2002). Unfortunately, this study has not been replicated, and further research is needed to determine the relative importance of hedonic balance versus domain satisfaction as mediators.

A major problem with the USWB model is that the loadings of the three components—PA, NA, and LS—on the latent variable are not theoretically specified. Instead, they are estimated freely based on the pattern of correlations among the observed variables. However, these correlations can vary substantially across studies. The most problematic issue is that the loading of LS on the USWB factor depends on the correlation between PA and NA. This is conceptually problematic because the influence of an underlying sense of well-being on life satisfaction judgments should not depend on how PA and NA are correlated.

This issue arises from the assumption that PA and NA are both influenced by a single common cause and that the strength of this effect is reflected in the correlation between PA and NA. The problem becomes particularly severe when PA and NA are measured as independent dimensions of affect, as in Bradburn’s original measure or the PANAS (Watson et al., 1988), which has been used in many SWB studies. In these cases, the correlation between PA and NA is often small, or even positive. Positive or near-zero correlations cannot be modeled with a single latent factor. Even small negative correlations can be modeled, but they produce near-unity or even out-of-range loadings for LS, which is implausible.
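The dependence of the LS loading on r(PA, NA) follows from the closed-form solution for a one-factor model with exactly three standardized indicators. Each squared loading equals the product of that indicator's two correlations divided by the remaining correlation, for example λ²(LS) = r(PA, LS) · r(NA, LS) / r(PA, NA). The sketch below uses hypothetical correlations: it holds r(PA, LS) = .40 and r(NA, LS) = -.30 fixed and varies only r(PA, NA).

```python
import math

def one_factor_loadings(r_pa_na, r_pa_ls, r_na_ls):
    """Closed-form loadings (magnitudes only) for a one-factor model with
    exactly three standardized indicators: PA, NA, and LS.
    Returns None where the squared loading would be negative or undefined,
    i.e. where no real-valued one-factor solution exists."""
    def loading(r_ab, r_ac, r_bc):
        # lambda_a^2 = r_ab * r_ac / r_bc, where b and c are the other indicators
        if r_bc == 0:
            return None
        sq = r_ab * r_ac / r_bc
        return math.sqrt(sq) if sq >= 0 else None

    return {
        "PA": loading(r_pa_na, r_pa_ls, r_na_ls),
        "NA": loading(r_pa_na, r_na_ls, r_pa_ls),
        "LS": loading(r_pa_ls, r_na_ls, r_pa_na),
    }

# Vary only the PA-NA correlation; the LS correlations stay fixed.
for r_pa_na in (-0.50, -0.20, -0.12, -0.05, 0.10):
    lam_ls = one_factor_loadings(r_pa_na, r_pa_ls=0.40, r_na_ls=-0.30)["LS"]
    print(r_pa_na, None if lam_ls is None else round(lam_ls, 2))
```

As r(PA, NA) moves from -.50 toward zero, the LS loading climbs from about .49 to 1.0 and then beyond 1.0, an improper solution that implies a negative residual variance. Once r(PA, NA) turns positive, no real-valued solution exists at all.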

This problem is often masked in studies that use PA and NA measures that are moderately to strongly negatively correlated. However, the theoretical issue remains: why should the effect of a latent sense of well-being on life satisfaction depend on the correlation between PA and NA—a correlation that itself is influenced by many other factors, including measurement design and response format?

One simple solution to this problem is to compute a hedonic balance score (e.g., PA minus NA) and propose that an underlying sense of well-being influences both hedonic balance and life satisfaction, but not affective experiences directly. However, this model is not identified; that is, it cannot be estimated from the data alone, because a factor with only two indicators implies a single correlation, which cannot determine two separate loadings. An alternative solution is to allow for a correlated residual between PA and NA. For example, PANAS PA and NA scores may be positively correlated because they both capture high arousal or activation. While this model also remains unidentified in isolation, it can be identified when other predictors are added to the model.
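The identification problem with a two-indicator factor can be seen directly. In a sketch that assumes standardized variables, the model implies only one correlation, r(HB, LS) = λ(HB) · λ(LS), so any pair of loadings with the right product fits the data equally well. The observed correlation of .45 below is hypothetical.

```python
import math

def implied_correlation(lam_hb, lam_ls):
    """Model-implied correlation between hedonic balance (HB) and LS when
    both load on one standardized factor."""
    return lam_hb * lam_ls

r_observed = 0.45  # hypothetical observed HB-LS correlation

# Very different loading pairs reproduce the observed correlation exactly,
# so the data cannot tell them apart: the model is not identified.
candidates = [(lam_hb, r_observed / lam_hb) for lam_hb in (0.9, 0.75, 0.5)]
for lam_hb, lam_ls in candidates:
    fits = math.isclose(implied_correlation(lam_hb, lam_ls), r_observed)
    print(lam_hb, round(lam_ls, 3), fits)
```

Adding other predictors helps because each new variable contributes additional correlations that constrain the loadings separately.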

Figure 1 illustrates the differences between the affective component model and the USWB model. The affective component model does not assume that the shared variance between PA and NA is the product of an unobserved variable (USWB) that directly influences LS. Thus, the path from USWB to LS is assumed to be zero. In contrast, the USWB model assumes that this is the only causal path that relates PA and NA to LS. Thus, the paths from NA to LS and from PA to LS are fixed to zero. The problem with testing these two models against each other is that a model that frees all three parameters is not identified: with only three observed variables, there are only three correlations to explain, and each of the two restricted models already uses all of them.
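To see why the two models cannot be tested against each other with only PA, NA, and LS, the sketch below fits both to the same hypothetical correlation matrix. The regression weights of the affective component model and the loadings of the USWB model each reproduce the matrix exactly, so model fit cannot favor either one.

```python
import numpy as np

# Hypothetical correlations among PA, NA, and LS (in that order).
R = np.array([
    [1.00, -0.40, 0.45],
    [-0.40, 1.00, -0.35],
    [0.45, -0.35, 1.00],
])

# Affective component model: PA and NA correlate and both predict LS.
Rxx, rxy = R[:2, :2], R[:2, 2]
beta = np.linalg.solve(Rxx, rxy)  # standardized paths PA->LS and NA->LS
implied_ac = R.copy()
implied_ac[:2, 2] = implied_ac[2, :2] = Rxx @ beta

# USWB model: one factor with three loadings (NA loads negatively).
r_pa_na, r_pa_ls, r_na_ls = R[0, 1], R[0, 2], R[1, 2]
lam = np.array([
    np.sqrt(r_pa_na * r_pa_ls / r_na_ls),
    -np.sqrt(r_pa_na * r_na_ls / r_pa_ls),
    np.sqrt(r_pa_ls * r_na_ls / r_pa_na),
])
implied_uswb = np.outer(lam, lam)
np.fill_diagonal(implied_uswb, 1.0)  # residual variances absorb 1 - lambda^2

print("paths:", beta.round(3), "loadings:", lam.round(3))
print(np.allclose(implied_ac, R), np.allclose(implied_uswb, R))
```

A model that added a USWB-to-LS path on top of the direct PA and NA paths would need five parameters to explain three correlations.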

In conclusion, the affective component model and the USWB model are more similar than often portrayed, especially in literature reviews that present the USWB model as a measurement model of SWB. In doing so, these reviews often conflate theoretical constructs with empirical models and obscure deeper issues. Simply labeling a latent variable “SWB” does not explain the nature of its components or justify collapsing them into a single factor. As Bollen (2002) notes, unobserved does not mean unobservable. Any model that includes a latent variable must eventually specify what it represents and identify measurable indicators to test its claims. Without this, the latent variable remains a hypothetical placeholder with no direct empirical support (Borsboom, 2003).

Costa and McCrae (1980) proposed one of the first theoretical models linking personality traits to subjective well-being (SWB). In their model, Extraversion is a disposition to experience more positive affect (PA), but not necessarily less negative affect (NA), while Neuroticism is a disposition to experience more negative affect, but not necessarily less positive affect. In turn, PA and NA were conceptualized as independent predictors of both hedonic and evaluative components of well-being.

Within this framework, the influence of Extraversion on life satisfaction (LS) is mediated by PA, and the influence of Neuroticism on LS is mediated by NA. This model does not require a latent USWB factor, because personality traits are assumed to be related only to the unique variances of PA and NA. However, this approach was developed under the assumption that PA and NA are independent constructs.

With PA and NA scales that are empirically correlated—as is often the case in recent studies—Neuroticism tends to predict PA (negatively), and Extraversion tends to predict NA (negatively). This opens up the possibility that the effects of these two personality traits may be fully mediated by a USWB factor, rather than by the unique variances of PA and NA. Busseri and Erb (2024) suggest this possibility, but they did not directly compare models that allow both pathways.

Using their meta-analytic correlation matrix, based on data from N = 30,000 participants, I conducted a model comparison. The affective component model makes a simple prediction: personality traits influence PA and NA directly, and their effects on LS are fully mediated by affect. In this model, direct effects of Neuroticism and Extraversion on LS are fixed to zero, yielding two degrees of freedom. The model fit was acceptable for CFI (CFI = .991) but not for RMSEA (RMSEA = .094). This suggests that personality traits still explain unique variance in LS beyond their effects on affect.
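The two degrees of freedom can be reconstructed by counting. With five observed variables there are 5 × 6 / 2 = 15 distinct variances and covariances. The parameter list below is an assumption rather than a detail taken from the analysis: it frees all four paths from personality to affect and a PA-NA residual covariance, which is one specification that yields exactly two degrees of freedom.

```python
def sem_degrees_of_freedom(n_observed, n_free_params):
    """df = distinct variances and covariances minus freely estimated parameters."""
    n_moments = n_observed * (n_observed + 1) // 2
    return n_moments - n_free_params

# Assumed parameter list for the affective component model with observed
# variables N, E, PA, NA, and LS (a hypothetical reconstruction).
free_params = {
    "variances of N and E": 2,
    "covariance N-E": 1,
    "paths N->PA, N->NA, E->PA, E->NA": 4,
    "residual variances of PA, NA, LS": 3,
    "residual covariance PA-NA": 1,
    "paths PA->LS, NA->LS": 2,
}
k = sum(free_params.values())  # 13 free parameters
print(sem_degrees_of_freedom(5, k))      # 2
# Freeing the direct paths N->LS and E->LS uses up the remaining two df.
print(sem_degrees_of_freedom(5, k + 2))  # 0 (saturated)
```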

When direct paths from Neuroticism and Extraversion to LS were added, the model became saturated (zero degrees of freedom) and, naturally, fit the data perfectly. Importantly, the indirect effects were stronger than the direct effects: for Neuroticism, indirect b = –.24 and direct b = –.09; for Extraversion, indirect b = .21 and direct b = .08. These results do not prove that the affective component model is the correct model, but they show that Busseri and Erb’s data are consistent with it.

The USWB model introduces a latent variable and assumes full mediation of personality effects through this factor. The initial version of the model also assumed that the effects of Extraversion and Neuroticism on affect were themselves fully mediated by USWB. This model showed poor fit (CFI = .852; RMSEA = .267). As Busseri and Erb noted, freeing the path from Neuroticism to NA improved model fit (CFI = .985; RMSEA = .094). Adding a path from Extraversion to PA improved CFI further (CFI = .989), although RMSEA increased slightly (RMSEA = .103) because the additional parameter cost a degree of freedom. Allowing Neuroticism to predict PA directly yielded better fit on both indices (CFI = .997; RMSEA = .072). Finally, adding direct effects from Extraversion and Neuroticism to LS yielded a saturated model with perfect fit.
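Because RMSEA scales misfit by the degrees of freedom, freeing a parameter can lower chi-square and still raise RMSEA. That is one way an RMSEA change from .094 to .103 can coexist with a rising CFI. The sketch uses the common point-estimate formula with hypothetical chi-square values; it does not reproduce Busseri and Erb's statistics.

```python
import math

def rmsea(chi2, df, n):
    """Point estimate of RMSEA: sqrt(max(chi2 - df, 0) / (df * (n - 1)))."""
    return math.sqrt(max(chi2 - df, 0.0) / (df * (n - 1)))

n = 30_000  # sample size reported in the text

# Hypothetical values: freeing one path lowers chi-square by 120 but also
# removes one degree of freedom, so misfit per df goes up.
before = rmsea(chi2=600.0, df=3, n=n)
after = rmsea(chi2=480.0, df=2, n=n)
print(round(before, 3), round(after, 3), after > before)
```

CFI, by contrast, compares the model's chi-square minus df with that of a baseline model, so any drop in chi-square minus df, as in this example, improves CFI even when RMSEA worsens.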

In this model, the effect of Neuroticism on LS was mostly mediated by USWB (indirect b = –.25, direct b = –.08). For Extraversion, however, the indirect effect was smaller than the direct effect (indirect b = .11, direct b = .18).
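A simple consistency check follows from the fact that both final models are saturated. Fit to the same correlation matrix, they must imply the same total effect of each trait on LS, even though they divide it differently into indirect and direct parts. The sketch sums the rounded values reported in the text; the tolerance allows for that rounding.

```python
import math

# Standardized (indirect, direct) effects on LS, as reported in the text.
effects = {
    "affective component": {"Neuroticism": (-0.24, -0.09), "Extraversion": (0.21, 0.08)},
    "USWB": {"Neuroticism": (-0.25, -0.08), "Extraversion": (0.11, 0.18)},
}

def total_effect(indirect, direct):
    """Total effect of a trait on LS = indirect (mediated) part + direct part."""
    return indirect + direct

def same_total(trait, tol=0.015):
    """True if both saturated models imply the same total effect, within rounding."""
    totals = [total_effect(*by_trait[trait]) for by_trait in effects.values()]
    return math.isclose(totals[0], totals[1], abs_tol=tol)

for trait in ("Neuroticism", "Extraversion"):
    for model, by_trait in effects.items():
        indirect, direct = by_trait[trait]
        total = total_effect(indirect, direct)
        # Share of the total effect that runs through the mediator(s).
        print(f"{model:20s} {trait:12s} total={total:+.2f} indirect share={indirect / total:.2f}")
    print(trait, "totals agree:", same_total(trait))
```

Both models imply total effects of about –.33 for Neuroticism and .29 for Extraversion. The indirect share differs most for Extraversion: about 72 percent in the affective component model (.21 / .29) versus about 38 percent in the USWB model (.11 / .29).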

The key conclusion from this model comparison is that the data are consistent with both the affective component model and the USWB model. The main reason is that the SPANE PA and NA scales used in these analyses are not independent and are not uniquely related to Extraversion and Neuroticism. Thus, these data do not allow us to empirically distinguish the two models. Additional data, preferably using orthogonal measures of affect and independent personality predictors, are needed to provide a definitive test.

At a broader level, this highlights the enduring third-variable problem in correlational research. For example, if PA and LS are correlated, we know that LS cannot cause PA (because affect precedes evaluation), but we cannot determine whether PA influences LS or whether both are caused by an unobserved third variable.

Distinguishing Causal Models and Conceptual Models

A recurring problem in the literature on subjective well-being is the confusion between causal models and conceptual models. The question “What is SWB?” is a conceptual one. The question “What causes SWB?” is a causal one. The first must be answered before the second can be meaningfully addressed. Unfortunately, this distinction is often overlooked—particularly in studies that use structural equation modeling (SEM) without a clearly defined theoretical foundation for the constructs being modeled.

SEM is a powerful tool for testing causal hypotheses, but it relies on the assumption that the latent variables it models represent valid theoretical constructs. For instance, researchers may assume that people do, in fact, have affective experiences that can be measured with some error. If multiple observed indicators are available (e.g., several items measuring PA), SEM can separate valid variance from measurement error by modeling a latent PA factor.
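A latent factor with several indicators removes random measurement error in the same spirit as Spearman's classical correction for attenuation. The sketch uses that closed-form correction as a stand-in; it is not the SEM estimator itself, and the observed correlation and reliabilities are hypothetical.

```python
import math

def disattenuate(r_observed, rel_x, rel_y):
    """Spearman's correction for attenuation: estimated correlation between
    error-free constructs, given the observed correlation and the
    reliabilities of both measures."""
    return r_observed / math.sqrt(rel_x * rel_y)

# Hypothetical values: observed PA-LS correlation of .40, reliabilities .80 and .85.
print(round(disattenuate(0.40, 0.80, 0.85), 3))
```

With these numbers the corrected correlation is roughly .49. The correction only rescales shared variance that is already present; it cannot show whether that shared variance reflects a new construct, which is the issue raised next.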

However, problems arise when researchers go beyond measurement error correction and start creating new latent constructs simply because observed variables are correlated. For example, Keyes et al. (2002) created a latent PWB factor because scores on Ryff’s six psychological well-being scales were correlated. But the decision to treat the shared variance as a new construct cannot be justified purely on psychometric grounds. It requires a theoretical justification—an argument that a previously unmeasured construct exists and is responsible for the observed correlations.

This same issue plagues latent SWB models. Simply labeling the shared variance between PA, NA, and LS as “subjective well-being” does not answer the conceptual question of what SWB actually is. Nor does it clarify what the unique variances in PA, NA, and LS represent. As Busseri and Sadava (2010) rightly asked: What is SWB?

One answer is that SWB reflects hedonic well-being, and life satisfaction judgments are merely expressions of this hedonic state. In this view, LS is a valid indicator of SWB only to the extent that it correlates with PA and NA; any unique variance in LS is treated as measurement error. This is the position associated with Ryan and Deci (2001), though they later acknowledged that LS is “not strictly a hedonic concept” (Deci & Ryan, 2008). In fact, even Busseri and Erb (2024) explicitly reject the idea that LS is simply a proxy for hedonic balance.

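Thus, the SWB factor model, when treated as a conceptual model, fails to address the very question it is meant to answer. At the conceptual level, SWB is not a unitary construct. It includes at least two distinct components: hedonic well-being, reflected in the balance of PA and NA, and subjective life evaluations, reflected in LS judgments that people make according to their own standards.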

As discussed earlier, a latent SWB model may still be useful as a causal model—for example, to test whether hedonic experiences predict life satisfaction—but this does not imply that SWB itself is a coherent, unified construct. Conceptually, it is not. The degree to which people rely on affective experiences when making life evaluations is an empirical question that requires a clear distinction between hedonic indicators (PA, NA) and subjective indicators (LS) based on individuals’ own evaluative frameworks.

Conclusion

Life satisfaction judgments are a prominent subjective indicator of well-being. They are based on a distinctive approach to defining well-being—one that gives individuals the authority and responsibility to define happiness for themselves. This perspective emerged from opinion and survey research, where social scientists sought to understand how people evaluate their own lives. In contrast, philosophers have traditionally attempted to define happiness objectively, applying a single standard to all individuals. These efforts have given rise to both eudaimonic and hedonistic theories of well-being.

Diener (1984) classified eudaimonic indicators as objective, and affective experiences as subjective, because they are shaped by the personalities of the individuals experiencing them. He also classified life satisfaction judgments as subjective, because they require individuals to evaluate their lives according to their own criteria. This led to the now-common definition of subjective well-being (SWB) as consisting of high PA, low NA, and high LS. However, this definition has created considerable confusion, particularly because it is unclear how to integrate information from PA, NA, and LS into a single SWB indicator.

I have argued that SWB is not a well-defined theoretical construct. The label simply groups two theories of happiness—hedonistic and subjectivist—under a broad umbrella, but this does not mean they form a unified concept. Hedonic balance is subjective in the sense that affective experiences are internal, but its use as a well-being indicator is grounded in hedonistic philosophy, which asserts that pleasure and pain are the only relevant evaluative criteria. In contrast, life satisfaction judgments are subjective because they give people the freedom to define happiness for themselves. This conception is not rooted in a philosophical definition of happiness but instead reflects a pluralistic, individual-centered approach: happiness is whatever people believe it to be.

This makes life satisfaction a unique and important social indicator. It is not merely a component of SWB, but an independent construct that captures people’s own evaluations of their lives—evaluations that may or may not align with affective states. While this conclusion may reinforce existing practices in social indicators research, it is valuable to clarify the conceptual foundation for using life satisfaction as a measure of happiness. Life satisfaction is a valid indicator of happiness only under the assumption that happiness cannot be objectively defined and that people can generate meaningful, personal theories of well-being. This approach stands in contrast to hedonistic models and should not be collapsed into a composite SWB score alongside PA and NA.

Andrews, F. M., & McKennell, A. C. (1980). Measures of self-reported well-being: Their affective, cognitive, and other components. Social Indicators Research, 8(2), 127–155.

Anglim, J., & Grant, S. (2016). Predicting psychological and subjective well-being from personality: Incremental prediction from 30 facets over the Big Five. Journal of Happiness Studies, 17(1), 59–80. https://doi.org/10.1007/s10902-014-9583-7

Anglim, J., Horwood, S., Smillie, L. D., Marrero, R. J., & Wood, J. K. (2020). Predicting psychological and subjective well-being from personality: A meta-analysis. Psychological Bulletin, 146(4), 279–323. https://doi.org/10.1037/bul0000226

Anusic, I., Schimmack, U., Pinkus, R. T., & Lockwood, P. (2009). The nature and structure of correlations among Big Five ratings: The halo-alpha-beta model. Journal of Personality and Social Psychology, 97(6), 1142–1156. https://doi.org/10.1037/a0017159