Introduction

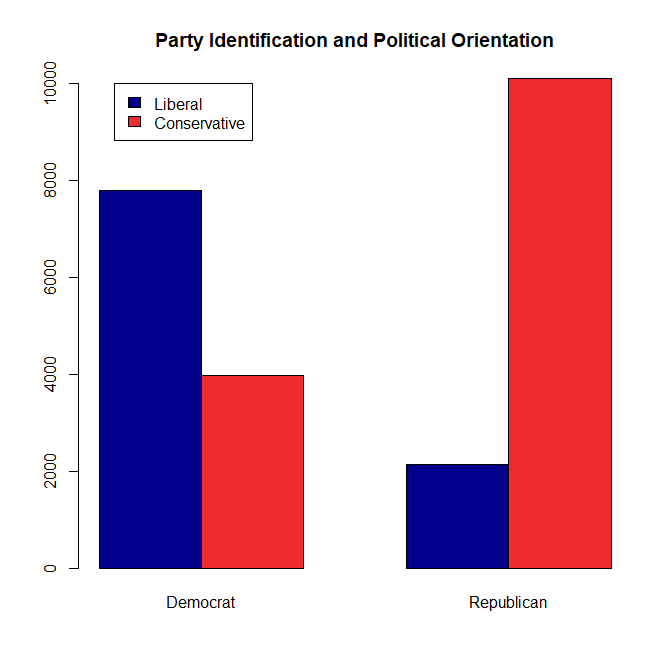

Social psychology aims to study real-world problems with the tools of experimental psychology. The classic obedience studies by Milgram aimed to provide insights into the Holocaust by examining participants’ reactions to a sadistic experimenter. In this tradition, social psychologists have studied prejudice against African Americans since the beginning of experimental social psychology.

As Amodio and Cikara (2021) note, these studies aim to answer questions such as “How do humans learn to favor some groups over others?” and “Why does merely knowing a person’s ethnicity or nationality affect how we see them, the emotions we feel toward them, and the way we treat them?”

In their chapter, the authors review the social neuroscience of prejudice. Looking for prejudice in the brain makes a lot of sense. A person without a brain is not prejudiced, so we know that prejudice is somewhere in the brain. The problem for neuroscience is that the brain is complex and it is not easy to figure out how it does what it does. Another problem is that prejudice is most relevant when individuals act on their prejudices. For example, it is possible that prejudice contributes to the high prevalence of police shootings that involve Black civilians (Schimmack & Carlson, 2020). However, the measurement of brain activity often requires repeated measurements of many trials under constrained laboratory conditions.

In short, using neuroscience to understand prejudice faces some practical challenges. I was therefore skeptical that this research has produced much useful information about prejudice. When I voiced my skepticism on Twitter, Amodio called me a bully. I therefore took a closer look at the chapter to see whether my skepticism was reasonable or whether I was uninformed and prejudiced against social neuroscientists.

Face Processing

To act on prejudice, the brain has to detect a difference between White and Black faces. Research shows that people do indeed notice these differences, especially in faces that were selected to be clear examples of the two categories. If asked to indicate whether a face is White or Black, responses can be made within a few hundred milliseconds.

The authors acknowledge that we do not need social neuroscience to know this: “behavioral studies suggest that social categorization occurs quickly (Macrae & Bodenhausen, 2000)” (p. 3). However, they suggest that neuroscience produces additional information. Unfortunately, the meaning of early brain signals in the EEG is often unclear. Thus, the main conclusion that the authors can draw from these findings is that they provide “support for the early detection and categorization of race.” In other words, when the brain sees a Black person, we usually notice that the person is Black. It is not clear, however, that any of these early brain measures reflect the evaluation of the person, which is really the core of prejudice. Categorization is necessary, but not sufficient, for prejudice. Thus, this research does not really help us to understand why noticing that somebody is Black leads to negative evaluations of this person.

Another problem with this research is the artificial nature of the task. Rather than presenting a heterogeneous set of faces that is representative of participants’ social environment, participants see 50% White and 50% Black faces. Every White person who has suddenly been in a situation where 50% or more of the people are Black may notice that they respond differently to this situation. The brain may look very different in these situations than in situations where race is not salient. In addition, the faces are those of strangers. Thus, these studies have no relevance for prejudice in work settings with colleagues or in classrooms where teachers know their students. This lack of ecological validity is of course not unique to brain studies of prejudice. It also applies to behavioral experiments.

The only interesting and surprising claim in this section is that Black participants respond to White faces just like White participants respond to Black faces. “Research with Black participants, in addition to White participants, has replicated this pattern and clarified that it is typically larger to racial outgroup faces rather than Black faces per se” (p. 5). The statement is a bit muddled because the out-group for Black participants is White.

Looking up the results from Dickter and Bartholow (2007) shows a clear participant x picture interaction effect (i.e., responses on opposite-race trials differ from responses on same-race trials). While the effect for White participants is clearly significant, the effect for Black participants is not, F(1,13) = 4.46, p = .054, but was misreported as significant, p < .05. The second study did not examine Black participants or faces. It also did not include White participants. It showed that Asian participants responded more strongly to the outgroup (White) than the in-group (Asian), F(1, 19) = 17.06, p = .0006. The lack of a White group of participants is puzzling. The third study had the largest sample of Black and White participants (Volpert-Esmond & Bartholow, 2019), but did not replicate Dickter and Bartholow’s findings: “the predicted Target race × Participant race interaction was not significant, b=−0.09, t(61.3)=−1.47, p=.146.” I have seen shady citations and failure to cite disconfirming evidence, but it is pretty rare for authors to simply list a disconfirming study as if it produced consistent evidence. In conclusion, there is no clear evidence of how minority groups respond to faces of different groups because most of the research is done by White researchers at White universities with White students.
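
The p-values quoted above can be rechecked from the reported test statistics. The following is a minimal sketch, not the procedure used in the original articles: it assumes that an F-test with one numerator degree of freedom is equivalent to a two-sided t-test (F = t²), and it integrates the t density numerically so that only the Python standard library is needed. The function names are hypothetical and chosen for illustration.

```python
import math


def t_pdf(x, df):
    """Density of Student's t distribution with df degrees of freedom."""
    log_c = (math.lgamma((df + 1) / 2) - math.lgamma(df / 2)
             - 0.5 * math.log(df * math.pi))
    return math.exp(log_c - (df + 1) / 2 * math.log1p(x * x / df))


def two_sided_p_from_f(f_value, df_error, steps=2000):
    """Two-sided p-value for F(1, df_error), using F = t^2.

    The probability mass between -t and t is obtained with Simpson's
    rule on [0, t] (steps must be even) and doubled by symmetry.
    """
    t = math.sqrt(f_value)
    h = t / steps
    total = t_pdf(0.0, df_error) + t_pdf(t, df_error)
    for i in range(1, steps):
        total += (4 if i % 2 else 2) * t_pdf(i * h, df_error)
    central_mass = 2 * (h / 3) * total
    return 1 - central_mass


# Test statistics as reported in the text above.
print(f"F(1, 13) = 4.46  -> p = {two_sided_p_from_f(4.46, 13):.3f}")
print(f"F(1, 19) = 17.06 -> p = {two_sided_p_from_f(17.06, 19):.4f}")
```

Under these assumptions, the first value should land just above .05 and the second well below .001, in line with the values given in the text.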

The details are of course not important because the authors’ main goal is to sell social neuroscience. “Social neuroscience research has significantly advanced our understanding of the social categorization process” (p. 11). A close reading shows that this is not the case and that it is unclear what early brain signals mean and how they are modulated by the context, race of participants, and race of faces.

How is prejudice learned, represented, and activated?

Studying learning is a challenging task in an experimental context. To measure learning, some form of memory task must be administered. Moreover, this assessment has to be preceded by a learning task. To make learning experiments more realistic, it is ideal to have a retention interval between the learning and the memory task. However, most studies in psychology are one-shot laboratory studies. Thus, the ecological validity of learning studies is low. Not surprisingly, the chapter contains no studies that examine neurological responses during learning or memory tasks related to prejudice.

Instead, the chapter reviews circumstantial evidence that may be related to prejudice. First, the authors review the general literature on Pavlovian aversive conditioning. However, they provide no evidence that prejudice is rooted in fear conditioning. In fact, many White Americans in White parts of the country are prejudiced without ever having had threatening interactions with Black Americans. Not surprisingly, even the authors note that fear conditioning is not the most plausible root of prejudice.

“Some research has attempted to demonstrate a Pavlovian basis of prejudice using prepared fear or reversal learning paradigms (Dunsmoor et al., 2016; Olsson et al., 2005), but these results have been inconclusive regarding a prepared fear to Black faces (among White Participants) or have failed to replicate (Mallan et al., 2009; Molapour et al., 2015; Navarrete et al., 2009; Navarette et al., 2012). To our knowledge, research has not yet directly tested the hypothesis that social prejudice can be formed through Pavlovian aversive conditioning” (p. 14)

As processing of feared objects often involves the amygdala, one would expect White brains to show an amygdala response to Black faces. Contrary to this prediction, “most fMRI studies of race perception have not observed a difference in amygdala response to viewing racial outgroup compared with ingroup members (e.g., Beer et al., 2008; Gilbert et al., 2012; Golby et al., 2001; Knutson et al., 2007; Mattan et al., 2018; Phelps et al., 2000; Richeson et al., 2003; Ronquillo et al., 2005; Stanley et al., 2012; Telzer et al., 2013; Van Bavel et al., 2008, 2011).” (p. 15). The large number of studies shows how many resources were wasted on a hypothesis that is not grounded in an understanding of racism in the United States.

The chapter then reviews research on stereotypes. The main insight provided here is that “while the neural basis of stereotyping remains understudied, existing research consistently identifies the ATL (anterior temporal lobe) as supporting the representation of social stereotypes” (p. 17). However, it remains unclear what we learn about prejudice from this finding. If stereotypes were supported by some other brain area, would this change prejudice in some important way?

The authors next examine the involvement of instrumental learning in prejudice. “Although social psychologists have long hypothesized a role for instrumental learning in attitudes and social behavior (e.g., Breckler, 1984), this idea has only recently been tested using contemporary reinforcement learning paradigms and computational modeling (Behrens et al., 2009; Hackel & Amodio, 2018).” (p. 19). Checking Hackel and Amodio (2018) shows that this review article does not mention prejudice. Other statements have nothing to do with prejudice, but rather explain why prejudice may not influence responses to all group-members. “Behavioral studies confirm that people incrementally update their attitudes about both persons (Hackel et al., 2019)” (p. 19). The authors want (us) to believe that “a model of instrumental prejudice may help to understand aspects of implicit prejudice” (p. 20), but they fail to make clear how instrumental learning is related to prejudice, let alone implicit prejudice.

The section on prejudice as habits starts with a false premise. “Habits: A basis for automatic prejudice? Automatic prejudices are often likened to habits; they appear to emerge from repeated negative experiences with outgroup members, unfold without intention, and resist change (Devine, 1989).” Devine’s (1989) classic subliminal priming study has not been replicated, and it has been questioned whether subliminal priming in general produces robust findings. Moreover, the study has been questioned on methodological grounds, and it has been shown that classifying an individual as Black does not automatically trigger negative responses. The main reason why prejudice is not a habit is that forming a habit often requires many repeated instances, and many White individuals have too little contact with Black individuals to form prejudice habits. The whole section is irrelevant because the authors note that “social neuroscience has yet to investigate the role of habit in prejudice” (p. 21). We can only hope that funding agencies are smart enough not to waste money on this kind of research.

This whole section ends with the following summary.

“A major contribution of social neuroscience research on prejudice has been to link different aspects of prejudice—stereotypes, affective bias, and discriminatory actions—to neurocognitive models of learning and memory. It reveals that intergroup bias, and implicit bias in particular, is not one phenomenon, but a set of different processes that may be formed, represented in the mind, expressed in behavior, and potentially changed via distinct interventions.” In short, social neuroscience has told us nothing about prejudice that we did not already know without it.

Effects of prejudice on perception

The first topic is face perception. Behavioral studies show that individuals tend to be better able to discriminate between faces of their own group than faces of another group. Faces are processed in a brain area called the fusiform gyrus. A study by Golby et al. (2001) with 10 White and 10 Black participants confirmed this finding for White Americans, t(8) = 2.10, p = .03, but not for African Americans, t(9) = 0.63. Given the small sample size, the interaction is not significant in this study. The more important finding was that the fusiform gyrus showed more activation to same-race faces, t(18) = 2.58, p = .02. Inconsistent with the behavioral data, African American participants showed this same-race increase in activation of the fusiform face area as much as White participants. Over the past two decades, this preliminary study has been cited over 300 times. We would expect a review in 2021 to include follow-up and replication studies, but the preliminary results of this seminal study are offered as evidence as if they were conclusive. Yet, in 2021 it is clear that results with just significant p-values, .05 > p > .005, often do not replicate. The authors seem to be blissfully unaware of the replication crisis. I was able to find a recent study that examined own-group bias for White participants only with three age groups. The study replicated findings that White participants show more activation to White faces than to Black faces, especially for adolescents and adults. The study also linked this finding to prejudice, but I will discuss these results later because they were not the focus of the review article.

In short, behavioral studies have demonstrated that White Americans have difficulties in distinguishing Black faces. This has led to false convictions based on misidentification by eye-witnesses. Expert testimony by psychologists has helped to draw awareness to this problem. Social neuroscience shows that this problem is correlated with activity in the fusiform gyrus. It is not clear, however, how knowledge about the localization of face processing in the brain provides a deeper understanding of the problem.

The authors suggest, however, that face processing may directly lead to discriminatory behavior based on an article by Krosch and Amodio (2019). In a pair of experiments, White participants were given a small or large amount of money and then had to allocate it to White or Black recipients based on some superficial impression of deservingness. In Study 1 (N = 81, 10 excluded), EEG responses to the faces showed a greater N170 response to Black faces, but only when resources were scarce, two-way interaction F(1, 69) = 4.97, p = .029. Furthermore, the results showed a significant mediation effect on resource allocation, b = .14, se = .09, p = .039. Study 2 used fMRI (N = 35, 5 excluded). This study showed the race effect on the fusiform gyrus, but only in the scarcity condition, F(1, 28) = 7.16, p = .012. Despite the smaller sample size, the mediation analysis was also significant, b = .43, se = .17, t = 2.64, p = .014. While the conceptual replication of the finding across two different studies with different brain measures makes these results look credible, the fact that all critical tests produced just significant results, .05 > p > .01, undermines the credibility of these findings (Schimmack, 2012). The most powerful test of credibility for a small set of tests is the Test of Insufficient Variance (Schimmack, 2014; Renkewitz & Keiner, 2019). The test first converts the p-values into z-scores. It then compares the observed variance to the expected variance of 1. The observed variance for these four p-values is much smaller, V = .05. A chi-square test shows that the probability of this outcome by chance is p = .013. Thus, it is unlikely that sampling error alone produced this restricted amount of variation. A more likely explanation is that the authors used questionable research practices to produce a perfect picture of significant results when the actual studies had insufficient power to produce significant results even if the main hypotheses are true.
The main problem is the mediation analyses, which rely on correlations in small samples. It has been shown that many mediation analyses cannot be trusted because they are biased by questionable research practices.
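
The Test of Insufficient Variance described above can be sketched in a few lines. This is a minimal illustration, not the author's own script: it assumes the reported p-values are two-sided, that the variance of the z-scores is the sample variance, and that the chi-square test is left-tailed with k - 1 degrees of freedom. The function names (`p_to_z`, `chi2_cdf`, `tiva`) are hypothetical, and the chi-square probability is computed with a standard power series so that no external library is required.

```python
import math
from statistics import NormalDist, variance


def p_to_z(p):
    """Convert a two-sided p-value into the corresponding absolute z-score."""
    return NormalDist().inv_cdf(1 - p / 2)


def chi2_cdf(x, df):
    """Lower-tail chi-square probability P(X <= x), computed with the
    power series for the regularized lower incomplete gamma function."""
    if x <= 0:
        return 0.0
    a, y = df / 2, x / 2
    term = 1 / a
    total = term
    n = 1
    while term > 1e-16 * total:
        term *= y / (a + n)
        total += term
        n += 1
    return total * math.exp(-y + a * math.log(y) - math.lgamma(a))


def tiva(p_values):
    """Return (variance of z-scores, left-tail p-value), assuming an
    expected variance of 1 for independent, unselected tests."""
    z_scores = [p_to_z(p) for p in p_values]
    observed_var = variance(z_scores)      # sample variance, divisor k - 1
    k = len(z_scores)
    chi2_stat = (k - 1) * observed_var     # (k - 1) * s^2 / expected variance
    return observed_var, chi2_cdf(chi2_stat, k - 1)


# The four p-values reported above for Krosch and Amodio (2019).
observed_var, p_left = tiva([.029, .039, .012, .014])
print(f"variance of z-scores = {observed_var:.3f}, p = {p_left:.3f}")
```

Under these assumptions, the result should be close to the values reported above (a variance of about .05 and a probability of about .013). A small left-tail probability means that the z-scores vary less than sampling error alone would predict.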

Effects of prejudice on emotion

Emotion is the most important topic for understanding prejudice. Whereas attitudes are broad dispositions to evaluate members of a specific group positively or negatively, emotions are the actual, momentary affective reactions to members of these groups. Ideally, neuroscience would be able to provide objective measures of emotions. These measures would reveal whether a White person responds with negative feelings in an interaction with a Black person. Obtaining objective, physiological indicators of emotions has been the holy grail of emotion research. First attempts to locate emotions in the body failed. Facial expressions (smiles and frowns) can provide valid information, but facial expressions can be controlled and do not always occur in response to emotional stimuli. Thus, the ability to measure brain activity seemed to open the door for objective measures of emotions. However, attempts to find signals of emotional valence in the EEG have failed. fMRI research focused on amygdala activity as a signal of fear, but later research showed that the amygdala also responds to some positive stimuli, specifically erotic stimuli. Given this disappointing history, I was curious to see what the latest social neuroscience research on emotion has uncovered.

As it turns out, this section provides no new insights into emotional responses to members of an outgroup. The main focus is on guilt and on empathy in the context of taking the perspective of an in-group or out-group member. The main reason why fear or hate are not explored is probably that there are no known neural correlates of these emotions and that showing undergraduate students pictures of Black and White faces is unlikely to elicit strong emotions.

In short, the main topic where neuroscience could make a contribution suffers from a lack of valid measures of emotions in the brain.

Effects of prejudice on decision making

Emotional responses would be less of a problem if individuals did not act on their emotions. Most adult individuals learn to regulate their emotions and to inhibit undesirable behaviors. The reason prejudice is a problem for minority groups is that some White individuals do not feel a need to regulate their negative emotions towards African Americans or that they lack the ability to do so in some situations, which is often called implicit bias. Thus, understanding how the brain is involved in actual behaviors is even more important than understanding its contribution to emotions. Although of prime importance, this section is short and contains few citations. One reference is to the resource allocation study by Krosch and Amodio that I reviewed in detail earlier. Blissfully unaware of the questions raised about oxytocin research, the authors also cite a study with oxytocin administration (Marsh et al., 2017). Thus, there is no research reviewed here that illuminates what the brain is doing when White individuals discriminate against African Americans. This does not stop the authors from making a big summary statement that “social neuroscience research has refined our understanding of how prejudice influences the visual processing of faces, intergroup emotion, and decision-making processes, particularly as each type of response pertains to behavior” (p. 34).

Self-regulation of Prejudice

This section starts off with a study by Amodio et al. (2004) and the claim that the results of this study have been replicated in numerous studies (Amodio et al., 2006; 2008; Amodio & Swencionis, 2018; Bartholow et al., 2006; Beer et al., 2008; Correll et al., 2006; Hughes et al., 2017). The main claim based on these studies is that self-regulation of prejudice relies on “detection of bias and initiation of control, in dACC—a process that can operate rapidly and in the absence of deliberation, and which can explain individual differences in prejudice control failures” (p. 39).

Amodio et al.’s (2004) study used the weapons identification task. This task is an artificial task that puts participants in the position of a police officer who has to make a split-second decision whether a civilian is holding a gun or some other object (cell phone). Participants have to indicate as quickly as possible whether the object is a gun or not. The race of the civilians is manipulated to examine racial biases. A robust finding is that White participants are faster to identify guns after seeing a Black face than a White face and slower to identify a tool after seeing a Black face than a White face. On some trials, participants also make mistakes. When the brain of participants notices that a mistake was made, EEG shows a distinct signal that is called the error-related negativity (ERN). The key finding in this article is that the ERN was more pronounced when participants identified a tool as a gun in trials with Black faces than in trials with White faces, t(33) = 2.94, p = .006. Correlational analysis suggested that participants with larger ERNs after mistakes with Black faces learned from their mistakes and reduced their errors, r(32) = -.50, p = .004. These results show that at least some individuals are aware when prejudice influences their behaviors and control their behaviors to avoid acting on their prejudice. It is difficult to generalize from this study to regulation of prejudice in real life because the task is artificial and most situations provide only ambiguous feedback about the appropriateness of actions. Even the behavior here is a mere identification rather than an actual behavior such as a shoot or no-shoot decision, which might produce more careful responses and fewer errors, especially in more realistic training scenarios (Andersen zzz).

Another limitation of these studies is the reliance on a few pictures to represent the large diversity of Black and White people.

All replication studies seem to have used the same faces. Therefore, it is unclear how generalizable these results are and how much contextual factors (e.g., gender, age, clothing, location, etc.) might moderate the effect.

Some limitations of the generalizability were reported by Amodio and Swencionis (2018). The racial bias effect was eliminated (no longer statistically significant) when 80% of trials showed Black faces with tools rather than guns. This finding is not predicted by models that assume racial bias often has an implicit (automatic and uncontrollable) effect on behavior. Here it seems that simple knowledge about the low frequency of Black people with guns was sufficient to block the behavioral expression of prejudice. Study 4 measured EEG, but did not report ERN results.

The summary of this section concludes that “social neuroscience research on prejudice control has significantly expanded psychological theory by identifying and distinguishing multiple mechanisms of control” (p. 39). I would disagree. The main finding appears to be that the brain sometimes fails to notice that it made an error and that lack of awareness of these errors prevents correcting them. However, the studies are designed to produce errors in the first place to be able to measure the ERN. Without time pressure, few errors would be made, and Amodio and Swencionis (2018) show that racial bias depends on the specific context. That being said, lack of awareness may cause sustained prejudice in the real world. One important role of diversity training is to make majority members aware of behaviors that hurt minority members. Awareness of the consequences should reduce the frequency of these behaviors because they are controllable, as the reviewed research suggests.

The conclusion section repeats the claim that the review highlights “major theoretical advances produced by this literature to date” (p. 42). However, this claim rings hollow in comparison to the dearth of findings that inform our understanding of prejudice. The main problem for social neuroscience of prejudice is that the core component of prejudice, negative affect, has no clear neural correlates in EEG or fMRI measures of the brain, and that experimental designs suitable for neuroscience have low ecological validity. The authors suggest that this may change in the future. They provide a study with Black and White South Africans as an example. The study measured fMRI while participants viewed short video clips of Black and White individuals in distress. The videos were taken from the South African Truth and Reconciliation Commission. The key finding was that brain signals related to empathy showed an in-group bias. Both groups responded more to distress by members of their own group. The fact that this study is offered as an example of greater ecological validity shows how difficult it is for social neuroscience to study prejudice in realistic settings where one individual responds to another individual and their behavior is influenced by prejudice. The authors also point to technological advances as a way to increase ecological validity. Wearable neuroimaging makes it possible to measure the brain in naturalistic settings, but it is not clear what brain signals would produce valuable information about prejudice.

My main concern is that social neuroscience research on prejudice takes away resources from other, in my opinion more important, prejudice research that focuses on actual behaviors in the real world. I am not the only one who has observed that the focus on cognition and the brain has crowded out research on actual behaviors (Baumeister, Vohs, & Funder, 2007; Cesario, 2021). If a funding agency can spend a million dollars on a grant to study the brains of undergraduate students while they look at Black and White faces or on the shooting errors of police officers in realistic simulations, I would give money to the study of actual behavior. There is also a dearth of research on prejudice from the perspective of the victims. They know best what prejudice is and how it affects them. There needs to be more diversity in research, and White researchers should collaborate with Black researchers who can draw on personal experiences and deep cultural knowledge that White researchers lack or fail to use in their research. Finally, the incentive structure needs to change. Prejudice researchers are rewarded like all other researchers for publishing in prestigious journals that are controlled by White researchers. Even journals dedicated to social issues have this systemic bias. Prejudice research more than any other field needs to ensure equity, diversity, and inclusion at all levels. Moving social neuroscience of prejudice out of White social cognition research into a diverse and interdisciplinary field might help to ensure that these studies actually inform our understanding of prejudice. Thus, a reallocation of funding is needed to ensure that funding for prejudice research benefits African Americans and other minority groups.