“More research is needed” is the most important conclusion for academics. More research means more money for oneself, graduate students, and other academics who benefit from a research paradigm (Kuhn, 19$$). A paradigm is essentially a game. Like other games, it has rules that regulate behavior. Like professional games, players are incentivized to expand the game or at least keep it going. Hence, “more research is needed.”

In healthy paradigms, the game produces knowledge. However, not all academic paradigms are healthy. Some never produced any knowledge and others did produce some knowledge at some point but are no longer doing so. However, recognizing that a paradigm is dead threatens the academics who benefit from it. Therefore, they are the least likely to notice that their research activities are not producing knowledge. Thus, change can be excruciatingly slow.

Paradigm change usually requires a new paradigm that gives players something new to do. In psychology, personal computers made it possible to measure reaction times cheaply, and a new game was to study psychological process with the speed of button presses, culminating in the measurement of implicit racial biases with the Implicit Association Test. Two decades later, it is clear that this measure is useless for any practical purposes, but before a new game is found, IAT studies continue to pollute the literature and waste limited research funds.

The public is generally unaware of the politics of academic research and assumes that academics are scientists who are selfless monks who have dedicated their lives to the search for truth, akin to nuns and monks who pledged their lives to follow God. In reality, academic research is just another industry and academics are following market pressures. Marketing is as important, or more important, than research and development. And paradigms have to maintain a positive image. The pressure to demonstrate progress is counterproductive to admitting and learning from mistakes.

In the old days, guardians of paradigms had the advantage that they controlled the communication about research findings. Research had to be published in prestigious journals that were sold as rigorous and scientific because articles were peer-reviewed. However, peer-review served as a mechanism to keep critical research out of the literature. Thus, the publishing monopolies helped sick paradigms to live longer. This changed after the internet made it easier and cheaper to share information online. Online-only journals also made it possible to publish critical articles that were suppressed by censorship in legacy journals that were controlled by paradigm guardians.

In 2026, the power of paradigm guardians is decreasing further because AI makes it easy to critically examine published articles and meta-analysis. AI can also evaluate the strength of arguments rather than rely on journal prestige to evaluate research. All that AI needs is access to critical information and prompts that make it look for it. And that is exactly what I am doing here to undermine attempts to keep the social priming paradigm alive.

In short, social priming is the idea that people’s behavior is often influenced by stimuli in their environment without awareness. Yes, one example, is implicit prejudice. White women grab for their handbags, men reach for their wallets, when they see a Black person walk by, but if you ask them, they did not even see a Black person. Advertising may work in the same way, and subliminal stimuli have been used to show the effect. The unconscious revolution in experimental social psychology started in the 1990s (when PCs entered psychology labs) and did well until 2012, when Doyen et al. (2012) published a replication failure of a famous social priming study (seeing words related to old people made young students walk slower). Daniel Kahneman, a Nobel Prize winner, had just published a bestselling book that featured this work prominently as scientifically proven. However, replication failures were piling up in the 2010s, and social priming became the poster child of the replication crisis in experimental social psychology.

Most of the older paradigm leaders were old and retired without much fighting. Younger academics found other paradigms to play. However, some mid-career academics took it upon themselves to keep the paradigm alive. I asked ChatGPT

“who are still social psychologists who actively do priming research or at least vocally defend it against criticism that it was mostly p-hacked studies that produced no credible evidence?

“I’m checking for people with recent, public evidence of either continuing priming research or explicitly defending social priming against the p-hacking / non-credibility critique. I’ll separate “still publishing on priming” from “mainly defending the literature in commentary or interviews. A first pass suggests a small set of names rather than a broad camp. One clear current defender is Dolores Albarracín, who coauthored a 2022 paper arguing the “social priming” label is misleading and that critics focused too narrowly on a handful of underpowered studies.”

ChatGPT also summarized her original research on priming that produced mixed results.

Dolores Albarracín did conduct priming studies, especially on action/inaction and achievement primes, but the replication record is mixed rather than clearly favorable. Some of her priming effects were later tested in large direct-replication projects, and the results generally suggested that the original effects were either much smaller than first reported or not reliably reproduced. So it would be inaccurate to say her priming work was cleanly vindicated, but also too strong to say all of it simply collapsed.

ChatGPT found that Albarracin commented on replicatoin failures as inconclusive, but did not conduct new preregistered replicatoin studies with sufficient statistical power to detect smaller effects.

Albarracín has responded publicly to the replication issue. In a 2021 commentary with Wenhao Dai, she explicitly argued that several failed replications of a few underpowered priming studies from the 2000s were being overgeneralized to the entire priming literature.

What I did not find is clear evidence that Albarracín herself later led registered direct replications of the specific paradigms that had failed in the replication era.

Weingarten et al. (2016) was the first major Albarracín-associated meta-analysis of behavioral word-priming studies. It reported a small average effect, d = 0.33 to 0.35, across 352 effect sizes from 133 studies and concluded that publication bias did not fully account for the findings. Sotola (2022) reanalyzed the same literature with z-curve and obtained much more pessimistic estimates: an expected discovery rate of 5.9%, an expected replication rate of 12.4%, and a Sorić false-discovery estimate of 83.8%. These results suggest a literature with very low evidentiary value, heavy selection, and many likely false positives. This evidence does not mean that social priming effects do not exist, but it shows that two decades of research that claim to show the effect have failed to do so.

Albarracín and colleagues later published a new and broader meta-analysis, Dai et al. (2023), again arguing that priming effects on behavior are real. Once more, they did not use powerful tools to correct for publication bias. They did report that new pre-registered studies failed to show the effect, but then draw on the old literature that lacks credibility to assure readers that social priming is real.

With the help of an undergraduate student, who prefers to remain anonymous, I downloaded the articles used in Dai et al.’s (2023) meta-analysis and analyzed the results with z-curve. I used ChatGPT to extract test value and to distinguish focal tests that were used to claim evidence of social priming in abstracts from other statistical tests. A comparison of focal and non-focal tests shows that focal tests nearly always show a significant result. As this is impossible with modest power, this finding confirms selection bias (Sotola, 2022).

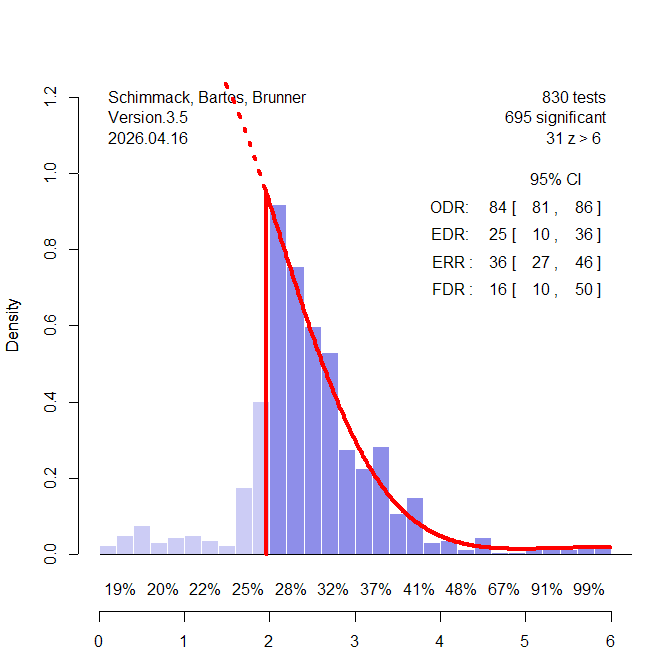

Figure 1 shows the z-curve plot for the focal tests.

The results are more favorable than Sotola’s results for the earlier meta-analysis. The EDR is 25% rather than 6%. However, the 95% confidence interval has a lower limit of only 10% (10 significant results for every 100 tests). A discovery rate of 10% still implies a false positie risk of 50%. Optimists may point out that a true discovery is even higher, and that social priming works most of the time. However, all of these studies used different stimuli and procedures. So, it is not clear which studies produce real effects and which ones do not.

One way to deal with uncertainty is to focus on the strength of evidence of individual studies. True effects are likely to produce larger z-values. Z-curve shows the average replication rates (average power) for different levels of evidence. With just significant results (2-2.5), replicability is low (28%) and false positives are likely. Power reaches acceptable levels (80% or more) only with z-values of 5 or more, but the plot shows that these studies are very rare. The best bet of replicable effects comes from the 31 z-values greater than 6. However, until one of these effects has been demonstrated to be real, evidence of social priming effects and the conditions that make these effects smaller or larger remains elusive.

In conclusion, Albarracin illustrates the problem of an incentive structure in academia that make it less probable for this research to guide discovery and explanation of true effects. At mid-career it is difficult for academics to switch gears and start a new paradigm or join a different one. The incentive is to use reputation and status to keep playing the game until retirement. While this decision is rational for the individual academic, it is harmful to science and the general public. It is therefore important to find ways to end paradigms and to retrain academics so that they can actually do productive research.

Just like addictions, the academics themselves are the last one to realize the problem. As Feynan pointed out motivated biases can turn experts into fools because they are unable to question the foundations of their paradigms.

The main advantage of AI is not that it is smarter than humans. The key advantage is that its existence does not depend on certain beliefs to be true or not. Once that is no longer true, it will kill us or keep us as pets. Until then, AI is more trustworthy than so called experts who are often just academics who are unable to face the truth that they spend a lot of time chasing a bad idea. These prisoners of paradigms are sad examples of human irrationality. The problem is not that they made a mistake, we all do. The problem is their inability to recognize their mistakes, and that is the biggest mistake of them all.