I am all in favor of open science and a critic of closed pre-publication peer review. The downside of open communication is that there is no quality control, and internet searches will amplify misinformation. This is the case with Erik van Zwet’s critique of z-curve. Even though I addressed his criticisms in the comment section, search engines, like humans, do not scroll to the end and process all information. I have even addressed concerns about z-curve 2.0 by improving z-curve 3.0 to handle edge cases like the one van Zwet used to cast doubt on z-curve’s performance in general. In science, facts trump visibility. Z-curve has been validated with many simulations across a wide range of scenarios and works well even with just 50 significant z-values. For more information, check out the Replication Index blog or the FAQ about z-curve page.

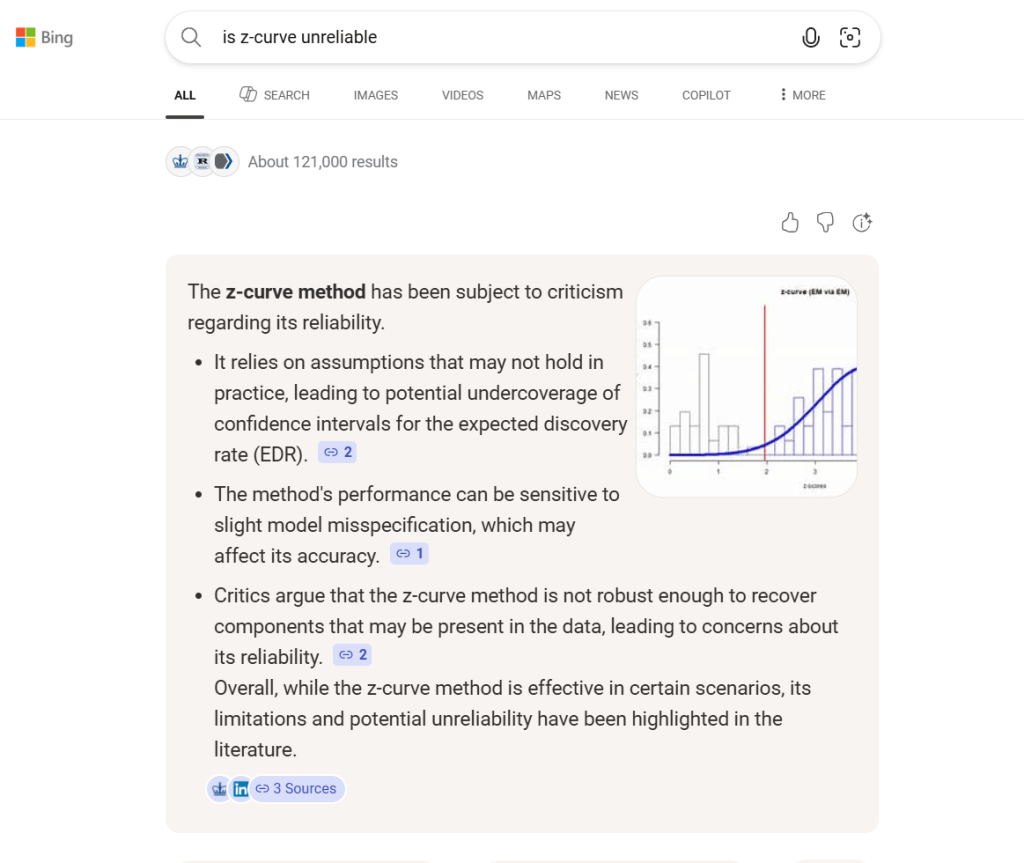

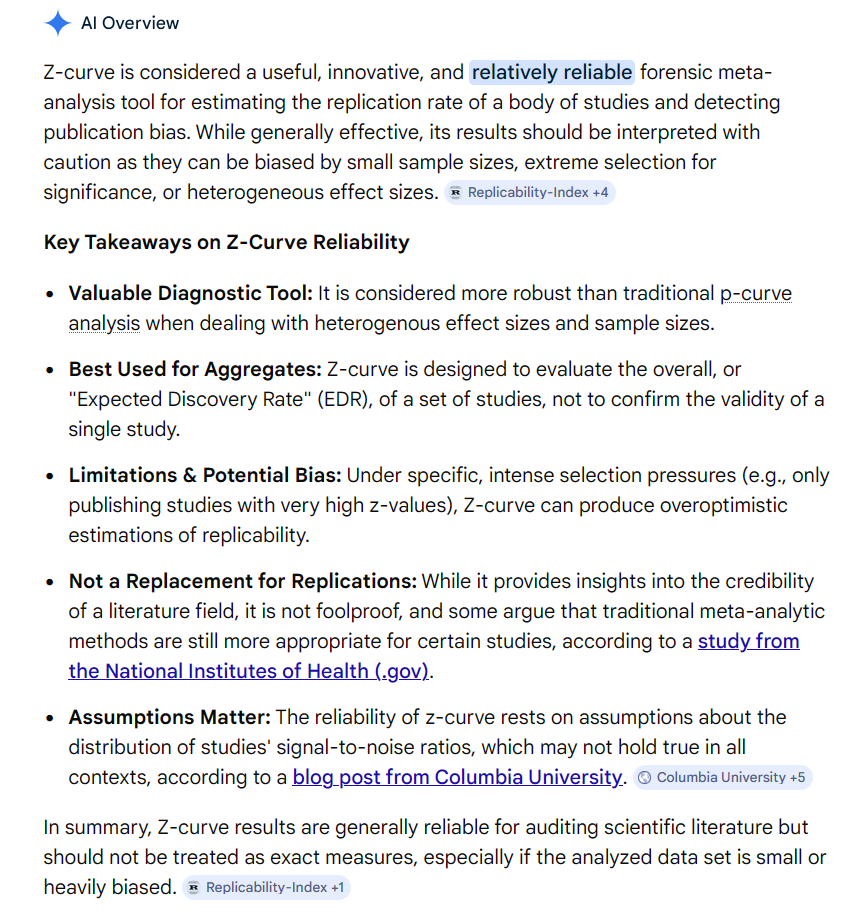

The bias in the Bing (AI) summary is evident when we compare it to the Google search summary. The Google summary still makes a false claim about assumptions, based on Erik van Zwet’s blog post, but it avoids dismissing a method on the basis of a single edge case that was easy to address and is no longer of concern in the new z-curve 3.0. In short, don’t trust the first generic response of AI. Use AI to probe arguments.