Chapter 3 shows the use of z-curve.3.0 with the Open Science Collaboration Reproducibility Project (Science, 2015) p-values of the replication studies. The r-code can be found on my github page.

zcurve3.0/Tutorial.R.Script.Chapter3.R at main · UlrichSchimmack/zcurve3.0

Here are the links to Chapter 1 and links to the other chapters.

Introduction to Chapter 3

Z-curve was developed to examine the credibility (publication bias, replicability, false positive risk) of articles that report statistical results, typically null-hypothesis significance tests. The need for such a tool became apparent in the early 2010s, when concerns about replication failures and high false positive risks led to a crisis of confidence in published results.

Another remarkable investigation of the credibility of psychological science was the Reproducibility Project of the Open Science Collaboration (Science, 2015). Nearly 100 results published in in three influential journals were replicated as closely as possible. The key finding that has been cited in thousands of articles was that the percentage of significant results in the replication studies was much lower, and that effect sizes were much smaller as well.

In line with the emphasis on transparence, the project also made the data from this study openly available. The data provide a valuable learning tool to illustrate the use of z-curve.3.0. The data from this project are unique in that z-curve results based on the original results can be compared to the results of the replication studies. Normally, the “truth” is unknown or simulated with simulation studies. Here, the replication studies serve as an approximation of the truth. For example, the replicability estimate based on the p-values of the original studies can be compared to the actual outcome of the replication studies. Chapter 2 analyzed the original data. Chapter 3 analyzes the replication data. The replication data are unusual in that results were reported independent of the outcome of a study. This means that there should be no bias against non-significant results. Z-curve tests whether this is actually the case. The most interesting application of z-curve is to estimate the false positive risk of the original studies based on the replication data.

3.0 First Examination of the Z-curve Plot

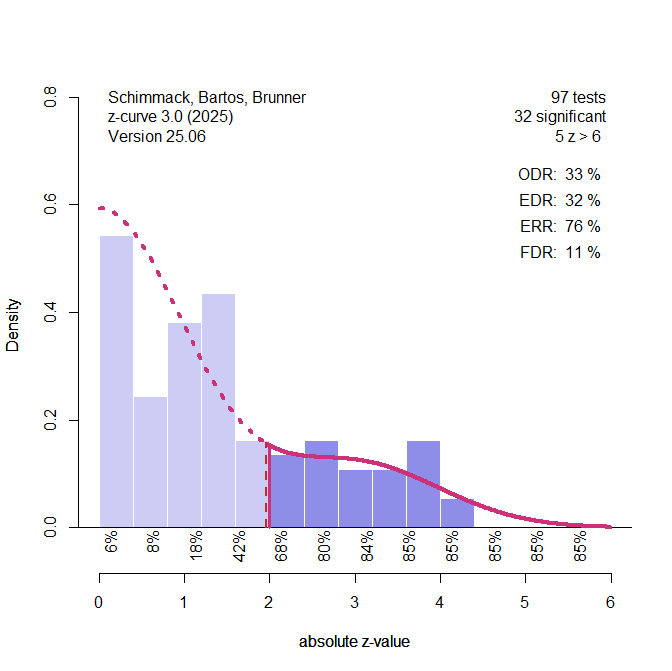

The first step is to run a quick z-curve analysis with the fast density method and no bootstrap and then change parameters to adjust the y-axis and the width of the histogram bars to make the figure visually appealing.

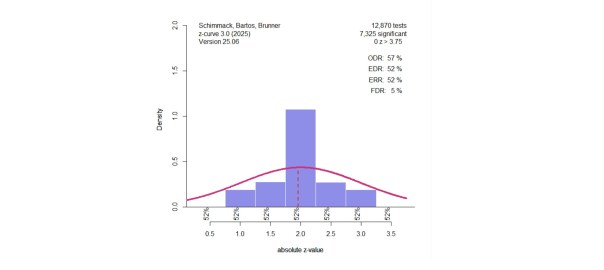

Visual inspection of the plot suggests that there is no selection bias. This is confirmed by the comparison of the observed discovery rate (ODR, i.e., the percentage of significant results) and the expected discovery rate (EDR, i.e., the percentage of significant results predicted by the model). The ODR is 33% and the EDR is 32%. The ODR is even lower than the EDR. More important is that the two estimates are practically identical. Even if there were a small statistically significant difference in a large sample, selection bias would be small and not affect the results.

Z-curve.3 provides a simple test of bias that showed evidence of bias in the original studies (Chapter 2). This test assumes that there is no bias (the null-hypothesis). Under this assumption, z-curve is fitted to all z-values, not just significant ones. Bias will produce too many just significant results. The default range for just significant results is 2 to 2.6 (about p = .05 to .01).

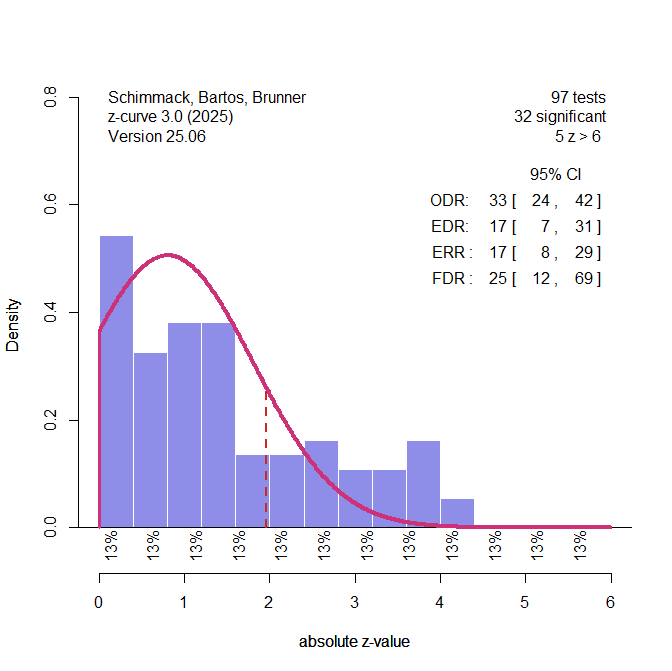

Figure 2 shows that the model fits the data well. The test of excessive just significant results is not significant, p = .7082. Another test examines whether p-hacking is present. It is hardly necessary to run this test with these data, but I am presenting the results anyways because this test was significant for the original results.

To test for p-hacking, we fit z-curve to the “really” significant results (z > 6). The model predicts the distribution of “just” significant results. This time evidence of too many significant results would suggest that p-hacking added just significant results.

Figure 3 shows the results. The test is also not significant, p = .6789. Thus, there is no evidence of bias in these data.

The next test examines heterogeneity of power. With some successful and many failed replications, it is plausible that the studies are heterogeneous. For non-significant results the true power is likely to be low, or the null-hypothesis may even be true. However, significant results in a replication study imply that the null hypothesis is unlikely to be true and that the study had a low false negative risk (i.e., adequate power).

Surprisingly, the heterogeneity test of the original studies did not show evidence of heterogeneity. This may be due to the problem of estimating heterogeneity with strong bias. Here the test has more power because we can fit the model to all results. This increases the set of studies from ~30 to ~ 100.

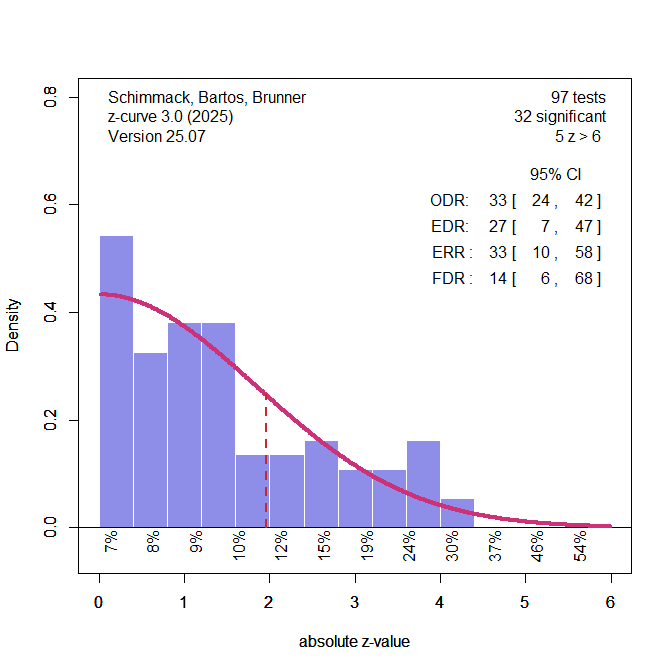

To illustrate the heterogeneity test, I generated z-curve plots that are not included in the typical heterogeneity test. The first plot shows the results for a model that assumes homogeneity. As a result, we have only one component with a fixed SD of 1, while the mean estimates the power of all studies, assuming equal power for all studies.

We see that the model fails to predict the z-values of significant results.

The second model freely estimates the SD of the component. Sampling error alone produces a standard deviation of 1. Values above 1 suggest that the non-central z-values of studies also vary. The test of heterogeneity uses the 95%CI of the SD estimates. If the lower limit of the CI is above 1, the data are heterogeneous. The heterogeneity of the non-central z-values is simply (SD – 1).

The figure shows that the model better fits the significant results. The estimated SD is 1.84 and the 95% confidence interval is 1.06 to 2.21. Thus, there is evidence of heterogeneity.

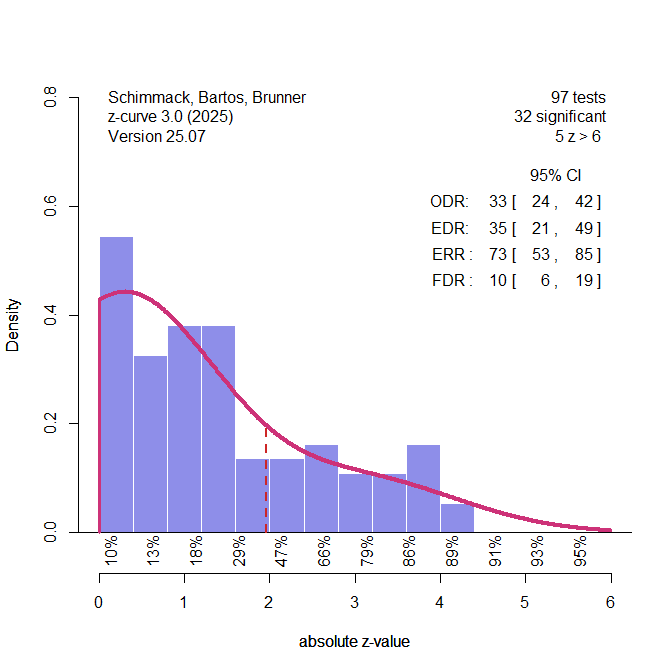

The other model does not make assumptions about the distribution of the non-central z-values. This is the advantage of z-curve over other models. To test heterogeneity, a z-curve model with two free components and fixed SD of 1 is fitted to the data. If the model fits better than the model with a single component, there is evidence of heterogeneity. Moreover, model fit is compared to the fit of the model with one component and free SD.

The two-component model suggests that there are two populations of studies. 73% of studies have a population mean of 0.45, which corresponds to 7% power. The remaining 27% of studies have a population mean of 3.28, which corresponds to 91% power.

The comparison of model fit is inconclusive. The 95%CI ranges from -.001 to .014. Using a more liberal criterion, however, would favor the two-component model, 90%CI = .000 to .012. Another consideration is that the free component model does not make assumptions that could bias results. If both models produced the same results, it would not be important to pick one model over the other. However, the two-component model has a much higher estimate of the ERR. To test this prediction, it would be necessary to replicate the replication studies again. The normal distribution model predicts only a 33% success rate, whereas the 2-component model predicts a success rate of 76%. The truth is unknown, but I believe that the two-component model is closer to the truth.

The final model is estimated with the “EM” algorithm implemented in the z-curve package with the default of seven fixed components at z = 0 to 6. The results are similar to the previous results.

The results suggest that there is no bias in the replication studies and that the average power of all original studies was 35%. The 95% confidence interval allows for 49% significant results, but not 90%, which was the success rate of the original studies.

The EDR is used to estimate the false positive risk (FDR). However, the FDR of 10% tells us only that 10% of the significant results in the replication studies may be false positive results. The more interesting question is how many of the original studies could be false positive results. The analysis of the original studies was inconclusive because the confidence interval ranged from 3% to 88%.

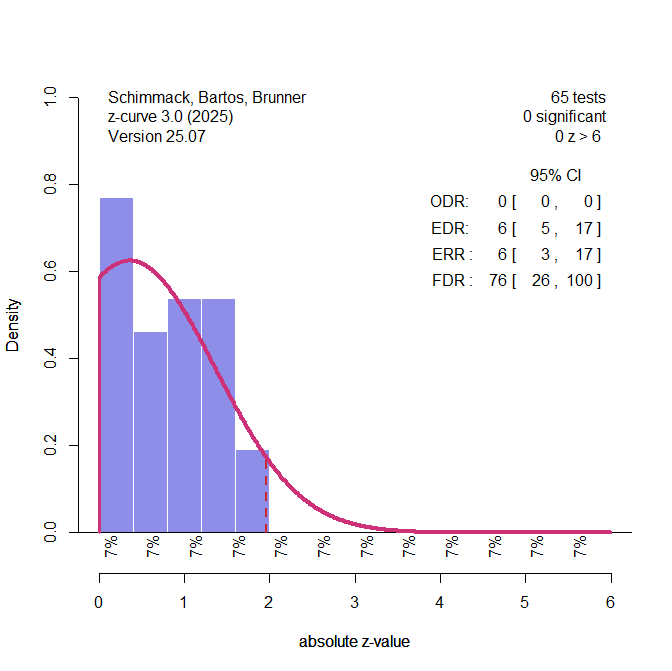

To use the replication data to estimate the FDR of the original studies, we can limit the analysis to the non-significant result and estimate the true average power of the non-significant results. This estimate can then be used to estimate the FDR of studies with non-significant results.

The estimated EDR of non-significant replication studies is 6%, just 1 percentage point over 5%, which is expected if all results were false positives in the original studies. This implies a high FDR of 76% and the 95% confidence interval includes 100%. The lower limit is 27%. Therefore, we cannot conclusively say that most of these studies tested a true null-hypothesis, but we can say that they provided no evidence against the null-hypothesis, despite significant results in the original studies.

The replication failures in the OSC reproducibility project have been discussed in many articles, and some articles correctly pointed out that replication failures do not show that the original result was a false positive. This is true, but the current results suggest that the evidence of these studies is so weak that they do not rule out this possibility.

1 thought on “Z-Curve.3.0 Tutorial: Chapter 3”