Consumer psychology is an applied branch of social psychology that uses insights from the parent discipline to understand consumers’ behaviors. Although there is cross-fertilization and authors may publish in both basic and applied journals, it is its own field in psychology with its own journals. As a result, it has escaped the close attention that has been paid to the replicability of studies published in mainstream social psychology journals (see Schimmack, 2020, for a review). However, given the similarity in theories and research practices, it is fair to ask why consumer research should be more replicable and credible than basic social psychology. This question was indirectly addressed in a dialogue about the merits of pre-registration that was published in the Journal of Consumer Psychology (Krishna, 2021).

Open science proponents advocate pre-registration to increase the credibility of published results. The main concern is that researchers can use questionable research practices (QRPs) to produce significant results (John et al., 2012). Pre-registration of analysis plans would reduce the chances of using QRPs and increase the chances of a non-significant result. This would make the reporting of significant results more valuable because significance was produced by the data and not by the creativity of the data analyst.

In my opinion, the focus on pre-registration in the dialogue is misguided. As Pham and Oh (2021) point out, pre-registration would not be necessary if there were no problem that needs to be fixed. Thus, a proper assessment of the replicability and credibility of consumer research should inform discussions about pre-registration.

The problem is that the past decade has seen more articles talking about replications than actual replication studies, especially outside of social psychology. Thus, most of the discussion about actual and ideal research practices occurs without facts about the status quo. How often do consumer psychologists use questionable research practices? How many published results are likely to replicate? What is the typical statistical power of studies in consumer psychology? What is the false positive risk?

Rather than writing another meta-psychological article that is based on paranoid or wishful thinking, I would like to add to the discussion by providing some facts about the health of consumer psychology.

Do Consumer Psychologists Use Questionable Research Practices?

John et al. (2012) conducted a survey study to examine the use of questionable research practices. They found that respondents admitted to using these practices and that they did not consider these practices to be wrong. In 2021, however, nobody is defending the use of questionable practices that can inflate the risk of false positive results and hide replication failures. Consumer psychologists could have conducted an internal survey to find out how prevalent these practices are among consumer psychologists. However, Pham and Oh (2021) do not present any evidence about the use of QRPs by consumer psychologists. Instead, they cite a survey among German social psychologists to suggest that QRPs may not be a big problem in consumer psychology. Below, I will show that QRPs are a big problem in consumer psychology and that consumer psychologists have done nothing over the past decade to curb the use of these practices.

Are Studies in Consumer Psychology Adequately Powered?

Concerns about low statistical power go back to the 1960s (Cohen, 1961; Maxwell, 2004; Schimmack, 2012; Sedlmeier & Gigerenzer, 1989; Smaldino & McElreath, 2016). Tversky and Kahneman (1971) refused “to believe that a serious investigator will knowingly accept a .50 risk of failing to confirm a valid research hypothesis” (p. 110). Yet, results from the reproducibility project suggest that social psychologists conduct studies with less than 50% power all the time (Open Science Collaboration, 2015). It is not clear why we should expect higher power from consumer research. More concerning is that Pham and Oh (2021) do not even mention low power as a potential problem for consumer psychology. One advantage of pre-registration is that researchers are forced to think ahead of time about the sample size that is required to have a good chance to show the desired outcome, assuming the theory is right. More than 20 years ago, the APA task force on statistical inference recommended a priori power analysis, but researchers continued to conduct underpowered studies. Pre-registration, however, would not be necessary if consumer psychologists already conducted studies with adequate power. Here I show that power in consumer psychology is unacceptably low and has not increased over the past decade.
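To make the idea of an a priori power analysis concrete, here is a minimal sketch for a two-group comparison of means. It uses a normal approximation rather than the exact t-distribution, and the effect size of d = 0.5, the function names, and the 80% target are illustrative assumptions, not values taken from the consumer psychology literature.

```python
import math
from statistics import NormalDist

STD_NORMAL = NormalDist()  # standard normal distribution


def n_per_group(d, alpha=0.05, power=0.80):
    """Approximate sample size per group for a two-sided, two-sample
    comparison of means with standardized effect size d
    (normal approximation; an exact t-test needs slightly more)."""
    z_alpha = STD_NORMAL.inv_cdf(1 - alpha / 2)
    z_power = STD_NORMAL.inv_cdf(power)
    return math.ceil(2 * ((z_alpha + z_power) / d) ** 2)


def approx_power(d, n, alpha=0.05):
    """Approximate power of the same design with n participants per group."""
    ncp = d * math.sqrt(n / 2)  # expected z-score of the test statistic
    crit = STD_NORMAL.inv_cdf(1 - alpha / 2)
    return (1 - STD_NORMAL.cdf(crit - ncp)) + STD_NORMAL.cdf(-crit - ncp)


if __name__ == "__main__":
    print(n_per_group(0.5))                   # per-group n for 80% power
    print(round(approx_power(0.5, 31), 2))    # about .50 with 31 per group
```

Under these assumptions, roughly twice as many participants per group are needed for 80% power as for the 50% power that Tversky and Kahneman considered unacceptable.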

False Positive Risk

Pham and Oh note that Simmons, Nelson, and Simonsohn’s (2011) influential article relied exclusively on simulations and speculations and suggest that the fear of massive p-hacking may be unfounded: “Whereas Simmons et al. (2011) highly influential computer simulations point to massive distortions of test statistics when QRPs are used, recent empirical estimates of the actual impact of self-serving analyses suggest more modest degrees of distortion of reported test statistics in recent consumer studies (see Krefeld-Schwalb & Scheibehenne, 2020).” Here I present empirical analyses to estimate the false discovery risk in consumer psychology.

Data

The data are part of a larger project that examines research practices in psychology over the past decade. For this purpose, my research team and I downloaded all articles from 2010 to 2020 published in 120 psychology journals that cover a broad range of disciplines. Four journals represent research in consumer psychology, namely the Journal of Consumer Behavior, the Journal of Consumer Psychology, the Journal of Consumer Research, and Psychology and Marketing. The articles were converted into text files and the text files were searched for test statistics. All F, t, and z-tests were used, but most test statistics were F and t tests. There were 2,304 tests for Journal of Consumer Behavior, 8,940 for Journal of Consumer Psychology, 10,521 for Journal of Consumer Research, and 5,913 for Psychology and Marketing.
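The extraction step can be sketched roughly as follows. This is not the code used for the project; the regular expression, the function names, and the sample sentence are hypothetical. The last function converts a two-sided p-value into an absolute z-score, which is one common way to put different test statistics on a single scale. Turning t and F values into p-values requires their sampling distributions, which are not in the Python standard library and are left out here.

```python
import re
from statistics import NormalDist

# Hypothetical pattern: matches reports such as "F(3,36) = 9.63",
# "t(48) = 2.10", or "z = 2.31". Real articles need more variants.
TEST_PATTERN = re.compile(
    r"\b(?P<kind>[Ftz])\s*"
    r"(?:\(\s*(?P<df>\d+(?:\s*,\s*\d+)?)\s*\))?"
    r"\s*=\s*(?P<value>-?\d*\.?\d+)"
)


def extract_tests(text):
    """Return (test type, degrees of freedom, value) for each match."""
    results = []
    for match in TEST_PATTERN.finditer(text):
        df = match.group("df")
        dfs = tuple(int(part) for part in df.split(",")) if df else ()
        results.append((match.group("kind"), dfs, float(match.group("value"))))
    return results


def p_to_z(p_two_sided):
    """Convert a two-sided p-value into an absolute z-score."""
    return NormalDist().inv_cdf(1 - p_two_sided / 2)


if __name__ == "__main__":
    sample = "The effect was significant, F(3,36) = 9.63, and t(48) = 2.10; z = 2.31."
    for test in extract_tests(sample):
        print(test)
    print(round(p_to_z(0.05), 2))  # the significance threshold on the z scale
```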

Results

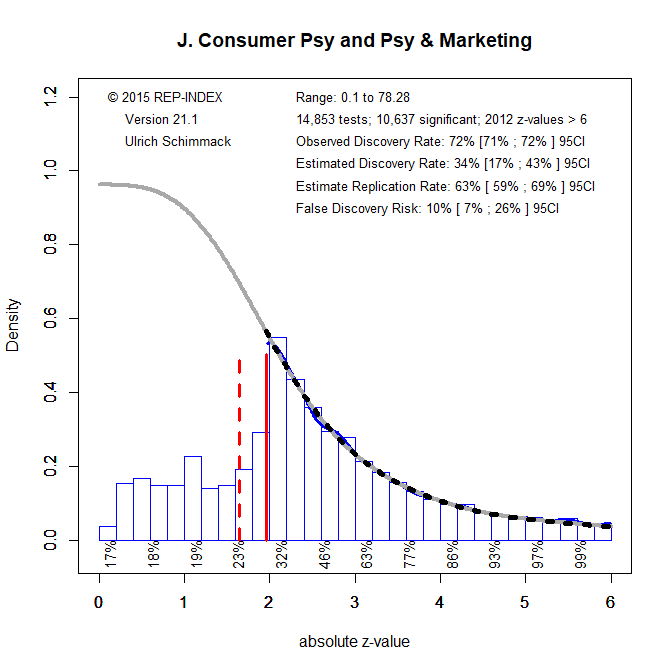

I first conducted z-curve analyses for each journal and year separately. The 40 results were analyzed with year as a continuous and journal as a categorical predictor variable. No time trends were significant, but the main effect of journal on the expected replication rate was significant, F(3,36) = 9.63, p < .001. Inspection of the means showed higher values for the Journal of Consumer Psychology and Psychology & Marketing than for the other two journals. No other effects were significant. Therefore, I combined the data into two sets: the Journal of Consumer Psychology with Psychology & Marketing, and the Journal of Consumer Behavior with the Journal of Consumer Research.

Figure 1 shows the z-curve analysis for the first set of journals. The observed discovery rate (ODR) is simply the percentage of results that are significant. Out of the 14,853 tests, 10,636 were significant, which yields an ODR of 72%. To examine the influence of questionable research practices, the ODR can be compared to the estimated discovery rate (EDR). The EDR is an estimate that is based on a finite mixture model that is fitted to the distribution of the significant test statistics. Figure 1 shows that the fitted grey curve closely matches the observed distribution of test statistics, which are all converted into z-scores. Figure 1 also shows the projected distribution that is expected for non-significant results. Contrary to the predicted distribution, observed non-significant results sharply drop off at the level of significance (z = 1.96). This pattern provides visual evidence that non-significant results do not follow a sampling distribution. The EDR is the area under the curve for the significant values relative to the total distribution. The EDR is only 34%. The 95%CI of the EDR can be used to test statistical significance. The ODR of 72% is well outside the 95% confidence interval of the EDR that ranges from 17% to 34%. Thus, there is strong evidence that consumer researchers use QRPs and publish too many significant results.
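The arithmetic behind the ODR and its comparison with the EDR interval can be checked in a few lines. The counts and the interval are the ones reported in the text; the variable names are my own, and the EDR itself comes from the z-curve model, which is not reproduced here.

```python
# Test counts reported in the Data section for the first set of journals.
tests_jcp = 8_940            # Journal of Consumer Psychology
tests_pm = 5_913             # Psychology and Marketing
total_tests = tests_jcp + tests_pm

significant_tests = 10_636
odr = significant_tests / total_tests  # observed discovery rate

# 95% confidence interval of the EDR as reported in the text.
edr_ci = (0.17, 0.34)
odr_outside_ci = not (edr_ci[0] <= odr <= edr_ci[1])

print(f"total tests = {total_tests}")
print(f"ODR = {odr:.0%}")
print(f"ODR outside EDR interval: {odr_outside_ci}")
```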

The EDR can also be used to assess the risk of publishing false positive results, that is, significant results without a true population effect. Using a formula from Soric (1989), we can use the EDR to estimate the maximum percentage of false positive results. As the EDR decreases, the false discovery risk (FDR) increases. With an EDR of 34%, the FDR is 10%, with a 95% confidence interval ranging from 7% to 26%. Thus, the present results do not suggest that most results in consumer psychology journals are false positives, as some meta-scientists suggested (Ioannidis, 2005; Simmons et al., 2011).
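Soric's formula for the maximum false discovery risk is FDR = (1/EDR - 1) × α/(1 - α). A small sketch, with a function name of my own choosing and α = .05 assumed, reproduces the point estimate and the upper limit reported above, as well as the value for an EDR of 26%.

```python
def soric_fdr(edr, alpha=0.05):
    """Maximum false discovery risk implied by a discovery rate (Soric, 1989)."""
    return (1 / edr - 1) * alpha / (1 - alpha)


if __name__ == "__main__":
    for edr in (0.34, 0.17, 0.26):
        print(f"EDR = {edr:.0%} -> maximum FDR = {soric_fdr(edr):.0%}")
```

Because the formula gives an upper bound, the actual percentage of false positives may be lower than these values.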

It is more difficult to assess the replicability of results published in these two journals. On the one hand, z-curve provides an estimate of the expected replication rate (ERR), that is, the probability that a significant result produces a significant result again in an exact replication study (Brunner & Schimmack, 2020). The ERR is higher than the EDR because studies that produced a significant result have higher power than studies that did not produce a significant result. The ERR of 63% suggests that more than 50% of significant results can be successfully replicated. However, a comparison of the ERR with the success rate in actual replication studies showed that the ERR overestimates actual replication rates (Brunner & Schimmack, 2020). There are a number of reasons for this discrepancy. One reason is that replication studies in psychology are never exact replications and that regression to the mean lowers the chances of reproducing the same effect size in a replication study. In social psychology, the EDR is actually a better predictor of the actual success rate. Thus, the present results suggest that actual replication studies in consumer psychology are likely to produce as many replication failures as studies in social psychology have (Schimmack, 2020).
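A toy example may help to show why the ERR is higher than the EDR. Assume, purely for illustration, that half of all studies have 20% power and the other half have 80% power, and that a replication counts as a success whenever it is significant. The discovery rate is the average power of all studies, whereas the replication rate is the average power of the studies that were significant, which weights each study by its own power. The power values and the function name are hypothetical.

```python
def edr_and_err(powers):
    """Discovery rate and replication rate for studies with known power.

    EDR: mean power across all studies that were conducted.
    ERR: mean power across significant results only, so each study
    is weighted by its probability of being significant.
    """
    edr = sum(powers) / len(powers)
    err = sum(p * p for p in powers) / sum(powers)
    return edr, err


if __name__ == "__main__":
    edr, err = edr_and_err([0.2, 0.8])
    print(f"EDR = {edr:.0%}, ERR = {err:.0%}")
```

In this example the high-powered studies contribute four times as many significant results as the low-powered ones, which pulls the ERR above the EDR.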

Figure 2 shows the results for the Journal of Consumer Behavior and the Journal of Consumer Research.

The results are even worse. The ODR of 73% is above the EDR of 26% and well outside the 95%CI of the EDR. The EDR of 26% implies a false discovery risk of 15%, 95%CI =

Conclusion

The present results show that consumer psychology is plagued by the same problems that have produced replication failures in social psychology. Given the similarities between consumer psychology and social psychology, it is not surprising that the two disciplines are alike. Researchers conduct underpowered studies and use QRPs to report inflated success rates. These illusory results cannot be replicated, and it is unclear which statistically significant results reveal effects that have practical significance and which ones are mere false positives. To make matters worse, whereas social psychologists have responded to awareness of these problems by increasing the power of their studies and by implementing changes in their research practices, z-curve analyses of consumer psychology show no improvement in research practices over the past decade. In light of this disappointing trend, it is disconcerting to read an article that suggests improvements in consumer psychology are not needed and that everything is well (Pham and Oh, 2021). I demonstrated with hard data and objective analysis that this assessment is false. It is time for consumer psychologists to face reality and to follow in the footsteps of social psychologists to increase the credibility of their science. While pre-registration may be optional, increasing power is not.

Huh. Just intuitively I would have predicted that JCR would score a bit worse than JCP, and there it is. A prominent consumer researcher I know says she doesn’t even bother submitting stuff to JCR anymore because they reject everything that doesn’t have ‘perfect data’

Maybe honesty and consumer psychology don’t go together. I mean how much marketing is aimed at selling stuff to people who don’t need it. Opportunity to create a new consumer psychology science. Honest research that helps consumers to make better decisions to improve their well-being.

Nope, that’s sales. Marketing is about figuring out what people want, and how to give it to them (marketing and sales people often don’t like each other much in any given organization). The better critique of marketing (not original to me) is that it has two principles: 1) Give people what they want, and 2) who cares what they want (i.e., if it’s any good for them).

And even then, that is a critique of marketing practitioners. JCR and to a marginally lesser extent JCP are largely about academics peddling very tidy stories about some tiny (but bigged-up) mechanism, with a cute counter-intuitive twist, and a couple of conceptual replications that all have perfect data. Their reject rates are sky high, and there are very few back-up mid-range journals that are acceptable to hiring and promotion boards at R1 schools, so there’s intense competition to perfect this formula to get in. It’s a very familiar recipe for exactly what your data observe here.

Also, FWIW, there *is* a big movement for what’s called “transformative consumer research” which is exactly the kind of do-gooding you ask for. Has its own conference and everything.

This seems way, way, way overly optimistic compared to the actual current rate of success in direct replications in consumer psychology: https://www.openmktg.org/research/replications What do you suppose could be causing the discrepancy? With a current replication rate of 8.7% (as of November 26, 2021) we are way outside of the CI you’ve listed for expected replicability at JCP and Psych & Marketing.

Dear Aaron,

thank you for bringing the actual replication rate to my attention. A simple explanation for the discrepancy is that this analysis is based on all published test values. The power of focal tests is often lower when we compare focal and non-focal tests. Another explanation is that the expected replication rate assumes experiments can be replicated exactly. Any deviation is likely to further decrease replication success rates. This is also the case for the Open Science Collaboration project. We find that the Expected Discovery Rate is often a better predictor of actual replication outcomes. This would also be the case here. Finally, selection of questionable findings may further decrease the outcome of replication studies. It would be interesting to compute a z-curve for the original studies that were replicated.

Thanks Uli.

Thanks for your reply.

I just remembered that 2 of the studies didn’t replicate because of confound issues. One had an uneven drop out rate that seemed to be causing the effect and the other had shady instructions aimed at only the treatment group to basically get them to do what they wanted (both were Data Colada replications). If we counted those as significant (not because they worked but because we’re acknowledging the limitations of forensic analysis of p-values), that would bring us up to a 17% replication rate.

I would be very interested to see a z-curve of the original papers as well. I will try to get them to you soon. I think my RA can help out on that next week. Thanks!

Great to see that you are planning to do a z-curve of the original studies. May I suggest coding all studies in the original articles, not only the replicated ones? This makes it possible to examine whether the replicated study was representative or weak. It can also increase the sample size to get a better point estimate of the EDR, which unfortunately has a wide confidence interval in small samples.

LOL I was going to be lazy and just send them to you but we should do it ourselves 🙂 It might just take some time to get to it. Thanks for the advice. It’s very related to our other coding project in which we’re looking at conceptual replications in the field of marketing (which have nearly all worked). I wrote a post about that project: https://blog.openmktg.org/2021/08/feedback-request-how-should-i-compare.html Having gone through a lot of them, there are many that appear not to be p-hacked so there’s a lot more nuance than I expected.

I am busy at the moment, but let’s keep in touch about coding and z-curve analysis of marketing research.