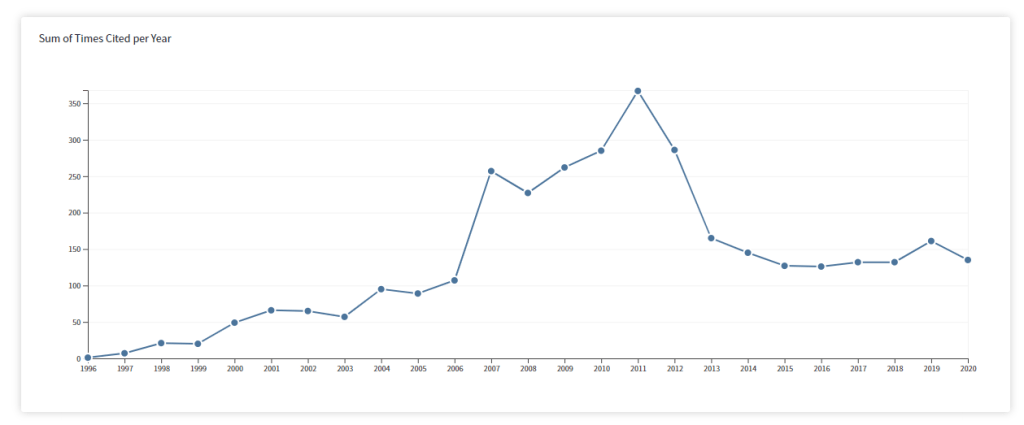

One popular topic in social psychology is persuasion. How can we make people believe something and change their attitudes? A number of variables influence how persuasive a message is. One of them is source credibility. When Donald Trump claims that he won the election, we may want to check what others say. If even Fox News calls the election for Biden, we may not trust Donald Trump and ignore his claim. Similarly, scientists respond to the reputation of scientists. For example, in 2011 it was revealed that Diederik Stapel faked the data for many of his articles. The figure below shows that other scientists responded by citing these articles less.

In 2011, it also became apparent that social psychologists used other practices to publish results that cannot be replicated (OSC, 2015). These practices are known as questionable research practices, but unlike fraud they are not banned and articles that reported these results have not been retracted. As a result, social psychologists continue to cite articles with false evidence that present misleading information.

One literature that has lost credibility is research on implicit cognitions. Early on, replication failures of implicit priming undermined the assumption that social behavior is often elicited by stimuli without awareness (Kahneman, 2012). Now, research with implicit bias measures has come under scrutiny. A main problem with implicit bias measures is that they have low convergent validity with each other (Schimmack, 2019). As most of the variance in these measures is measurement error, one can only expect small effects of experimental manipulations. This means that studies with implicit measures often have low statistical power to produce replicable effects. Nevertheless, articles that use implicit measures report mostly successful results. This can only be explained with questionable research practices. Therefore it is currently unclear whether there are any robust and replicable results in the literature with implicit bias measures.

This is true even when an article reports several replication studies (Schimmack, 2012). Although multiple replications give the impression that a result is credible, questionable research practices undermine the trustworthiness of results. Fortunately, it is possible to examine the credibility of published results with forensic statistical tools that can reveal the use of questionable practices. Here I use these tools to examine the credibility of a five-study article that claimed implicit measures are influenced by the credibility of a source.

Consider the Source: Persuasion of Implicit Evaluations Is Moderated by Source Credibility

The article by Colin Tucker Smith, Jan De Houwer, and Brian A. Nosek reports five experimental manipulations of attitudes towards a consumer product.

Study 1

“Supporting the central hypothesis of the study, source expertise significantly affected implicit preferences; participants showed a stronger implicit preference for Soltate when that information was presented by an individual “High” in expertise (M = 0.54, SD = 0.36) than “Low” in expertise (M = 0.42, SD = 0.41), t(195) = 2.24, p = .026, d = .32”

Study 2

Participants indicated a stronger implicit preference for Soltate when that information was presented by an individual “High” in expertise (M = 0.60, SD = 0.33) than “Low” in expertise (M = 0.48, SD = 0.40), t(194) = 2.18, p = .031, d = 0.31

Study 3

Implicit preferences were significantly affected by manipulating the level of source trustworthiness; participants indicated a stronger implicit preference for Soltate when that information was presented by an individual “High” in trustworthiness (M = 0.52, SD = 0.34) than “Low” in trustworthiness (M = 0.42, SD = 0.39), t(280) = 2.40, p = .017, d = 0.29.

Study 4

Replicating Study 3, manipulating the level of source trustworthiness significantly affected implicit preferences as measured by the IAT. Participants implicitly preferred Soltate more when presented by an individual “High” in trustworthiness (M = 0.51, SD = 0.35) than “Low” in trustworthiness (M = 0.43, SD = 0.43), t(419) = 2.17, p = .031, d = 0.21.

Study 5

There was a main effect of credibility, F(1, 549) = 4.43, p = .036, such that participants implicitly preferred Soltate more when presented by a source high in credibility (M = 0.62, SD = 0.36) than low in credibility (M = 0.55, SD = 0.39).

Forensic Analysis

For naive readers of social psychology results, it may look impressive that the key finding was replicated in five studies. After all, the chance of a false positive result decreases exponentially with each significant result. While a single p-value less than .05 can occur by chance in 1 out of 20 studies, five significant results can only happen by chance in 1 out of 20*20*20*20*20 = 3,200,000 attempts. So, it would seem reasonable to believe that implicit attitude measures are influenced by the credibility of the source. However, when researchers use questionable practices, a p-value less than .05 is ensured and even 9 significant results do not mean that an effect is real (Bem, 2011). So, the question is whether the researchers used questionable practices to produce their results.

To answer this question, we can examine the probability of obtaining five very similar p-values in a row, p = .026, p = .031, p = .017, p = .031, p = .036. The Test of Insufficient Variance (TIVA) converts the p-values into z-scores and compares their variance against the variance expected from sampling error alone, which is 1. The observed variance is just 0.012. The probability of this happening by chance is 1/3287. In other words, it is extremely unlikely that five independent studies would produce such similar p-values by chance.
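For readers who want to check the arithmetic, here is a minimal sketch of the TIVA computation in Python, using only the standard library. It assumes the reported p-values are two-sided and that the variance of the z-scores, multiplied by the degrees of freedom, is compared against a chi-square distribution. The function names `tiva` and `chi2_cdf` are my own for this illustration and are not taken from any published code.

```python
import math
from statistics import NormalDist, variance


def chi2_cdf(x, df):
    """Lower-tail chi-square probability, computed with the power series
    for the regularized lower incomplete gamma function."""
    if x <= 0:
        return 0.0
    a, half_x = df / 2.0, x / 2.0
    term = 1.0 / a
    total = term
    n = 0
    while term > 1e-16 * total:
        n += 1
        term *= half_x / (a + n)
        total += term
    return total * math.exp(-half_x + a * math.log(half_x) - math.lgamma(a))


def tiva(p_values):
    """Return (variance of z-scores, left-tail probability).

    Assumes two-sided p-values from independent studies."""
    z = [NormalDist().inv_cdf(1 - p / 2) for p in p_values]
    var_z = variance(z)  # sample variance (n - 1 in the denominator)
    df = len(z) - 1
    # If sampling error alone drives the z-scores, their variance is 1,
    # so var_z * df is compared against a chi-square with df degrees of freedom.
    return var_z, chi2_cdf(var_z * df, df)


p_values = [0.026, 0.031, 0.017, 0.031, 0.036]
var_z, prob = tiva(p_values)
print(f"variance of z-scores: {var_z:.3f}")
print(f"left-tail probability: {prob:.6f} (about 1 in {1 / prob:.0f})")
```

The output should land close to the variance and probability reported above. Small differences are possible because the published p-values are rounded to three decimals.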

Another test is to compute the average observed power of the studies (Schimmack, 2012), which is 60%. We can now ask how probable it is to get five significant results in a row when each study has a 60% chance of success, which is .6*.6*.6*.6*.6 = .08. This probability is also low, but not as low as the one for the previous test. The reason is that QRPs also inflate observed power. Thus, the 60% estimate is an overestimate. A rough index of inflation is simply the difference between the 100% success rate and the inflated power estimate of 60%, which is 40%. Subtracting the inflation from the observed power gives a replicability index of 20%. A value of 20% is also what is obtained in simulation studies where all studies are false positives (i.e., there is no real effect).
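The same numbers can be reproduced with a short Python sketch. It assumes two-sided p-values with alpha = .05, treats each observed z-score as if it were the true effect when computing observed power, and uses the mean of the five power estimates, as in the text. The variable names are chosen for this illustration only.

```python
from statistics import NormalDist, mean

norm = NormalDist()
crit = norm.inv_cdf(1 - 0.05 / 2)  # two-sided alpha = .05, about 1.96


def observed_power(p):
    """Power implied by a two-sided p-value, treating the observed z as the true effect.

    The negligible probability of significance in the opposite tail is ignored."""
    z = norm.inv_cdf(1 - p / 2)
    return 1 - norm.cdf(crit - z)


p_values = [0.026, 0.031, 0.017, 0.031, 0.036]
powers = [observed_power(p) for p in p_values]

mean_power = mean(powers)                     # average observed power
success_rate = 1.0                            # all five studies were significant
inflation = success_rate - mean_power         # rough index of inflation
r_index = mean_power - inflation              # replicability index
p_all_significant = mean_power ** len(p_values)

print(f"mean observed power: {mean_power:.2f}")
print(f"P(five significant results in a row): {p_all_significant:.2f}")
print(f"inflation: {inflation:.2f}, R-index: {r_index:.2f}")
```

Because the text rounds observed power to 60% before doing the remaining arithmetic, the unrounded values from this sketch can differ from the figures above by a percentage point or so.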

So, does source credibility influence implicitly measured attitudes? We do not know. At least these five studies provide no evidence for it. However, these results do provide further evidence that consumers of IAT research should consider the source. IAT researchers have a vested interest in making you believe that implicit measures can reveal something important about you that exists outside of your awareness. This gives them power to make big claims about social behavior that benefit their careers.

However, you also need to consider the source of this blog post. I have a vested interest in showing that social psychologists are full of shit. After all, who cares about bias analyses that always show there is no bias? So, who should you believe? The answer is that you should believe the data. Is it possible to get five p-values between .05 and .005 in a row? If you disregard probability theory, you can ignore this post. If you trust probability theory, you might wonder what other results in the IAT literature you can trust. In science we don’t trust people. We trust the evidence, but only after we make sure that we are presented with credible evidence. Unfortunately, this is often not the case in psychological science, even in 2020.