INTRODUCTION

Norbert Schwarz is an eminent social psychologist with an H-Index of 80 (80 articles cited more than 80 times as of January 3, 2019). Norbert Schwarz’s most cited article examined the influence of mood on life-satisfaction judgments (Schwarz & Clore, 1983). Although this article continues to be cited heavily (110 citations in 2018), numerous articles have demonstrated that the main assumption of the article (people rely on their current mood to judge their overall wellbeing) is inconsistent with the reliability and validity of life-satisfaction judgments (Eid & Diener, 2004; Schimmack & Oishi, 2005). More important, a major replication attempt failed to replicate the key finding of the original article (Yap et al., 2017).

The replication failure of Schwarz and Clore’s mood-as-information study is not surprising, given the low replication rate in social psychology in general, which has been estimated to be around 25% (OSC, 2015). The reason is that social psychologists have used questionable research practices to produce significant results, at the risk that many of these significant results are false positive results. In a ranking of the replicability of eminent social psychologists, Norbert Schwarz ranked in the bottom half (43 out of 71). It is therefore possible that other results published by Norbert Schwarz are also difficult to replicate.

EASE-OF-RETRIEVAL PARADIGM

The original article that introduced the ease-of-retrieval paradigm is Schwarz’s 5th most cited article.

The aim of the ease-of-retrieval paradigm was to distinguish between two accounts of frequency or probability judgments. One account assumes that people simply count examples that come to mind. Another account assumes that people rely on the ease with which examples come to mind.

The 3rd edition of Gilovich et al.’s textbook introduces the ease-of-retrieval paradigm as follows.

An ingenious experiment by Norbert Schwarz and his colleagues managed to untangle the two interpretations (Schwarz et al., 1991). In the guise of gathering material for a relaxation-training program, students were asked to review their lives for experiences relevant to assertiveness. The experiment involved four conditions. One group was asked to list 6 occasions when they had acted assertively, and another group was asked to list 12 such examples. A third group was asked to list 6 occasions when they had acted unassertively, and the final group was asked to list 12 such examples. The requirement to generate 6 or 12 examples of either behavior was carefully chosen; thinking of 6 examples would be easy for nearly everyone, but thinking of 12 would be extremely difficult. (p. 138).

The textbook shows a table with the mean assertiveness ratings in the four conditions. The means show a picture-perfect cross-over interaction, with no information about standard deviations or statistical significance. Assertiveness ratings are higher after recalling fewer examples of assertive behaviors and lower after recalling fewer examples of unassertive behaviors. This pattern of results suggests that participants relied on the ease of retrieving instances of assertive or unassertive behaviors from memory.

But there are reasons to believe that these textbook results are too good to be true. Sampling error alone would sometimes produce a less-than-perfect pattern of results, even if the ease-of-retrieval hypothesis is true.

Reading the original article provides the valuable information that each of the four means in the textbook is based on only 10 participants (for a total of 40 participants). Results from such small samples are nowadays considered questionable. The results section also contains the valuable information that the perfect results in Study 1 were only marginally significant; that is, the risk of a false positive result was greater than 5%.
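The role of sampling error in cells this small can be illustrated with a short simulation. The sketch below is not a reanalysis of the original data. It assumes normally distributed ratings, a hypothetical true difference of half a standard deviation between the 6-example and 12-example cells (in opposite directions for assertive and unassertive recall), and 10 participants per cell. It then counts how often the four sample means show the full cross-over pattern. The function name and all parameter values are illustrative assumptions.

```python
import random
from statistics import fmean


def simulate_crossover_rate(n_per_cell=10, effect=0.5, sd=1.0,
                            n_sims=5000, seed=1):
    """Share of simulated 2x2 studies whose cell means show the full cross-over.

    `effect` is the assumed true mean difference (in rating units) between
    the 6-example and 12-example cells; it is a hypothetical value, not an
    estimate from the original article.
    """
    rng = random.Random(seed)
    # Assumed true cell means: ease of retrieval raises assertiveness ratings
    # after 6 assertive or 12 unassertive examples, and lowers them otherwise.
    true_means = {
        ("assertive", 6): +effect / 2,
        ("assertive", 12): -effect / 2,
        ("unassertive", 6): -effect / 2,
        ("unassertive", 12): +effect / 2,
    }
    hits = 0
    for _ in range(n_sims):
        # Draw one sample of n_per_cell ratings per cell and take the mean.
        means = {
            cell: fmean(rng.gauss(mu, sd) for _ in range(n_per_cell))
            for cell, mu in true_means.items()
        }
        crossover = (
            means[("assertive", 6)] > means[("assertive", 12)]
            and means[("unassertive", 6)] < means[("unassertive", 12)]
        )
        hits += crossover
    return hits / n_sims


if __name__ == "__main__":
    print(f"Share of samples with a full cross-over: {simulate_crossover_rate():.2f}")
```

Under these assumed values, roughly three quarters of simulated studies show the full cross-over, so a sizeable minority of honest studies would show a messier pattern even though the hypothesis is true by construction.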

More important, weak statistical results such as p-values of .07 often do not replicate because sampling error will produce less than perfect results the next time.

The article reported several successful replication studies. However, this does not increase the chances that the results are credible. As studies with small samples often produce non-significant results, a series of studies should show some failures. If those failures are missing, it suggests that questionable research practices were used (Schimmack, 2012).

Study 2 replicated the finding of Study 1 with about 20 participants in each cell. The pattern of means was again picture perfect, and this time the interaction was significant, F(1, 142) = 6.35, p = .013. However, even this evidence is just significant, and results with p-values of .01 often fail to replicate.

Study 3 again replicated the interaction with fewer than 10 participants in each condition and a just significant result, F(1, 70) = 4.09, p = .030.

Given the small sample sizes, it is unlikely that three studies would produce support for the ease-of-retrieval hypothesis without any replication failures. The median probability of producing a significant result (power) is 59% for p < .05 and 70% for p < .10, and these estimates are based on effect sizes that are probably inflated. Thus, the chance of obtaining three significant results with p < .10 and 70% power is less than .70 × .70 × .70 = 34%. Maybe Schwarz and colleagues were just lucky, but maybe they also used questionable research practices, which is particularly easy in small samples (Simmons, Nelson, & Simonsohn, 2011).
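The power figures can be approximated from the p-values alone. The sketch below is one common way to do this and not necessarily the exact procedure used here: it converts each two-sided p-value into a z-score, treats that z-score as the true effect, and computes the probability of exceeding the critical value. The p-values for Studies 2 and 3 are the ones reported above. The value of .07 for Study 1 is an assumption based on the "marginally significant" description, and the function name is illustrative.

```python
from statistics import NormalDist, median

STD_NORMAL = NormalDist()  # standard normal distribution (mean 0, sd 1)


def observed_power(p_value, alpha):
    """Approximate power implied by a two-sided p-value at a given alpha.

    Uses a normal approximation and ignores the negligible probability of a
    significant result in the wrong direction.
    """
    z_obs = STD_NORMAL.inv_cdf(1 - p_value / 2)   # z-score of the observed result
    z_crit = STD_NORMAL.inv_cdf(1 - alpha / 2)    # critical z for significance
    return STD_NORMAL.cdf(z_obs - z_crit)


# Study 1: assumed p = .07; Studies 2 and 3: p-values as reported in the text.
p_values = [0.07, 0.013, 0.030]

if __name__ == "__main__":
    for alpha in (0.05, 0.10):
        med = median(observed_power(p, alpha) for p in p_values)
        # Chance that all studies succeed if each has the median power.
        joint = med ** len(p_values)
        print(f"alpha={alpha:.2f}: median power={med:.2f}, "
              f"P(all {len(p_values)} significant)={joint:.2f}")
```

With these inputs the normal approximation gives a median power close to the figures quoted above (roughly 58% at p < .05 and 70% at p < .10); small differences can arise from rounding or from using the exact test statistics instead of the p-values.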

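The Replicability Index (Schimmack, 2015) subtracts the inflation rate (the 100% success rate minus the 70% median power, or 30 percentage points) from the median power: R-Index = 70 – 30 = 40%. With a value this low, it is reasonable to expect a replication failure rather than a replication success in a replication attempt without questionable research practices (QRPs).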
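The R-Index arithmetic can be written out as a small function. This is a minimal sketch that takes the median power and the success rate as given inputs; the function name is illustrative, and the 100% success rate reflects the three reported studies all counting as significant at p < .10.

```python
def r_index(median_power, success_rate):
    """R-Index: median observed power minus the inflation rate.

    Inflation is the gap between the share of reported significant results
    and the median observed power. Both inputs are proportions in [0, 1].
    """
    inflation = success_rate - median_power
    return median_power - inflation


if __name__ == "__main__":
    # 70% median power, 3 out of 3 studies reported as significant.
    value = r_index(median_power=0.70, success_rate=1.00)
    print(f"R-Index = {value:.2f}")
```

Because inflation is subtracted from a power estimate that is itself likely too high, the index penalizes a run of uniformly successful small studies twice: once for the modest power and once for the absence of the failures that power level implies.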

A low R-Index does not mean that a theory is false or that a replication study will definitely fail. However, it does raise concern about the credibility of textbook findings that present the results of Study 1 as solid empirical evidence.

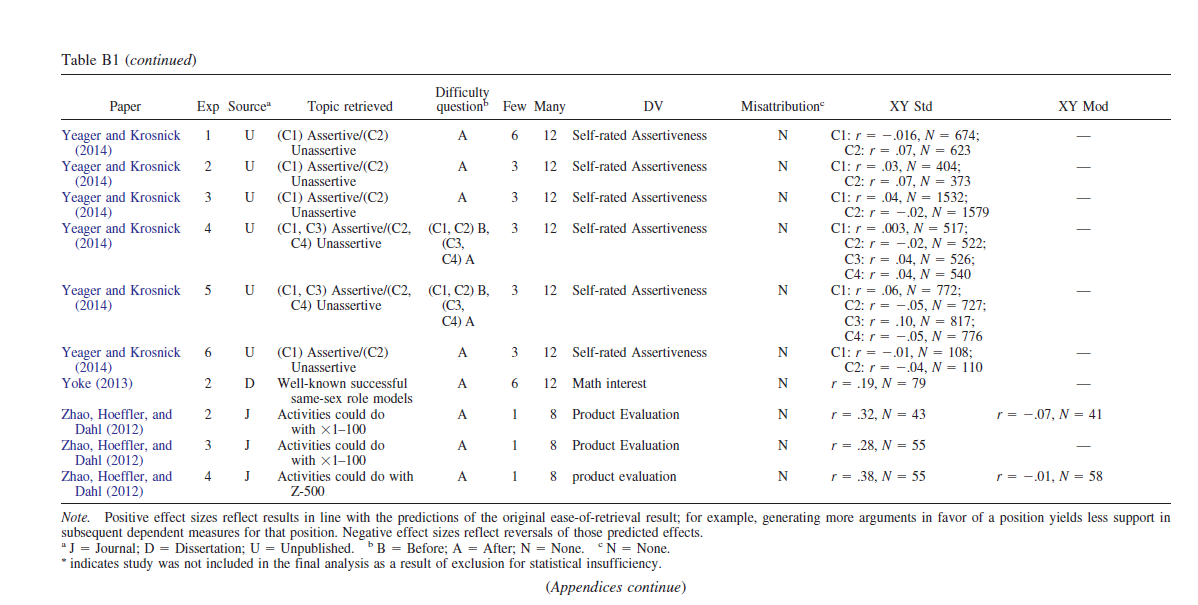

Given the large number of citations, there are many studies that have also reported ease-of-retrieval effects. The problem is that social psychology journals only report successful studies. As a result, these replication studies do not test the ease-of-retrieval hypothesis, and results are inflated by selective publication of significant results. This is confirmed in a recent meta-analysis that found evidence of publication bias in ease-of-retrieval studies.

Although the meta-analysis suggests that there still is an effect after correcting for publication bias, corrections for publication bias are difficult and may still overestimate the effect. What is needed is a trustworthy replication study in a large sample.

In 2012, I learned about such a replication study at a conference about the replication crisis in social psychology. One of the presenters was Jon Krosnick, who reported on a replication project in a large, nationally representative sample. Eleven different studies were replicated, and all but one produced a significant result (I am recalling this from memory). The one replication failure was the ease-of-retrieval paradigm. The data of this study and several follow-up studies with large samples were included in the Weingarten and Hutchinson meta-analysis.

The results show that these replication attempts failed to reproduce the effect despite much larger samples that could detect even smaller effects.

Interestingly, the 5th edition of the textbook (Gilovich et al., 2019) no longer mentions Schwarz et al.’s ingenious ease-of-retrieval paradigm. Although I do not know why this study was removed, the deletion of this study suggests that the authors lost confidence in the effect.

BROADER THEORETICAL CONSIDERATIONS

There are other problems with the ease-of-retrieval paradigm. Most important, it does not examine how respondents answer questions about their personality under naturalistic conditions, without explicit instructions to recall a specified number of concrete examples.

Try to recall 12 examples when you were helpful.

Could you do this in less than 10 seconds? If so, you are a very helpful person, but even if you are a very helpful person, it might take more time than that to do so. However, personality judgments or other frequency and probability judgments are often made in under 5 seconds. Thus, even if ease-of-retrieval is one way to make social judgments, it is not the typical way social judgments are made. It therefore remains an open question how participants are able to make fast and partially accurate judgments of their own and other people’s personality, the frequency of their emotions, or other judgments.

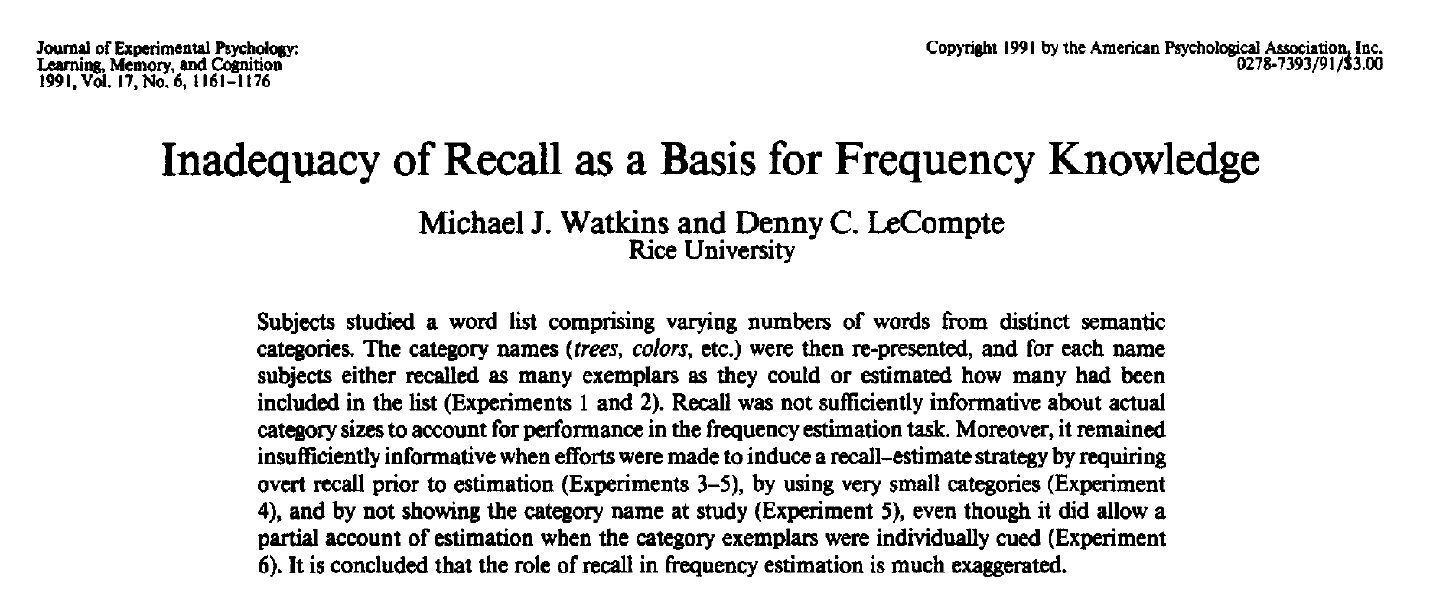

Ironically, an article published in the same year as Schwarz et al.’s article made this point. However, this article was published in a cognitive journal, which social psychologists rarely cite. Overall, this article has been cited only 15 times. Maybe the loss of confidence in the ease-of-retrieval paradigm will generate renewed interest in models of social judgments that do not require retrieval of actual examples.

Ah, yes, another paradigm I could never replicate. Some of this research may have been with Lawrence Sanna who had a number of papers later retracted — not sure if they were with Schwarz — easy to check but I can’t now…

http://retractionwatch.com/2013/01/11/retraction-eight-appears-for-social-psychologist-lawrence-sanna/

Thanks for sharing.