Original Post: 11/26/2018

Modification: 4/15/2021. The z-curve analysis was updated using the latest version of z-curve.

“Trust is good, but control is better”

I asked Fritz Strack to comment on this post, but he did not respond to my request.

INTRODUCTION

Information about the replicability of published results is important because empirical results can only be used as evidence if the results can be replicated. However, the replicability of published results in social psychology is doubtful.

Brunner and Schimmack (2018) developed a statistical method called z-curve to estimate how replicable a set of significant results is if the studies were replicated exactly. In a replicability audit, I apply z-curve to the most cited articles of psychologists to estimate the replicability of their studies.

Fritz Strack

Fritz Strack is an eminent social psychologist (H-Index in WebofScience = 51).

Fritz Strack also made two contributions to meta-psychology.

First, he volunteered his facial-feedback study for a registered replication report, a major effort to replicate a published result across many labs. The study failed to replicate the original finding. In response, Fritz Strack argued that the replication study introduced cameras as a confound or that the replication team actively tried to find no effect (reverse p-hacking).

Second, Strack co-authored an article that tried to explain replication failures as a result of problems with direct replication studies (Strack & Stroebe, 2014). This is a concern when replicability is examined with actual replication studies. However, this concern does not apply when replicability is examined on the basis of test statistics published in original articles. Using z-curve, we can estimate how replicable these studies would be if they could be replicated exactly, even if an exact replication is not possible in practice.

Given Fritz Strack’s skepticism about the value of actual replication studies, he may be particularly interested in estimates based on his own published results.

Data

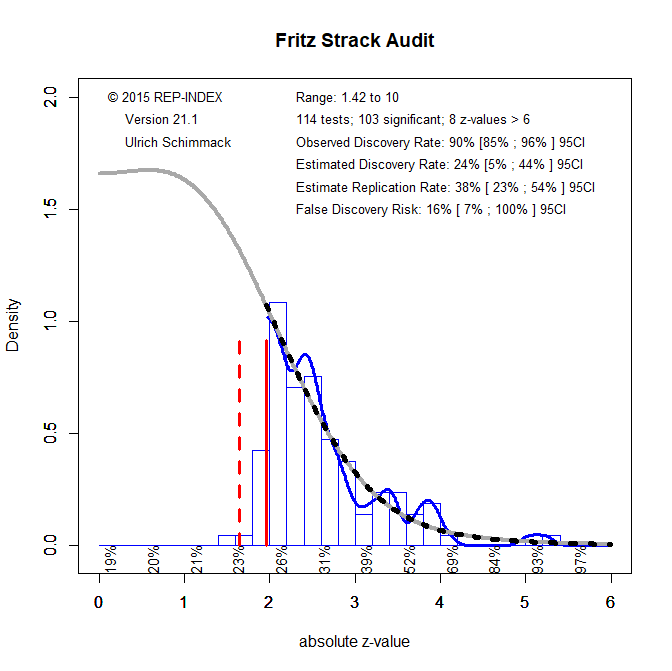

I used WebofScience to identify the most cited articles by Fritz Strack (datafile). I then selected empirical articles until the number of coded articles matched the number of citations, resulting in 42 empirical articles (H-Index = 42). The 42 articles reported 117 studies (average 2.8 studies per article). The total number of participants was 8,029 with a median of 55 participants per study. For each study, I identified the most focal hypothesis test (MFHT). The result of the test was converted into an exact p-value and the p-value was then converted into a z-score. Three studies did not test a hypothesis or predicted a non-significant result, which leaves 114 reported hypothesis tests. Of these, 103 results were significant at p < .05 (two-tailed), and their z-scores were submitted to a z-curve analysis to estimate mean power. The remaining 11 results were interpreted as evidence with lower standards of significance. Thus, the success rate for the 114 reported hypothesis tests was 100%.
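The conversion from a two-tailed p-value to a z-score can be sketched as follows. This is a minimal illustration using only the Python standard library, not the code used for this audit. The function name `p_to_z` is hypothetical, and the step from a reported test statistic (t, F, chi-square) to an exact p-value is assumed to have been done already.

```python
from statistics import NormalDist


def p_to_z(p_two_tailed: float) -> float:
    """Convert a two-tailed p-value into an absolute z-score.

    Hypothetical helper for illustration only. The z-score is the
    standard normal quantile that leaves p/2 in the upper tail.
    """
    if not 0.0 < p_two_tailed < 1.0:
        raise ValueError("p-value must lie strictly between 0 and 1")
    return NormalDist().inv_cdf(1.0 - p_two_tailed / 2.0)


# Critical z-values for the two significance criteria discussed in this post.
for p in (0.05, 0.005):
    print(f"p = {p} -> z = {p_to_z(p):.2f}")
```

Under this conversion, p = .05 corresponds to z = 1.96 and p = .005 corresponds to z = 2.81, so only results with z-scores above these values count as significant at the respective criterion.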

The z-curve estimate of replicability is 38% with a 95% CI ranging from 23% to 54%. The complementary interpretation of this result is that the actual type-II error rate is 62%, compared to the 0% failure rate in the published articles.

The histogram of z-values shows the distribution of observed z-scores (blue line) and the predicted density distribution (grey line). The predicted density distribution is also projected into the range of non-significant results. The area under the grey curve is an estimate of the file drawer of studies that need to be conducted to achieve 100% successes with 28% average power. Although this is just a projection, the figure makes it clear that Strack and collaborators used questionable research practices to report only significant results.

Z-curve 2.0 also estimates the expected discovery rate (EDR), that is, the percentage of significant results among all tests that were conducted in a laboratory, including tests that were never reported. The EDR estimate is 24% with a 95% CI ranging from 5% to 44%. The observed discovery rate is well outside this confidence interval. Thus, there is strong evidence that questionable research practices had to be used to produce significant results. The estimated discovery rate can also be used to estimate the risk of false positive results (Soric, 1989). With an EDR of 24%, the false positive risk is 16%. This suggests that most of Strack’s results may show the correct sign of an effect, but that the effect sizes are inflated and that it is often unclear whether the population effect sizes would have practical significance.
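Soric's (1989) bound on the false discovery risk follows directly from the discovery rate and alpha: FDR = (1/EDR - 1) × alpha / (1 - alpha). The sketch below is an illustration rather than the z-curve package's own code, and the function name `soric_max_fdr` is hypothetical. With the rounded EDR of 24% it yields roughly 17%; the 16% reported here presumably reflects the unrounded EDR estimate.

```python
def soric_max_fdr(edr: float, alpha: float = 0.05) -> float:
    """Maximum false discovery risk implied by a discovery rate (Soric, 1989).

    Hypothetical helper for illustration only.
    edr   : expected discovery rate as a proportion (0 < edr <= 1)
    alpha : significance criterion (0 < alpha < 1)
    """
    if not 0.0 < edr <= 1.0:
        raise ValueError("edr must be in (0, 1]")
    if not 0.0 < alpha < 1.0:
        raise ValueError("alpha must be in (0, 1)")
    # The bound is a proportion, so it is capped at 1.
    return min(1.0, (1.0 / edr - 1.0) * alpha / (1.0 - alpha))


# Point estimate and the two ends of the 95% CI reported for the EDR.
for edr in (0.24, 0.05, 0.44):
    print(f"EDR = {edr:.2f} -> max FDR = {soric_max_fdr(edr):.3f}")
```

Applying the same function to the ends of the EDR confidence interval shows how wide the uncertainty is: an EDR of 5% is compatible with all significant results being false positives, whereas an EDR of 44% implies a risk of about 7%.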

The false discovery risk decreases for more stringent criteria of statistical significance. A reasonable criterion for the false discovery risk is 5%. This criterion can be achieved by lowering alpha to .005. This is in line with suggestions to treat only p-values less than .005 as statistically significant (Benjamin et al., 2017). This leaves 35 significant results.

CONCLUSION

The analysis of Fritz Strack’s published results provides clear evidence that questionable research practices were used and that published significant results have a higher type-I error risk than 5%. More important, the estimated discovery rate is low and implies that 16% of published results could be false positives. This explains some replication failures in large samples for Strack’s item-order and facial feedback studies. I recommend using alpha = .005 to evaluate Strack’s empirical findings. This leaves about a third of his discoveries as statistically significant results.

It is important to emphasize that Fritz Strack and colleagues followed accepted practices in social psychology and did nothing unethical by the lax standards of research ethics in psychology. That is, he did not commit research fraud.

DISCLAIMER

It is nearly certain that I made some mistakes in the coding of Fritz Strack’s articles. However, it is important to distinguish consequential and inconsequential mistakes. I am confident that I did not make consequential errors that would alter the main conclusions of this audit. However, control is better than trust and everybody can audit this audit. The data are openly available and the z-curve results can be reproduced with the z-curve package in R. Thus, this replicability audit is fully transparent and open to revision.

If you found this audit interesting, you might also be interested in other replicability audits.
