Introduction

In 2015, Science published the results of the first empirical attempt to estimate the reproducibility of psychology. One key finding was that out of 97 attempts to reproduce a significant result, only 36% of attempts succeeded.

This finding fueled debates about a replication crisis in psychology. However, few detailed examinations of individual studies have asked why a particular result could or could not be replicated. The main reason is probably that examining every study in detail is a daunting task. Another reason is that replication failures can have several causes, and each study may have a different explanation. This means it is important to take an idiographic (study-centered) perspective.

The conclusions of these idiographic reviews will be used for a nomothetic research project that aims to predict actual replication outcomes on the basis of the statistical results reported in the original article. These predictions will only be accurate if the replication studies were close replications of the original study. Otherwise, differences between the original study and the replication study may explain why replication studies failed.

Special Introduction

The replication crisis has split psychologists and disrupted social networks. I respected Jerry Clore as an emotion researcher when I started my career in emotion research. His work on appraisal theories of emotions made an important contribution and influenced my thinking about emotions. I enjoyed participating in Jerry’s lab meetings when I was a post-doctoral student of Ed Diener at Illinois. However, I was never a big fan of Jerry’s most famous article on the effect of mood on life-satisfaction judgments. Working with Ed Diener convinced me that life-satisfaction judgments are more stable and more strongly based on chronically accessible information than the mood-as-information model suggested (Anusic & Schimmack, 2016; Schimmack & Oishi, 2005). Nevertheless, I had a positive relationship with Jerry and I am grateful that he wrote recommendation letters for me when I was on the job market.

When researchers started doing replication studies after 2011, some of Jerry’s articles failed to replicate, and one reason for these replication failures is that the original studies used questionable research practices. Importantly, nobody considered these practices unethical and it was not a secret that these methods were used. Books even taught students that the use of these practices is good science. The problem is that Jerry didn’t acknowledge that questionable practices could at least partially explain replication failures. Maybe he did it to protect students like Simone Schnall. Maybe he had other reasons. Personally, I was disappointed by this response to replication failures, but I guess that is life.

Summary of Original Article

In five studies, the authors crossed the priming of happy and sad concepts with affective experiences. In all studies, the expected interaction was significant. Coherence between affective concepts and affective experiences led to better recall of a story than in the incoherent conditions.

Study 1

56 students were assigned to six conditions (n ~ 10) of a 2 x 3 design. Three priming conditions with a scrambled sentence task were crossed with a manipulation of flexing or extending one arm. This manipulation is supposed to create an approach or avoidance motivation (Cacioppo et al., 1993). The expected interaction was significant, F(2, 50) = 3.50, p = .038.

Study 2

75 students participated in Study 2, which was a replication study with two changes: the arm position manipulation was paired with the priming task, and half the participants rated their mood before the measurement of the DV. The ANOVA result was marginally significant, F(2, 69) = 2.81, p = .067.

Study 3

58 students used the same priming procedure, but used music as a mood manipulation. The neutral priming condition was dropped (n ~ 15 per cell). The interaction effect was marginally significant, F(1, 54) = 3.48, p = .068.

Study 4

132 students participated in Study 4. The study changed the priming task to a subliminal priming manipulation (although the 60 ms presentation time may not be fully subliminal). Affect was manipulated by asking participants to hold a happy or sad facial expression. The interaction was significant, F(1, 128) = 3.97, p = .048.

Study 5

133 students participated in Study 5. Study 5 combined the scrambled sentence priming manipulation from Studies 1-3 with the facial expression manipulation from Study 4. The interaction effect was significant, F(1, 129) = 5.21, p = .024.

Replicability Analysis

Although all five studies showed support for the predicted two-way interaction, the p-values in the five studies are surprisingly similar (ps = .038, .067, .068, .048, .024). The probability of variability this small or smaller in p-values is p = .002 (TIVA). This suggests that QRPs were used to produce (marginally) significant results in five studies with low power (Schimmack, 2012).
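For readers who want to check the TIVA number, here is a minimal sketch of the calculation. It assumes the usual form of the Test of Insufficient Variance: two-tailed p-values are converted to absolute z-scores, whose variance should be about 1 without selection, and the observed variance is compared to a chi-square distribution with k - 1 degrees of freedom. The function names `chi2_cdf` and `tiva` are made up for this illustration and are not taken from any package. The input is the five p-values reported in the study summaries above.

```python
import math
from statistics import NormalDist, variance


def chi2_cdf(x, df):
    """Lower-tail chi-square probability, computed with the power series
    for the regularized lower incomplete gamma function."""
    if x <= 0:
        return 0.0
    a, h = df / 2.0, x / 2.0
    term = 1.0 / a
    total = term
    n = 0
    while term > 1e-15 * total:
        n += 1
        term *= h / (a + n)
        total += term
    return total * math.exp(-h + a * math.log(h) - math.lgamma(a))


def tiva(p_values):
    """Return (variance of z-scores, left-tail p-value).

    Assumption: p-values are two-tailed and the expected variance of the
    z-scores is 1 when there is no selection for significance."""
    z_scores = [NormalDist().inv_cdf(1 - p / 2) for p in p_values]
    var_z = variance(z_scores)  # sample variance, divisor k - 1
    k = len(z_scores)
    return var_z, chi2_cdf((k - 1) * var_z, k - 1)


# p-values as reported for Studies 1-5
var_z, p_tiva = tiva([0.038, 0.067, 0.068, 0.048, 0.024])
print(f"variance of z-scores = {var_z:.3f}, TIVA p = {p_tiva:.4f}")
```

Under these assumptions the variance of the z-scores is far below 1, and the left-tail probability should come out close to the p = .002 reported above.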

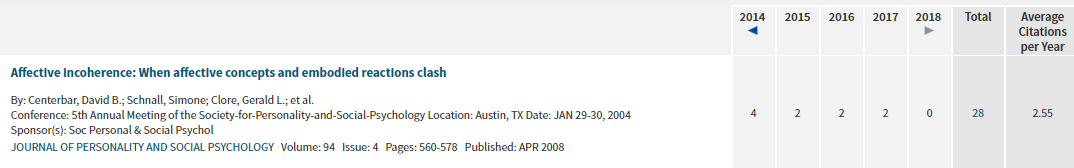

A small set of studies provides limited information about QRPs. It is helpful to look at these p-values in the context of other results reported in articles with Jerry Clore as co-author.

The plot shows a large file-drawer (missing studies with non-significant results) that is produced by a large number of just significant results. Either many studies were run to obtain a just significant result or other QRPs were used. This analysis supports the conclusion that QRPs contributed to the reported results in the original article.

Replication Study

The replication project attempted a replication of Study 5. However, the authors did not pick the 2 x 2 interaction as the target finding. Instead, they used the finding that a “repeated measures ANOVA with condition (coherent vs. incoherent) and story prompt (tree vs. house vs. car) produced a significant linear trend for the interaction of Condition X Story, F(1, 131) = 5.79, p < .02, η² = .04” (Centerbar et al., 2008, p. 573). The replication study did not find this trend, F(2, 110) = .759, p = .471. However, the difference in degrees of freedom shows that the replication analysis had less power because it did not test the linear contrast. Moreover, the replication report states that the replication study showed a trend regarding the main effect of affective coherence on the percentage of causal words used, F(1, 111) = 3.172, p = .078. This makes it difficult to evaluate whether the replication study was really a failure.

I used the posted data to test the interaction for the total number of words produced. It was not significant, F(1, 126) = 0.602, p = .439.

In conclusion, the reported significant interaction failed to replicate.

Conclusion

The replication study of this 2 x 2 between-subjects social psychology experiment failed to replicate the original result. Bias tests suggest that the replication failure was at least partially caused by the use of questionable research practices in the original study.