I don’t know anything about construal level theory. It emerged in social psychology after I had started to pay less and less attention to the latest developments in a field that published incredible results that were unlikely to be true. After 2011, it became clear that my intuitions about the credibility of social psychology were correct (Schimmack, 2020). After a string of fraud cases and major replication failures, one would have to be a fool to trust published results in social psychology journals (OSC, 2015).

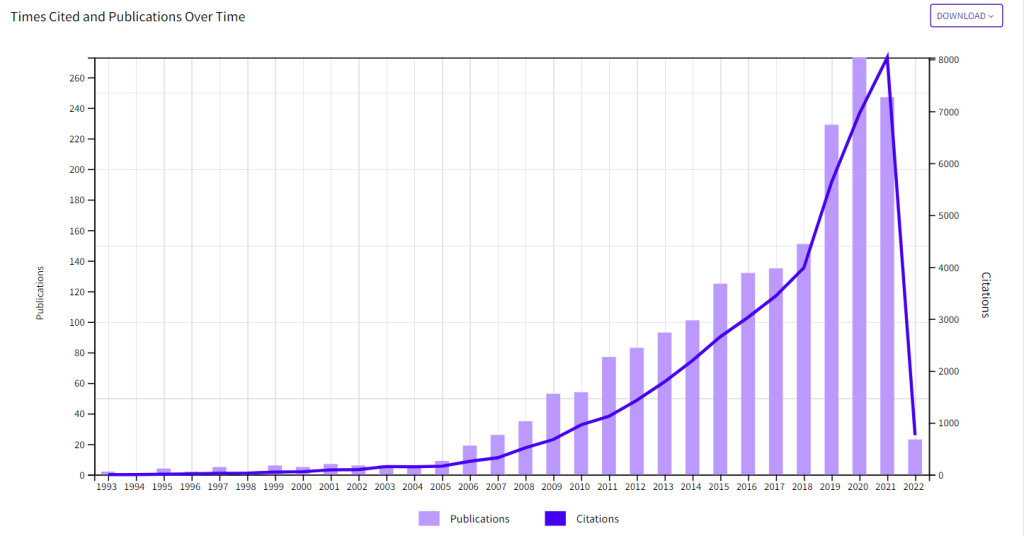

To protect myself and other consumers of social science, I have developed powerful statistical methods to examine the credibility of published results. One way to validate these tools is to apply them to literatures that have been discredited by actual replication failures, such as studies of single gene variations (Schimmack, 2022). These literatures show clear evidence that significant results were obtained with questionable methods and that non-significant results remained unpublished. Today, this literature is in decline, and geneticists have increased sample sizes and improved methods to reduce the risk of false positive findings. The same cannot be said about social psychology. Most social psychologists have responded to replication failures in their field with defiant and stoic ignorance. They continue to cite questionable results in the introduction sections of their new articles and present these findings to unaware undergraduate students as if they were robust evidence from credible empirical tests of theories. The following figure shows that the construal level literature is still growing in terms of publications and citations. There is no sign of self-correction.

However, I became skeptical about the credibility of this work when I examined the replicability of published work by professors at New York University (Schimmack, 2022). In these analyses, the inventor of construal level theory, Yaacov Trope, had a very low ranking (17th of 19), and the z-curve analysis showed clear evidence that questionable research practices were used to report more significant results than were actually obtained in tests of theoretical predictions. That is, the observed discovery rate of 74% is more than five times the expected discovery rate of 14%!
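To make the comparison concrete, here is a minimal sketch of the arithmetic, assuming the observed discovery rate (ODR) is simply the share of reported tests with p < .05. The function names are hypothetical, and the EDR itself cannot be computed this way: it comes from fitting a z-curve model, so the sketch just takes the reported 14% as given.

```python
def observed_discovery_rate(p_values, alpha=0.05):
    """Share of reported tests that are statistically significant (the ODR)."""
    return sum(p < alpha for p in p_values) / len(p_values)


def inflation(odr, edr):
    """How many times larger the ODR is than the EDR, plus the excess in percent."""
    ratio = odr / edr
    return ratio, (ratio - 1) * 100


# Rounded rates reported for the NYU analysis (EDR taken as given from z-curve).
ratio, excess = inflation(0.74, 0.14)
print(f"ODR is {ratio:.1f} times the EDR ({excess:.0f}% higher)")
```

With the rounded rates, the ODR is about 5.3 times the EDR, i.e., roughly 430% higher than the expected rate.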

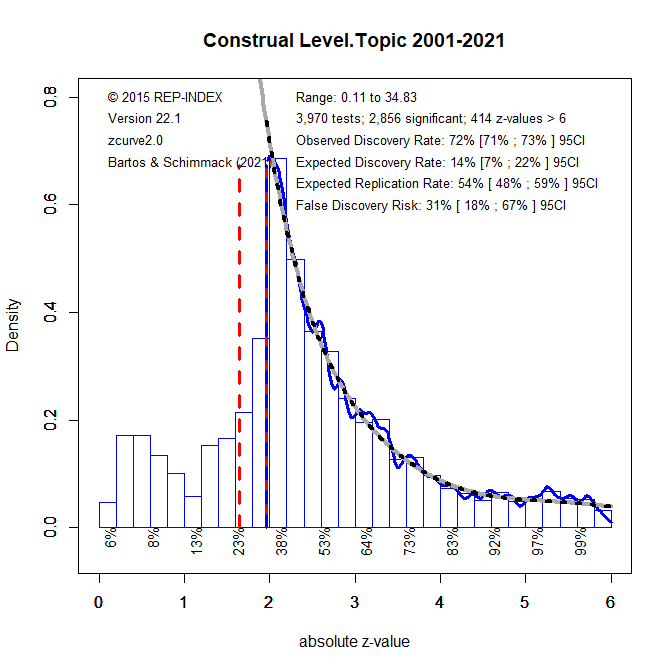

To examine whether this finding generalizes to the construal level literature, I used Web of Science (WOS) to look for articles with “construal level” as a topic. This search retrieved 1,915 articles. I then looked for matching articles in a database of articles from 121 journals that cover a broad range of psychology and include most, if not all, social psychology journals. I found 200 matching articles. I then extracted the test statistics reported in these articles and converted them into z-scores as a measure of the strength of evidence against the null hypothesis. The next figure shows the z-curve for the 3,970 test statistics reported in the 200 construal level articles. The results are very similar to those for Yaacov Trope’s articles.
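The conversion step can be sketched as follows, assuming each extracted statistic (t, F, chi-square, etc.) has already been turned into a two-sided p-value using its degrees of freedom. The helper `p_to_z` is hypothetical and is not the extraction code used for this analysis; it only shows how a two-sided p-value maps onto an absolute z-score.

```python
from statistics import NormalDist

_STD_NORMAL = NormalDist()  # standard normal distribution (mean 0, sd 1)


def p_to_z(p):
    """Convert a two-sided p-value into an absolute z-score.

    Uses the lower tail (p / 2) rather than 1 - p / 2 so that very small
    p-values do not round to 1.0 before the inverse CDF is applied.
    """
    if not 0 < p <= 1:
        raise ValueError("p must be in (0, 1]")
    return abs(_STD_NORMAL.inv_cdf(p / 2))


# Conventional thresholds map onto familiar z-values.
for p in (0.05, 0.01, 0.001):
    print(p, round(p_to_z(p), 2))
```

Larger z-scores mean stronger evidence against the null hypothesis; p = .05 corresponds to z of about 1.96, the significance threshold in a z-curve plot.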

The key finding is that the expected discovery rate is only 14%. This is even lower than the expected discovery rate for candidate gene studies that have been discredited. An EDR of 14% implies that up to 31% of published significant results might be false positives. The upper limit of the 95% confidence interval around this point estimate is 67%. Proponents of construal level theory might argue that this shows that at least 33% of published results are not false positives, with an error rate of 2.5%. However, it is not clear which of the published results are false positives and which ones are not. Moreover, this analysis includes both focal tests that actually test construal level theory and other statistical tests that have nothing to do with construal level theory. Thus, the false positive risk of actual tests of construal level theory could be even higher.
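The step from an EDR to a maximum false positive risk can be sketched with Sorić's upper bound on the false discovery rate, assuming that is the formula behind the numbers above (z-curve reports this bound). With an EDR of exactly 14%, the bound is about 32%, so the 31% figure presumably reflects an unrounded EDR slightly above 14%. Inverting the formula suggests that an upper limit of 67% would correspond to a lower EDR limit of roughly 7%; that value is derived here for illustration and is not reported in the analysis.

```python
def soric_max_fdr(edr, alpha=0.05):
    """Sorić's upper bound on the false discovery rate given an expected discovery rate."""
    return (1 / edr - 1) * alpha / (1 - alpha)


def edr_for_max_fdr(max_fdr, alpha=0.05):
    """Invert the bound: the EDR at which the maximum FDR equals max_fdr."""
    return 1 / (1 + max_fdr * (1 - alpha) / alpha)


print(f"max FDR at EDR = 14%: {soric_max_fdr(0.14):.1%}")
print(f"EDR implied by a max FDR of 67%: {edr_for_max_fdr(0.67):.1%}")
```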

One solution to this problem is to lower the criterion of statistical significance to a level that ensures a low false positive risk. For the construal level literature, a criterion value of .001 is needed to do so. The question is whether any of the remaining significant results provide credible evidence for construal level effects under some specific conditions. Another problem is that the uncertainty around the point estimate increases, and the upper limit of the 95% CI now reaches 100%. Thus, there is no credible evidence for construal level effects despite a large literature with many statistically significant results. This is possible because significant results obtained with questionable research practices do not provide empirical evidence for the presence of an effect.
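Why the upper limit can reach 100% follows from Sorić's bound, assuming that bound is used here as well: it equals 1 exactly when the EDR equals the significance criterion, which is the discovery rate expected if every tested null hypothesis were true. So once the lower confidence limit of the EDR (estimated for the .001 criterion) falls to .001, the maximum false positive risk is 100%. The cap at 1 is a design choice in this sketch, because an EDR below alpha would otherwise produce a "probability" above 1.

```python
def soric_max_fdr(edr, alpha):
    """Sorić's upper bound on the false discovery rate, capped at 1."""
    return min(1.0, (1 / edr - 1) * alpha / (1 - alpha))


# When the EDR equals the criterion, the bound is 100% for any alpha.
for alpha in (0.05, 0.001):
    print(alpha, round(soric_max_fdr(alpha, alpha), 6))
```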

Not everybody is going to be convinced by these statistical analyses. Fortunately, some researchers are planning a major replication project (https://climr.org/). This blog post can serve as a pre-registered prediction of the outcome of this project. While a large sample may produce a statistically significant result, p < .05, the population effect size will be negligible, d < .2, and much lower than the effect size estimates in studies that used questionable research practices. This would mean that many of the basic findings and extensions of the theory lack empirical support. The only question is when social psychologists will eventually correct their theoretical reviews, textbooks, and lectures.
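To illustrate why only a large sample is likely to detect an effect below d = .2, here is a rough power calculation. It assumes a simple two-group comparison, a two-sided test at alpha = .05, and 80% power, and it uses the normal approximation, which slightly underestimates the sample size an exact t-test calculation would give. None of these design details come from the replication project itself.

```python
import math
from statistics import NormalDist


def n_per_group(d, alpha=0.05, power=0.80):
    """Approximate sample size per group for a two-sided, two-sample test of effect size d."""
    z = NormalDist()
    z_alpha = z.inv_cdf(1 - alpha / 2)  # critical value of the two-sided test
    z_power = z.inv_cdf(power)          # quantile for the desired power
    return math.ceil(2 * (z_alpha + z_power) ** 2 / d ** 2)


for d in (0.5, 0.2):
    print(f"d = {d}: about {n_per_group(d)} participants per group")
```

An effect of d = .2 requires roughly six times as many participants per group as d = .5, which is why small studies reporting significant construal level effects are suspicious.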

Dear Ulrich Schimmack,

Hello! This is my third attempt at submitting my comment, as the previous two seem to have disappeared.

Thank you very much for your excellent summary here regarding the reliability and replicability of statistical analyses published in scientific journals in general and in social science, behavioural science and social psychology in particular. Your post is also highly relevant for both researchers and students.

In any case, the validity and reliability of scientific research, data and publications have been fraught with various issues. A few of my blog posts deal with some of these issues. One of them is entitled “Two Thirds of Scientific Publications Retracted Are Fraudulent“, which you can easily locate from the Home page of my blog.

Indeed, there have been ongoing and rather intractable issues, where many false hopes and misdirections have occurred. Your contribution here is highly commendable.

Happy February to you! Wishing you a productive weekend doing or enjoying whatever satisfies you the most!

Yours sincerely,

SoundEagle

Dear SoundEagle, thank you for your support. I’ll check out your blog. Best, Uli

Dear Ulrich,

I look forward to reading more of your posts and to your perusing my posts and pages. I would like to inform you that when you visit my blog, it is preferable to use a desktop or laptop computer with a large screen to view the rich multimedia content available for heightening your multisensory enjoyment at my blog, which could be too powerful and feature-rich for an iPad, iPhone, tablet or other portable device to handle properly or adequately.

Furthermore, since my intricate blog contains advanced styling and multimedia components plus animations, it is advisable to avoid viewing the contents of my blog using the WordPress Reader, which cannot show many of the advanced features and animations in my posts and pages. It is best to read the posts and pages directly in my blog so that you will be able to savour and relish all of the refined and glorious details plus animations.

May you find 2022 very much to your liking and highly conducive to researching, writing, reading, thinking and blogging about whatever topics satisfy your intellectual rigour and scientific curiosity!

Take care and prosper!

Yours sincerely,

SoundEagle