Since 2011, psychologists have been wondering which published results are credible and which are not. One way to answer this question would be for researchers to self-replicate their most important findings. However, most psychologists have avoided conducting or publishing self-replications (Schimmack, 2020).

It is therefore always interesting when a self-replication is published. I just came across Shah, Mullainathan, and Shafir (2019). The authors conducted high-powered replications, with much larger sample sizes, of five studies that were published in Shah, Mullainathan, and Shafir's (2012) Science article.

The article reported five studies with 1, 6, 2, 3, and 1 focal hypothesis tests, respectively (13 in total). One additional test was significant, but the authors focused on its small effect size and did not consider it theoretically important. The replication studies successfully replicated 9 of the 13 significant results, a success rate of 69%. This is higher than the 37% success rate in the famous reproducibility project, which replicated 100 studies in social and cognitive psychology (OSC, 2015).

One interesting question is whether this success rate was predictable from the original findings. An even more interesting question is whether the original results provide clues about the replicability of specific effects. For example, why were the results of Studies 1 and 5 harder to replicate than those of the other studies?

Z-curve uses the strength of the evidence against the null hypothesis in the original studies to predict replication outcomes (Brunner & Schimmack, 2020; Bartos & Schimmack, 2020). It also takes into account that original results may have been selected for significance. For example, the original article reported 14 out of 14 significant results. It is unlikely that all statistical tests of critical hypotheses produce significant results (Schimmack, 2012). Thus, some questionable research practices (QRPs) were probably used, although the authors do not mention this in their self-replication article.

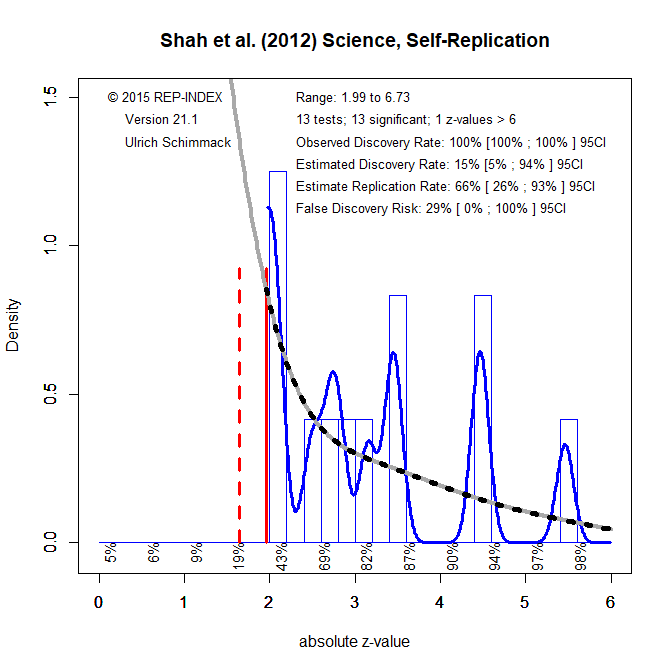

I converted the 13 test statistics into exact p-values and then converted the exact p-values into z-scores. Figure 1 shows the z-curve plot and the results of the z-curve analysis. The first finding is that the observed discovery rate of 100% is much higher than the expected discovery rate of 15%. Given the small number of tests, the 95% CI around the estimated discovery rate is wide, but it does not include 100%. This suggests that questionable practices were used to produce a pretty picture of results, in line with widespread practices in psychology in 2012.
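The conversion from p-values to z-scores can be sketched in a few lines of Python. This is a minimal sketch, assuming two-sided p-values have already been computed from the reported test statistics (for t-tests that step needs the t-distribution, for example `scipy.stats.t.sf`, which this sketch does not use). The function name `p_to_z` and the example p-values are hypothetical, not the values from Shah et al. (2012).

```python
from statistics import NormalDist


def p_to_z(p: float) -> float:
    """Convert a two-sided p-value into an absolute z-score.

    z is the standard normal quantile that leaves p/2 in the upper tail.
    Computing it as -Phi^{-1}(p/2) is equivalent to Phi^{-1}(1 - p/2)
    but stays accurate for very small p-values.
    """
    if not 0.0 < p <= 1.0:
        raise ValueError("p must be in (0, 1]")
    return -NormalDist().inv_cdf(p / 2.0)


# Hypothetical two-sided p-values (not the Shah et al., 2012, values).
for p in [0.049, 0.01, 0.001, 0.00001]:
    print(f"p = {p:g} -> z = {p_to_z(p):.2f}")
```

Using -Phi^{-1}(p/2) rather than Phi^{-1}(1 - p/2) avoids the case where 1 - p/2 rounds to exactly 1 for tiny p-values, which would make the quantile undefined.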

The next finding is that, despite the low discovery rate, the estimated replication rate of 66% is in line with the observed replication rate of 69%. The reason for the difference between the two estimates is that the estimated discovery rate includes the large set of non-significant results that the model predicts, whereas the replication rate applies only to the significant results. Selection for significance favors studies with higher power because they have a higher chance of producing a significant result (Brunner & Schimmack, 2020).
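Why the replication rate can be much higher than the discovery rate can be illustrated with a small calculation. This sketch assumes that each study's power is known: the expected discovery rate is then the mean power of all studies, and the expected replication rate is the mean power of the studies that came out significant, where each study is weighted by its power because that is its probability of being selected. The function names and the power values are hypothetical illustrations, not z-curve output.

```python
def expected_discovery_rate(powers):
    """Mean power of all studies: the share expected to be significant."""
    return sum(powers) / len(powers)


def expected_replication_rate(powers):
    """Mean power of the studies that turned out significant.

    A study with power p enters the significant set with probability p,
    so its power is weighted by p: sum(p^2) / sum(p).
    """
    return sum(p * p for p in powers) / sum(powers)


# Hypothetical mixture: 8 studies of true null effects (rejection rate
# equals alpha = .05) and 2 studies with 80% power.
powers = [0.05] * 8 + [0.80] * 2
print(f"EDR = {expected_discovery_rate(powers):.2f}")
print(f"ERR = {expected_replication_rate(powers):.2f}")
```

In this made-up mixture only 20% of all studies are expected to be significant, but the significant ones are dominated by the high-powered studies, so about 65% of them would replicate.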

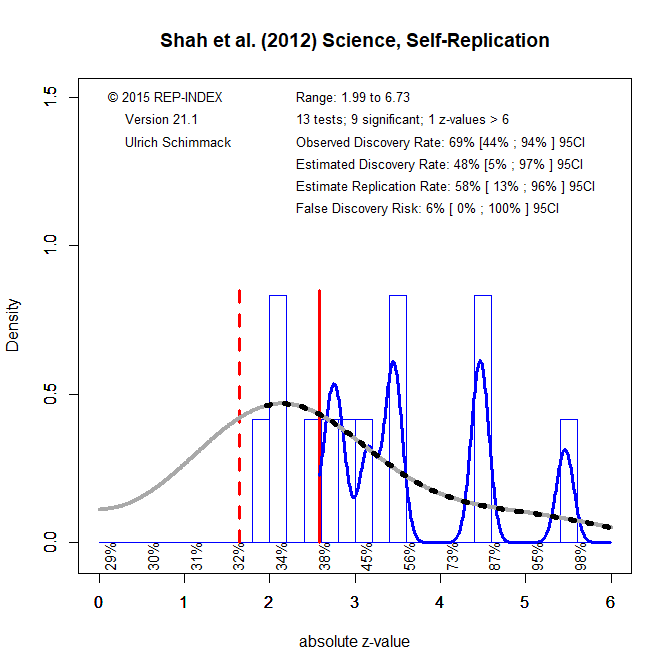

It is unlikely that the authors conducted many additional studies to obtain only significant results. It is more likely that they used a number of other QRPs. Whatever method they used, QRPs make just-significant results (p-values just below .05) questionable. One solution to this problem is to lower the significance criterion post hoc. This can be done gradually. For example, a first adjustment might lower the significance criterion to alpha = .01.
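Lowering alpha amounts to raising the critical z-score that a result must exceed. The sketch below, assuming two-sided tests, computes the critical value and the observed discovery rate under alpha = .05 and alpha = .01. The function names and the z-scores are hypothetical, chosen only to show how just-significant results drop out under the stricter criterion.

```python
from statistics import NormalDist


def critical_z(alpha: float) -> float:
    """Two-sided critical value: |z| must exceed this to be significant."""
    return NormalDist().inv_cdf(1.0 - alpha / 2.0)


def observed_discovery_rate(z_scores, alpha=0.05):
    """Share of z-scores that are significant at the given alpha."""
    crit = critical_z(alpha)
    return sum(abs(z) > crit for z in z_scores) / len(z_scores)


# Hypothetical z-scores: three are just significant (between 1.96 and
# 2.58), the other four are stronger.
zs = [2.0, 2.2, 2.4, 2.9, 3.3, 4.1, 4.6]
print(round(critical_z(0.05), 2), round(critical_z(0.01), 2))
print(observed_discovery_rate(zs, 0.05))
print(observed_discovery_rate(zs, 0.01))
```

With alpha = .05 all seven hypothetical results count as discoveries; with alpha = .01 (critical z of about 2.58) only four of seven do.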

Figure 2 shows the adjusted results. The observed discovery rate decreased to 69%. In addition, the estimated discovery rate increased to 48% because the model no longer needs to predict the large number of just-significant results. Thus, the expected and observed discovery rates are much more closely aligned, suggesting little need to assume additional QRPs. The estimated replication rate decreased because it now uses the more stringent criterion of alpha = .01. Otherwise, it would be even closer to the observed replication rate.

Thus, a simple explanation for the replication outcomes is that some results were obtained with QRPs that produced just-significant results with p-values between .01 and .05. These results did not replicate, whereas the other results did.

There was also a strong point-biserial correlation between the original z-scores and the dichotomous replication outcome. When the original p-values were split at .01, they perfectly predicted the replication outcome: results with p-values greater than .01 did not replicate, and those with p-values below .01 did.
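The point-biserial correlation is the Pearson correlation between a continuous variable (here, the original z-scores) and a 0/1 outcome (here, replication failure or success). A minimal sketch, using the textbook formula r = (M1 - M0) / s * sqrt(n1 * n0) / n with s as the population standard deviation of all z-scores, together with the split rule "replicates if p < .01". The function names and the data are hypothetical and chosen to be perfectly separable, mirroring the pattern described above; they are not the Shah et al. values.

```python
from math import sqrt
from statistics import NormalDist, fmean, pstdev


def point_biserial(x, y):
    """Pearson correlation between continuous x and binary y (0/1)."""
    x1 = [xi for xi, yi in zip(x, y) if yi == 1]
    x0 = [xi for xi, yi in zip(x, y) if yi == 0]
    n1, n0, n = len(x1), len(x0), len(x)
    # r_pb = (M1 - M0) / s * sqrt(n1 * n0) / n, s = population SD of x
    return (fmean(x1) - fmean(x0)) / pstdev(x) * sqrt(n1 * n0) / n


def split_accuracy(z_scores, replicated, alpha=0.01):
    """Share of outcomes correctly predicted by 'replicates if p < alpha'."""
    crit = NormalDist().inv_cdf(1 - alpha / 2)  # about 2.58 for alpha = .01
    predicted = [1 if z > crit else 0 for z in z_scores]
    hits = sum(p == r for p, r in zip(predicted, replicated))
    return hits / len(replicated)


# Hypothetical data: original z-scores and replication outcome (1 = success).
z_scores = [2.0, 2.2, 2.4, 2.9, 3.3, 4.1, 4.6]
replicated = [0, 0, 0, 1, 1, 1, 1]
print(f"point-biserial r = {point_biserial(z_scores, replicated):.2f}")
print(f"split at p = .01 predicts {split_accuracy(z_scores, replicated):.0%}")
```

The correlation summarizes the graded relationship, while the split rule turns it into a yes/no prediction; with a perfectly separable pattern the split predicts every outcome even though r is below 1.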

In conclusion, a single p-value from a single analysis provides little information about replicability, although replicability increases as p-values decrease. However, meta-analyses of p-values with models that take QRPs and selection for significance into account are a promising tool to predict replication outcomes and to distinguish between questionable and solid results in the psychological literature.

Meta-analyses that take QRPs into account can also help researchers avoid replication studies that merely confirm highly robust results. Four of the original z-scores in Shah et al.'s (2019) project were above 4, which makes it very likely that these results would replicate. Resources are better spent on findings that have high theoretical importance but weak evidence. Z-curve can help to identify these results because it corrects for the influence of QRPs.
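Why a z-score above 4 makes replication very likely can be checked with a back-of-the-envelope power calculation. This sketch assumes that the observed z-score equals the true effect in z units and that the replication uses the same sample size and a two-sided test at alpha = .05. Because selection for significance inflates observed z-scores, this assumption is optimistic, which is exactly why z-curve corrects for selection. The function name `replication_power` is hypothetical.

```python
from statistics import NormalDist


def replication_power(true_z: float, alpha: float = 0.05) -> float:
    """Chance that an exact replication is significant in the same direction.

    Assumes the true noncentrality equals true_z and ignores the negligible
    probability of a significant result in the opposite direction.
    """
    crit = NormalDist().inv_cdf(1 - alpha / 2)  # 1.96 for alpha = .05
    return 1 - NormalDist().cdf(crit - true_z)


for z in (2.0, 3.0, 4.0):
    print(f"z = {z:.1f}: power = {replication_power(z):.2f}")
```

Under these assumptions, a result with z = 4 replicates about 98% of the time, whereas a just-significant result with z = 2 replicates only about half of the time.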

Conflict of Interest statement: Z-curve is my baby.
