Over the past decade, psychologists have experienced a crisis of confidence. Published results are not credible because psychologists have used a number of statistical tricks (deceptive research practices) to increase their chances of reporting results that confirm their hypotheses. The simplest trick is not to report non-significant results (Rosenthal, 1979). Replication failures in social psychology have raised concerns about the replicability of results in psychological science more broadly. However, empirical studies of replicability in other areas are lacking because studies in those areas are more costly and time-consuming than 10-minute experiments on MTurk.

Over the past decade, my colleagues and I have developed a statistical tool, z-curve, that makes it possible to estimate the replication rate and the discovery rate based on published test-statistics (t-values, F-values) (Brunner & Schimmack, 2019; Bartos & Schimmack, 2020). I have also developed code to extract these test-statistics from published articles.

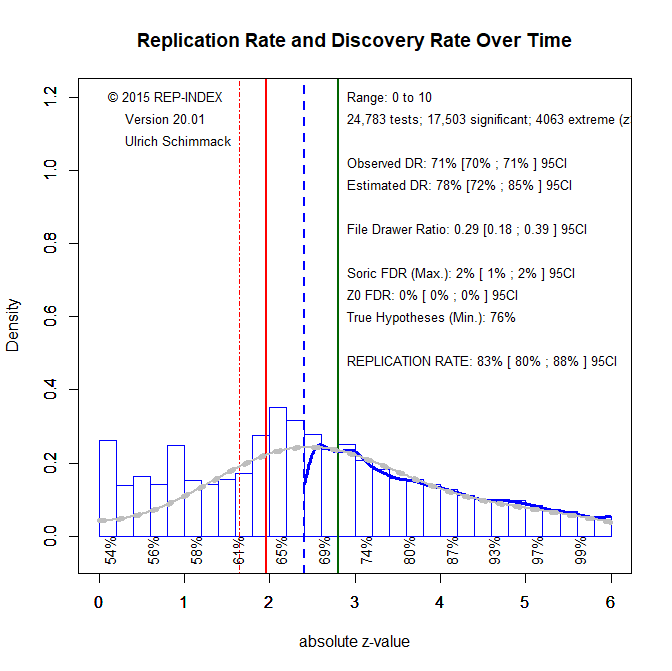

For the journal Psychology and Aging, I have data covering the years 2000 to 2019. I extracted 24,783 test-statistics and submitted them to a z-curve analysis. The main results are shown in Figure 1.

All test-statistics are converted into two-sided p-values and then converted into absolute z-scores. Figure 1 shows a histogram of these z-scores. Z-scores below 1.96 are not significant with the conventional p < .05 (two-sided) criterion. Z-scores above 1.96 are significant. The model is only fitted to z-scores greater than 2.4 because just-significant p-values may be produced by questionable research practices, while larger z-scores are less likely to be obtained by these practices. The expected replication rate (ERR) is the rate of significant results that would be significant again in an exact replication study with the same sample size. The expected replication rate for all significant values is high with an average of 83%. However, evidence for the use of questionable research practices is revealed by the pile of just-significant p-values that exceeds the predicted density (the grey curve). Thus, just significant results are questionable and should not be trusted. However, p-values below .005 are likely to produce significant results again in a replication attempt with the same sample size.
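The mapping between two-sided p-values and absolute z-scores can be illustrated with a minimal sketch that uses only the Python standard library. It starts from p-values, because turning t- or F-values into p-values requires distribution functions that the standard library does not provide. The function names `p_to_abs_z` and `abs_z_to_p` are illustrative and are not part of the z-curve software.

```python
from statistics import NormalDist

STD_NORMAL = NormalDist()  # standard normal: mean 0, sd 1


def p_to_abs_z(p_two_sided: float) -> float:
    """Return the absolute z-score whose two-sided tail probability is p."""
    if not 0.0 < p_two_sided < 1.0:
        raise ValueError("p must lie strictly between 0 and 1")
    # Half of p sits in each tail, so look up the 1 - p/2 quantile.
    return STD_NORMAL.inv_cdf(1.0 - p_two_sided / 2.0)


def abs_z_to_p(z: float) -> float:
    """Return the two-sided p-value for a z-score (the sign is ignored)."""
    return 2.0 * (1.0 - STD_NORMAL.cdf(abs(z)))


if __name__ == "__main__":
    for p in (0.05, 0.005):
        print(f"p = {p} -> |z| = {p_to_abs_z(p):.2f}")
    print(f"|z| = 2.4 -> p = {abs_z_to_p(2.4):.4f}")
```

Under this mapping, p = .05 corresponds to z of about 1.96 and p = .005 to z of about 2.81, while the fitting threshold of z = 2.4 corresponds to a two-sided p-value of roughly .016.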

Figure 1 also shows the predicted frequency of non-significant results (grey curve in the area from 0 to 1.96). As can be seen, the observed frequency matches the predicted frequency. Thus, there is little evidence that researchers are withholding non-significant results. As a result, the observed discovery rate (ODR, the percentage of significant results out of all reported results) is close to the expected discovery rate (EDR); it is actually a bit smaller (71% vs. 78%).

Based on the EDR, it is also possible to estimate the maximum false positive rate (Soric, 1989), that is, the largest share of the significant results reported in Psychology and Aging that could be false positives. The estimate is low, with a maximum of 2%. Thus, there is no evidence in this research area for the unfounded claim that most published results are false (Ioannidis, 2005).
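As a rough illustration, Soric's (1989) upper bound on the false discovery rate can be written as a function of the discovery rate and the significance criterion. The sketch below assumes alpha = .05 and plugs in the rounded EDR of 78%; the function name `soric_max_fdr` is illustrative, and the estimate reported above may rest on unrounded values or on a confidence bound.

```python
def soric_max_fdr(edr: float, alpha: float = 0.05) -> float:
    """Soric's upper bound on the share of significant results that are
    false positives, given a discovery rate and significance level alpha.

    The bound assumes that every test of a real effect reaches
    significance, which maximizes the room left for true null hypotheses.
    """
    if not 0.0 < edr <= 1.0:
        raise ValueError("edr must lie in (0, 1]")
    if not 0.0 < alpha < 1.0:
        raise ValueError("alpha must lie strictly between 0 and 1")
    bound = (1.0 / edr - 1.0) * alpha / (1.0 - alpha)
    return min(1.0, bound)  # a proportion cannot exceed 1


if __name__ == "__main__":
    print(f"maximum FDR at EDR = 0.78: {soric_max_fdr(0.78):.3f}")
```

With an EDR of .78 the bound is about 1.5%, which is below the reported maximum of 2%. The bound grows quickly as the discovery rate falls, and it reaches 100% when the discovery rate equals alpha.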

The results in Figure 1 also suggest that the majority of non-significant results are type-II errors. Thus, readers of Psychology and Aging should be wary of any interpretation of these results as evidence for the null-hypothesis.

It is also possible to estimate how many of the hypotheses that are being tested are true; that is, the null-hypothesis is false. The minimum percentage is 76%, which is much higher than the estimate of 9% that was based on a small sample of fewer than 100 studies (Dreber, Pfeiffer, Almenberg, Isaksson, Wilson, Chen, Nosek, & Johannesson, 2015). While the estimate of 76% may be too high, an estimate of 9% is clearly too low for studies in this research area. Thus, it seems questionable to apply the 9% estimate to all areas of psychology (Lewandowsky & Oberauer, 2020).
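One way such a lower bound can be derived, offered here as a sketch and not necessarily the exact computation behind the 76% figure, uses the same logic as Soric's bound. If a share pi of tests examines a real effect (the null-hypothesis is false) with average power w, the discovery rate is EDR = pi * w + (1 - pi) * alpha. Because w cannot exceed 1, pi must be at least (EDR - alpha) / (1 - alpha). The function name below is illustrative.

```python
def min_share_real_effects(edr: float, alpha: float = 0.05) -> float:
    """Smallest share of tests with a real effect (false null-hypothesis)
    that is compatible with a given discovery rate.

    Derived from EDR = pi * power + (1 - pi) * alpha with power set to
    its maximum of 1, which minimizes pi.
    """
    if not 0.0 <= edr <= 1.0:
        raise ValueError("edr must lie in [0, 1]")
    if not 0.0 < alpha < 1.0:
        raise ValueError("alpha must lie strictly between 0 and 1")
    # A discovery rate at or below alpha is compatible with no real effects.
    return max(0.0, (edr - alpha) / (1.0 - alpha))


if __name__ == "__main__":
    print(f"minimum share at EDR = 0.78: {min_share_real_effects(0.78):.3f}")
```

With the rounded EDR of .78 and alpha = .05, this gives roughly 77%, close to the 76% reported above; the small gap presumably reflects rounding of the EDR.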

One possibility is that ERR and EDR increased in response to concerns about the replication crisis in social psychology. To examine this question, I fitted z-curve separately for each year from 2000 to 2019. I did this using both the significance criterion (z > 1.96, black) and the more robust selection criterion (z > 2.4, grey), and estimated the ERR (solid line) and EDR (broken line) in each case.

Visual inspection of Figure 2 shows no increase in EDR or ERR after 2011, the beginning of the replication crisis. Linear regression confirms this. Thus, there is no evidence that research practices or editorial decision criteria have changed in this research area. It is also noteworthy that the estimates of the EDR based on all significant results (including just-significant ones) are much lower and much more variable. This shows the effect of questionable research practices. Once more, there is no evidence that publication of these results has decreased, which would be revealed by higher and more stable EDR estimates. The other estimates all show high replicability.

In sum, a replicability analysis of results published in the journal Psychology and Aging suggests that many significant results are robust and replicable and that only a very small percentage of these findings are false positives. Thus, there is no evidence to suggest that the replication crisis in social psychology generalizes to aging research. The main caveat is that results with just-significant p-values (.05 to .005) may have been obtained with questionable practices that inflate effect size estimates. However, given the low percentage of false hypotheses in this literature, even these results are likely to be replicated with larger samples. A main concern in this literature may be precisely this low percentage of false hypotheses. Demonstrating statistical significance when nearly all hypotheses are true (e.g., older people differ from younger people) does not produce many new insights. Thus, aging research might benefit from testing riskier hypotheses that require empirical data to determine whether they are true or false.