This blog post was co-authored by ChatGPT. I asked ChatGPT questions during the research for this post and ChatGPT wrote the draft. I verified the content and made some edits. I am fully responsible for the content of this post and all mistakes are my own. Happy to correct mistakes if you point them out in the comment section or an email.

Erratum: In an earlier post, I misattributed the definition of power as a conditional probability to Jacob Cohen. This was a mistake. I checked Cohen (1988) and found – much to my surprise and chagrin – that I had trusted ChatGPT too much. My mistake. Cohen actually writes.

I happily apologize to Cohen and restore his reputation in my mind. His dedication and his futile efforts to teach psychologists how to do better research remain a shining example. I regret that I never had a chance to meet him in person. In hindsight, it makes perfect sense that lesser minds introduced the conditioning on a true effect (why? because psychologists are never wrong?) that led to ridiculous claims about power by Pek et al. (2024) in a journal for psychology methodologists (apparently an oxymoron). Pek et al. claimed that Cohen never meant for power analysis to be used to evaluate completed studies. Even this is a lie.

So much for the credibility revolution in psychology. Credibility requires expertise and proper citation of experts. I don’t see much of that in the “psycho – sciences.”

100 Years of Statistical Power: A Long and Confusing History

- Neyman’s Type II error

Jerzy Neyman introduced the importance of thinking about the probability of obtaining an undesirable non-significant result when the alternative hypothesis is true. He called this the Type II error.

- Power curves and the null point

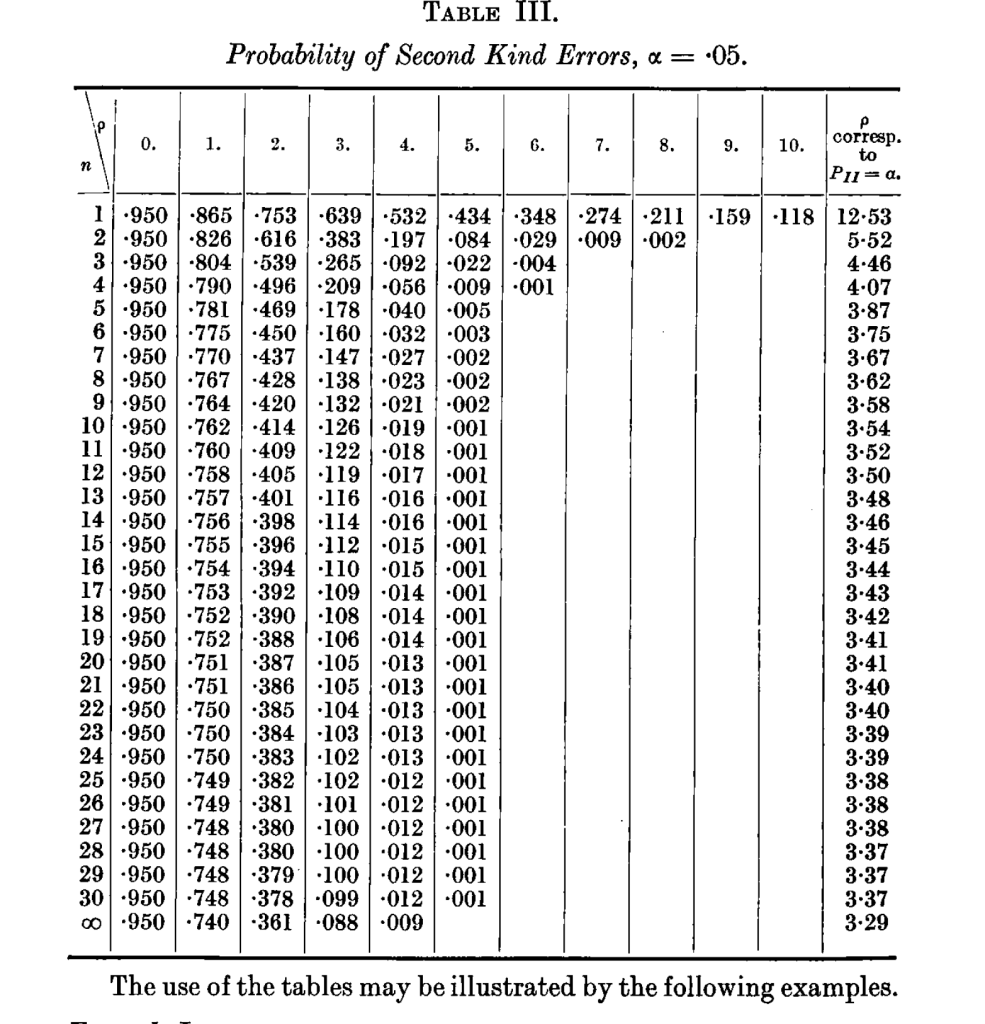

Neyman provided researchers with curves and tables showing the probability of a Type II error for different effect sizes.

These included a value of zero effect size, which yields a probability of a non-significant result equal to 1−α. This is not a Type II error, because the null is true, but simply the probability of a non-significant result under the null.
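
The point about the zero on such a curve can be checked with a few lines of Python. This is only a minimal sketch under assumptions that are not in Neyman's tables: a two-sided z-test, α = .05, and a hypothetical noncentrality value `ncp` (the true effect divided by its standard error). The function name `prob_significant` is made up for this illustration.

```python
from statistics import NormalDist


def prob_significant(ncp: float, alpha: float = 0.05) -> float:
    """Probability of a significant result in a two-sided z-test.

    ncp is a hypothetical noncentrality value: the true effect divided
    by its standard error. ncp = 0 means the null hypothesis is true.
    """
    std_normal = NormalDist()
    z_crit = std_normal.inv_cdf(1 - alpha / 2)
    # The test statistic is Normal(ncp, 1); add up both rejection regions.
    upper_tail = 1 - std_normal.cdf(z_crit - ncp)
    lower_tail = std_normal.cdf(-z_crit - ncp)
    return upper_tail + lower_tail


# A small "power curve" that starts at a true effect of zero.
for ncp in (0.0, 1.0, 2.0, 3.0, 4.0):
    p_sig = prob_significant(ncp)
    print(f"ncp={ncp:.1f}  P(significant)={p_sig:.3f}  "
          f"P(non-significant)={1 - p_sig:.3f}")
```

At `ncp = 0` the function returns α, so the probability of a non-significant result is 1−α, and the values rise smoothly from there as the true effect grows. Nothing in the calculation requires the effect to be different from zero.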

- The term “power”

Neyman and Pearson also used the term power for the complement of the probability of a non-significant result. The corresponding probability of a significant result under the null is α. In other words, Power = 1 − P(non-significant result), or simply Power = P(significant result).

- Implication for meta-analysis

This original definition of power makes it straightforward to talk about the average power of a set of studies in meta-analysis. Even if some studies have true effect sizes of zero, the power values can be averaged to predict the overall percentage of significant results, which Bartos & Schimmack (2020) call the expected discovery rate. It is also possible to estimate the average power conditioning on significance by taking selection bias into account (Brunner & Schimmack, 2020), even if some of the significant results are false positive results with a true effect size of zero.

- Cohen’s Influential Work on Power

Jacob Cohen popularized the concept of power in the social sciences, especially psychology. He followed Neyman’s definition of power and defined it as follows.

“The power of a statistical test of a null hypothesis is the probability that it will lead to the rejection of the null hypothesis, i.e., the probability that it will result in the conclusion that the phenomenon exists” (p. 2).

This definition implies that power is also defined when the null hypothesis is true and a significant result is a false positive result. The probability in this case is easy to compute: Power = alpha when H0 is true.

- Psychologists Redefine Power

However, psychologists started to redefine power (a history that still needs to be researched). Nowadays many textbooks and articles by psychologists define power as a conditional probability that assumes an effect size different from zero (H0 is false, H1 is true). Many psychologists falsely believe that this was always the definition of power (including myself until I did this research). This definition makes sense for planning studies, but it is less suited for describing completed studies, because in reality the true effect size could be zero.

- Cohen’s assumption about true effects

Cohen may also not have thought that it is important to distinguish between the probability of a significant result and the conditional probability of a significant result if the null hypothesis is false. He made fun of the nil-hypothesis as a serious hypothesis (The Earth is round, p < .05) and assumed that most studies have non-zero effect sizes. Under that assumption, the distinction between conditional and unconditional probabilities is practically irrelevant.

- The resulting confusion

The definition of power as a conditional probability, however, led many psychologists to adopt a restricted view of power. Some now argue (e.g., Pek et al., 2024) that power is only meaningful as a pre-study, hypothetical quantity based on assumed non-zero effects. In contrast, Cohen clearly considered the use of power to evaluate completed studies. “They can also be performed on completed studies to determine the power which a statistical test had” (p. 14).

Pek et al. (2024) use the new definition of power as conditional on a true effect to argue that it is not possible to apply power to completed studies. The use of power “as a criterion to evaluate the credibility of results from completed research, conflicts with definitional properties of statistical power” (p. 2). This is true, but only if we define power as a conditional probability, which is not the original meaning of the term.

Pek et al. are not alone in their confusion about power. In a blog post published by the American Psychological Society (since renamed the Association for Psychological Science) that aims to dispel myths about power, Lengersdorff and Lamm (2025) use the conditional definition of power as “the probability with which a statistical test will produce a significant result, under the assumption that the tested effect exists.” They then make the false claim that “it depends on the expected effect size.” This has nothing to do with the actual probability of getting a significant result, which depends on the true population effect size. It gets worse from there on. With self-proclaimed experts who cannot even quote Cohen correctly, psychology is doomed to remain the science with important questions and no answers.

- Resolving the confusion

We can resolve the confusion in two ways. Either we reeducate psychologists about the definition of power as the unconditional probability of a statistically significant result in an actual study, a probability that depends on the actual size of the effect as it would be observed without sampling error. Or we concede that this is a futile battle and simply find a new term for this important probability, one that can be used to evaluate the p-values that psychological scientists use to claim that they discovered some fundamental laws of human nature. We could call it Probability of Statistical Significance (POSS). Studies with high POSS are more credible than studies with low POSS, especially when the studies with low POSS surprisingly always have significant results. Everything psychologists’ power cannot do, POSS can do.

- My Personal Preference

My preference is clear. Cohen has done a lot to popularize power among social scientists, and his 1988 book remains the foundational book on this topic. The book clearly defines power as the probability of obtaining a significant result. If psychologists are not able to read, it is their problem and not the problem of those who actually understand statistics. If they want to remain ignorant and do not want to evaluate the power of their studies, so be it. Others will, and if the results are not good, they may lose funding. Cohen (1988) predicted that psychologists will ignore power “at their peril.” Well, they were able to survive without power for nearly four decades, but maybe Cohen’s prophecy is finally coming true. All their attempts to avoid facing the music are futile. The use of power to evaluate their published p-values is like using a modern doping test to detect doping in frozen urine samples from 1960. Building on Neyman, Pearson, and Cohen, we can tell which p-values were obtained with high POSS and which ones were not.

- Epilogue (Personal Reflection)

Jerry (Brunner) and I are big fans of Cohen, and without his work we would not have developed z-curve, a tool that can estimate average power. I was happy to learn (after I first attributed the mistake to Cohen) that he correctly stated the definition of power as the probability of obtaining a significant result, in accordance with Neyman. Psychologists then messed it up and are now fighting the use of power to evaluate their studies (Pek et al., 2024; Lengersdorff & Lamm, 2025). Cohen clearly stated that we can use power to evaluate study outcomes. It remains a mystery to me why Cohen never tried to estimate the true power of studies to make a stronger case for the need for power analysis in psychology. It was only in the early 2010s that I started doing so and discovered the power of statistical power to reveal publication bias in multiple-study articles (Schimmack, 2012). This work and the work on z-curve with my colleague follow directly from Neyman and Pearson’s influential work nearly 100 years ago.

Neyman is the GOAT of statistics (he also created confidence intervals that make Fisher’s p-values useless). Although APA now recommends confidence intervals, too many psychologists still focus on p-values and do not understand that power determines whether their confidence intervals include zero or not. Statistics training would be easier and better if we got rid of Fisher, who was also a racist and eugenicist into the 1950s. Others before me have complained about the mindless use of statistics in psychology (Gigerenzer, 2004), but that has not changed practices. Maybe the time has come. Let’s build our statistics training on solid foundations. Fisher is dead. Long live Neyman and the definition of power as the probability to get a significant result in an actual study that depends on the true population effect size.
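
For readers who want to see what this definition buys us, here is a rough sketch of the averaging argument from the meta-analysis section. It is not z-curve and not anyone's published method. It assumes two-sided z-tests with α = .05 and a made-up set of noncentrality values (`true_ncps`), two of which are zero, and it compares the average unconditional power with the share of significant results in a simple simulation. All names in the code are hypothetical.

```python
import random
from statistics import NormalDist, mean

ALPHA = 0.05
Z_CRIT = NormalDist().inv_cdf(1 - ALPHA / 2)


def prob_significant(ncp: float) -> float:
    """Unconditional probability of |z| > Z_CRIT when z ~ Normal(ncp, 1)."""
    std_normal = NormalDist()
    return (1 - std_normal.cdf(Z_CRIT - ncp)) + std_normal.cdf(-Z_CRIT - ncp)


# Hypothetical studies; the two zeros are studies in which H0 is true.
true_ncps = [0.0, 0.0, 1.0, 2.0, 3.0]

# Average power, with power = alpha for the studies where H0 is true.
average_power = mean(prob_significant(ncp) for ncp in true_ncps)


def simulate_discovery_rate(ncps, n_rounds=20_000, seed=1):
    """Share of significant results when every study is run n_rounds times."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(n_rounds):
        for ncp in ncps:
            z = rng.gauss(ncp, 1.0)  # observed test statistic
            hits += abs(z) > Z_CRIT  # True counts as 1
    return hits / (n_rounds * len(ncps))


simulated_rate = simulate_discovery_rate(true_ncps)
print(f"average power (incl. true nulls): {average_power:.3f}")
print(f"simulated share of significant results: {simulated_rate:.3f}")
```

The two numbers agree up to simulation error, even though two of the five hypothetical studies have a true effect of zero. Under the conditional definition those two studies would have no power value at all and the average could not be computed.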