Politics in the United States is extremely divisive and filled with false claims based on fake facts. Ideally, social scientists would provide some clarity to these toxic debates by informing US citizens and politicians with objective and unbiased facts. However, social scientists often fail to do so for two reasons. First, they often lack the proper data to provide valuable scientific input into these debates. Second, when the data do not provide clear answers, social scientists’ inferences are shaped as much by their preexisting beliefs as by the data, or even more. It is therefore not a surprise that the general public increasingly ignores scientists because they don’t trust them to be objective.

Unfortunately, the causes of police killings in the United States are one of these topics. While a few facts are known and not disputed, these facts do not explain why Black US citizens are killed by police more often than White citizens. While it is plausible that multiple factors contribute to this sad statistic, the debate is shaped by those who blame a White police force on the one hand and those who blame Black criminals on the other.

On September 26, the House Committee on the Judiciary held an Oversight Hearing on Policing Practices. In this meeting, an article in the prestigious journal Proceedings of the National Academy of Sciences (PNAS) was referenced by Heather Mac Donald, who works for the conservative think tank Manhattan Institute, as evidence that crime is the single factor that explains racial disparities in police shootings.

The Manhattan Institute posted a transcript of her testimony before the committee. Her claim is clear. She not only claims that crime explains the higher rate of Black citizens being killed, she even claims that taking crime into account shows a bias of the police force to kill disproportionally FEWER Black citizens than White citizens.

Heather Mac Donald is not a social scientist and nobody should expect that she is an expert in logistic regression. This is the job of scientists: authors, reviewers, and editors. The question is whether they did their job correctly and whether their analyses support the claim that after taking population ratios and crime rates into account, police officers in the United States are LESS likely to shoot a Black citizen than a White citizen.

The abstract of the article summarizes three findings.

1. As the proportion of Black or Hispanic officers in a FOIS increases, a person shot is more likely to be Black or Hispanic than White.

In plain English, in counties with proportionally more Black citizens, proportionally more Black people are being shot. For example, the proportion of Black people killed in Georgia or Florida is greater than the proportion of Black people killed in Wyoming or Vermont. You do not need a degree in statistics to realize that this tells us only that police cannot shoot Black people if there are no Black people. This result tells us nothing about the reasons why proportionally more Black people than White people are killed in places where Black and White people live.

2. Race-specific county-level violent crime strongly predicts the race of the civilian shot.

Police do not shoot and kill citizens at random. Most, but not all, police shootings occur when officers are attacked and are justified in defending themselves with lethal force. When police officers in Wyoming or Vermont are attacked, it is highly likely that the attacker is White. In Georgia or Florida, the chance that the attacker is Black is higher. Once more, this statistical fact does not tell us why Black citizens in Georgia or Florida or other states with a large Black population are killed proportionally more often than White citizens in these states.

3. The key finding that seems to address racial disparities in police killings is that “although we find no overall evidence of anti-Black or anti-Hispanic disparities in fatal shootings, when focusing on different subtypes of shootings (e.g., unarmed shootings or “suicide by cop”), data are too uncertain to draw firm conclusions”.

First, it is important to realize that the authors do not state that they have conclusive evidence that there is no racial bias in police shootings. In fact, they clearly state that for shootings of unarmed citizens their data are inconclusive. It is a clear misrepresentation of this article to claim that it provides conclusive evidence that crime is the sole factor that contributes to racial disparity in police shootings. Thus, Heather Mac Donald lied under oath and misrepresented the article.

Second, the abstract misstates the actual findings reported in the article when the authors claim that they “find no overall evidence of anti-Black or anti-Hispanic disparities in fatal shootings”. The problem is that the design of the study is unable to examine this question. To see this, it is necessary to look at the actual statistical analyses more carefully. The study examines a different question: which characteristics of a victim make it more or less likely that the victim is Black or White? For example, an effect of age could show that young Black citizens are proportionally more likely to be killed than young White citizens, while older Black men are proportionally less likely to be shot than older White men. This would provide some interesting insights into the causal factors that lead to police shootings, but it doesn’t change anything about the proportions of Black and White citizens being shot by police.

We can illustrate this using the data that the authors shared (unfortunately, they did not share the information about officers that is needed to fully reproduce their results). The authors found a significant effect for age. To make it easier to interpret the effect, I divided victims into those under 30 and those 30 and above. This produces a simple 2 x 2 table.

An inspection of the cell frequencies shows that the group with the highest frequency is older White victims. This is only surprising if we ignore the base rates of these groups in the general population. Older White citizens are more likely to be victims of police shootings because there are more of them in the population. As this analysis does not examine proportions in the population, this information is irrelevant.

It is also not informative that there are about two times more White victims (476) than Black victims (235). Again, we would expect more White victims simply because more US citizens are White.

The meaningful information is provided by the odds of being a Black or White victim in the two age groups. Here we see that older victims are much less likely to be Black (122/355) than younger victims (113/121). Comparing the proportions of Black victims in the two groups (113/234 = .48 vs. 122/477 = .26), young victims are 1.89 times more likely to be Black than old victims; the corresponding odds ratio is 2.72. This shows that young Black men are disproportionally more likely to be the victims of police shootings than young White men. Consistent with this finding, the article states that “Older civilians were 1.85 times less likely (OR = 0.54 [0.45, 0.66]) to be Black than White”.
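The arithmetic behind these figures can be reproduced from the four cell counts quoted above. The following R sketch is only an illustration; the object names (`counts`, `odds_black`, `prop_black`, and so on) are made up here and do not come from the authors' data file.

```r
# Cell counts as quoted in the text (Black and White victims by age group)
counts <- matrix(c(113, 121,    # under 30: Black, White
                   122, 355),   # 30 and above: Black, White
                 nrow = 2, byrow = TRUE,
                 dimnames = list(age  = c("young", "old"),
                                 race = c("Black", "White")))

# Odds of a victim being Black rather than White within each age group
odds_black <- counts[, "Black"] / counts[, "White"]

# Proportion of victims who are Black within each age group
prop_black <- counts[, "Black"] / rowSums(counts)

# Ratio of proportions and ratio of odds, young vs. old
prop_ratio <- prop_black[["young"]] / prop_black[["old"]]
odds_ratio <- odds_black[["young"]] / odds_black[["old"]]

round(c(prop_ratio = prop_ratio, odds_ratio = odds_ratio), 2)
```

With these counts the ratio of proportions is about 1.89 and the ratio of odds is about 2.72, so it matters which of the two quantities is being reported.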

In Table 2, the age effect remains significant after controlling for many variables, including rates of homicides committed by Black citizens. Thus, the authors found that young Black citizens are killed more frequently by police than young White men, even when they attempted to control statistically for the fact that young Black men are disproportionally involved in criminal activities. This finding is not surprising to critics who claim that there is a racial bias in the police force that has resulted in deaths of innocent young Black men. It is actually exactly what one would expect if racial bias plays a role in police shootings.

Although this finding is statistically significant and the authors actually mention it when they report the results in Table 1, they never comment on this finding again in their article. This is extremely surprising because it is common practice to highlight statistically significant results and to discuss their theoretical implications. Here, the implications are straightforward. Racial bias does not target all Black citizens equally. Young Black men (only 10 Black and 25 White victims were female) are disproportionally more likely to be shot by police even after controlling for several other variables.

Thus, while the authors’ attempt to look for predictors of victims’ race provides some interesting insights into the characteristics of Black victims, these analyses do not address the question of why Black citizens are more likely to be shot than White citizens. It is therefore unclear how the authors can state “We find no evidence of anti-Black or anti-Hispanic disparities across shootings” (p. 15877) or “When considering all FOIS in 2015, we did not find anti-Black or anti-Hispanic disparity” (p. 15880).

Surely, they are not trying to say that they didn’t find evidence for it because their analysis didn’t examine this question. In fact, their claims are based on the results in Table 3. Based on these results, the authors come to the conclusion that “controlling for predictors at the civilian, officer, and county levels,” a victim is more than 6 times more likely to be White than Black. This makes absolutely no sense if the authors did, indeed, center continuous variables and effect code nominal variables, as they state.

The whole point of centering and effect coding is to keep the intercept of an analysis interpretable and consistent with the odds ratio without predictor variables. To use age again as an example, the odds ratio of a victim being Black is .49. Adding age as a predictor shows us how the odds change within the two age groups, but this does not change the overall odds ratio. However, if we do not center the continuous age variable or do not take the different frequencies of young (234) and old (477) victims into account, the intercept is no longer interpretable as a measure of racial disparities.

To illustrate this, here are the results of several logistic regression analyses with age as a predictor variable.

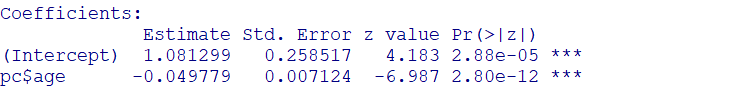

First, I used raw age as a predictor.

summary(glm(race ~ pc$age, family = binomial(link = "logit")))

The intercept changes from -.71 to 1.08. As these values are log-odds, we need to transform them to get the odds ratios, which are .49 (235/476) and 2.94. The reason is that the intercept is a prediction of the racial bias at age 0, which would suggest that police officers are 3 times more likely to kill a Black newborn than a White newborn. This prediction is totally unrealistic because there are fortunately very few victims younger than 15 years of age. In short, this analysis changes the intercept, but the results no longer tell us anything about racial disparities in general because the intercept is about a very small and, in this case, nonexistent subgroup.
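The conversion between log-odds and odds used here is just `log()` and `exp()`. A minimal sketch with the numbers quoted in the text (the variable names are illustrative only):

```r
# Raw odds of a victim being Black rather than White in the sample
raw_odds <- 235 / 476

# The intercept of a model without predictors is the log of these odds
raw_logit <- log(raw_odds)             # about -0.71

# exp() converts a log-odds intercept back to odds
odds_no_predictor <- exp(-0.71)        # about 0.49
odds_at_age_zero  <- exp(1.08)         # about 2.94, an extrapolation to age 0

round(c(raw_logit, odds_no_predictor, odds_at_age_zero), 2)
```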

We can avoid this problem by centering or standardizing the predictor variable. Now a value of 0 corresponds to the average age.

age.centered = pc$age - mean(pc$age)

summary(glm(race ~ age.centered, family = binomial(link = "logit")))

The age effect remains the same, but now the intercept is proportional to the odds in the total sample [disclaimer: I don’t know why it changed from -.71 to -.78; any suggestions are welcome]

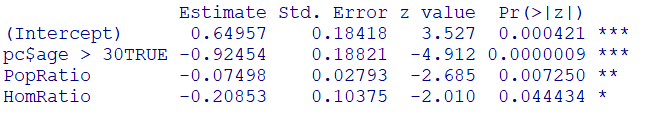

This is also true when we split age into young (< 30) and old (30 or older) groups.

When the groups are dummy coded (< 30 = 0, 30+ = 1), the intercept changes and shows that victims are now more likely to be Black in the younger group coded as zero.

summary(glm(race ~ (pc$age >= 30), family = binomial(link = "logit")))

However, with effect coding the intercept hardly changes.

summary(glm(race ~ (scale(pc$age >= 30)), family = binomial(link = "logit")))
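The effect of the coding scheme on the intercept can be checked without the original data file by rebuilding one row per victim from the four cell counts quoted earlier. The sketch below is an illustration under that assumption; the data frame `d` and its columns `black`, `old`, and `old_c` are hypothetical names, and plain mean-centering stands in for `scale()`.

```r
# Rebuild one row per victim from the four cell counts quoted in the text
d <- data.frame(
  black = rep(c(1, 0, 1, 0), times = c(113, 121, 122, 355)),
  old   = rep(c(0, 0, 1, 1), times = c(113, 121, 122, 355))
)

# Intercept-only model: log-odds of a victim being Black in the whole sample
fit_null <- glm(black ~ 1, family = binomial(link = "logit"), data = d)

# Dummy coding (young = 0): the intercept is the log-odds in the young group
fit_dummy <- glm(black ~ old, family = binomial(link = "logit"), data = d)

# Centered coding: the intercept is the log-odds at the average value of `old`
d$old_c <- d$old - mean(d$old)
fit_centered <- glm(black ~ old_c, family = binomial(link = "logit"), data = d)

round(c(null     = unname(coef(fit_null)[1]),
        dummy    = unname(coef(fit_dummy)[1]),
        centered = unname(coef(fit_centered)[1])), 2)
```

With these counts the three intercepts should come out near -0.71, -0.07, and -0.74. The centered intercept stays close to the intercept-only value but does not match it exactly.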

Thus, it makes no sense when the authors claim that they centered continuous variables and effect coded nominal variables and the intercept changed from exp(-.71) = .49 to exp(-1.90) = .15, which they report in Table 3. Something went wrong in their analyses.

Even if this result were correct, the interpretation of this result as a measure of racial disparities is wrong. One factor that is omitted from the analysis is the proportion of White citizens in the counties. It doesn’t take a rocket scientist to realize that counties with a larger White population are more likely to have White victims. The authors do not take this simple fact into account, although they did have a measure of the population size in their data set. We can create a measure of the proportion of Black and White citizens and center the predictor so that the intercept reflects a population with equal proportions of Black and White citizens.

When we use this variable as a predictor, the surprising finding that police officers are much more likely to shoot and kill White citizens disappears. The odds ratio changes from 0.49 to exp(-.04) = .96, and the 95%CI includes 1, 95%CI = 0.78 to 1.19.
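One way to build such a predictor is the log of the Black-to-White population ratio, which is zero exactly when both groups are equally large. The sketch below is an assumption about how the predictor could be constructed, not a record of the original analysis; the toy data frame `counties` and its columns `black_pop` and `white_pop` are hypothetical, with made-up numbers.

```r
# Hypothetical county-level population counts (made-up numbers for illustration)
counties <- data.frame(
  county    = c("A", "B", "C"),
  black_pop = c(50000, 20000, 5000),
  white_pop = c(50000, 80000, 95000)
)

# Log of the Black-to-White population ratio: 0 means equal population sizes,
# negative values mean more White than Black residents
counties$PopRatio <- log(counties$black_pop / counties$white_pop)

counties
```

With a predictor scaled this way, the intercept of the logistic regression is the predicted log-odds for a county with equal Black and White populations.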

This finding may be taken as evidence that there is little racial disparity after taking population proportions into account. However, this ignores the age effect that was found earlier. When age is included as a predictor, we see now that young Black men are disproportionally likely to be killed, while the reverse is true for older victims. One reason for this could be that criminals are at a higher risk of being killed. Even if White criminals are not killed in their youth, they are likely to be killed at an older age. As Black criminals are killed at a younger age, there are fewer Black criminals that get killed at an older age. Importantly, this argument does not imply that all victims of police shootings are criminals. The bias to kill Black citizens at a younger age also affects innocent Black citizens, as the age effect remained significant after controlling for crime rates.

The racial disparity for young citizens becomes even larger when homicide rates are included using the same approach. I also excluded counties with a ratio greater than 100:1 for population or homicide rates.

summary(glm(race ~ (pc$age >= 30) + PopRatio + HomRatio, family = binomial(link = "logit")))

The intercept of 0.65 implies that young (< 30) victims of police shootings are two times more likely to be Black than White when we adjust the risk for the proportion of Black vs. White citizens and homicides. The significant age effect shows again that this risk switches for older citizens. As we are adjusting for homicide rates, this suggests that older White citizens are at an increased risk of being killed by police. This is an interesting observation as much of the debate has been about young Black men who were innocent. According to these analyses, there should also be cases of older White men who are innocent victims of police killings. Looking for examples of these cases and creating more awareness about these cases does not undermine the concerns of the Black Lives Matter movement. Police killings are not a zero sum game. The goal should be to work towards reducing the loss of Black, Blue (police), White, and all other lives.

Scientific studies can help to do that when authors analyze and interpret the data correctly. Unfortunately, this is not what happened in this case. Fortunately, the authors shared (some of) their data and it was possible to put their analyses under the microscope. The results show that their key conclusions are not supported by their data.

First, there is no disparity that leads to the killing of more White citizens than Black or Hispanic citizens by police. This claim is simply false.

Second, the authors have an unscientific aversion to taking population rates into account. In counties with a mostly White population, crime is mostly committed by White citizens, and police are more likely to encounter and kill White criminals. It is not a mistake to include population rates in statistical analyses. It is a mistake not to do so.

Third, the authors ignored a key finding of their own analysis that age is a significant predictor of police shootings. Consistent with the Black Lives Matter claim, their data show that police disproportionally shoot young Black men. This bias is offset to some extent by the opposite bias in older age groups, presumably because Black men have already been killed, which reduces the at-risk population of Black citizens in this age group.

In conclusion, the published article already failed to show that there is no racial disparity in police shootings, but it was easily misunderstood as providing evidence for this claim. A closer inspection of the article shows even more problems with the article, which means this article should not be used to support empirical claims about police shootings. Ideally, the article would be retracted. At a minimum, PNAS should publish a notice of concern.

We first would like to thank Uli Schimmack for providing a critique of our paper. We believe that the best way to move forward on difficult issues such as fatal shootings is through ongoing discussion and post-publication peer review.

At the same time, we want to address the assertion that we made mistakes in our reporting of the intercepts in Table 3. The criticism is that the point of “centering and effect coding is to keep the intercept of an analysis interpretable and consistent with the odds ratio without predictor variables.” The implication is that in an analysis where all predictors are centered or effects coded, the intercept should be identical compared to the raw odds ratios.

This interpretation is incorrect. Odds ratios derived from the model intercept may vary even if predictors are centered or standardized when those predictors explain variability in the outcome. This even occurs within the critique—the raw odds ratio indicating the odds of a person fatally shot being Black vs. White (235/476 = 0.49) changes to 0.46 when age is entered as a centered predictor. While centering and effects coding keeps model intercepts closer to the raw value than dummy coding, they will not be identical. This difference is small when only including one predictor (age), but the models we report have many predictors that explain variability in the outcome (e.g., homicide rates, income inequality), which impact the intercept.
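The point that a centered predictor can still shift the intercept can be checked with a small simulation. The sketch below uses simulated data only; the sample size, the coefficients (-0.7 and 1.5), and the seed are arbitrary assumptions chosen for illustration and have nothing to do with the shooting data.

```r
set.seed(1)                                 # arbitrary seed for reproducibility
n <- 5000
x <- rnorm(n)                               # simulated predictor, not real data
y <- rbinom(n, size = 1, prob = plogis(-0.7 + 1.5 * x))

# Log-odds of the outcome in the whole simulated sample
marginal_logit <- qlogis(mean(y))

# Intercept from a model with the predictor centered at its mean
x_c <- x - mean(x)
fit <- glm(y ~ x_c, family = binomial(link = "logit"))
centered_intercept <- unname(coef(fit)[1])

c(marginal = marginal_logit, centered = centered_intercept)
```

Because the logistic function is nonlinear, the log-odds at the average value of a predictor is generally not the same as the log-odds of the average outcome, and the gap grows with the strength of the predictor.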

The second issue raised is that our analyses of racial disparities amongst those fatally shot by police (Table 3) were uninformative because they did not take into account racial differences in crime rates or population proportions. This criticism is also incorrect. We were aware of this issue and did take it into account. We document this in the Supplemental Materials (see the sections “Multinomial Regression Models” and “Additional Tests of Racial Disparities”).

In the main text, our analyses of the intercept (Table 3) control for differences in crime rates, which mirror the analyses in Tables 1 and 2. We also examine specific types of fatal shootings (e.g., shootings of young civilians)—again, when controlling for differences in crime rates. In the Supplemental Materials we report these tests controlling for differences in population rates, as well as crime and population rates simultaneously, although we do not examine specific types of fatal shootings.

To clarify, the raw odds of a person fatally shot by police being Black is .49 (i.e., a person fatally shot is 2.0 times more likely to be White than Black). As Schimmack notes, this test ignores racial differences in exposure to police. He controls for exposure using population rates as a proxy and finds the odds of a person fatally shot by police being Black changes to 0.96 (a person is equally likely to be White or Black).

However, as we note in our work (Cesario, Johnson, & Terrill, 2019), the assumption that police are exposed to civilians in situations where fatal force is used at rates matching their population proportions is not supported by data. This is why we relied on homicide rates as a proxy for exposure, because the vast majority of fatal shootings happen in the context of violent crime.

We did not have an “unscientific aversion” to use population rates (and indeed, we report population rate data in the Supplemental Materials, see “Additional Tests of Racial Disparities”) —rather, our decision was motivated by criminal justice research demonstrating the pitfalls of population rates as a proxy for exposure to police in violent crime situations as well as the high correlation between crime and population rates (rs > .85).

When we control for crime rate differences (and all other predictors), we obtain the odds ratios reported in Table 3 (OR = .15), indicating that a person fatally shot is 6.7 times more likely to be White than Black. However, in the Supplemental Materials we reran this analysis controlling for differences in population rates, and population and crime rates simultaneously. When controlling for population rate differences we obtain the odds ratio of 0.98, indicating no racial disparity. In other words, we come to the same conclusion as the critique when using a similar analytic approach.

Finally, when we control for both crime and population rate differences (as Schimmack suggests and as we did in the Supplemental Material), the odds ratio is 0.43, indicating a person fatally shot is 2.3 times more likely to be White than Black. The point of these additional tests was to serve as a sensitivity analysis to examine the robustness of our conclusions based on different proxies for exposure. We provide all these analyses in the Supplemental Materials and note that regardless of the choice, none result in anti-Black disparity across all fatal officer-involved shootings.
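The "times more likely to be White" figures quoted in this response are the reciprocals of the reported odds. A short check, using only the values quoted above (the vector names are illustrative):

```r
# Odds of a person fatally shot being Black rather than White, as quoted above
odds_black <- c(raw = 0.49, crime = 0.15, population = 0.98, both = 0.43)

# The reciprocal gives how many times more likely the person is to be White
times_white <- round(1 / odds_black, 1)
times_white
```

This gives about 2.0, 6.7, 1.0, and 2.3 for the raw, crime-adjusted, population-adjusted, and jointly adjusted odds.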

These criticisms aside, we appreciate Schimmack’s point about how racial disparities amongst those fatally shot might vary by age, with anti-Black disparity only present for young Black men. Our data show a similar pattern; the analyses in Table 3 reveal some of the strongest evidence for anti-Black disparity in shootings of young civilians. However, when controlling for differences in crime rate, these disparities are not significant (and still show anti-White disparity).

Even if taken at face value, it is difficult to interpret the effect that an older person fatally shot is less likely to be Black than White. Young Black men being fatally shot at disproportionate rates vs. young White men could drive this effect (as Schimmack suggests), but it also could be that older White men are fatally shot more than older Black men, with little race difference among young men. Without a point of comparison (how often men of different ages and races are exposed to police in lethal force contexts) these hypotheses are not easily disentangled. We do not say this to dismiss the real possibility of anti-Black disparity, but to stress the limitations of what conclusions can be drawn when we do not have data on those who have encountered police in similar contexts and not been fatally shot.

Other differences between the analyses in our paper and the analyses in the critique also impact the crucial test of the intercept value. Our approach to controlling for exposure was a difference approach adopted from the analyses in Scott, Ma, Sadler, and Correll (2017) and differs slightly from the ratio approach used in the critique. The approach in the critique also removes over 100 cases from the dataset, which makes the datasets less comparable. The critique also only chooses some of the predictors in the model, which impacts the test of the model intercept. And the critique does not control for the fact that shootings are nested within counties.

We do not just point out these differences to simply criticize Schimmack’s approach. We note these differences because they impact the intercept test for racial disparity amongst those fatally shot. We justify our decisions in the paper (controlling for crime rates vs. population rates) based on past work, but know that researchers can and do disagree with these decisions—the issue of benchmarking and exposure has been debated in criminal justice for decades.

Reasonable researchers can debate about methodological and analytic choices because we have made these public and present alternative choices for the reader to consider. As clarified above, the differences between our analyses and Schimmack’s are due to different choices in methods and his use of a subset of the data, not because of errors. By posting our data online, other researchers can explore it and make their own decisions about how to best analyze the data.

Your claims about the intercept make no sense. As I asked you by email, please share the MPLUS syntax and the output files so that other scientists can examine what you did and why you obtained results that make no sense. The whole point of centering and effect coding is to obtain an intercept that reflects the odds-ratios in the entire sample, that is, at the average level of all predictor variables. If your intercept changed notably, something went wrong.
